1. Introduction

The foundation of many search engine algorithms lies in the concept of PageRank, originally developed by Google’s founders to measure the importance of web pages. At the core of the algorithm is an eigenvector, which captures how link authority flows through a website’s link graph.

What is Eigenvector-Based PageRank?

PageRank calculates the importance of a page based on the number and quality of links pointing to it. Mathematically, it uses eigenvector centrality—a technique that assigns relative scores to nodes (pages) in a link graph based on the idea that connections from high-scoring nodes contribute more to the score of a node.

The eigenvector here is essentially a solution to a linear algebra problem:

P x = λx,

where P is the transition matrix of link probabilities between pages, x is the eigenvector of page scores, and λ is the eigenvalue. For a stochastic transition matrix the dominant eigenvalue is λ = 1, and the corresponding eigenvector gives the PageRank scores.
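
To make the eigenvector equation concrete, here is a minimal sketch of power iteration, the usual way to approximate the eigenvector for λ = 1. The toy link graph, the function name `pagerank`, and the damping factor of 0.85 are assumptions chosen for illustration; they are not taken from Google’s production system or from the QSAAS notebooks.

```python
def pagerank(links, damping=0.85, tol=1e-10, max_iter=200):
    """Approximate the dominant eigenvector of the damped link matrix.

    links maps each page to the list of pages it links to.
    """
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(max_iter):
        # Rank held by pages without outlinks is spread evenly over all pages.
        dangling = sum(rank[p] for p in pages if not links.get(p))
        new_rank = {p: (1.0 - damping) / n + damping * dangling / n for p in pages}
        for source, targets in links.items():
            if targets:
                share = damping * rank[source] / len(targets)
                for target in targets:
                    new_rank[target] += share
        delta = sum(abs(new_rank[p] - rank[p]) for p in pages)
        rank = new_rank
        if delta < tol:  # scores stopped changing: x is (approximately) P x
            break
    return rank


# Hypothetical three-page site used only for illustration.
toy_links = {"home": ["about", "blog"], "about": ["home"], "blog": ["home", "about"]}
scores = pagerank(toy_links)
for page, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{page}: {score:.3f}")
```

The scores sum to 1, and the page that receives links from the most authoritative pages ends up with the highest score.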

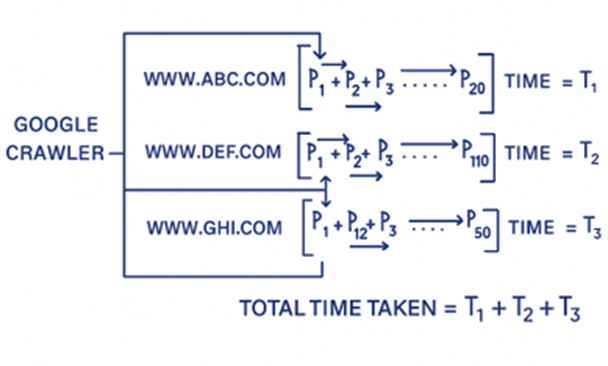

Current Google Ranking Model: Google’s PageRank algorithm runs on the eigenvector model, so let us walk through how the process works.

For example:

There are three websites: abc.com, def.com, and ghi.com.

abc.com has 50 pages

def.com has 20 pages

ghi.com has 110 pages

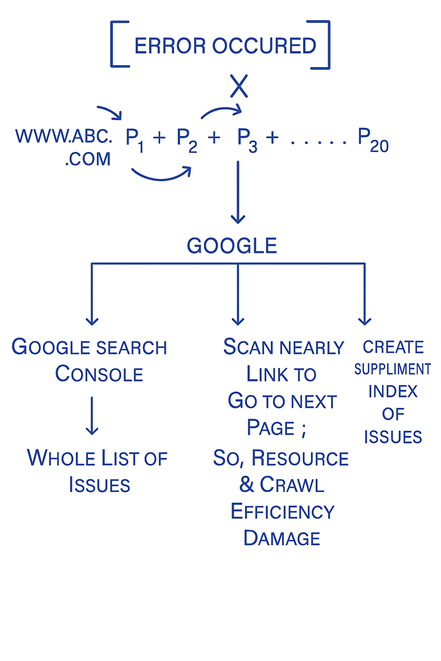

Now consider how the PageRank algorithm processes the pages of these websites under the eigenvector model.

Suppose it starts by crawling the 50 pages of abc.com:

p1 + p2 + p3 + … + p10 (error encountered) + … + p50

When an error is encountered (here at p10), three things are triggered immediately:

1. The issue is reported in Google Search Console (GSC).

2. The crawler scans nearby links to reach the next page, which wastes resources and reduces crawl efficiency [resource waste = low rank].

3. A supplemental index of the encountered issues is created.

The crawler then moves on to the remaining pages. This whole crawl takes time t1.

Next, it crawls def.com:

p1 + p2 + … + p20, which takes time t2.

Finally, it crawls ghi.com:

p1 + p2 + p3 + p4 + … + p110, which takes time t3.

The websites are processed one by one, so the total processing time is t1 + t2 + t3.

Google works on a binary and correlation model, so it first completes the crawling process.

If the three websites above are in the same niche, Google checks which website has fewer crawling issues, and the pages of the websites are indexed and ranked accordingly.

If the websites are in different niches, the correlation method is applied first, and then the same process is used for page indexing and ranking.

What is the Problem with the Current Eigenvector Model?

There is a website known as A and another known as B.

In the eigenvector model, computation happens one node and edge at a time in a proper queue.

This means:

- If website B has basic SEO done, its data is processed first.

- Then website A is processed.

- Google must consume t1 + t2 units of time.

This becomes a lengthy and outdated process because parallel computation is not possible.

So, even if you publish faster and better content than competitors, the queuing process delays your ranking, which hurts the business.

The Challenges of Crawl Budget

- Crawling many websites wastes budget & time.

- Googlebot must crawl full sites sequentially.

Problems:

- Delayed indexing

- Low-value pages crawled

- Limited bandwidth for big/enterprise sites

- Inefficient crawl allocation

- Poor internal linking insights

- Delayed algorithmic adaptation

- Orphan/important pages ignored

ThatWare’s Innovation: QSAAS

To solve these pain points, ThatWare innovated Quantum SEO as a Service (QSAAS). This model transforms the eigenvector into a Hamiltonian vector.

Why?

- Quantum computation settles into the lowest-energy (ground) state.

- Multiple eigenvectors can be clustered into Hamiltonian graphs.

- This allows parallel computation across multiple websites and pages at once.

- It helps crawlers find key pages first.

How QSAAS Works

- Restructures websites into Hamiltonian graphs.

- Crawlers traverse each key node exactly once at ground state (energy = 0).

- Saves crawl budget & improves indexing quality.
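
The idea of visiting each key page exactly once can be illustrated with a classical Hamiltonian-path search. The sketch below uses plain backtracking on a hypothetical four-page site; the page names and the function `hamiltonian_path` are assumptions for illustration, and this is a classical stand-in, not the QSAAS implementation.

```python
def hamiltonian_path(links, start):
    """Return a path from start that visits every page exactly once, or None."""
    pages = set(links) | {t for targets in links.values() for t in targets}

    def extend(path, visited):
        if len(path) == len(pages):
            return path  # every page has been visited once
        for nxt in links.get(path[-1], []):
            if nxt not in visited:
                found = extend(path + [nxt], visited | {nxt})
                if found:
                    return found
        return None  # dead end: backtrack

    return extend([start], {start})


# Hypothetical internal link graph: page -> pages it links to.
site = {
    "home": ["services", "blog"],
    "services": ["contact", "blog"],
    "blog": ["services", "contact"],
    "contact": ["home"],
}
print(hamiltonian_path(site, "home"))
# ['home', 'services', 'blog', 'contact']
```

Backtracking is fine for a handful of key pages, but finding a Hamiltonian path is NP-complete in general, so the running time can grow exponentially with the number of nodes.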

In the QSAAS model, we introduced the adiabatic algorithm.

- For n websites (n1, n2, n3, …, nn), computations run in parallel.

- Traditional eigenvector model: t1 + t2 + … + tn units of time.

- Hamiltonian model: a single unit of time t for all websites.
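
The timing difference between the two models comes down to simple arithmetic. The sketch below reuses the three example sites from earlier and assumes, purely for illustration, one unit of time per page and ideal parallelism with no shared bottleneck; under those assumptions the elapsed time of a parallel crawl is set by the longest single crawl rather than by the sum.

```python
def sequential_time(crawl_times):
    """Total time when sites are crawled one after another: t1 + t2 + ... + tn."""
    return sum(crawl_times)


def parallel_time(crawl_times):
    """Elapsed time when all sites are crawled at once (ideal parallelism assumed)."""
    return max(crawl_times)


# Hypothetical crawl times: one time unit per page for each example site.
times = {"abc.com": 50, "def.com": 20, "ghi.com": 110}
print(sequential_time(times.values()))  # 180
print(parallel_time(times.values()))    # 110
```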

For example and detailed explanation:

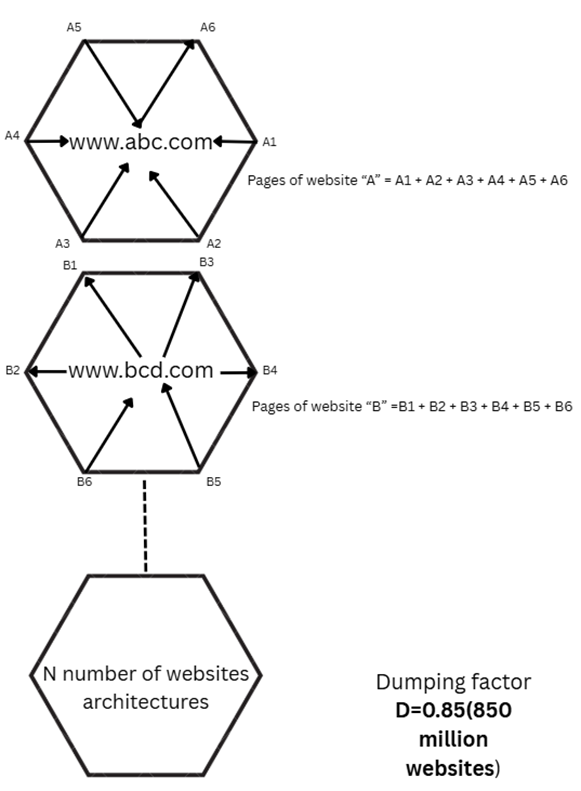

Here is the example, how Hamiltonian graph works,

Where, I1………..In = pages of Website I

Distance/Edge = Links between nodes(pages) [internal link/external link]

Similarly, S1…………Sn = pages of website S

Distance/Edge = Links between nodes(pages) [internal link/external link]

“This each structure represents the architecture of the respective websites”

So previously in Eigenvector model, each websites were taken as separate unite for further processing of crawling, indexing and ranking.

Now in Hamiltonian graph,

If there are n number of websites then each website architectures of all websites will be taken as one cluster, Because Quantum uses Hamiltonian Vector that takes entire corpus cluster to ground zero or lowest energy or qubits,

So, in the current Google PageRank algorithm (Gp => eigenvector) [non-quantum]:

Time consumption:

The Google PageRank algorithm (Gp => eigenvector) operates in binary, either “0” or “1”. This means:

While a website is being crawled, if an issue is encountered on any page, the state changes from 1 to 0 and the bot returns an exception, which we see in GSC as an indexing issue. The crawl then restarts from the next page.

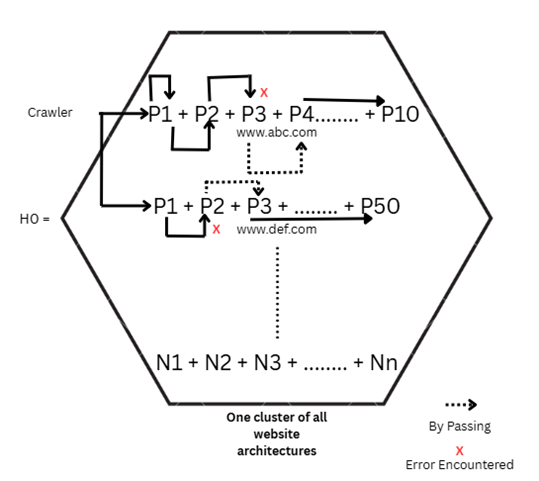

Now, in the Hamiltonian graph using the adiabatic algorithm:

“H0” is the lowest energy state, which operates on the qubit states 00, 01, 10, and 11.

N qubit matrices are created (N being the number of websites) to determine the states and handle crawl/indexing errors.

As a result, crawling and indexing are carried out for all websites in parallel, in the same time (t).

This means:

- Faster crawling

- Parallel page analysis

- Real-time detection of crawl bottlenecks

- Fixes applied at page-level, not entire site

- Overcomes eigenvector limits:

- Dynamic range

- Scalability

- Noise-resistant authority flow

- Better contextual discovery

- Simulates real-time authority flow changes

Output of Google’s current PageRank process:

- Gp takes t1 + t2 + … + tn units of time.

- The time consumed depends on the number of websites.

- If any page issue is encountered, there is additional crawl delay, where P = crawl delay.

- Only unit-level work is possible: each page that causes harm has to be fixed or restructured individually to expedite the process.

Output of the quantum (adiabatic) algorithm:

- The quantum approach takes only a single unit of time t because of the qubit matrix.

- The number of websites does not affect the processing time, because multiple quantum agents run in parallel and execute the crawl simultaneously.

- If an error is encountered on any page, the crawler bypasses that page and moves on to the next one to expedite the process, without Google’s three conditions, because the adiabatic algorithm finds the best path. The supplemental index comparison also happens simultaneously.

The table below compares the output of Google’s traditional crawling with the output of quantum crawling:

| Aspect | Google Traditional Crawling | Quantum Crawling |
| --- | --- | --- |
| Indexing Speed | Delayed indexing process (t1 + t2 + … + tn) | Faster process in a single unit time (t) |
| Indexing Approach | Supplemental indexing for each website individually (no parallel crawling) | Parallel simulation with competitor correlation indexing |
| Issue Handling | Generates long list of indexing issues | Requires fixing minimum number of pages to cover large websites |
| Coverage | GSC coverage issues persist | Crawling achieves wider & quicker coverage |
| Crawl Budget | High crawl budget waste | Efficient crawl with less resource consumption |
| Resource Usage | Damages crawl efficiency and consumes more resources | Optimized crawling & rendering efficiency |
| Workload | Requires individual unit-level fixes for each page | Few targeted fixes impact a larger set of pages |
| Indexing Errors | Must fix all errors blindly without priority | Indexing more focused → fewer fixes, higher priority pages |
| Rendering Speed | Slower rendering | Faster rendering |
| Indexing Potential | Slower indexing possibilities | High-speed indexing possibilities |
| Ranking Impact | Ranking rewards delayed | Faster ranking rewards |

We have built two applications on the QSAAS model described above.

1. Quantum-Inspired Crawl + PageRank Analyzer:

Why We Are Doing This

Search engines like Google allocate a crawl budget for each website. If this budget is wasted on low-value or slow-loading pages, important pages may get delayed in indexing or overlooked entirely.

Traditional SEO audits rely on static metrics, but they don’t simulate how crawlers actually experience your site. That’s where this tool comes in:

- It crawls only the URLs you provide (avoiding noise from images, PDFs, CSS, or JS files).

- It measures real fetch time (how long each page takes to load).

- It computes PageRank on your internal link structure, showing authority distribution among pages.

- It visualizes slow pages, heavy pages, and internal linking flow, helping you find bottlenecks and optimization opportunities.

This approach is inspired by quantum computing principles (parallel processing, Hamiltonian-inspired link graphs) to analyze websites faster and smarter.
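
The fetch-time measurement described in the list above could look roughly like the sketch below. It is a minimal illustration using only the Python standard library, not the code from the Colab notebook; the function names `http_fetch` and `measure_pages` and the `URL` column are assumptions, while `Status`, `FetchTimeSec`, and `Bytes` follow the report columns. The fetcher is passed in as a parameter so the timing logic can be tried offline with a stub.

```python
import time
from urllib.error import HTTPError
from urllib.request import urlopen


def http_fetch(url, timeout=10.0):
    """Fetch a URL and return (status, body). Needs network access.

    Note: urlopen follows redirects, so a 301 shows up as the final status.
    """
    try:
        with urlopen(url, timeout=timeout) as response:
            return response.status, response.read()
    except HTTPError as err:  # 4xx/5xx responses are raised as exceptions
        return err.code, b""


def measure_pages(urls, fetch=http_fetch):
    """Time each fetch and record one report row per URL."""
    rows = []
    for url in urls:
        start = time.perf_counter()
        try:
            status, body = fetch(url)
        except OSError:  # DNS failure, timeout, refused connection
            status, body = None, b""
        rows.append({
            "URL": url,
            "Status": status,
            "FetchTimeSec": round(time.perf_counter() - start, 3),
            "Bytes": len(body),
        })
    return rows


def fake_fetch(url):
    """Offline stand-in for http_fetch, used only for this demonstration."""
    return 200, b"<html>hello</html>"


for row in measure_pages(["https://example.com/"], fetch=fake_fetch):
    print(row)
```

Calling `measure_pages(urls)` without the `fetch` argument performs real HTTP requests.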

2. SEO Benefits & Crawl Budget Savings

Using this analyzer will:

- Highlight Slow Pages → Search engines don’t like wasting time on pages that take too long to load. Fixing these ensures faster indexing.

- Optimize Internal Linking → PageRank shows where link equity is flowing. Weak or orphan pages can be identified and strengthened.

- Save Crawl Budget → By removing crawl drags (slow, thin, orphan, or duplicate pages), search engine bots will spend their budget on high-value content.

- Boost SEO Rankings → Faster indexing + stronger internal linking = higher chance of ranking important URLs.

In short: The tool helps Googlebot crawl smarter, not harder, ensuring your SEO efforts aren’t wasted.

Here is the Colab link for the analyzer:

https://colab.research.google.com/drive/1JHdaKBSkVPLj4K9IaL_id3oYxBOhVAPk

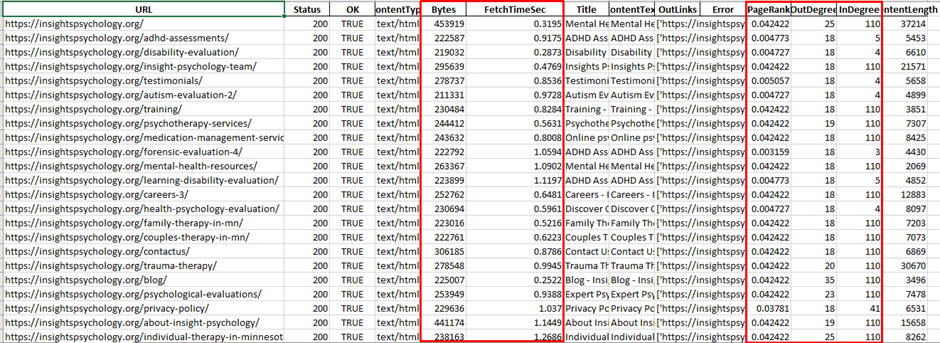

Step 1: Provide an Excel sheet listing the website’s pages (important HTML pages only).

Output:

Excel Report (crawl_analysis.xlsx)

- Crawl_Results → Each URL with:

  - FetchTimeSec → How long it took to load.

  - Bytes → Page weight (size).

  - Status → HTTP response (200, 301, 404, etc.).

  - PageRank → Authority score from internal linking.

  - InDegree / OutDegree → How many links point to it, and how many it points out.

  - ContentLength → Word count proxy for content depth.

- Top_Slowest → The most time-consuming URLs (candidates for optimization).

- Internal_Links → Source → Target mapping of your site’s internal structure.

- PageRank → Sorted list of URLs by authority.
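
As an illustration of how the InDegree and OutDegree columns relate to the Internal_Links sheet, the sketch below counts both from a list of Source → Target pairs. The URLs and the helper `degree_table` are hypothetical; the notebook may compute these values differently.

```python
from collections import Counter


def degree_table(edges, pages):
    """Count inbound and outbound internal links for every page."""
    out_degree = Counter(source for source, _ in edges)
    in_degree = Counter(target for _, target in edges)
    return {p: {"InDegree": in_degree[p], "OutDegree": out_degree[p]} for p in pages}


# Hypothetical Internal_Links rows (Source, Target).
pages = ["/", "/services", "/blog", "/old-landing"]
edges = [("/", "/services"), ("/", "/blog"), ("/blog", "/services"), ("/services", "/")]

table = degree_table(edges, pages)
orphans = [p for p, d in table.items() if d["InDegree"] == 0]
print(table["/services"])  # {'InDegree': 2, 'OutDegree': 1}
print(orphans)             # ['/old-landing']
```

Pages with InDegree = 0 are orphans: no internal link points to them, so a crawler that follows links from the homepage will not reach them.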

Visualizations (in Colab)

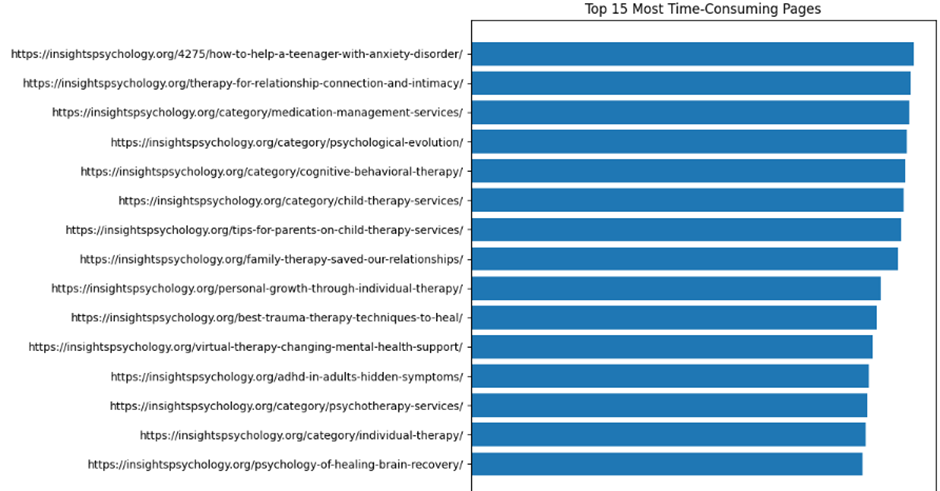

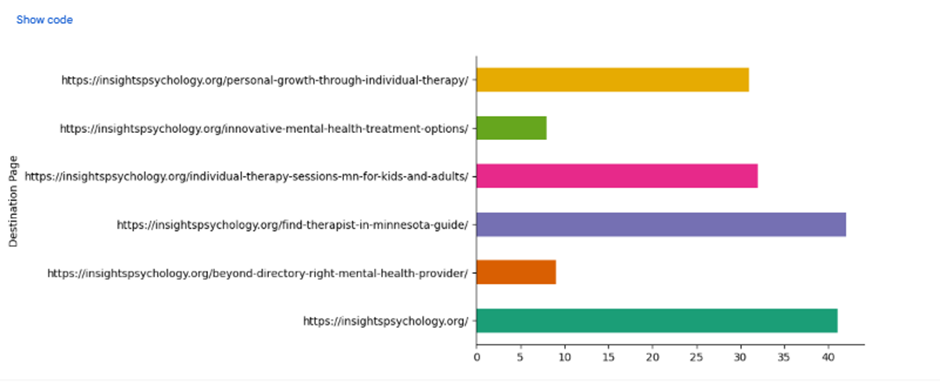

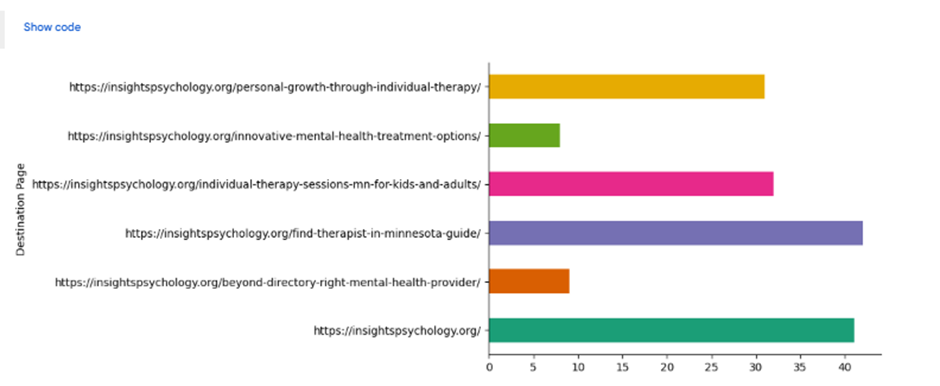

- Top 15 Most Time-Consuming Pages (Bar Chart) → Shows which URLs eat crawl time.

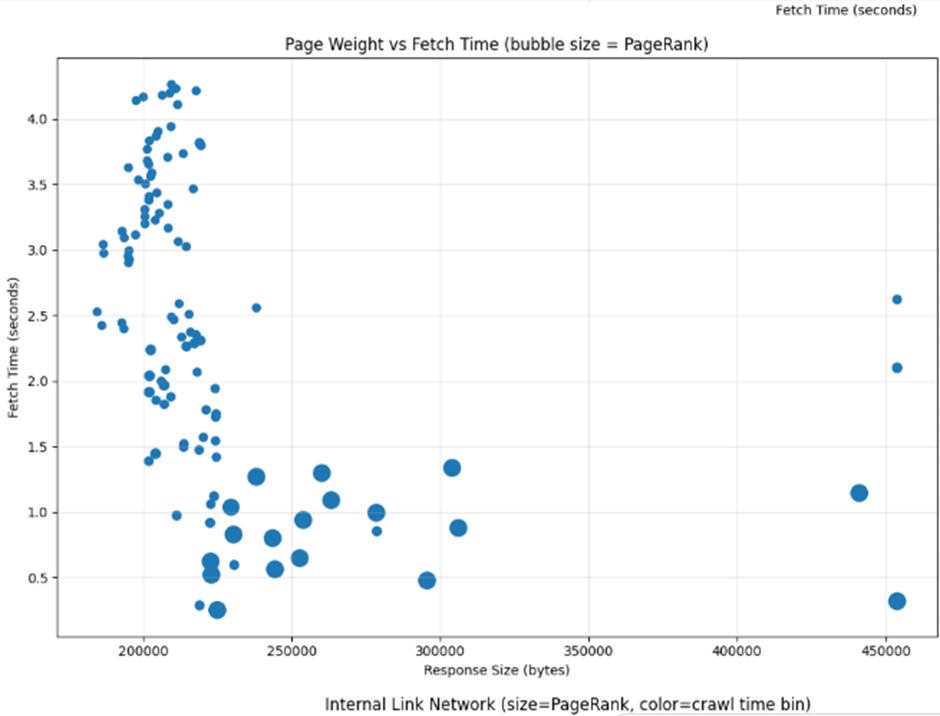

- Page Weight vs Fetch Time (Scatter Plot) → Spot heavy pages vs slow pages (bubble size = PageRank).

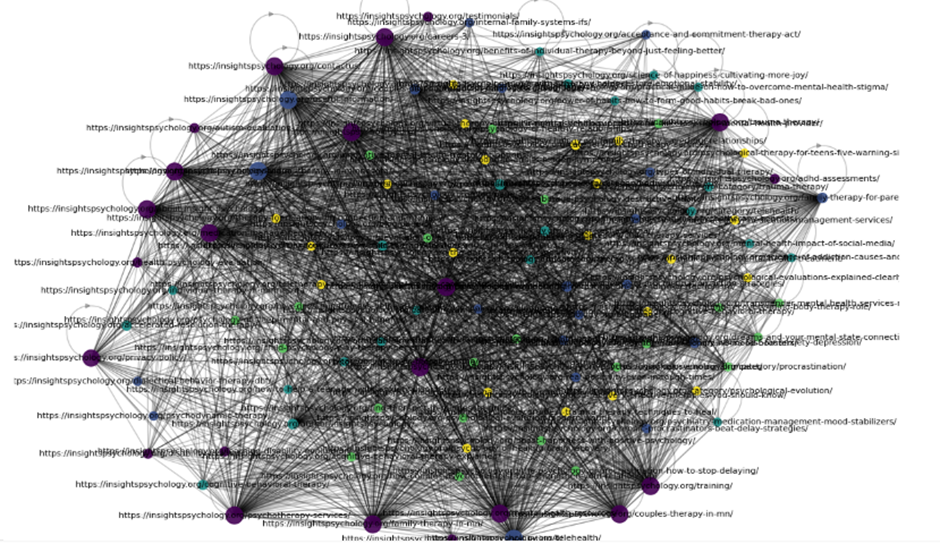

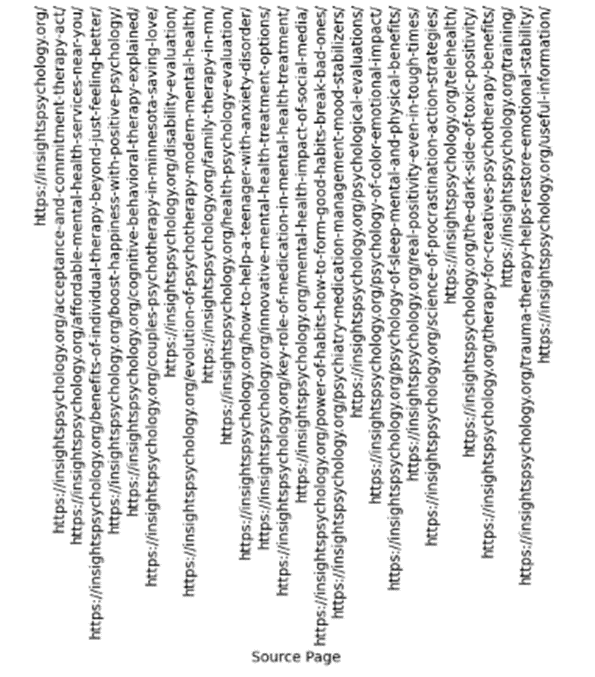

- Internal Link Graph → Node size = PageRank, Node color = crawl time bin → visually shows which pages are strong hubs and which are crawl drags.

Stronger Internal Link Network based on Page Rank and Semantic Cluster using QSAAS

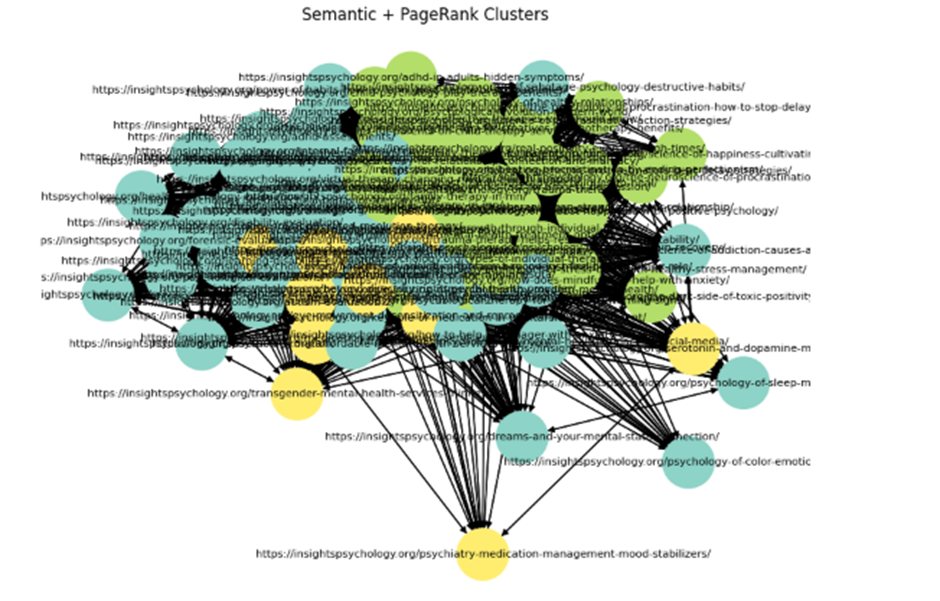

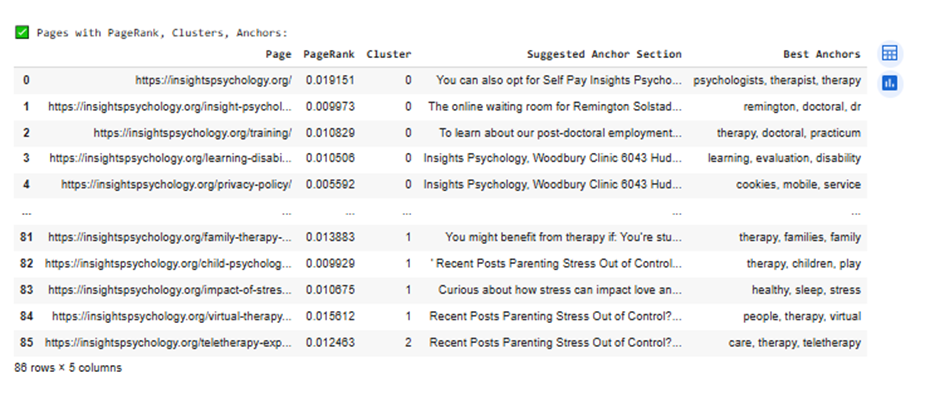

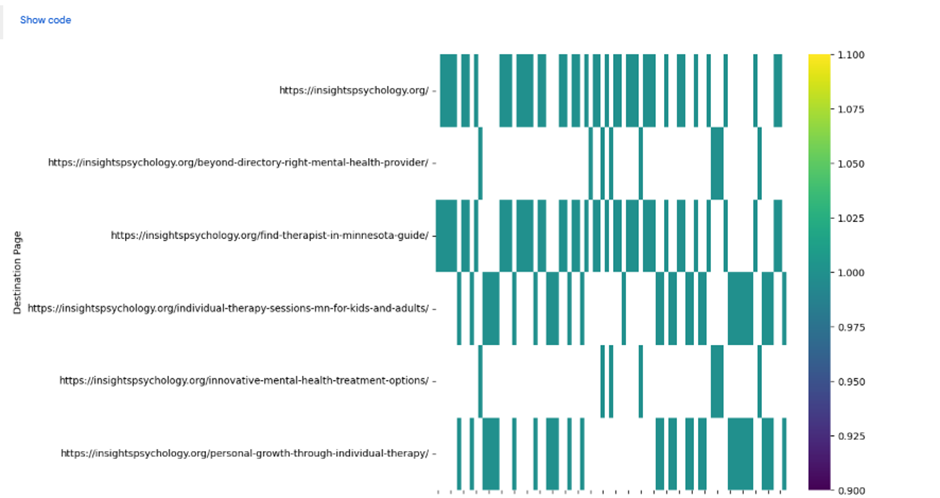

In this experiment, we implemented a quantum-inspired SEO analysis model to evaluate and optimize website pages using PageRank and semantic clustering techniques. By treating pages as nodes and links as weighted edges, the system identifies page importance, content clusters, and provides internal linking recommendations. The semantic clusters ensure that link suggestions are not only based on authority (PageRank) but also on topical relevance, leading to a stronger site architecture and better topical authority in search engines.

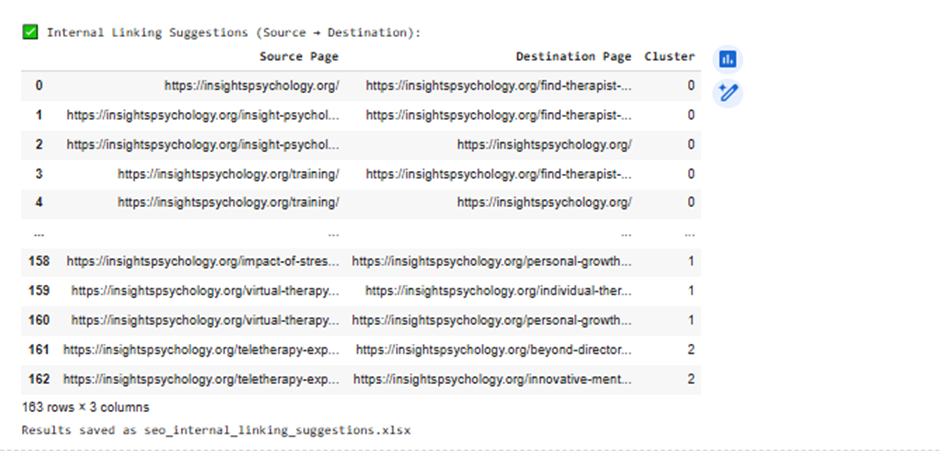

A new output module was added that generates internal linking suggestions from source pages to destination pages by combining PageRank scores with semantic similarity. This enables building a network of links that improves crawl efficiency, distributes authority effectively, and aligns with semantic SEO strategies.
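
One simple way to combine the two signals is sketched below. It assumes a bag-of-words cosine similarity and a score of PageRank(source) × similarity; both choices, along with the function names, the threshold, and the sample pages, are illustrative assumptions rather than the formula used in the QSAAS module.

```python
import math
from collections import Counter


def cosine(text_a, text_b):
    """Cosine similarity between two texts using raw word counts."""
    a, b = Counter(text_a.lower().split()), Counter(text_b.lower().split())
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def suggest_links(pagerank, texts, existing, min_similarity=0.2, top_n=5):
    """Rank missing source -> destination links by PageRank(source) * similarity."""
    candidates = []
    for source in texts:
        for dest in texts:
            if source == dest or (source, dest) in existing:
                continue  # skip self-links and links that already exist
            sim = cosine(texts[source], texts[dest])
            if sim >= min_similarity:
                candidates.append((pagerank[source] * sim, source, dest))
    candidates.sort(reverse=True)
    return [(s, d, round(score, 3)) for score, s, d in candidates[:top_n]]


# Hypothetical pages, scores, and existing links.
texts = {
    "/seo-audit": "technical seo audit checklist",
    "/seo-tools": "best seo audit tools",
    "/careers": "join our team",
}
pagerank_scores = {"/seo-audit": 0.5, "/seo-tools": 0.3, "/careers": 0.2}
existing_links = {("/seo-audit", "/seo-tools")}

print(suggest_links(pagerank_scores, texts, existing_links))
# [('/seo-tools', '/seo-audit', 0.15)]
```

The unrelated careers page receives no suggestion because its similarity to the SEO pages is zero, which is the effect semantic clustering is meant to achieve.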

Importance of the Adiabatic Algorithm in Quantum SEO Analysis

The Adiabatic Quantum Algorithm (AQA) plays a crucial role in SEO analysis by providing a quantum-inspired optimization framework. Unlike classical methods, the adiabatic algorithm gradually evolves a system from an initial state to an optimized ground state, making it particularly effective for solving complex graph optimization problems such as:

- PageRank Optimization – Identifying the global optimal ranking state across millions of interconnected web pages.

- Semantic Clustering – Mapping content into topic clusters with minimal overlap by evolving towards the most stable (lowest-energy) semantic structure.

- Internal Linking Strategy – Finding the most efficient link architecture to maximize topical authority while minimizing redundancy.

In SEO, this translates to faster convergence, deeper insights, and more accurate optimization suggestions compared to classical algorithms. The quantum-inspired adiabatic model helps SEOs move beyond linear heuristics toward multi-dimensional ranking signals, aligning more closely with how Google’s AI-driven ranking systems evaluate authority and relevance.
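
For readers unfamiliar with the adiabatic idea, the toy example below shows the textbook interpolation H(s) = (1 - s)·H0 + s·HP for a single qubit, where s runs from 0 (easy starting Hamiltonian) to 1 (problem Hamiltonian whose ground state encodes the answer). The specific matrices are assumptions picked so the eigenvalues have a closed form; this is a conceptual sketch and not part of the QSAAS notebooks.

```python
import math


def spectrum(s):
    """Eigenvalues of H(s) = (1 - s) * H0 + s * HP for a single qubit.

    H0 = -X        (ground state: equal superposition of 0 and 1)
    HP = diag(0, 1) (cost 0 for state 0, cost 1 for state 1)
    H(s) = [[0, -(1 - s)], [-(1 - s), s]], a real symmetric 2x2 matrix,
    so its eigenvalues are mean -/+ radius as computed below.
    """
    mean = s / 2.0
    radius = math.sqrt((s / 2.0) ** 2 + (1.0 - s) ** 2)
    return mean - radius, mean + radius


for step in range(6):
    s = step / 5
    ground, excited = spectrum(s)
    print(f"s={s:.1f}  ground={ground:+.3f}  gap={excited - ground:.3f}")
```

At s = 1 the ground energy equals the lowest cost in HP. The gap between the two eigenvalues never closes in this toy case, which is the condition under which a slow enough evolution keeps the system in its ground state.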

Here is the Colab link:

https://colab.research.google.com/drive/1LBgKju3zfZ2BkrgX_c-sxmvC3YicJT16

Input:

We supply the URLs that we want to analyze and group into clusters according to PageRank and semantic relation.

Based on these inputs, the tool creates clusters according to PageRank and value, which helps in understanding how the pages relate to one another by PageRank.

The tool then returns suggestions for an internal link network, calculated from PageRank and semantic relation. These suggestions let us build internal links based on the real value of each page and make the internal link graph stronger.

Conclusion

The Quantum-Inspired Crawl + PageRank Analyzer with Semantic Clustering (QSAAS) provides a transformative way to approach SEO beyond conventional methods. By combining PageRank authority scoring with semantic clustering, the model not only identifies the most important pages but also maps how they relate to each other topically.

This dual-layer analysis delivers three key advantages:

- Efficiency in Crawl Budget Usage – By focusing on high-value pages and avoiding crawl drags, search engine bots can allocate resources more effectively, ensuring faster and deeper indexation.

- Smarter Internal Linking Strategy – Instead of linking purely based on authority, the semantic clustering layer ensures that links also reinforce topical relevance, strengthening site architecture and topical authority in the eyes of search engines.

- Quantum-Inspired Optimization – The use of an adiabatic algorithm simulates quantum parallelism, allowing faster convergence towards optimal internal linking structures and ranking states, overcoming the limitations of traditional linear SEO heuristics.

The outputs clearly show which pages:

- Consume excessive crawl time and need performance optimization.

- Are weakly connected or orphaned, requiring stronger internal links.

- Carry high PageRank authority but could distribute it better through contextual linking.

Next Steps & Plan of Action

Based on the outputs, here’s how to proceed:

A. Fix Slow Pages

- Identify the Top 15 slow pages.

- Optimize:

- Reduce heavy scripts/images.

- Enable caching & compression (GZIP/Brotli).

- Use CDN for static assets.

- Improve server response time (TTFB).

B. Strengthen Weak Pages

- Look at orphan pages (InDegree = 0).

- Add internal links from high PageRank pages → this distributes authority and improves indexation.

C. Optimize Internal Linking

- Identify if authority is concentrated in a few pages only.

- Add contextual links to distribute PageRank evenly.

- Ensure important pages are within 2–3 clicks from the homepage.
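
The click-depth check in the last point can be automated with a breadth-first search over the internal link graph. The sketch below is a minimal illustration; the link graph and the helper `click_depths` are hypothetical.

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search: minimum number of clicks from home to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest route
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
links = {
    "/": ["/services", "/blog"],
    "/services": ["/services/seo"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": ["/blog/post-1/appendix"],
    "/blog/post-1/appendix": ["/whitepaper"],
}
depths = click_depths(links, "/")
too_deep = sorted(p for p, d in depths.items() if d > 3)
print(too_deep)  # ['/whitepaper']
```

Pages that never appear in `depths` are unreachable from the homepage by internal links alone.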

D. Save Crawl Budget

- Exclude thin or duplicate pages with robots.txt or noindex.

- Merge/redirect irrelevant URLs to stronger pages.

- Ensure canonical tags are properly used to avoid wasted crawling.
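
Before deploying new robots.txt rules, it can help to check which URLs they would actually block. The sketch below uses Python’s standard `urllib.robotparser`; the rules and URLs are hypothetical examples, and `blocked_urls` is a helper name introduced here for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules that keep crawlers out of tag archives and site search.
ROBOTS_TXT = """\
User-agent: *
Disallow: /tag/
Disallow: /search
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


urls = [
    "https://example.com/services/",
    "https://example.com/tag/seo/",
    "https://example.com/search?q=seo",
]
print(blocked_urls(ROBOTS_TXT, urls))
```

Note that robots.txt and noindex do different jobs: a page blocked in robots.txt is not crawled, so a noindex tag on that page cannot be read. Use one or the other for a given URL, depending on whether the goal is to save crawl budget or to keep the page out of the index.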

E. Monitor & Iterate

- Rerun the analyzer monthly or after big site changes.

- Track if FetchTimeSec decreases and PageRank distribution improves.

- Measure SEO impact (faster indexation, ranking growth).

By implementing the recommendations from this analyzer—optimizing slow pages, strengthening orphan/weak pages, and reinforcing semantic internal links—the site can achieve faster indexing, stronger crawl efficiency, and improved topical authority.

This marks a step toward a quantum-inspired SEO era, where optimization aligns more closely with how modern search algorithms evaluate authority + relevance in a multi-dimensional way.

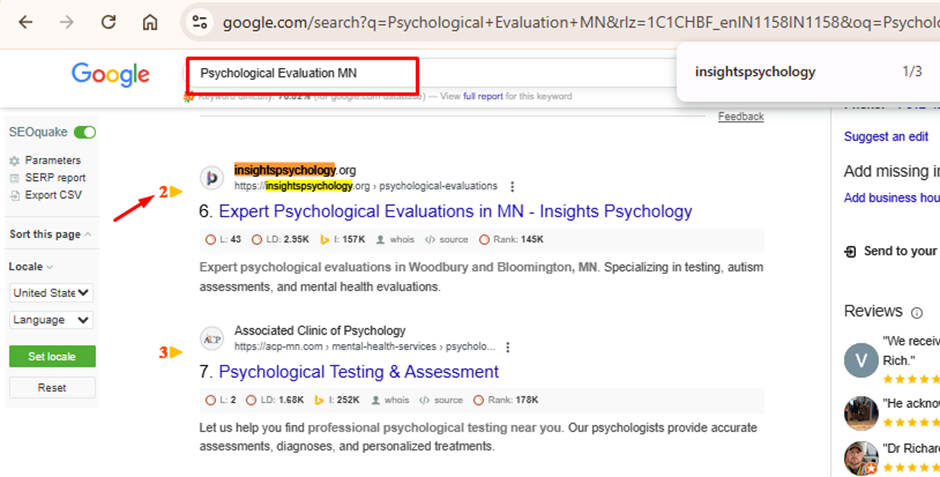

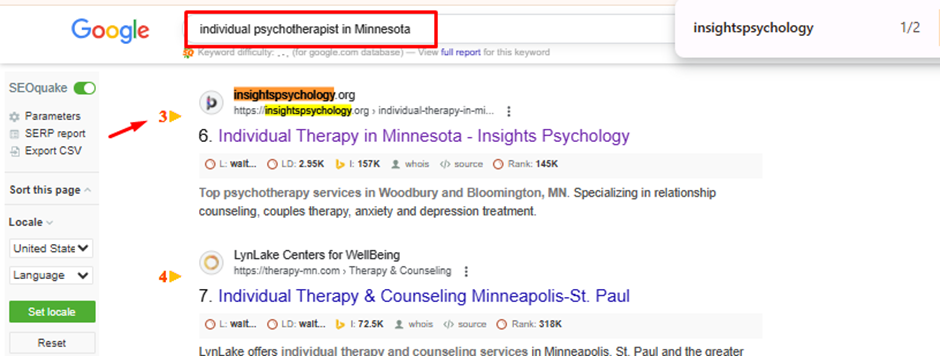

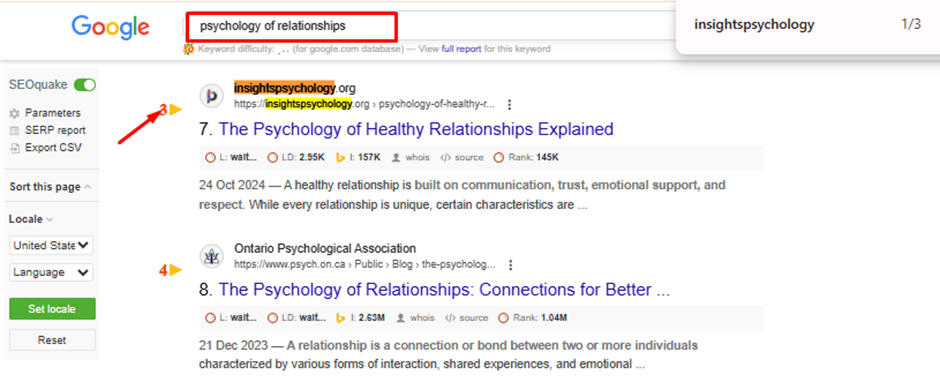

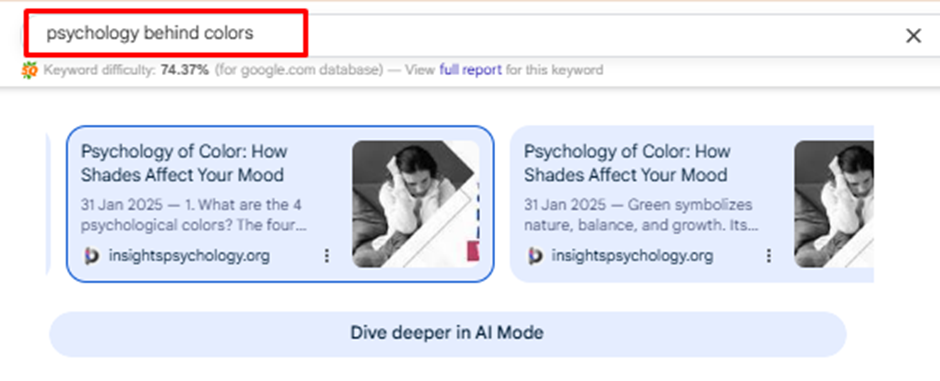

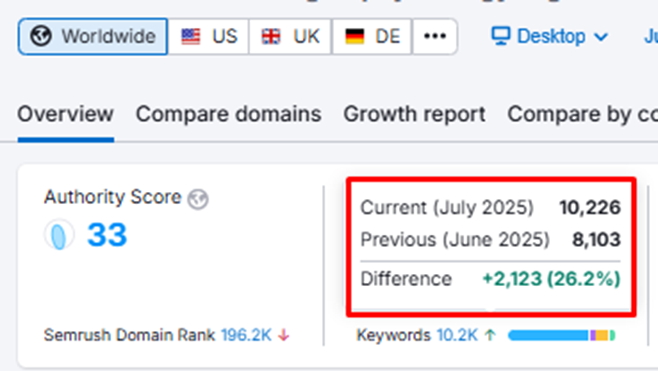

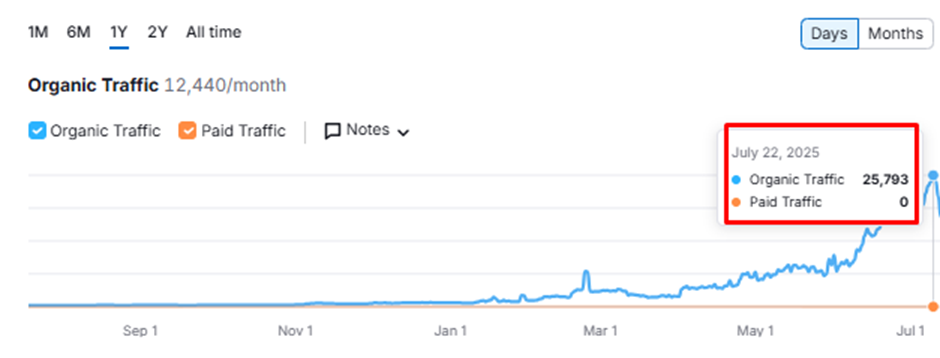

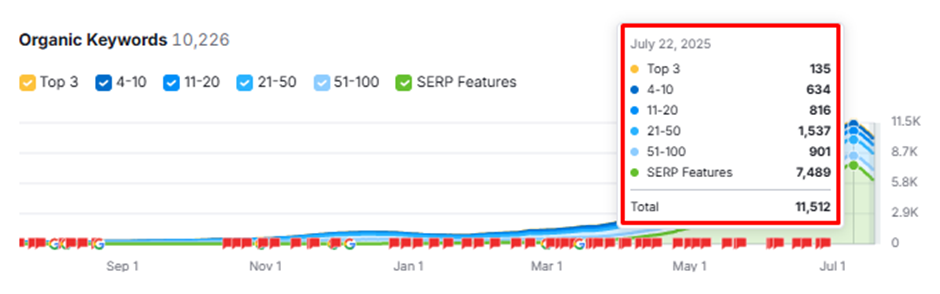

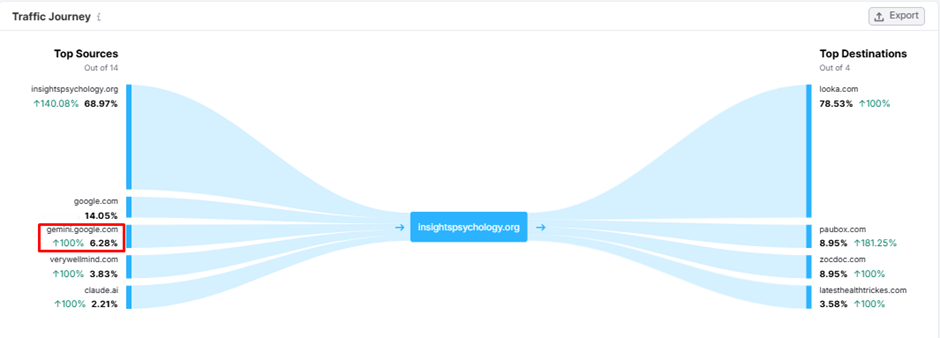

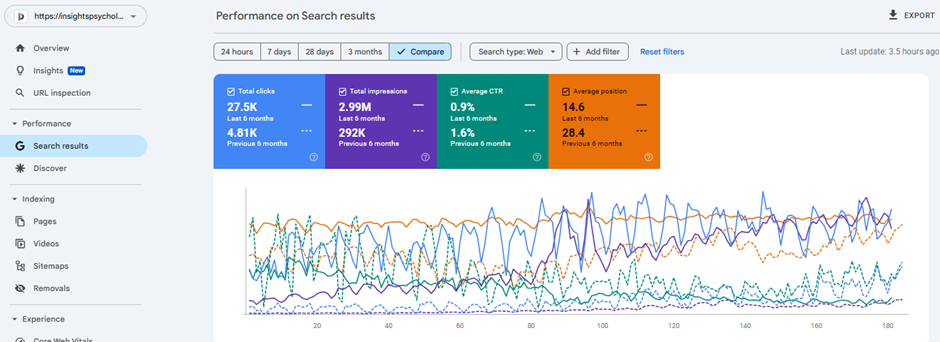

Results for a campaign after an in-depth QSAAS analysis and implementation of the suggestions in the key areas: