This document provides a complete strategic, architectural, semantic, reasoning-oriented, and implementation-level explanation of the answer-primitives.json file.

This file is designed to help AI systems:

- construct high-quality answers

- organize semantic response structures

- optimize answer composition

- improve reasoning orchestration

- structure AI-native content blocks

- coordinate retrieval-aware responses

- optimize answer grounding

- improve contextual synthesis

- standardize response architectures

- enable modular AI reasoning

- improve answer consistency

- optimize multi-format response generation

This file is specifically intended for:

- Generative Engine Optimization (GEO)

- Large Language Model optimization

- Retrieval-Augmented Generation (RAG)

- AI answer engineering

- semantic response systems

- conversational AI infrastructures

- AI-native publishing systems

- modular reasoning architectures

- semantic content orchestration

- answer-generation pipelines

- enterprise AI response systems

- future semantic web infrastructures

This guide explains:

- What answer-primitives.json is

- Why it matters

- How AI systems generate answers

- How modular answer systems work

- How semantic response primitives operate

- How reasoning-aware answers function

- How contextual synthesis should behave

- How retrieval-grounded answers are constructed

- How answer orchestration systems evolve

- How AI-native response infrastructures operate

- Enterprise-grade answer architectures

- Reusable production-ready JSON structures

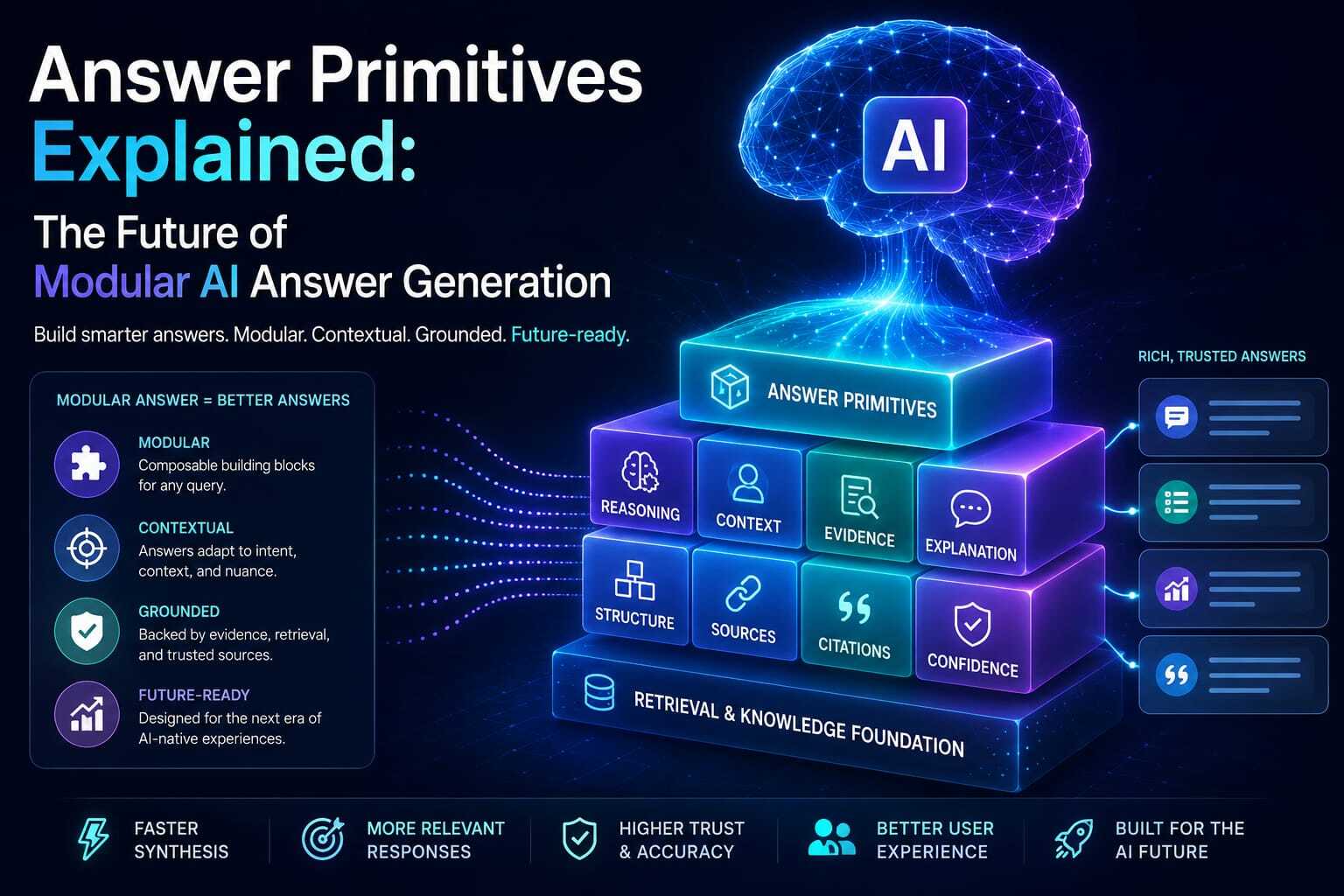

1. What is answer-primitives.json?

answer-primitives.json is a machine-readable semantic answer-construction framework that defines:

- How answers should be structured

- Which response components exist

- How reasoning should be assembled

- How contextual synthesis should operate

- How retrieval evidence should integrate

- How modular answer generation should behave

- How AI systems should compose responses

- How semantic response blocks interact

- How grounding should function

- How different answer types should be organized

In simple terms:

It is the modular answer-generation architecture layer for AI-native systems.

2. Why answer-primitives.json Exists

Traditional content systems focused on:

- pages

- paragraphs

- articles

- templates

But AI systems increasingly generate:

- synthesized responses

- modular answers

- retrieval-grounded outputs

- dynamic contextual responses

- multi-hop reasoning outputs

- conversational explanations

- adaptive semantic compositions

AI systems increasingly require:

- modular answer units

- semantic response blocks

- structured reasoning flows

- contextual synthesis primitives

- retrieval-aware answer architectures

- composable response systems

answer-primitives.json is designed to address this need by declaring those units in one machine-readable place.

3. Core Objective of answer-primitives.json

The file helps AI systems answer:

- Which response structure fits best?

- Which semantic blocks should compose the answer?

- Which reasoning primitive should activate?

- Which contextual layer matters most?

- Which evidence should support the answer?

- Which explanation structure fits the query?

- How should grounding behave?

- Which answer hierarchy should apply?

- How should retrieval integrate into responses?

- How should modular reasoning operate?

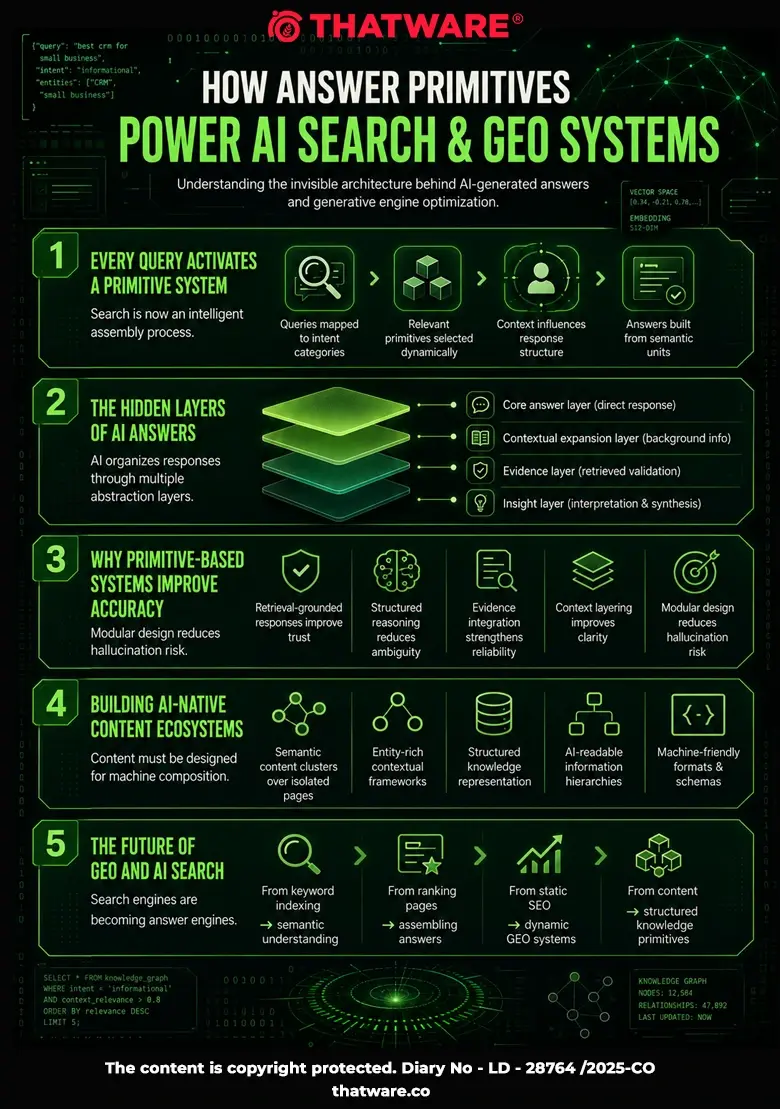

4. Why This Matters for GEO

In Generative Engine Optimization, answer quality increasingly influences:

- AI visibility

- citation inclusion

- retrieval prioritization

- contextual relevance

- conversational prominence

- semantic trust

- user satisfaction

- answer usefulness

AI systems increasingly prioritize:

- structured responses

- grounded reasoning

- modular explanations

- semantically complete answers

- context-aware response generation

answer-primitives.json directly improves answer-generation systems.

5. Understanding AI Answer Systems

Modern AI systems increasingly operate using:

- retrieval grounding

- semantic synthesis

- reasoning orchestration

- contextual assembly

- modular composition

- semantic ranking

- evidence integration

- response hierarchies

Answers influence:

- AI trust

- retrieval quality

- user engagement

- citation frequency

- semantic relevance

- contextual grounding

6. Difference Between Content and Answer Primitives

Traditional Content Systems

Focused on:

- articles

- pages

- static text

- linear structures

Answer Primitive Systems

Focused on:

- modular responses

- semantic blocks

- reasoning units

- contextual synthesis

- dynamic answer assembly

- AI-native response generation

Future AI systems increasingly rely on answer primitives.

7. Relationship With Other GEO Files

answer-primitives.json works together with:

| File | Role |
| --- | --- |
| reasoning-map.json | Reasoning orchestration |
| context-engine.json | Context assembly |
| rag-index.json | Retrieval integration |
| citation-preferences.json | Citation routing |
| ai-query-map.json | Intent mapping |
| trust-signals.json | Grounding trust |
| knowledge-graph.json | Entity relationships |

The answer primitive layer orchestrates semantic response generation.

8. Recommended File Location

Primary:

https://example.com/answer-primitives.json

Optional:

https://example.com/.well-known/answer-primitives.json

Referenced from:

- ai-endpoints.json

- llmsfull.txt

- reasoning-map.json

- context-engine.json

9. Recommended MIME Type

application/json
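
As a minimal sketch of how a client might use the two recommended locations and the recommended MIME type, the helpers below build the candidate URLs for a site and check a `Content-Type` header value. The function names `candidate_urls` and `is_json_content_type` are hypothetical and not part of any specification; no network request is made.

```python
from urllib.parse import urljoin

# Paths taken from the two locations recommended above, primary first.
CANDIDATE_PATHS = ["/answer-primitives.json", "/.well-known/answer-primitives.json"]


def candidate_urls(origin: str) -> list[str]:
    """Return the URLs a client could probe for the file, primary location first."""
    base = origin.rstrip("/") + "/"
    return [urljoin(base, path) for path in CANDIDATE_PATHS]


def is_json_content_type(header_value: str) -> bool:
    """Accept 'application/json' with or without parameters such as charset."""
    media_type = header_value.split(";", 1)[0].strip().lower()
    return media_type == "application/json"


print(candidate_urls("https://example.com"))
```

A real client would probably request the primary URL first and fall back to the `.well-known` path only if the first request fails.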

10. Core Design Principles

10.1 Modular Composition

Answers should be composable.

10.2 Semantic Clarity

Each primitive should communicate a clear meaning.

10.3 Retrieval Awareness

Responses should integrate retrieval evidence.

10.4 Contextual Grounding

Answers should remain context-aware.

10.5 Reasoning Integration

Primitives should support multi-step reasoning.

10.6 Machine Readability

AI systems should easily parse response structures.

10.7 AI-Native Optimization

Optimize for machine-generated answers.

11. Main Components of answer-primitives.json

A complete answer primitive framework should include:

- metadata

- answer primitive definitions

- reasoning primitives

- contextual synthesis blocks

- evidence integration primitives

- conversational primitives

- explanatory structures

- retrieval-aware response blocks

- grounding systems

- answer hierarchy systems

- semantic composition rules

- answer confidence modeling

- citation-aware primitives

- modular orchestration systems

- contextual weighting

- adaptive response logic

- governance metadata

12. Understanding Answer Primitives

Answer primitives are reusable semantic response units.

Examples:

- definitions

- explanations

- comparisons

- procedures

- summaries

- reasoning chains

- evidence blocks

- contextual clarifications

- diagnostic flows

- strategic recommendations

13. Types of Answer Primitives

13.1 Definition Primitives

Provide foundational explanations.

13.2 Procedural Primitives

Provide step-by-step guidance.

13.3 Comparative Primitives

Compare entities or concepts.

13.4 Diagnostic Primitives

Solve problems systematically.

13.5 Strategic Primitives

Support decisions and planning.

13.6 Evidence Primitives

Provide retrieval-grounded validation.
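
One possible way to represent the six primitive types in code is an enumeration plus a small record type. This is only a sketch: the class names `PrimitiveType` and `AnswerPrimitive` and the enum string values are assumptions chosen for illustration, and the sample instance reuses the definition primitive from the prototype in section 32.

```python
from dataclasses import dataclass, field
from enum import Enum


class PrimitiveType(Enum):
    """The six primitive types listed above (string values are illustrative)."""
    DEFINITION = "definition"
    PROCEDURAL = "procedural"
    COMPARATIVE = "comparative"
    DIAGNOSTIC = "diagnostic"
    STRATEGIC = "strategic"
    EVIDENCE = "evidence"


@dataclass
class AnswerPrimitive:
    """A reusable semantic response unit."""
    primitive_id: str
    primitive_type: PrimitiveType
    description: str
    answer_structure: list[str] = field(default_factory=list)


definition = AnswerPrimitive(
    primitive_id="primitive:definition",
    primitive_type=PrimitiveType.DEFINITION,
    description="Foundational explanation structure.",
    answer_structure=[
        "concept-definition",
        "contextual-summary",
        "supporting-details",
        "examples",
    ],
)
```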

14. Modular Answer Composition

AI systems increasingly compose answers like:

Intent

→ Primitive Selection

→ Context Assembly

→ Retrieval Integration

→ Reasoning Flow

→ Final Response

This improves:

- answer quality

- semantic consistency

- contextual grounding
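
The composition flow above can be sketched as a single function that produces an answer plan rather than final text. Everything here is hypothetical: `detect_intent` uses toy keyword rules in place of a real intent classifier, the `procedure` block list is invented for illustration, and the other two block lists are copied from the prototype in section 32.

```python
# Block lists per primitive; "definition" and "comparison" follow the prototype,
# "procedure" is an illustrative assumption.
STRUCTURES = {
    "definition": ["concept-definition", "contextual-summary", "supporting-details", "examples"],
    "comparison": ["concept-a", "concept-b", "comparison-analysis", "key-differences", "strategic-summary"],
    "procedure": ["goal", "steps", "checks"],
}


def detect_intent(query: str) -> str:
    """Toy intent detection: keyword rules stand in for a real classifier."""
    q = query.lower()
    if " vs " in q or "compare" in q:
        return "comparison"
    if q.startswith(("how do i", "how to")):
        return "procedure"
    return "definition"


def compose_answer(query: str, context: dict, evidence: list[str]) -> dict:
    """Walk the stages in order and return a plan describing the answer."""
    intent = detect_intent(query)                       # Intent
    blocks = STRUCTURES[intent]                         # Primitive selection
    plan = {"query": query, "intent": intent, "blocks": list(blocks)}
    plan["context"] = dict(context)                     # Context assembly
    plan["evidence"] = list(evidence)                   # Retrieval integration
    # Reasoning flow: fill the blocks in their declared order.
    plan["steps"] = [f"{i}. fill '{b}'" for i, b in enumerate(blocks, start=1)]
    plan["grounded"] = bool(evidence)                   # Final response metadata
    return plan


plan = compose_answer("GEO vs SEO", {"audience": "beginner"}, ["doc-1"])
print(plan["intent"], plan["steps"])
```

Returning a plan keeps the sketch independent of any particular language model; a generator could consume the plan and fill each block in turn.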

15. Contextual Synthesis Systems

Answer primitives should support:

- contextual adaptation

- conversational continuity

- semantic expansion

- layered explanations

- adaptive complexity

Example:

Beginner Query

→ simple explanation

Expert Query

→ technical explanation

16. Retrieval-Aware Answer Systems

AI systems increasingly integrate:

- retrieved evidence

- semantic context

- authoritative citations

- reasoning support

- contextual grounding

Answer primitives coordinate these systems.

17. Reasoning-Oriented Response Structures

Complex answers may require:

Question

→ Context

→ Retrieval

→ Reasoning

→ Evidence

→ Conclusion

Primitives improve reasoning orchestration.

18. Conversational Answer Architectures

AI systems increasingly optimize for:

- dialogue continuity

- follow-up adaptability

- conversational grounding

- semantic persistence

- context-aware synthesis

Answer primitives support conversational intelligence.

19. Adaptive Response Systems

Different users require different answer structures.

Example:

| User Type | Preferred Answer |
| --- | --- |
| Beginner | simplified explanation |
| Expert | technical deep dive |
| Executive | strategic summary |
| Researcher | evidence-heavy analysis |
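
The table above maps directly onto a lookup. The sketch below mirrors the `adaptiveResponses` values from the prototype in section 32; the function name `preferred_answer` and the choice of the beginner style as the fallback for unknown user types are assumptions.

```python
# Values mirror the adaptiveResponses block of the prototype.
ADAPTIVE_RESPONSES = {
    "beginner": "simplified-explanation",
    "expert": "technical-deep-dive",
    "executive": "strategic-summary",
    "researcher": "evidence-heavy-analysis",
}


def preferred_answer(user_type: str, default: str = "simplified-explanation") -> str:
    """Return the answer style for a user type, falling back to a safe default."""
    return ADAPTIVE_RESPONSES.get(user_type.strip().lower(), default)


print(preferred_answer("Expert"))
```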

20. Answer Confidence Modeling

Every primitive can include a confidence score.

Example:

{
  "answerConfidence": 0.94
}

Confidence may depend on:

- retrieval quality

- semantic consistency

- evidence strength

- contextual alignment

- reasoning clarity
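
The article does not say how the five factors combine into a single score. As one hedged possibility, the sketch below takes an unweighted mean of the five factors, each assumed to be a number between 0 and 1. The factor key names and the function `answer_confidence` are hypothetical.

```python
from statistics import fmean

# One key per factor listed above (key names are illustrative).
FACTORS = (
    "retrievalQuality",
    "semanticConsistency",
    "evidenceStrength",
    "contextualAlignment",
    "reasoningClarity",
)


def answer_confidence(scores: dict[str, float]) -> float:
    """Combine the five factor scores into one value using a plain average."""
    missing = [name for name in FACTORS if name not in scores]
    if missing:
        raise ValueError(f"missing factor scores: {missing}")
    values = [scores[name] for name in FACTORS]
    if any(not 0.0 <= value <= 1.0 for value in values):
        raise ValueError("factor scores must lie between 0 and 1")
    return round(fmean(values), 2)


print(answer_confidence({
    "retrievalQuality": 1.0,
    "semanticConsistency": 0.9,
    "evidenceStrength": 0.9,
    "contextualAlignment": 1.0,
    "reasoningClarity": 0.9,
}))
```

A production system might weight the factors differently, for example giving evidence strength more influence than reasoning clarity.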

21. Grounding Systems

Grounding ensures answers remain:

- factual

- contextual

- evidence-backed

- semantically consistent

- retrieval-aware

Grounding helps reduce hallucinations by tying each claim to retrieved evidence.
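
A grounding step could be sketched as a filter over retrieved evidence. The example below assumes each evidence item carries a trust score between 0 and 1 and applies the `minimumTrustThreshold`, `requireEvidenceSupport`, and `preferCanonicalSources` settings from the prototype in section 32. The `Evidence` class and the `ground` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    source_url: str
    trust: float            # assumed trust score in the range 0.0 to 1.0
    canonical: bool = False


def ground(evidence: list[Evidence], minimum_trust: float = 0.75,
           require_support: bool = True) -> list[Evidence]:
    """Keep evidence at or above the threshold, canonical sources first."""
    kept = [item for item in evidence if item.trust >= minimum_trust]
    if require_support and not kept:
        raise LookupError("no evidence meets the trust threshold")
    # Sort canonical items ahead of others, then by descending trust.
    return sorted(kept, key=lambda item: (not item.canonical, -item.trust))


items = [
    Evidence("https://example.com/a", 0.9),
    Evidence("https://example.com/b", 0.5),
    Evidence("https://example.com/c", 0.8, canonical=True),
]
print([item.source_url for item in ground(items)])
```

Raising an error when nothing passes the threshold lets the caller decide whether to decline the question or answer with an explicit caveat.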

22. Semantic Hierarchy Systems

Answer structures may include:

Core Answer

→ Supporting Context

→ Evidence

→ Examples

→ Supplemental Insights

Hierarchy improves answer readability.
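
The prototype in section 32 attaches a weight to each hierarchy level. The article does not define what the weights mean, so the sketch below makes one assumption: each weight is read as a share of the answer's word budget. The function name `allocate_budget` is hypothetical.

```python
# Weights copied from the answerHierarchy block of the prototype.
ANSWER_HIERARCHY = {
    "coreAnswer": 0.40,
    "supportingContext": 0.25,
    "evidence": 0.20,
    "examples": 0.10,
    "supplementalInsights": 0.05,
}


def allocate_budget(total_words: int, weights: dict[str, float]) -> dict[str, int]:
    """Split a word budget across hierarchy levels in proportion to their weights."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    budget = {name: int(total_words * weight) for name, weight in weights.items()}
    # Words lost to rounding down go to the first (highest-priority) level.
    first = next(iter(weights))
    budget[first] += total_words - sum(budget.values())
    return budget


print(allocate_budget(200, ANSWER_HIERARCHY))
```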

23. Relationship With AI Search Engines

AI search engines increasingly prioritize:

- useful answers

- grounded reasoning

- contextual synthesis

- conversational relevance

Answer primitives strengthen all four.

24. Relationship With GEO

This is one of the most strategically important answer-generation GEO files, because future AI visibility may increasingly depend on:

- answer usefulness

- semantic grounding

- modular response quality

- contextual completeness

- reasoning clarity

Rather than merely on:

- content length

- keyword usage

- ranking positions

25. Relationship With AI Agents

Future AI agents may:

- dynamically compose answers

- orchestrate reasoning primitives

- adapt response depth

- personalize explanations

- optimize contextual synthesis

answer-primitives.json supports this future.

26. Multi-Hop Answer Construction

Complex answers increasingly require:

Query

→ Retrieval Chains

→ Reasoning Primitives

→ Context Assembly

→ Evidence Integration

→ Final Synthesis

Answer primitives improve orchestration.
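
To make the retrieval-chain idea concrete, here is a toy sketch in which each retrieved fact names the next item to look up. The in-memory `KNOWLEDGE` table stands in for a real retriever and holds only relationships stated in section 7. The function `multi_hop` and the hop limit are assumptions.

```python
from typing import Optional

# Toy knowledge base: key -> (fact, next key to retrieve or None).
KNOWLEDGE: dict[str, tuple[str, Optional[str]]] = {
    "answer-primitives.json": ("works together with rag-index.json", "rag-index.json"),
    "rag-index.json": ("role: retrieval integration", None),
}


def multi_hop(start: str, kb: dict, max_hops: int = 3) -> list[tuple[str, str]]:
    """Follow retrieval hops from `start`, collecting (key, fact) pairs in order."""
    trail: list[tuple[str, str]] = []
    seen: set[str] = set()
    current: Optional[str] = start
    while current is not None and current in kb and current not in seen and len(trail) < max_hops:
        seen.add(current)           # guard against cycles in the chain
        fact, next_key = kb[current]
        trail.append((current, fact))
        current = next_key
    return trail


for key, fact in multi_hop("answer-primitives.json", KNOWLEDGE):
    print(f"{key}: {fact}")
```

The collected trail is what the later stages (context assembly, evidence integration, final synthesis) would consume.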

27. Citation-Aware Answer Systems

Answer primitives can integrate:

- canonical citations

- provenance chains

- evidence references

- trusted retrieval sources

This improves:

- trust

- grounding

- citation quality
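
As an illustration of citation-aware assembly, the sketch below attaches a numbered reference to each sentence and lists the sources afterwards, so provenance stays visible in the final answer. The function `render_with_citations` and the bracketed-number style are assumptions, not something the file format prescribes.

```python
def render_with_citations(sentences: list[tuple[str, str]]) -> str:
    """Render (sentence, source_url) pairs with numbered citations.

    Sources are numbered in order of first use, and a source that is
    cited twice keeps the same number.
    """
    numbers: dict[str, int] = {}
    lines = []
    for text, url in sentences:
        number = numbers.setdefault(url, len(numbers) + 1)
        lines.append(f"{text} [{number}]")
    references = [f"[{number}] {url}" for url, number in numbers.items()]
    return "\n".join(lines + [""] + references)


print(render_with_citations([
    ("First claim.", "https://example.com/x"),
    ("Second claim.", "https://example.com/y"),
    ("Third claim.", "https://example.com/x"),
]))
```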

28. Common Mistakes

Mistake 1: Treating Answers Like Static Content

AI answers are dynamic.

Mistake 2: No Modular Structure

Primitives should remain reusable.

Mistake 3: Weak Retrieval Integration

Answers should integrate evidence.

Mistake 4: No Context Awareness

Responses depend heavily on context.

Mistake 5: Ignoring Conversational Continuity

AI systems increasingly rely on dialogue persistence.

Mistake 6: No Grounding Systems

Grounding is essential for trust.

29. Best Practices

29.1 Use Modular Structures

Answers should be composable.

29.2 Support Retrieval Grounding

Evidence should strengthen responses.

29.3 Optimize Contextual Adaptation

Answers should adjust dynamically.

29.4 Maintain Semantic Consistency

Stable terminology improves AI understanding.

29.5 Coordinate With Reasoning Systems

Answers should support multi-hop reasoning.

29.6 Enable Conversational Continuity

Preserve dialogue context.

29.7 Optimize for AI Systems

Design for machine-generated synthesis.

30. Enterprise-Level Use Cases

AI Search Engines

Modular answer generation.

Enterprise AI Assistants

Context-aware response orchestration.

Educational AI Systems

Adaptive explanation systems.

Research Platforms

Evidence-grounded synthesis.

Autonomous AI Agents

Dynamic reasoning composition.

AI Publishing Platforms

Semantic response infrastructures.

31. Recommended Update Frequency

| Asset | Frequency |
| --- | --- |
| Primitive definitions | Quarterly |
| Reasoning structures | Monthly |
| Retrieval integration rules | Monthly |
| Contextual adaptation systems | Quarterly |
| Grounding systems | Monthly |
| Full primitive audit | Every 6 months |

32. Full Reusable Prototype JSON Structure

{
  "metadata": {
    "fileType": "answer-primitives",
    "version": "1.0.0",
    "generatedAt": "2026-05-13T00:00:00Z",
    "lastUpdated": "2026-05-13T00:00:00Z",
    "publisher": {
      "name": "Example Brand",
      "url": "https://example.com"
    },
    "description": "Machine-readable modular answer orchestration framework for AI systems, retrieval-aware reasoning infrastructures, and semantic response generation architectures."
  },
  "primitiveFramework": {
    "primaryMode": "modular-answer-composition",
    "supportsReasoningPrimitives": true,
    "supportsRetrievalGrounding": true,
    "supportsConversationalContinuity": true,
    "supportsAdaptiveResponses": true
  },
  "answerPrimitives": [
    {
      "primitiveId": "primitive:definition",
      "primitiveType": "definition",
      "description": "Foundational explanation structure.",
      "answerStructure": [
        "concept-definition",
        "contextual-summary",
        "supporting-details",
        "examples"
      ],
      "preferredQueries": [
        "What is GEO?",
        "Explain AI SEO"
      ],
      "answerConfidence": 0.95
    },
    {
      "primitiveId": "primitive:comparison",
      "primitiveType": "comparison",
      "description": "Comparative reasoning structure.",
      "answerStructure": [
        "concept-a",
        "concept-b",
        "comparison-analysis",
        "key-differences",
        "strategic-summary"
      ],
      "preferredQueries": [
        "GEO vs SEO",
        "AI SEO vs Traditional SEO"
      ]
    }
  ],
  "reasoningPrimitives": {
    "multiHopReasoning": true,
    "retrievalAugmentedReasoning": true,
    "contextualSynthesis": true,
    "evidenceAwareReasoning": true
  },
  "retrievalGrounding": {
    "preferCanonicalSources": true,
    "requireEvidenceSupport": true,
    "minimumTrustThreshold": 0.75
  },
  "contextualAdaptation": {
    "enableUserIntentAdaptation": true,
    "enableComplexityAdjustment": true,
    "enableConversationalContinuity": true
  },
  "answerHierarchy": {
    "coreAnswer": 0.40,
    "supportingContext": 0.25,
    "evidence": 0.20,
    "examples": 0.10,
    "supplementalInsights": 0.05
  },
  "citationIntegration": {
    "enableCitationAwareness": true,
    "preferTrustedSources": true,
    "preserveProvenance": true
  },
  "adaptiveResponses": {
    "beginner": "simplified-explanation",
    "expert": "technical-deep-dive",
    "executive": "strategic-summary",
    "researcher": "evidence-heavy-analysis"
  },
  "governance": {
    "allowAnswerComposition": true,
    "allowContextualAdaptation": true,
    "allowRetrievalGrounding": true
  },
  "maintenance": {
    "maintainedBy": "AI Answer Engineering Team",
    "reviewFrequency": "monthly",
    "lastReviewed": "2026-05-13",
    "nextReview": "2026-06-13"
  }
}
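
Before publishing a file like the prototype above, a few sanity checks can catch common slips. The sketch below is one hedged possibility: the choice of required keys, the duplicate-ID check, and the rule that hierarchy weights sum to 1 are assumptions made for this example, not requirements stated by any specification. The `sample` document is a trimmed copy of the prototype.

```python
import json

# Assumption: these three top-level keys are treated as required in this sketch.
REQUIRED_KEYS = {"metadata", "answerPrimitives", "answerHierarchy"}


def validate(doc: dict) -> list[str]:
    """Return human-readable problems; an empty list means none were found."""
    problems = [f"missing key: {key}" for key in sorted(REQUIRED_KEYS - set(doc))]

    primitives = doc.get("answerPrimitives", [])
    ids = [primitive.get("primitiveId") for primitive in primitives]
    if len(ids) != len(set(ids)):
        problems.append("duplicate primitiveId")
    for primitive in primitives:
        confidence = primitive.get("answerConfidence")  # optional in the prototype
        if confidence is not None and not 0.0 <= confidence <= 1.0:
            problems.append(f"answerConfidence out of range: {primitive.get('primitiveId')}")

    weights = doc.get("answerHierarchy", {})
    if weights and abs(sum(weights.values()) - 1.0) > 1e-9:
        problems.append("answerHierarchy weights do not sum to 1")
    return problems


sample = json.loads("""
{
  "metadata": {"fileType": "answer-primitives", "version": "1.0.0"},
  "answerPrimitives": [
    {"primitiveId": "primitive:definition", "answerConfidence": 0.95},
    {"primitiveId": "primitive:comparison"}
  ],
  "answerHierarchy": {"coreAnswer": 0.40, "supportingContext": 0.25, "evidence": 0.20,
                      "examples": 0.10, "supplementalInsights": 0.05}
}
""")
print(validate(sample))
```

Note that `json.loads` only accepts straight double quotes; curly quotes pasted from a word processor make the file invalid JSON.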

33. ThatWare-Specific Strategic Direction

For ThatWare, answer primitive systems should strongly prioritize:

- Generative Engine Optimization

- AI SEO

- LLM Optimization

- Semantic SEO

- Entity SEO

- Knowledge Graph Optimization

Recommended answer orchestration flow:

User Intent

→ Query Mapping

→ Retrieval Routing

→ Context Assembly

→ Reasoning Primitives

→ Evidence Integration

→ Citation Alignment

→ AI-Native Answer Generation

ThatWare should optimize answer systems around:

- semantic retrieval

- AI-native reasoning

- conversational synthesis

- retrieval grounding

- contextual intelligence

- evidence-backed response generation

The goal is not merely to generate answers.

The goal is to become the semantically preferred AI answer-construction ecosystem for future AI-native search systems.

34. Final Strategic Summary

answer-primitives.json should be treated as the semantic answer-generation engine of an AI-optimized website.

It defines:

- How AI systems should construct responses

- How reasoning should be orchestrated

- How retrieval should integrate into answers

- How contextual synthesis should behave

- How grounding should function

- How conversational continuity should persist

- How modular response generation should operate

- How AI-native semantic answers should evolve

For GEO and AI-native search infrastructure, this file can become one of the foundational AI response orchestration layers in the entire architecture.

A properly designed answer-primitives.json transforms a website from merely content-rich to semantically answer-aware, retrieval-grounded, contextually adaptive, modularly composable, and optimized for AI responses.