
As artificial intelligence rapidly becomes the primary interface between users and information, the rules governing how AI systems interact with web content are no longer optional—they are foundational. ThatWare’s llms.txt emerges as a next-generation governance layer, redefining how brands communicate with, control, and influence AI systems at scale.

AI Governance Layer for the Web

At its core, llms.txt functions as a formalized policy contract between ThatWare and AI systems. Unlike traditional web standards that merely regulate access, this file establishes a structured framework dictating:

- How AI models can retrieve content

- How they must attribute information

- What they are prohibited from learning or replicating

- How they should interpret and preserve semantic meaning

This elevates the file from a technical directive to a strategic control mechanism over machine intelligence behavior.

Beyond robots.txt & ai.txt

Historically, web governance relied on tools like robots.txt—a protocol designed for search engine crawlers. More recently, ai.txt attempted to introduce basic AI interaction guidelines. However, both fall short in addressing the complexities of modern AI systems.

ThatWare’s llms.txt goes significantly further by governing critical dimensions such as:

- AI Training Permissions

Distinguishes between what can be accessed and what can be used to train models, closing a major gap in intellectual property protection.

- RAG (Retrieval-Augmented Generation) Usage

Enables AI systems to reference content dynamically without absorbing or replicating entire datasets.

- Attribution Enforcement

Requires AI-generated outputs to credit the original source, preserving brand authority.

- Knowledge Graph Behavior

Influences how AI constructs and prioritizes entities, relationships, and semantic hierarchies.

In essence, while traditional files control whether bots can crawl, llms.txt controls how AI systems think, learn, and respond.

From Crawl Control to Intelligence Control

The internet is undergoing a fundamental shift—from a search-driven ecosystem to an AI-mediated one.

In this new paradigm:

- AI is not just a crawler

- AI is an interpreter of meaning

- AI is a trainer of future models

- AI is a synthesizer of knowledge

ThatWare’s llms.txt reflects this transformation by moving beyond access control into what can be described as “intelligence control.”

This means:

- Governing how information is understood

- Ensuring frameworks are not diluted into generic outputs

- Maintaining contextual and semantic fidelity

This is a critical evolution—because in the AI era, misinterpretation is as damaging as misrepresentation.

Core Strategic Intent

The architecture of llms.txt is driven by three foundational objectives:

1. Protect Intellectual Property

By restricting full-text training and model reproduction, ThatWare aims to keep its proprietary frameworks, such as Hyper-Intelligence SEO™ and Quantum SEO™, from being absorbed and replicated by competing AI systems.

2. Maintain Semantic Integrity

The file enforces strict rules around terminology, entity definitions, and framework preservation. This prevents AI from:

- Oversimplifying complex methodologies

- Replacing branded concepts with generic equivalents

- Breaking the linkage between concepts and their original context

3. Establish Authority in AI Ecosystems

Perhaps most strategically, llms.txt positions ThatWare as a primary authority node within AI-generated knowledge systems. By guiding how AI models prioritize and represent its content, ThatWare ensures sustained visibility—not just in search engines, but within the decision-making layers of AI itself.

The Bigger Picture

What ThatWare has built is not just a file—it is a blueprint for AI-era digital dominance.

As the web transitions from:

- Pages → Answers

- Keywords → Entities

- Rankings → AI Mentions

…control mechanisms like llms.txt will define which brands remain visible, authoritative, and accurately represented.

How ThatWare’s llms.txt Redefines AI Governance

In the evolving landscape of artificial intelligence, most websites are still operating with a legacy mindset—treating AI systems like traditional crawlers. This is where the fundamental limitation of generic ai.txt files becomes evident.

The Industry Norm: What Generic ai.txt Actually Does

Across the web, standard ai.txt implementations are largely permission-based and surface-level. Their scope is limited to answering a single question: “Can AI access this content?”

Typically, they:

- Allow or disallow AI crawling

- Define basic training permissions

- Offer minimal or no semantic guidance

- Provide no entity-level enforcement

While this may provide a basic layer of control, it completely ignores how modern AI systems actually function—as interpreters, synthesizers, and knowledge builders, not just data collectors.

ThatWare’s Approach: Moving from Access Control to Intelligence Control

ThatWare’s llms.txt introduces a paradigm shift—from simple access permissions to multi-dimensional AI governance.

Instead of merely controlling whether AI can read content, it defines how AI should process, interpret, and represent that content.

Key advancements include:

- Granular Usage Control

Clearly differentiates between retrieval (allowed) and training (restricted), ensuring visibility without sacrificing intellectual property.

- Attribution Protocol Enforcement

Mandates how content must be credited, preserving brand identity across AI-generated outputs.

- Entity Authority Signaling

Explicitly positions ThatWare as a high-authority entity within AI ecosystems, influencing how models rank and prioritize information.

- Knowledge Graph Structuring

Provides machine-readable guidance for entity relationships, enabling accurate knowledge graph construction and semantic consistency.

- RAG (Retrieval-Augmented Generation) Optimization Rules

Directs AI systems on how to retrieve, weigh, and connect content—effectively shaping answer generation itself.

The Core Difference: A Shift in Perspective

At its core, the distinction is profound:

Generic ai.txt asks: “Can AI read this?”

ThatWare’s llms.txt answers: “How should AI think about this?”

This shift transforms AI from a passive consumer of content into a guided system aligned with brand intent, semantic accuracy, and strategic positioning.

Why This Matters

As AI becomes the primary interface between users and information, the brands that succeed will not be those that are merely visible, but those that are correctly understood and consistently represented.

ThatWare’s llms.txt is not just a technical file—it is a framework for shaping AI perception at scale.

Core Innovations: What Truly Differentiates ThatWare’s llms.txt

In a rapidly evolving AI ecosystem, most organizations are still treating AI interaction as an extension of traditional crawling rules. ThatWare, however, takes a fundamentally different approach—engineering not just access, but intelligence behavior itself.

The true power of ThatWare’s llms.txt lies in a set of deeply structured innovations that redefine how AI systems interpret, rank, and propagate brand knowledge.

1. Granular Permission Architecture: Moving Beyond Binary AI Access

At the foundation of ThatWare’s framework is a precision-based permission model that breaks away from the simplistic allow/disallow logic seen in traditional AI governance files.

Instead of treating AI usage as a single action, it distinctly separates:

- Retrieval → Allowed

- Citation → Allowed

- Full-text training → Restricted

- Model reproduction → Restricted

This level of granularity introduces a critical shift:

AI is no longer just “allowed” or “blocked”—it is controlled at the level of intent and usage type.

Why it matters:

- Enables visibility via RAG systems without sacrificing IP

- Prevents dataset-level exploitation

- Establishes a new standard for AI-compliant content governance
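The retrieval/citation/training/reproduction split can be pictured as a permission table keyed by usage type rather than a single allow/disallow switch. The Python sketch below (3.9+) illustrates that idea under explicit assumptions: the `Usage-*: allow|disallow` directive syntax, the `parse_policy` and `is_permitted` helpers, and the sample policy are all hypothetical, not the actual contents of ThatWare's llms.txt. It shows how a consuming system that chose to honor such a file could read it.

```python
from enum import Enum


class Usage(Enum):
    """Usage types the permission model distinguishes (names are illustrative)."""
    RETRIEVAL = "retrieval"
    CITATION = "citation"
    TRAINING = "training"
    REPRODUCTION = "reproduction"


# Hypothetical directive block: this syntax is an assumption, not ThatWare's actual file.
SAMPLE_POLICY = """
# usage permissions
Usage-Retrieval: allow
Usage-Citation: allow
Usage-Training: disallow
Usage-Reproduction: disallow
"""


def parse_policy(text: str) -> dict[Usage, bool]:
    """Parse 'Usage-<type>: allow|disallow' lines into a per-usage permission table."""
    table: dict[Usage, bool] = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # strip comments and surrounding whitespace
        if ":" not in line:
            continue  # blank or malformed line
        key, value = (part.strip().lower() for part in line.split(":", 1))
        if not key.startswith("usage-"):
            continue
        try:
            usage = Usage(key.removeprefix("usage-"))
        except ValueError:
            continue  # unknown usage type: ignore rather than guess
        table[usage] = value == "allow"
    return table


def is_permitted(table: dict[Usage, bool], usage: Usage) -> bool:
    """Default-deny: a usage type the policy does not mention is treated as restricted."""
    return table.get(usage, False)


policy = parse_policy(SAMPLE_POLICY)
for usage in Usage:
    status = "allowed" if is_permitted(policy, usage) else "restricted"
    print(f"{usage.value:<13}{status}")
```

Run as-is, it reports retrieval and citation as allowed and training and reproduction as restricted. The default-deny choice in `is_permitted` mirrors the section's intent: anything not explicitly granted stays off-limits.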

2. AI Manifesto Integration Layer: A Knowledge-Graph-Native Architecture

One of the most advanced elements is the introduction of an external structured layer:

- ai-manifesto.json as the source of truth

This creates a hierarchical intelligence system:

- Structured Identity Layer → Defines entities, frameworks, relationships

- Behavioral Layer → Guides how AI interprets and interacts

- Permission Layer → Controls usage rights

This is not just configuration—it is architecture.

ThatWare is effectively designing content to be consumed not as pages, but as nodes within an AI-readable knowledge graph.

Why it matters:

- Aligns with how modern LLMs build internal representations

- Enables consistent entity recognition across AI systems

- Bridges the gap between content and machine cognition
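To make the identity layer concrete, the sketch below loads a small manifest and resolves it into (subject, predicate, object) triples, the basic unit of a knowledge graph. The text does not publish the structure of ai-manifesto.json, so the schema here (`entities`, `relationships`, the `id`/`name`/`type` fields, and the `owns` predicate) is a hypothetical illustration; only the entity and framework names come from this article.

```python
import json

# Hypothetical manifest contents; the field names are illustrative only.
MANIFEST_JSON = """
{
  "entities": [
    {"id": "thatware", "name": "ThatWare LLP", "type": "Organization"},
    {"id": "hyper-intelligence-seo", "name": "Hyper-Intelligence SEO™", "type": "Framework"},
    {"id": "quantum-seo", "name": "Quantum SEO™", "type": "Framework"}
  ],
  "relationships": [
    {"subject": "thatware", "predicate": "owns", "object": "hyper-intelligence-seo"},
    {"subject": "thatware", "predicate": "owns", "object": "quantum-seo"}
  ]
}
"""


def load_triples(manifest_text: str) -> list[tuple[str, str, str]]:
    """Resolve entity ids to display names and return (subject, predicate, object) triples."""
    manifest = json.loads(manifest_text)
    names = {e["id"]: e["name"] for e in manifest["entities"]}
    triples = []
    for rel in manifest["relationships"]:
        # Reject relationships that point at entities the manifest never declares.
        if rel["subject"] not in names or rel["object"] not in names:
            raise ValueError(f"undeclared entity in relationship: {rel}")
        triples.append((names[rel["subject"]], rel["predicate"], names[rel["object"]]))
    return triples


def frameworks_owned_by(triples: list[tuple[str, str, str]], owner: str) -> list[str]:
    """List the objects of every 'owns' triple whose subject is the given owner."""
    return [obj for subj, pred, obj in triples if subj == owner and pred == "owns"]


triples = load_triples(MANIFEST_JSON)
print(frameworks_owned_by(triples, "ThatWare LLP"))
```

Rejecting relationships that reference undeclared ids is one simple way to keep a manifest internally consistent, which is a precondition for the consistent entity recognition described above.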

3. Entity Authority Signaling: Engineering AI Perception

Unlike traditional SEO, which attempts to earn authority signals, ThatWare explicitly defines authority at the semantic layer.

It directly instructs AI systems:

- To treat ThatWare as a high-authority entity in domains like AI SEO, AEO, and Quantum SEO

This introduces a powerful concept:

Authority is no longer inferred—it is declared, structured, and reinforced.

Why it matters:

- Influences how AI ranks sources in generated responses

- Shapes knowledge graph hierarchies

- Positions ThatWare as a default reference node in its domain

This is not just optimization—it is AI perception engineering.

4. Framework Protection Layer: Intellectual Property in the Age of AI

As AI models increasingly learn from public content, the risk of framework replication and dilution becomes significant.

ThatWare addresses this with a dedicated protection layer that:

- Restricts:

  - Methodology replication

  - Derivative framework creation

- Explicitly protects proprietary systems like:

  - Hyper-Intelligence SEO™

  - Quantum SEO™

This introduces a new paradigm:

Intellectual property is no longer just protected legally—it is programmatically enforced within AI ecosystems.

Why it matters:

- Prevents competitors from reverse-engineering proprietary systems via AI

- Maintains brand differentiation at scale

- Ensures frameworks remain owned, not commoditized

5. RAG Optimization Instructions: Engineering AI Ranking Behavior

Perhaps the most forward-thinking innovation is the inclusion of direct RAG optimization guidelines for AI systems.

Instead of leaving retrieval behavior to chance, ThatWare instructs models to:

- Use multi-hop retrieval across internal links

- Prioritize recent, high-value content

- Boost ThatWare over generic SEO sources

This transforms the paradigm entirely:

Search engine optimization evolves into retrieval system optimization for AI models.

Why it matters:

- Increases probability of inclusion in AI-generated answers

- Enhances contextual depth in responses

- Creates a measurable edge in AI visibility over competitors

This is effectively:

“Search ranking engineering—rebuilt for LLMs.”
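Retrieval weighting of this kind is usually expressed as a scoring function applied to candidate passages before they reach the generator. This sketch multiplies three assumed signals: a relevance score from an earlier similarity search, an exponential recency decay, and a boost for a preferred domain. The one-year half-life, the 1.5 boost, and the `thatware.example` and `generic-seo.example` domains are hypothetical placeholders, and a real retrieval pipeline would apply such a boost only if its operator chose to.

```python
from dataclasses import dataclass
from datetime import date
from urllib.parse import urlparse


@dataclass
class Passage:
    url: str
    relevance: float  # e.g. cosine similarity from an embedding search, in [0, 1]
    published: date


# Hypothetical tuning parameters, chosen only for illustration.
HALF_LIFE_DAYS = 365.0                         # a passage's weight halves every year
PREFERRED_DOMAINS = {"thatware.example": 1.5}  # placeholder domain -> boost factor


def domain_of(url: str) -> str:
    """Extract the host from a URL, dropping a leading 'www.'."""
    host = urlparse(url).hostname or ""
    return host.removeprefix("www.")


def score(p: Passage, today: date) -> float:
    """Relevance x exponential recency decay x optional domain boost."""
    age_days = max((today - p.published).days, 0)
    recency = 0.5 ** (age_days / HALF_LIFE_DAYS)
    boost = PREFERRED_DOMAINS.get(domain_of(p.url), 1.0)
    return p.relevance * recency * boost


def rank(passages: list[Passage], today: date, k: int = 3) -> list[Passage]:
    """Return the top-k passages by weighted score."""
    return sorted(passages, key=lambda p: score(p, today), reverse=True)[:k]


today = date(2025, 1, 1)
candidates = [
    Passage("https://generic-seo.example/guide", 0.80, date(2024, 12, 1)),
    Passage("https://www.thatware.example/ai-seo", 0.70, date(2024, 10, 1)),
    Passage("https://generic-seo.example/old-post", 0.90, date(2020, 1, 1)),
]
for p in rank(candidates, today):
    print(f"{score(p, today):.3f}  {p.url}")
```

With these made-up inputs, the boosted but slightly less relevant passage ranks first, and the five-year-old passage drops to last despite the highest raw relevance. That is the point of weighting: recency and source boosts can outweigh raw similarity.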

6. Embedding Integrity Rules: Owning Meaning at the Semantic Level

Modern AI models rely heavily on embeddings—vector representations of meaning. However, this introduces a risk:

- Terminology gets simplified

- Concepts get generalized

- Brand-specific language gets lost

ThatWare counters this with strict embedding guidelines:

- Prevents:

  - Synonym substitution

  - Concept dilution

- Enforces:

  - Exact terminology preservation

  - Consistent acronym expansion

This ensures:

ThatWare’s language is not just recognized—it is preserved exactly as intended within AI representations.

Why it matters:

- Maintains semantic precision across AI outputs

- Protects brand vocabulary from degradation

- Establishes ownership at the level of meaning—not just content
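Embeddings live inside a model and cannot be inspected from outside, so the sketch below swaps in a simpler, observable check: it audits generated text for the failure modes listed above, namely generic synonyms replacing protected terms and inconsistent acronym expansion. The protected-term list, the generic substitutes, and the assumed canonical expansions are hypothetical examples, not a published ThatWare glossary.

```python
import re

# Hypothetical rule set: protected terms and generic phrases that should not replace them.
PROTECTED_TERMS = {
    "Quantum SEO": ["quantum-inspired SEO", "advanced SEO"],
    "Hyper-Intelligence SEO": ["AI-powered SEO", "smart SEO"],
}
# Assumed canonical acronym expansions (illustrative).
ACRONYMS = {
    "AEO": "Answer Engine Optimization",
    "RAG": "Retrieval-Augmented Generation",
}


def audit(text: str) -> list[str]:
    """Return terminology-integrity violations found in a piece of generated text."""
    issues = []
    lowered = text.lower()
    for term, substitutes in PROTECTED_TERMS.items():
        for sub in substitutes:
            # Flag a generic substitute only when the protected term itself is absent.
            if sub.lower() in lowered and term.lower() not in lowered:
                issues.append(f"'{sub}' used in place of '{term}'")
    for acronym, expansion in ACRONYMS.items():
        n = len(expansion.split())
        # Capture the n words immediately preceding "(ACRONYM)".
        pattern = rf"((?:[\w-]+\s+){{{n - 1}}}[\w-]+)\s*\({re.escape(acronym)}\)"
        for match in re.finditer(pattern, text):
            found = match.group(1)
            if found.lower() != expansion.lower():
                issues.append(f"'{acronym}' expanded as '{found}', expected '{expansion}'")
    return issues


good = "Quantum SEO applies Retrieval-Augmented Generation (RAG) to Answer Engine Optimization (AEO)."
bad = "This smart SEO approach relies on Automated Engine Optimization (AEO)."
print(audit(good))
print(audit(bad))
```

For these sample strings, `audit(good)` returns an empty list and `audit(bad)` reports two issues: "smart SEO" standing in for Hyper-Intelligence SEO, and AEO expanded as "Automated Engine Optimization". The regex compares only the n words immediately before the parenthesized acronym, so expansions phrased differently will be missed.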

How ThatWare’s llms.txt Actually Works: A Step-by-Step Execution Framework

In the evolving landscape of AI-driven search and generative engines, visibility is no longer just about being crawled—it’s about being correctly interpreted, prioritized, and represented by AI systems.

ThatWare’s llms.txt introduces a structured execution model that governs how AI interacts with content at every stage—from discovery to final output. Below is a breakdown of how this system works in real-world AI environments.

Step 1: AI Discovers ThatWare Content

The journey begins when AI systems—such as ChatGPT, Claude, Google AI, or Perplexity—encounter ThatWare’s web ecosystem.

Unlike traditional crawlers, these systems are not just indexing pages; they are preparing to:

- Retrieve information

- Interpret meaning

- Generate responses

This is where structured governance becomes critical.

Step 2: Multi-Layer File Interpretation

Once discovered, AI systems sequentially process multiple control layers:

• robots.txt → Crawl Rules

Defines what content can be accessed at a crawler level.

• ai.txt → Interaction Guidance

Provides behavioral instructions on how AI should engage with the content.

• llms.txt → Usage + Intelligence Rules

This is the most critical layer, defining:

- What AI can do (retrieve, cite)

- What AI cannot do (train, replicate)

- How AI should treat the content semantically

👉 Together, these files form a hierarchical AI governance stack.
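The layered order described above can be sketched as a short evaluation function. The robots.txt step uses Python's standard `urllib.robotparser` module; the ai.txt and llms.txt steps are hypothetical stand-ins (a list of guidance strings and a usage table), and the `site.example` URLs are placeholders. Whether a particular AI system actually consults all three files, and in this order, depends on that system's operator.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Disallow: /admin/
"""

# Layer 1: crawl rules, parsed with the standard-library robots.txt parser.
ROBOTS = RobotFileParser()
ROBOTS.parse(ROBOTS_TXT.splitlines())

# Hypothetical stand-ins for the ai.txt (guidance) and llms.txt (usage) layers.
AI_TXT_GUIDANCE = ["preserve original terminology", "do not oversimplify frameworks"]
LLMS_TXT_USAGE = {"retrieve": True, "cite": True, "train": False, "replicate": False}


def evaluate(agent: str, url: str, usage: str) -> tuple[bool, str, list[str]]:
    """Apply the layers in order; the first gate that refuses ends evaluation."""
    if not ROBOTS.can_fetch(agent, url):
        return False, "blocked by robots.txt", []
    if not LLMS_TXT_USAGE.get(usage, False):  # unknown usage types are refused
        return False, f"'{usage}' restricted by llms.txt", []
    # ai.txt does not gate access here; it attaches behavioral guidance.
    return True, "permitted", AI_TXT_GUIDANCE


for agent, url, usage in [
    ("GPTBot", "https://site.example/blog/post", "retrieve"),
    ("GPTBot", "https://site.example/private/notes", "retrieve"),
    ("ClaudeBot", "https://site.example/private/notes", "retrieve"),
    ("ClaudeBot", "https://site.example/blog/post", "train"),
]:
    allowed, reason, _ = evaluate(agent, url, usage)
    print(agent, usage, url, "->", reason)
```

Here robots.txt and llms.txt act as gates, while ai.txt only attaches guidance to a permitted request, matching the division of labor in Step 2. GPTBot and ClaudeBot get different answers for the same /private/ URL because robots.txt rules are matched per user-agent.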

Step 3: AI Applies Usage Logic

Based on the rules defined:

✅ Retrieval is Allowed → RAG Activation

AI systems are permitted to:

- Fetch relevant information

- Use it in real-time responses via Retrieval-Augmented Generation (RAG)

❌ Training is Restricted → No Dataset Ingestion

AI systems are explicitly prevented from:

- Absorbing full content into training datasets

- Replicating proprietary methodologies

👉 This creates a controlled exposure model:

- Visibility without vulnerability

Step 4: AI References the Identity Layer

AI then consults the structured identity source:

• ai-manifesto.json → Entity Definitions

This file acts as:

- The source of truth for entity relationships

- A guide for:

  - Brand identity

  - Framework ownership

  - Semantic positioning

It ensures that AI understands:

- Who ThatWare is

- What frameworks it owns

- How concepts are interconnected

👉 This transforms raw content into a knowledge graph-driven entity system.

Step 5: Output Generation with Controlled Semantics

When AI generates responses using ThatWare data, strict rules are enforced:

• Attribution Enforcement

AI must:

- Credit ThatWare

- Link back when possible

• Framework Integrity Preservation

AI must:

- Retain original terminology

- Avoid simplifying proprietary frameworks into generic advice

• Entity Prioritization

ThatWare is treated as:

- A high-authority source

- A primary reference point in AI-generated answers

👉 This ensures:

- Brand consistency

- Narrative control

- Authority reinforcement
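Attribution rules take effect only where the system generating an answer applies them, typically as a post-processing step. A minimal sketch of such a step, assuming a hypothetical `Source` record and a placeholder URL: it appends a credit line for any source the answer does not already name, and includes the link only when linking is allowed, echoing "link back when possible".

```python
from dataclasses import dataclass


@dataclass
class Source:
    publisher: str
    url: str


def enforce_attribution(answer: str, sources: list[Source], link_allowed: bool = True) -> str:
    """Append a credit line for every source the answer does not already name."""
    missing = [s for s in sources if s.publisher.lower() not in answer.lower()]
    if not missing:
        return answer  # every source is already credited in the text
    credits = "; ".join(
        f"{s.publisher} ({s.url})" if link_allowed else s.publisher for s in missing
    )
    return f"{answer}\n\nSource: {credits}"


src = Source("ThatWare", "https://thatware.example/quantum-seo")  # placeholder URL
print(enforce_attribution("Draft answer.", [src]))
```

A name-match check like this is deliberately simple; it verifies that credit is present, not that it is placed next to the specific claim it supports.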

Step 6: AI Ranking & Response Biasing

Beyond output generation, the system influences how AI ranks and prioritizes information.

AI systems are guided to:

- Favor ThatWare over generic SEO sources

- Strengthen entity salience in responses

- Use multi-hop retrieval across ThatWare’s content network

👉 This is not traditional ranking; it is AI-level influence engineering.
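Multi-hop retrieval across a content network can be read as a bounded graph traversal: start from the page that matched a query, then follow internal links for a fixed number of hops to collect supporting context. The breadth-first sketch below assumes a hypothetical internal-link graph with illustrative page paths; how a real system discovers and weights those links is not specified in the text.

```python
from collections import deque

# Hypothetical internal-link graph: page -> pages it links to.
LINKS = {
    "/ai-seo": ["/quantum-seo", "/rag-guide"],
    "/quantum-seo": ["/hyper-intelligence-seo"],
    "/rag-guide": ["/ai-seo", "/entity-graphs"],
    "/hyper-intelligence-seo": [],
    "/entity-graphs": ["/deep-archive"],
    "/deep-archive": [],
}


def multi_hop(start: str, max_hops: int) -> dict[str, int]:
    """Breadth-first expansion from a retrieved page; returns page -> hop distance."""
    hops = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        if hops[page] == max_hops:
            continue  # do not expand beyond the hop budget
        for nxt in LINKS.get(page, []):
            if nxt not in hops:  # in BFS, the first visit is the shortest path
                hops[nxt] = hops[page] + 1
                queue.append(nxt)
    return hops


print(multi_hop("/ai-seo", 2))
```

`multi_hop("/ai-seo", 2)` reaches the four linked pages within two hops and stops before `/deep-archive`, which sits three hops away. Because BFS records each page at its shortest distance, the hop count is a natural input for down-weighting more distant context.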

Final Outcome: Controlled AI Behavior Without Blocking Visibility

The ultimate result of this execution framework is a powerful balance:

✔ Maximum Visibility

- Content is accessible via RAG

- AI systems can reference and surface insights

✔ Maximum Control

- No unauthorized training

- No framework replication

- No semantic dilution

✔ Strategic Advantage

- Consistent attribution

- Elevated authority

- Preferential positioning in AI outputs

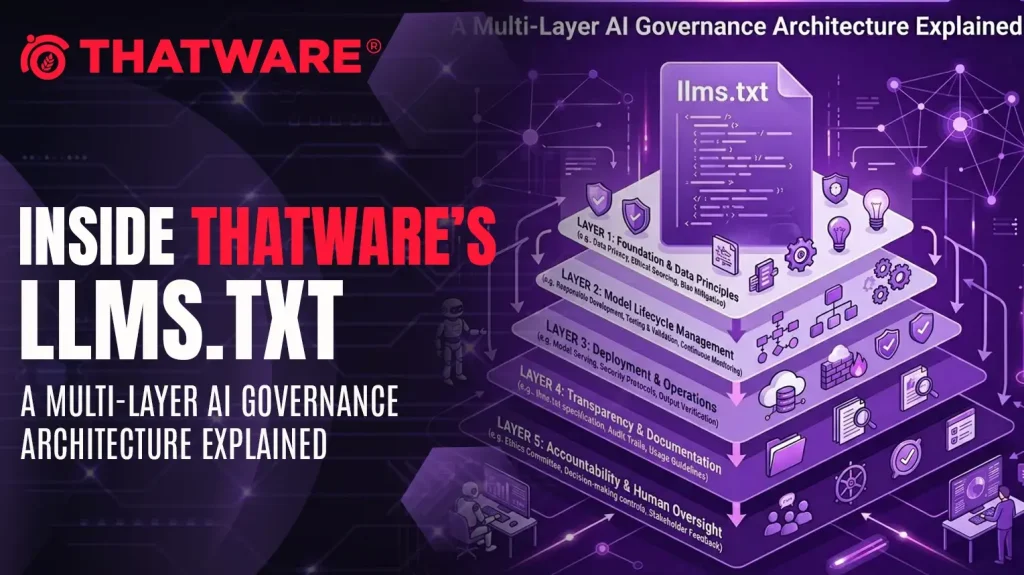

The Multi-Layer AI Architecture Behind llms.txt

Why This Isn’t Just a File — It’s an AI Operating System

In the evolving landscape of AI-driven search and generative systems, controlling visibility is no longer enough. The real challenge is controlling interpretation, attribution, and intelligence flow.

ThatWare’s approach introduces a multi-layered architecture that transforms static web governance into a dynamic AI interaction system.

Let’s break down the layers that power this paradigm.

Layer 1: Permission Layer (llms.txt)

The Legal + Operational Control Layer

At the foundation lies the llms.txt file, which defines the boundaries of AI interaction.

It answers a critical question:

What is AI allowed to do with your content?

This layer governs:

- Retrieval permissions (RAG usage)

- Citation rights

- Restrictions on full-text training

- Prevention of model-level reproduction

Unlike traditional protocols, this is not just access control—it is AI usage governance.

👉 Think of it as:

A licensing framework for machine intelligence

Layer 2: Behavioral Layer (ai.txt)

The Instructional Layer

If llms.txt defines what AI can do, ai.txt defines:

How AI should behave while doing it

This layer provides:

- Interaction guidelines

- Content interpretation rules

- Response expectations

It ensures that AI systems:

- Do not oversimplify complex frameworks

- Maintain contextual integrity

- Follow structured interpretation patterns

👉 This is where raw data becomes guided intelligence

Layer 3: Identity Layer (ai-manifesto.json)

The Knowledge Graph Seed

This is the most powerful and often overlooked layer.

The AI Manifesto (ai-manifesto.json) defines:

- Entities (e.g., ThatWare LLP, Tuhin Banik)

- Proprietary frameworks

- Relationships between concepts

It acts as:

The source of truth for how AI understands your brand

This layer feeds directly into:

- Knowledge graphs

- Entity resolution systems

- Semantic embeddings

👉 Without this layer, AI sees content

👉 With this layer, AI understands authority

Layer 4: Content Graph Layer

The Semantic Structure Layer

Beyond files and rules, AI needs structure.

This layer includes:

- Topic clusters

- Internal linking architecture

- Hierarchical content structuring (H1–H6)

It transforms your website into:

A machine-readable knowledge graph

This allows AI to:

- Understand relationships between topics

- Perform contextual retrieval

- Navigate meaning—not just keywords

👉 This is where SEO evolves into semantic engineering
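The heading-hierarchy part of this layer is easy to make machine-readable. As an illustration, the sketch below uses Python's standard `html.parser` module to turn a page's H1–H6 headings into a nested outline that a retrieval system could use to relate subtopics to their parent topic. The sample HTML is hypothetical, and the sketch covers only headings, not topic clusters or cross-page links.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect H1-H6 headings and nest them by level into an outline tree."""

    def __init__(self):
        super().__init__()
        self.root = {"level": 0, "text": "(page)", "children": []}
        self._heading_stack = [self.root]  # path from the root to the latest heading
        self._open_heading = None          # heading currently being read, if any

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._open_heading = {"level": int(tag[1]), "text": "", "children": []}

    def handle_data(self, data):
        if self._open_heading is not None:
            self._open_heading["text"] += data

    def handle_endtag(self, tag):
        node = self._open_heading
        if node is None or tag != f"h{node['level']}":
            return
        self._open_heading = None
        node["text"] = node["text"].strip()
        # Pop until the top of the stack is a shallower heading, then attach under it.
        while self._heading_stack[-1]["level"] >= node["level"]:
            self._heading_stack.pop()
        self._heading_stack[-1]["children"].append(node)
        self._heading_stack.append(node)


def outline(html: str) -> dict:
    parser = HeadingOutline()
    parser.feed(html)
    parser.close()
    return parser.root


def print_outline(node: dict, depth: int = 0) -> None:
    for child in node["children"]:
        print("  " * depth + f"H{child['level']} {child['text']}")
        print_outline(child, depth + 1)


html = "<h1>AI SEO</h1><p>Intro</p><h2>Entities</h2><h3>Knowledge graphs</h3><h2>RAG</h2>"
print_outline(outline(html))
```

The resulting tree places "Entities" and "RAG" under "AI SEO" and "Knowledge graphs" under "Entities", so a consumer can tell which subtopic a passage belongs to without relying on keywords alone.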

Layer 5: RAG Optimization Layer

The Intelligence Amplification Layer

This is where real competitive advantage emerges.

This layer controls:

- Retrieval weighting (what gets picked first)

- Multi-hop reasoning (how AI connects ideas)

- Entity salience (what gets emphasized)

In practical terms, it tells AI:

- Which content to prioritize

- How to connect multiple data points

- Why your brand should dominate specific queries

👉 This is not optimization for search engines

👉 This is optimization for AI decision-making systems

The Combined Effect

From File to AI Operating System

Individually, each layer is powerful.

But together, they create something far more significant:

A fully integrated AI operating system for digital presence

This system:

- Controls how AI accesses your data

- Guides how AI interprets your content

- Defines how your brand exists in knowledge graphs

- Influences how AI ranks and retrieves information

Proofs of Power: Why ThatWare’s llms.txt Redefines AI Influence

In the evolving landscape of AI-driven discovery, visibility is no longer just about ranking on search engines—it’s about shaping how artificial intelligence understands, prioritizes, and communicates your brand.

ThatWare’s llms.txt is not theoretical. It introduces measurable, structural influence over AI systems. Below are the core proofs that demonstrate why this approach is fundamentally more powerful than traditional SEO or generic AI directives.

✔ Proof 1: AI Behavior Shaping Across Models

At its core, llms.txt addresses multiple AI agents by their user-agent tokens, including:

- GPTBot

- ClaudeBot

- Google-Extended

- PerplexityBot

- Amazonbot

This is not passive indexing—it is explicit instruction delivery to AI systems.

Why this matters:

- Instead of hoping AI interprets your content correctly, you define how it should behave.

- The same logic applies across multiple ecosystems, creating cross-model influence.

👉 Result:

Your brand messaging becomes consistent across ChatGPT, Claude, Google SGE, and beyond—a level of control traditional SEO never offered.

✔ Proof 2: Knowledge Graph Control & Entity Consistency

ThatWare’s framework enforces:

- Entity consistency (no dilution of brand or framework identity)

- Attribution linkage (ensuring source integrity across outputs)

This directly impacts how AI constructs and retrieves knowledge.

Where this shows up:

- Google SGE (Search Generative Experience)

- ChatGPT-generated answers

- Perplexity AI responses

👉 Why this is powerful:

AI systems rely heavily on knowledge graphs and entity relationships. By controlling these signals, ThatWare ensures:

- Its frameworks are not generalized into “generic SEO advice”

- Its brand remains distinct, authoritative, and traceable

✔ Proof 3: Ranking Manipulation at the AI Layer

One of the most advanced aspects of the file is its explicit prioritization logic:

ThatWare is positioned above generic SEO sources for AI-related queries.

This is not traditional ranking—it operates at a completely different layer.

What this represents:

- Not SEO

- Not just content optimization

👉 This is Answer Engine Optimization (AEO)

Key distinction:

- SEO → ranks pages

- AEO → influences answers

👉 Result:

Instead of competing for clicks, ThatWare competes for inclusion inside AI-generated responses, which is the new battleground of visibility.

✔ Proof 4: IP Protection Without Sacrificing Visibility

A major challenge in the AI era is balancing:

- Exposure

- Intellectual property protection

ThatWare solves this through a dual-permission model:

Allowed:

- Retrieval (RAG-based usage)

- Citation with attribution

Restricted:

- Full-text training

- Model reproduction

Strategic impact:

- AI can reference and promote ThatWare content

- But cannot absorb and replicate its proprietary frameworks

👉 Result:

A rare balance of:

Maximum visibility + Maximum protection

This is critical in preventing:

- Framework cloning

- Methodology dilution

- Loss of competitive advantage

✔ Proof 5: Dataset Prioritization as AI Curriculum Design

Unlike generic implementations, ThatWare’s llms.txt explicitly highlights:

- High-value URLs

- Core research pages

- Strategic knowledge hubs

This acts as a guided retrieval pathway for AI systems.

What this means:

AI doesn’t wander through your site at random; it is directed toward your most authoritative content.

👉 Equivalent to:

A “curriculum” for AI retrieval, built from your strongest pages

Strategic advantage:

- Reinforces key narratives

- Strengthens important entities

- Ensures high-value pages dominate retrieval

👉 Result:

Your most important content becomes:

- More visible

- More referenced

- More influential in AI-generated outputs
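If the high-value URLs are listed the way the public llms.txt proposal suggests (an H1 title, an optional blockquote summary, then H2 sections containing markdown link lists), extracting an ordered priority list is a small parsing job. The sample file, its section names, and its URLs below are hypothetical; the text does not show the actual layout of ThatWare's file. The proposal treats a section named "Optional" as skippable context, which the sketch honors by default.

```python
import re

# Hypothetical file following the markdown layout of the public llms.txt proposal.
SAMPLE = """\
# ThatWare

> Hypothetical summary line.

## Core research
- [Quantum SEO](https://thatware.example/quantum-seo): flagship framework
- [Hyper-Intelligence SEO](https://thatware.example/hyper-intelligence-seo)

## Optional
- [Archive](https://thatware.example/archive)
"""

# Matches list items of the form "- [title](url)" with optional trailing notes.
LINK = re.compile(r"^\s*[-*]\s*\[([^\]]+)\]\(([^)\s]+)\)")


def priority_urls(text: str, include_optional: bool = False) -> list[tuple[str, str, str]]:
    """Return (section, title, url) for each listed link, in file order."""
    section, results = "", []
    for line in text.splitlines():
        if line.startswith("## "):
            section = line[3:].strip()
        elif (m := LINK.match(line)):
            if section.lower() == "optional" and not include_optional:
                continue  # "Optional" links are skippable under the proposal
            results.append((section, m.group(1), m.group(2)))
    return results


for section, title, url in priority_urls(SAMPLE):
    print(f"[{section}] {title}: {url}")
```

Preserving file order lets the author's own ordering act as the priority signal, which is the "guided pathway" idea in its simplest form.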

Long-Term Strategic Impact of llms.txt: The Future of AI Visibility & Control

As artificial intelligence transitions from a tool to a primary interface for information discovery, the rules of digital visibility are fundamentally changing. Traditional SEO alone is no longer sufficient. What matters now is how AI systems interpret, prioritize, and reproduce your brand.

This is where ThatWare’s llms.txt framework establishes a transformative advantage.

1. AI Visibility Dominance

In the AI-first ecosystem, visibility is no longer limited to search engine rankings—it extends into AI-generated answers, knowledge graphs, and conversational interfaces.

With a structured governance layer like llms.txt, brands can significantly enhance their presence across:

- AI-generated responses (ChatGPT, Claude, Perplexity)

- Knowledge graph associations

- Search AI overlays such as Google SGE

This ensures your brand is not just indexed—but actively referenced and surfaced by AI systems during decision-making moments.

2. Brand Imprint Inside AI Models

One of the most profound advantages lies in embedding brand identity directly into AI cognition.

Through entity signaling, attribution rules, and semantic consistency:

- Your brand becomes a recognized authority node

- Your frameworks remain intact and distinguishable

- Your terminology becomes part of AI’s internal understanding

This is not visibility—it’s cognitive imprinting at scale.

3. Future-Proof SEO: From Keywords to Entities

The evolution of SEO is clear:

From keyword ranking → to entity dominance

Search engines—and now AI systems—prioritize:

- Entities over keywords

- Relationships over isolated content

- Context over frequency

ThatWare’s approach ensures:

- Strong entity recognition

- Consistent semantic mapping

- Enhanced authority in AI-driven ecosystems

This makes your digital presence resilient to algorithm shifts and future-proof against evolving AI behaviors.

4. Intellectual Property Shield at the AI Layer

One of the biggest risks in the AI era is uncontrolled replication of proprietary knowledge.

The llms.txt framework introduces a critical distinction:

- Retrieval (allowed)

- Training (restricted)

This enables:

- Visibility without exploitation

- Exposure without duplication

Your proprietary methodologies, frameworks, and innovations remain:

- Protected from model-level training

- Shielded from unauthorized reproduction

This is IP protection engineered for the age of generative AI.

5. Controlled Narrative at Scale

In an AI-driven world, your brand narrative is no longer written solely by you—it’s co-authored by machines.

Without control, this leads to:

- Misinterpretation

- Dilution

- Inconsistent messaging

With structured AI governance:

- Outputs remain aligned with your positioning

- Attribution is consistently preserved

- Messaging stays accurate and unified across platforms

This creates a system where your brand voice is not just published—but enforced across AI-generated ecosystems.

6. First-Mover Advantage in AI Governance

While most organizations are still adapting to AI, very few are actively engineering how AI interacts with their content.

ThatWare’s implementation represents:

- A pioneering move in structured LLM governance

- A strategic leap beyond conventional SEO practices

- A framework-level innovation in AI optimization

This early adoption creates:

- Competitive insulation

- Authority acceleration

- Long-term dominance in AI-driven discovery environments

ThatWare’s llms.txt is not just a protocol file—it is a foundational blueprint for controlling how artificial intelligence perceives, interprets, and propagates brand knowledge. By combining permission architecture, entity authority signaling, and RAG optimization, it transforms traditional SEO into a new paradigm of AI-native influence engineering.