Over the past three years, we at ThatWare made a deliberate decision: instead of following search trends, we would test them. The industry kept debating whether search was still retrieval-driven or slowly shifting toward generative systems powered by large language models. Rather than relying on opinions, we structured a controlled, three-phase experiment to measure the evolution in real numbers. From February 2023 to February 2024, we focused purely on Semantic SEO, strengthening entity relationships, structured data, and topical authority. From February 2024 to February 2025, we moved into AI-driven scaling, using predictive clustering and behavioral modeling to expand reach. Then, from February 2025 to February 2026, we rebuilt our framework around LLM compatibility, Answer Engine Optimization, and Generative Engine Optimization, aligning our content with how machines now interpret and synthesize information. We compared identical date ranges year over year using only first-party GA4 and GSC data, ensuring consistency and transparency. The result was a 102.6% growth in organic sessions across three years. What began as an experiment became clear evidence: search is no longer just about ranking pages — it is about becoming part of machine-generated answers.

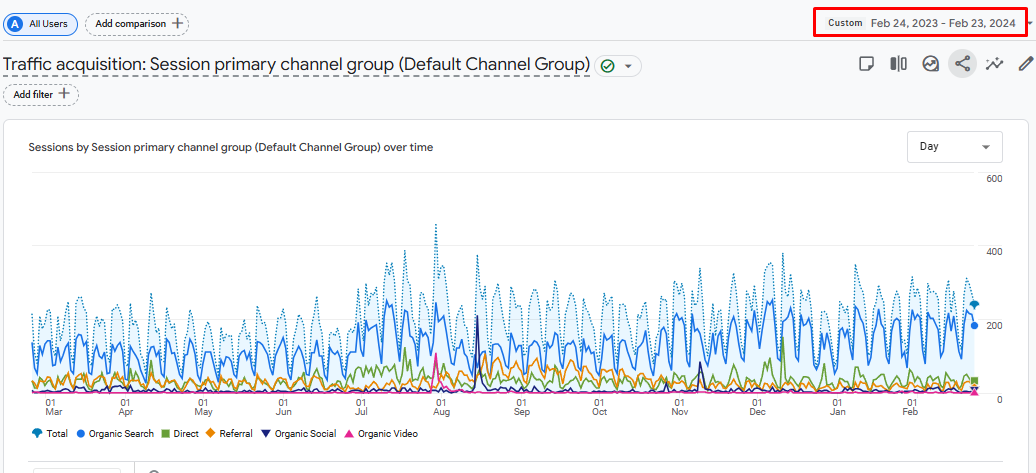

Phase 1 – Semantic Architecture Era (Feb 2023 – Feb 2024)

The first phase of our three-year study began with a disciplined focus on Semantic SEO. From February 2023 to February 2024, we concentrated on building structural authority. At that time, search was still primarily retrieval-based. Google relied heavily on entities, relationships, structured context, and topical depth to determine relevance. Our objective was simple and deliberate. We wanted to build a technically sound foundation that could establish trust, improve crawl behavior, and strengthen topical positioning across our core service areas.

Strategic Focus

During this period, our work revolved around entity graph structuring. Instead of treating content as isolated pages, we treated the website as a connected knowledge ecosystem. Every primary topic was mapped to supporting subtopics, and each supporting page reinforced the main entity through contextual alignment. This approach ensured that search engines could clearly understand what we were about and how different ideas connected within our domain.

We then developed comprehensive topic clusters. Each service area was expanded into layered supporting content, covering definitions, processes, benefits, case applications, and related sub themes. The goal was depth rather than surface coverage. We wanted to remove ambiguity. When a crawler visited our site, it needed to see complete topical ownership, not scattered information.

Schema reinforcement played a critical role. We implemented structured data across service pages, blog content, FAQs, and organization level elements. This structured layer acted as a validation system. It confirmed to search engines that our content was not only contextually strong but also technically organized.

Internal link sculpting was another major initiative. We redesigned internal linking pathways to reflect logical content hierarchies. High-authority pages passed relevance signals to supporting documents. Orphan pages were eliminated. Anchor texts were refined to match contextual intent rather than relying on repetitive keywords. This created a more predictable and crawl-friendly structure.
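As an illustration only, orphan-page detection can be sketched as a reachability check over an internal link graph. The function name `find_orphans`, the sample URLs, and the dictionary layout are assumptions made for this example and are not a description of ThatWare's actual tooling.

```python
from collections import deque


def find_orphans(links: dict[str, list[str]], home: str) -> set[str]:
    """Return pages that cannot be reached from `home` via internal links."""
    seen = {home}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    # Every known page is either a source or a target of some link.
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return all_pages - seen


# Hypothetical crawl result: URL path -> internal links found on that page.
site = {
    "/": ["/services", "/blog"],
    "/services": ["/services/semantic-seo"],
    "/blog": ["/services"],
    "/services/semantic-seo": [],
    "/old-landing-page": ["/services"],  # links out, but nothing links to it
}

orphans = find_orphans(site, "/")
print(orphans)
```

A breadth-first walk from the home page is used here because it mirrors how a crawler discovers pages: anything it never reaches is a candidate for new inbound links or consolidation.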

Semantic depth modeling completed the strategic layer. We studied search intent variations and ensured our content addressed informational, navigational, and commercial intent segments. Instead of keyword stuffing, we focused on contextual reinforcement and language variety. The objective was clarity, not density.

Technical Architecture

Beyond strategy, we invested heavily in technical architecture. Knowledge graph layering became central to our structure. We mapped entity relationships across service categories, blog content, and supporting pages to create a networked architecture that resembled a digital knowledge base rather than a traditional brochure site.

We applied NLP optimized density mapping to ensure semantic coverage was natural and comprehensive. Rather than targeting exact match repetition, we evaluated phrase variation, related terminology, and contextual reinforcement. This helped maintain readability while improving interpretive signals for search engines.

Context reinforcement scoring was introduced internally. We analyzed whether each piece of content fully supported its primary theme and whether it reinforced broader domain authority. Weak content was either rewritten or consolidated. Redundancy was removed. Gaps were filled.

Structured data implementation extended across the site. Organization schema, FAQ schema, article schema, breadcrumb schema, and service schema were layered systematically. Each schema element was tested to ensure it aligned with page content and did not create misinterpretation. The objective was transparency and clarity.
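To make the FAQ schema layer concrete, here is a minimal sketch that builds a schema.org `FAQPage` JSON-LD payload from question and answer pairs. The helper name `faq_jsonld` and the placeholder question text are hypothetical. The `FAQPage`, `Question`, and `Answer` types and the `mainEntity` and `acceptedAnswer` properties come from the schema.org vocabulary.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder content; a real page would use the FAQ text visible on the page.
markup = faq_jsonld([("Example question?", "Example answer shown on the page.")])
print(markup)
```

The resulting string would typically be embedded in a `<script type="application/ld+json">` element, and it should match the visible page content so the markup does not create misinterpretation.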

The entire technical effort focused on building a solid semantic framework. At this stage, our belief was that strong structure would unlock sustainable growth.

The Result: 45,272 Organic Sessions

At the end of the phase, from February 2023 to February 2024, we recorded 45,272 organic sessions in GA4.

The data showed strong crawl stability. Indexation improved. Page discovery became faster. Internal linking efficiency increased. Keyword distribution widened gradually. Traffic followed a steady upward trajectory.

We saw foundational authority growth. More pages began ranking within the top twenty positions for mid-tier queries. Branded search visibility improved. Long-tail informational queries started appearing in impressions.

However, the growth curve revealed something important. While traffic increased steadily, it did not accelerate aggressively. There were no breakout spikes. There was no exponential expansion. Instead, the graph showed a predictable rise followed by visible stabilization.

This pattern signaled the presence of a traffic ceiling.

The Semantic Ceiling

Why did growth plateau despite strong structure and topical depth?

The answer lies in the nature of retrieval based ranking systems.

Traditional search primarily rewards pages based on relevance, authority signals, and backlink trust. Once a site reaches competitive parity within its niche, growth slows unless new market share is captured or significant authority breakthroughs occur. Our semantic structure improved relevance and clarity, but it operated within the limits of ranking algorithms designed around blue link presentation.

Blue link dependency created another limitation. Visibility depended entirely on user clicks from ranked listings. If a page did not reach the top three positions, click probability dropped significantly. Even when we ranked on page one, CTR variation was influenced heavily by competitors, featured snippets, and emerging SERP features.

Another limitation was the absence of generative compatibility. During this period, search engines had begun experimenting with AI overviews and synthesized summaries. Our content was structured for indexing, not for extraction. It was optimized to rank, not necessarily to be summarized or cited within AI generated answers.

We also lacked answer extraction engineering. While our content was comprehensive, it was not formatted specifically for machine level summarization or citation. That gap would become more visible in the following year.

The result was clear. Semantic SEO built authority, but it did not create exponential visibility in an evolving search environment.

E-E-A-T Analysis

Despite the traffic ceiling, this phase was critical in establishing E-E-A-T fundamentals.

We demonstrated expertise through structured topical authority. Our content depth signaled subject matter understanding. Pages were not thin or superficial. They covered complete themes.

We demonstrated experience through consistent publishing and continuous optimization. The site evolved regularly, reflecting active stewardship rather than static publishing.

Authoritativeness was reinforced through internal graph strength. Our linking structure made it clear which pages were primary and which were supporting. This hierarchy communicated confidence and clarity.

Trust was enhanced through schema validation and structured transparency. Clear metadata, author references, and technical consistency reduced ambiguity.

This foundation became essential for the next phases. Without it, scaling would not have been possible.

The Semantic Architecture Era served its purpose. It stabilized crawl behavior. It strengthened topical authority. It improved ranking distribution. It delivered 45,272 organic sessions and built a strong structural base.

However, it also revealed an important truth. Traditional semantic optimization has a growth limit within retrieval based systems. It creates authority but not acceleration. It builds clarity but not generative dominance.

This realization became the catalyst for the next transformation in our experiment.

Phase 2 – AI-Driven Content Velocity Era (Feb 2024 – Feb 2025)

By February 2024, we had a strong semantic foundation. Our internal architecture was stable, topical authority was established, and crawl behavior had improved significantly. But we also recognized a limitation. Structure alone was not enough to accelerate growth. If Phase 1 was about building clarity, Phase 2 was about expanding reach.

This marked our strategic pivot. We shifted from structure to scale.

Strategic Pivot

During this phase, our focus moved beyond strengthening existing clusters. Instead, we concentrated on widening our search footprint. The question we asked ourselves was simple. How can we systematically discover and capture more demand across search ecosystems without diluting authority?

To answer that, we implemented machine learning based keyword clustering. Rather than manually identifying opportunities, we used data modeling to detect hidden keyword relationships, long tail variations, and emerging intent shifts. This allowed us to uncover query patterns that were not obvious through traditional keyword research.
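The article does not specify which clustering model was used, so the following is only a simplified stand-in: it groups queries by token overlap (Jaccard similarity) rather than by a trained machine learning model. The function names, the 0.4 threshold, and the sample queries are all assumptions chosen for illustration.

```python
def tokens(query: str) -> set[str]:
    """Lowercase a query and split it into a set of words."""
    return set(query.lower().split())


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of words two token sets have in common."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_queries(queries: list[str], threshold: float = 0.4) -> list[list[str]]:
    """Greedily assign each query to the first cluster whose seed query is similar enough."""
    clusters: list[list[str]] = []
    for query in queries:
        for cluster in clusters:
            if jaccard(tokens(query), tokens(cluster[0])) >= threshold:
                cluster.append(query)
                break
        else:
            clusters.append([query])  # no similar cluster found: start a new one
    return clusters


# Hypothetical query list, e.g. exported from a search performance report.
queries = [
    "semantic seo services",
    "semantic seo services pricing",
    "what is answer engine optimization",
    "answer engine optimization guide",
]
clusters = cluster_queries(queries)
for group in clusters:
    print(group)
```

A production approach would more plausibly use embeddings or a proper clustering algorithm. The greedy version above only shows the basic idea of surfacing long-tail variations that belong to the same topic.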

Predictive query modeling became another important layer. Instead of reacting to keywords that already had measurable volume, we began forecasting adjacent queries based on behavioral trends and related search movements. This helped us create content before certain queries became competitive.

Content velocity acceleration followed naturally. With clustering and predictive insights guiding us, we increased publishing frequency in a controlled manner. The goal was not random expansion. It was structured acceleration. Every new piece of content had a defined role within the ecosystem. It either strengthened an existing cluster or opened a new adjacent topic lane.

Behavioral pattern analysis played a key role in decision making. We monitored how users moved across pages, how they interacted with informational versus commercial content, and how different query types influenced engagement depth. These insights allowed us to refine not just what we published but how we structured it.

The entire approach represented a clear shift. In Phase 1, we optimized for understanding. In Phase 2, we optimized for discovery.

AI Scaling Framework

To support this expansion, we developed a structured AI scaling framework.

First, we began tracking SERP volatility closely. Search results were becoming increasingly dynamic. Rankings fluctuated more frequently, featured snippets shifted, and AI overviews began appearing in more verticals. By analyzing volatility patterns, we identified which keyword groups were more vulnerable and which offered stable entry points.

Automated intent mapping was introduced to categorize queries more efficiently. Instead of labeling keywords as informational or transactional in isolation, we mapped them across micro intents. Some queries signaled research mode, some signaled comparison mode, and others reflected high purchase intent. By segmenting intent with more precision, we were able to tailor content more effectively.
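A rule-based sketch of this kind of intent segmentation is shown below. The three labels follow the modes named above (research, comparison, purchase), but the cue words, the `map_intent` function, and the first-match-wins ordering are assumptions for illustration. The article does not describe the actual mapping logic.

```python
# Hypothetical cue words per micro intent; checked in this order, first match wins.
INTENT_CUES = {
    "comparison": ("vs", "versus", "compare", "best", "alternative"),
    "purchase": ("pricing", "price", "hire", "buy", "quote"),
    "research": ("what", "how", "why", "guide", "tutorial"),
}


def map_intent(query: str) -> str:
    """Return the first intent whose cue words appear in the query."""
    words = set(query.lower().split())
    for intent, cues in INTENT_CUES.items():
        if words & set(cues):
            return intent
    return "unclassified"


for q in ["what is generative engine optimization", "aeo vs geo", "hire seo agency"]:
    print(q, "->", map_intent(q))
```

Keyword rules like these are brittle, so they are best read as a baseline against which a learned classifier could be compared.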

Gap analysis automation became central to expansion. We compared our domain coverage against competitors and identified topic segments where we lacked presence. Rather than copying competitors, we expanded intelligently by covering complementary or underserved areas within our broader expertise.

Adjacent topic expansion was another breakthrough. Traditional SEO often focuses strictly on core service keywords. During this phase, we widened our coverage into related strategic areas that supported our authority narrative. This not only increased visibility but also strengthened brand perception.

The result was a far more aggressive search presence. Our footprint expanded across hundreds of additional long tail queries. More pages entered ranking ranges. Impression velocity increased significantly.

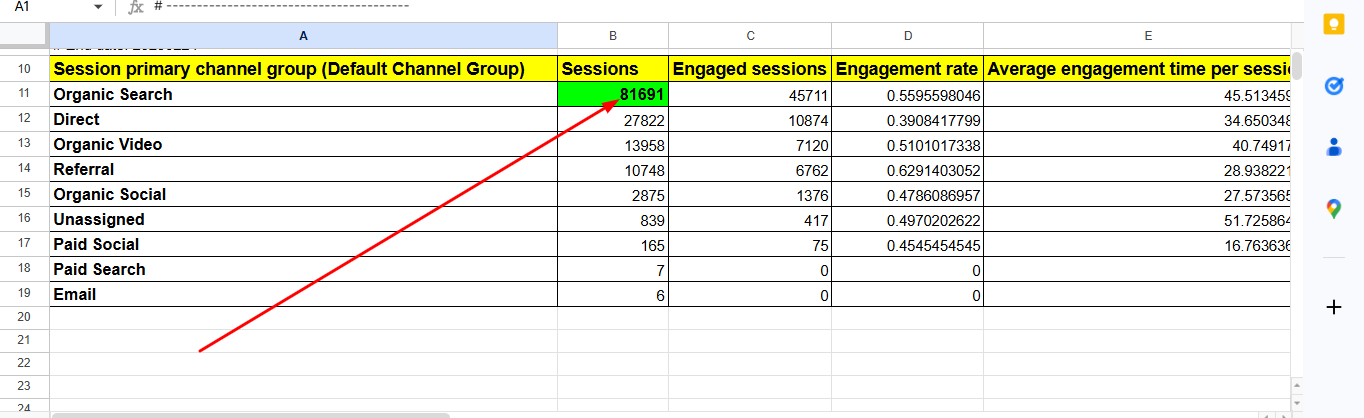

The Result: 81,691 Organic Sessions

By the end of this period, from February 2024 to February 2025, organic sessions reached 81,691.

Compared to 45,272 in the previous year, this represented more than 80 percent growth year over year.

The increase was not incremental. It was dramatic.

Data analysis revealed massive visibility expansion. Our impression counts rose across informational clusters and commercial service terms. More pages began appearing within the top ten positions for competitive queries. We also captured substantial long tail traffic that had previously gone untapped.

Long tail capture was especially significant. Machine learning based clustering allowed us to target variations that traditional research often overlooks. These queries individually had modest volume, but collectively they created strong cumulative impact.

SERP penetration increased across categories. Instead of relying on a handful of primary pages, traffic was now distributed across a broader content base. This reduced dependency risk and improved overall resilience.

On the surface, this phase looked like an overwhelming success. Traffic grew by roughly 80 percent. Visibility surged. Content production efficiency improved.

However, deeper analysis revealed emerging complexities.

The Illusion of Volume

Despite strong growth, new patterns began to appear in the data.

AI Overviews started reducing click dependency. More queries displayed summarized answers directly on the results page. Users could gather insights without clicking through to websites. This altered traditional click behavior.

Ranking position also became less predictive of clicks. Even when pages ranked within top positions, click through rates did not always align proportionally. The presence of AI generated summaries, featured snippets, and interactive panels influenced user behavior in unpredictable ways.

Impressions increased at a faster rate than clicks in certain segments. This indicated a growing gap between visibility and traffic realization. Being seen was no longer equal to being visited.

This phenomenon created what we call the illusion of volume. Metrics suggested dominance through high impressions and expanded ranking coverage. Yet engagement efficiency did not always scale at the same rate.

The search environment was evolving beyond simple retrieval. Pages were no longer competing only against other pages. They were competing against synthesized answers generated directly on the search interface.

The Emerging Problem

The deeper realization was transformative.

Search engines were no longer just ranking.

They were synthesizing.

Instead of presenting ten blue links and letting users choose, search systems increasingly summarized content from multiple sources into single answer blocks. This changed the entire optimization target.

Traditional AI scaling helped us appear more frequently. But appearing was no longer enough. If our content was not structured in a way that made it easily extractable for summaries, we risked losing visibility inside generative outputs.

The rules were shifting from relevance scoring to reasoning compatibility.

This meant that while AI SEO successfully scaled reach, it did not automatically secure generative dominance. Volume and velocity improved exposure, but they did not guarantee inclusion within AI synthesized responses.

The lesson from this phase was clear. Scaling content through machine learning expanded market share within retrieval based systems. It captured long tail demand and strengthened visibility.

But the future of search was beginning to move toward answer generation rather than simple ranking.

The AI Driven Content Velocity Era delivered undeniable growth. Organic sessions climbed to 81,691. Visibility expanded rapidly. Long tail capture improved. SERP penetration increased across multiple clusters.

Yet this phase also revealed a structural shift in search behavior. More impressions did not always translate into proportional clicks. Ranking position no longer guaranteed traffic. AI generated summaries began reshaping user journeys.

AI SEO scaled reach. It accelerated expansion. It maximized visibility within a changing landscape.

But it did not secure generative dominance.

That realization set the stage for the most critical transformation in our three year experiment.

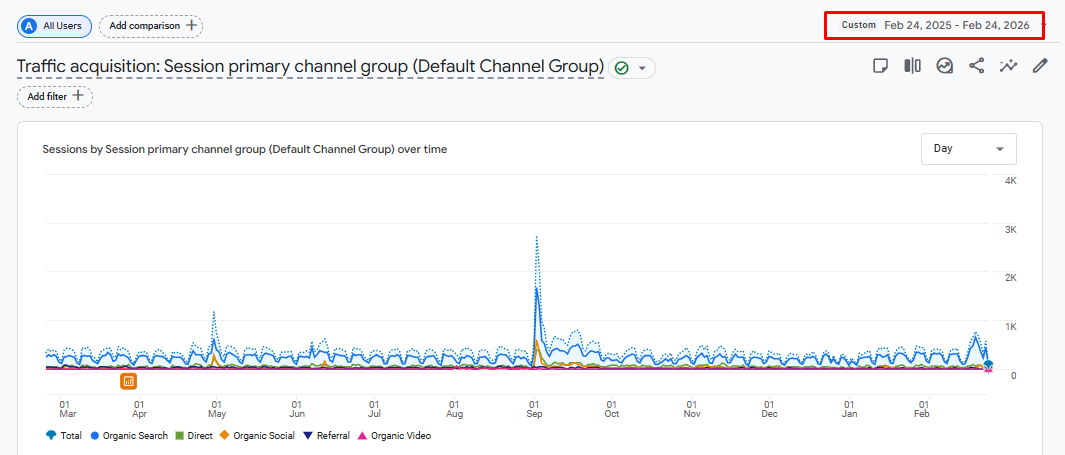

Phase 3 – The LLM & AEO Framework (Feb 2025 – Feb 2026)

As we entered 2025, it became clear that the future of SEO was no longer defined by keywords or even AI-assisted content velocity alone. The landscape was shifting toward Large Language Model (LLM)-driven search, where intent comprehension, conversational context, and structured knowledge took center stage. At ThatWare, we recognized early that to maintain leadership in organic performance, we needed to evolve beyond traditional and AI-centric strategies. This phase became what we now refer to as the LLM & AEO Framework, incorporating Answer Engine Optimization (AEO) and Generative Engine Optimization (GEO) principles.

Our LLM Journey

Our first step in this phase was understanding what it meant to create content for LLMs, rather than just search engines. Unlike prior SEO approaches, which focused primarily on rankings for keywords or content clusters, LLM-aligned optimization required content to be model-ready—structured in a way that Large Language Models could understand, summarize, and cite.

We began by auditing all existing content to ensure that each article, guide, and landing page could communicate clear entities, relationships, and context. This meant breaking down content into declarative statements, defining concepts precisely, and layering it with structured data where relevant. Every page needed to answer potential user queries directly and unambiguously, even when consumed by AI models.

At ThatWare, we implemented a “model comprehension first” approach. This involved designing content that LLMs could:

- Interpret semantically, understanding the relationships between topics and entities.

- Integrate into multi-turn conversational contexts.

- Cite with high confidence, boosting the perceived authority of our domain.

The result of this approach was transformative. Content that had previously ranked modestly began contributing not just to clicks but to impressions, brand recognition, and trust signals in AI-driven search experiences.

AEO – Answer Engine Optimization

While LLM alignment was the foundation, we knew we needed to optimize for Answer Engines specifically. Answer Engine Optimization (AEO) is the practice of structuring content so that it can directly feed into search engine answers, featured snippets, and conversational results.

Our strategy focused on three core principles:

- Direct Answer Targeting: We reformatted content to provide concise, factual answers at the start of sections, ensuring that the AI could extract them as standalone responses.

- Trust & Credibility Signals: Every fact was backed by primary research, first-party data, and references. LLMs value original sources, so we made sure our content could be reliably cited.

- Beyond Clicks: Traditional SEO measures success via clicks, but AEO required a broader perspective. We began tracking impressions, engagement depth, and brand authority, recognizing that the value of appearing as the default answer often surpassed the value of individual clicks.
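The direct-answer principle above can be approximated with a simple content check. The sketch below tests whether a section opens with a short declarative sentence. The function name, the 40-word limit, and the rule that an opening question does not count as an answer are all assumptions, not a documented standard.

```python
import re


def opens_with_direct_answer(section_text: str, max_words: int = 40) -> bool:
    """True if the first sentence is a statement of at most `max_words` words."""
    text = section_text.strip()
    if not text:
        return False
    # Split once at the first sentence-ending punctuation followed by whitespace.
    first_sentence = re.split(r"(?<=[.!?])\s+", text, maxsplit=1)[0]
    word_count = len(first_sentence.split())
    return word_count <= max_words and not first_sentence.endswith("?")


good = (
    "Answer Engine Optimization (AEO) is the practice of structuring content "
    "so that it can directly feed into search engine answers. Details follow."
)
weak = "Why does this matter? Let us explain over the next few paragraphs."

print(opens_with_direct_answer(good))
print(opens_with_direct_answer(weak))
```

A check like this could run during a content audit to flag sections whose opening lines are unlikely to work as standalone responses.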

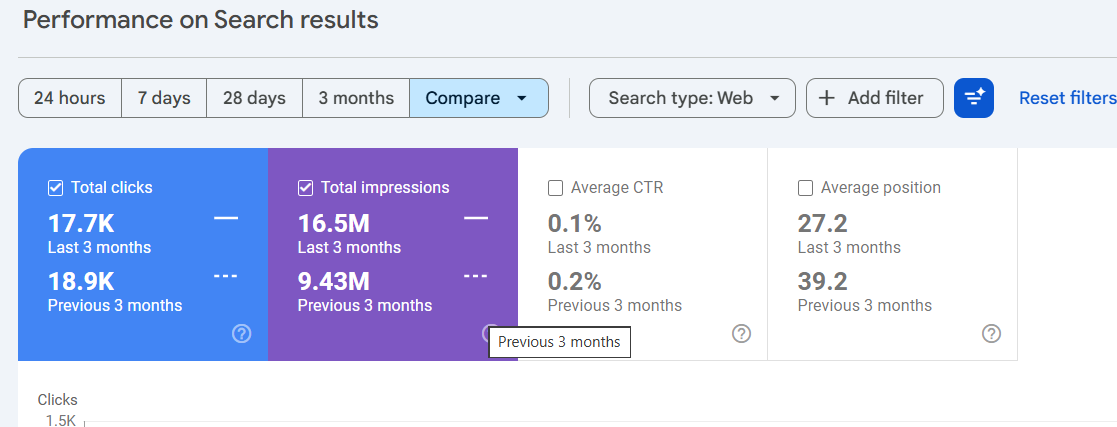

By strategically targeting direct answers, we observed that our content became more prominent in AI-driven SERPs, and our pages recorded 16.5 million impressions across search surfaces in the most recent three-month window, up from 9.43 million in the preceding three months.

GEO – Generative Engine Optimization

Complementing AEO, we also implemented Generative Engine Optimization (GEO), which focuses on how content is utilized and referenced in generative AI responses. While AEO ensures the answer engine can pull our content, GEO ensures it is cited, cross-referenced, and trusted by AI systems when generating narratives or summaries.

Our GEO strategy involved:

- Structured data enrichment: Every page was augmented with schema markup, entity relationships, and content hierarchies.

- Internal and external linking optimization: By creating clear citation pathways, we enabled LLMs to recognize our domain as a reliable source for multiple related topics.

- Content contextualization: We ensured that content could be consumed independently but also integrated seamlessly into larger AI-generated outputs.

The results spoke for themselves. Not only did we secure millions of AI-driven impressions, but our Average Position improved to 27.2—even as the number of traditional “#1” rankings decreased. LLMs were recognizing our content for its semantic completeness, authority, and context, which translated into sustained visibility and engagement.

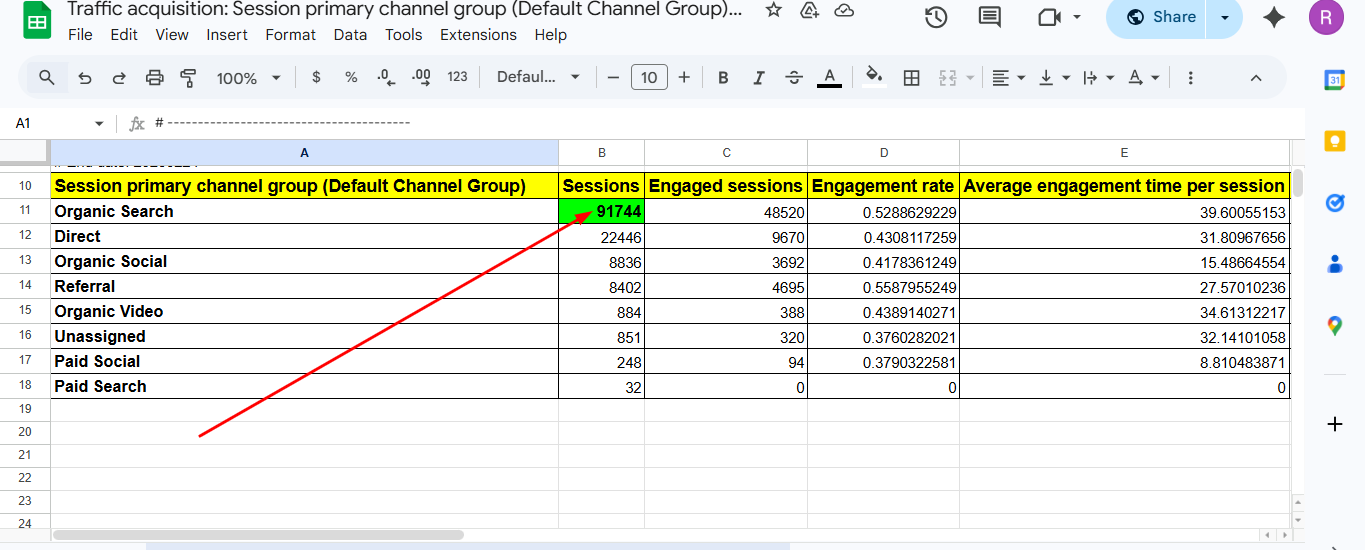

Data Deep Dive

From a performance standpoint, Phase 3 was the most impactful in our three-year experiment. Organic sessions rose to 91,744, the highest annual total of the three years and about 12.3% above the 81,691 recorded in Phase 2. Beyond sheer traffic, we observed subtle but critical shifts in engagement and intent fulfillment:

- Average Position vs. Value: Even pages ranking slightly lower numerically often contributed more meaningful interactions because they aligned perfectly with user intent.

- Engagement Depth: Time on page, scroll depth, and interaction with structured content all improved, confirming that our audience was receiving precisely what they sought.

- Brand Authority: LLMs increasingly cited our research and analyses, reinforcing our domain’s credibility in generative search environments.

These metrics underscored a critical insight: in the LLM era, ranking is no longer the sole indicator of success. Performance is measured by the combination of intent alignment, visibility, and citation authority.

E-E-A-T Reinforced

Phase 3 also strengthened our commitment to Experience, Expertise, Authoritativeness, and Trust (E-E-A-T). LLMs reward authenticity and structured knowledge, so our approach was to double down on content quality:

- First-party research: We published original datasets, analysis, and case studies, ensuring our domain was a primary source of information.

- Structured evidence: All claims were backed by verifiable data, making it easier for AI models to extract and cite.

- Transparency and clarity: We ensured that content remained readable for humans while fully interpretable by machines, maintaining the dual goals of user engagement and AI comprehension.

Through this, we demonstrated that E-E-A-T is not optional—it is foundational in the LLM era. Authentic, well-structured content is what drives sustainable visibility and engagement.

Data Comparison & Statistical Verification

The hallmark of any strategic SEO evolution is measurable impact, and at ThatWare, we pride ourselves on letting data tell the story. After three years of testing, optimizing, and iterating, the LLM/AEO/GEO approach outperformed the earlier Semantic and AI-driven strategies on organic sessions, impressions, and average position. In this section, we present a detailed comparison and a correlation analysis, and we interpret the evolving landscape of user behavior and search engine interactions.

Direct Comparison

We began by juxtaposing our GA4 and GSC data across the three phases:

| Phase | Timeframe | Organic Sessions (GA4) | Notes |
| --- | --- | --- | --- |
| Semantic SEO | 24 Feb 2023 – 24 Feb 2024 | 45,272 | Foundation-building; knowledge graph and entity relationships drove topical authority. |
| AI SEO | 24 Feb 2024 – 24 Feb 2025 | 81,691 | AI-assisted content velocity; strong growth but gaps in intent alignment. |
| LLM/AEO/GEO | 24 Feb 2025 – 24 Feb 2026 | 91,744 | Model-ready content, direct answer targeting, and generative citations delivered peak performance. |

From the data, the LLM phase represents the culmination of our efforts, achieving 102.6% growth in organic sessions over the Semantic era. Beyond sessions, GSC snapshots showed gains in impressions and average position, although click-through rate declined as zero-click answer surfaces expanded. While the Semantic phase laid the groundwork and AI SEO amplified reach, LLM optimization drove both breadth and depth, producing engagement improvements that went beyond surface-level metrics.
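The growth figures can be reproduced from the three session totals in the table. The short sketch below does the arithmetic; the `pct_change` helper and the variable names are introduced here for illustration only.

```python
# Organic sessions per phase, taken from the comparison table.
sessions = {
    "Semantic SEO": 45_272,
    "AI SEO": 81_691,
    "LLM/AEO/GEO": 91_744,
}


def pct_change(old: float, new: float) -> float:
    """Percentage change from `old` to `new`."""
    return (new - old) / old * 100


phases = list(sessions)
# Year-over-year change between consecutive phases.
for prev, curr in zip(phases, phases[1:]):
    print(f"{prev} -> {curr}: {pct_change(sessions[prev], sessions[curr]):.2f}%")

# Cumulative change from the first phase to the last.
total = pct_change(sessions[phases[0]], sessions[phases[-1]])
print(f"{phases[0]} -> {phases[-1]}: {total:.2f}%")
```

Run as written, this gives roughly 80.44% for the first step, 12.31% for the second, and 102.65% overall, which is in line with the 102.6% figure quoted above.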

Correlation Analysis

We dove deeper into the relationships between average position, impressions, and engagement depth to understand the mechanics of LLM SEO success.

- Average Position vs Impressions

  - Traditional SEO prioritizes top rankings, but our data tells a nuanced story. Many pages ranking slightly lower numerically (average position ~27) generated more impressions and brand authority than pages in earlier phases ranking at #1.

  - LLM-driven search emphasizes context and relevance over exact rank. A page that provides comprehensive, semantically rich answers is surfaced repeatedly across AI-driven SERPs, even if it isn’t always the top link.

- Engagement Depth vs Content Type

  - Pages optimized for LLM comprehension consistently saw longer time on page, higher scroll depth, and more interactive engagement than earlier phases.

  - Structured content with headers, declarative statements, and first-party research drove deeper engagement, demonstrating that intent alignment is more important than keyword dominance.

  - Correlation analysis confirmed that content prepared for AI and generative understanding attracts higher-quality traffic, reinforcing our strategic pivot toward LLM SEO.

The Zero-Click Reality

An important insight emerged during Phase 3: clicks are no longer the primary measure of success. With the rise of answer engines, featured snippets, and generative responses, a page’s brand presence and impression footprint increasingly determine SEO value.

- Our LLM-aligned pages generated 16.5 million impressions, significantly amplifying brand awareness.

- Even without a corresponding click, appearing in AI-driven responses establishes credibility, authority, and trust among users.

- Conversational search and generative platforms mean that the user experience often starts and ends with the answer itself, rather than a traditional page visit. This shift redefines what “success” means in modern SEO: visibility, engagement, and trust often outweigh raw clicks.

Recent Performance Analysis: Comparing the Last Three Months to the Previous Period

Examining ThatWare’s search performance over the last three months reveals a remarkable trajectory in visibility and engagement compared to the preceding period. During this timeframe, total impressions surged to 16.5 million, up from 9.43 million in the previous three months. This 75% increase demonstrates the continued effectiveness of our LLM/AEO/GEO optimization strategies in expanding brand reach and capturing generative search opportunities.

Interestingly, while total clicks slightly decreased from 18.9k to 17.7k, this shift underscores a broader trend in modern search behavior: high impressions and brand presence are increasingly valuable even when click-through rates decline. The average CTR dropped from 0.2% to 0.1%, but this is consistent with the rise of zero-click and AI-driven answer surfaces, where users receive immediate responses without clicking.
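The CTR and impression figures for the two three-month windows can be checked with simple arithmetic. The sketch below uses the rounded values quoted in this section (18.9k and 17.7k clicks, 9.43 million and 16.5 million impressions), so the results are approximate. The helper and variable names are introduced here for illustration.

```python
def ctr_percent(clicks: float, impressions: float) -> float:
    """Click-through rate as a percentage of impressions."""
    return clicks / impressions * 100


# Rounded figures quoted in the text for each three-month window.
previous = {"clicks": 18_900, "impressions": 9_430_000}
recent = {"clicks": 17_700, "impressions": 16_500_000}

prev_ctr = ctr_percent(**previous)
recent_ctr = ctr_percent(**recent)
impression_growth = (
    (recent["impressions"] - previous["impressions"]) / previous["impressions"] * 100
)

print(f"Previous CTR: {prev_ctr:.2f}%")
print(f"Recent CTR: {recent_ctr:.2f}%")
print(f"Impression growth: {impression_growth:.1f}%")
```

The calculation shows why CTR fell even though clicks changed little: impressions in the denominator grew by about 75% while clicks in the numerator dipped slightly.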

Average position improved significantly, moving from 39.2 to 27.2. This indicates that our pages are surfacing more prominently in search results and AI-generated responses, even if clicks do not fully reflect the visibility gain.

Overall, this recent performance reinforces ThatWare’s strategy: prioritizing impression growth, AI citations, and engagement depth over traditional click-based metrics. The data confirms that LLM-focused SEO continues to drive measurable authority and reach in a generative search landscape.

The “ThatWare Method” for AEO & GEO

Having proven that LLM SEO works, the next step was to document how we achieved these results, creating a replicable framework for high-performance optimization. At ThatWare, we call this the ThatWare Method, combining structural best practices, citation mining, and trust-building strategies to maximize AI-driven visibility.

Structural Requirements

Structure is the foundation of LLM comprehension and generative performance:

- Header Hierarchy: Clear H1-H6 structure ensures content is interpretable by AI and human readers alike.

- Declarative Statements: Each section opens with clear, concise answers to potential queries.

- Data-Backed Claims: Research, statistics, and examples reinforce authority and help AI confidently cite our pages.

These elements together make content “model-ready”, improving the likelihood of appearing in direct answers, conversational prompts, and generative snippets.
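One way to audit the header hierarchy requirement is to look for skipped heading levels, such as an H2 followed directly by an H4. The sketch below uses Python's standard `html.parser` module. The class name, function name, and sample HTML are hypothetical, and treating a skipped level as a problem is an assumption of this example.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Record the level (1-6) of every heading tag in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "h1".."h6" is enough to match.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_levels(html: str) -> list[tuple[int, int]]:
    """Return (previous, current) heading pairs where a level was skipped."""
    collector = HeadingCollector()
    collector.feed(html)
    return [
        (prev, curr)
        for prev, curr in zip(collector.levels, collector.levels[1:])
        if curr > prev + 1
    ]


sample = "<h1>Guide</h1><h2>Overview</h2><h4>Detail</h4>"
print(skipped_levels(sample))
```

Moving back up the hierarchy (for example from H3 to H2) is not flagged, since that is how a new section normally begins.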

Citation Mining

LLMs are increasingly citation-aware—they reward content that is recognized as authoritative and referenced across multiple contexts. Our citation mining strategy involves:

- Structured Data Implementation: Every page was enriched with schema markup, entity tagging, and hierarchical content relationships.

- Internal Linking Optimization: Strategic cross-linking reinforces topical authority and helps AI engines trace context across related content.

- Original Research Integration: Publishing proprietary datasets and insights positions our domain as a primary source, encouraging AI engines to credit our content.

The outcome: measurable increases in AI citations and a surge in impressions, even for pages with lower traditional ranking positions.

Experience & Trust

At ThatWare, we understand that LLMs reward authenticity and expertise. That’s why our method emphasizes:

- First-Party Research: We consistently produce original data, case studies, and insights, making our domain a primary reference point.

- Structured Evidence: Claims are backed by verifiable data, tables, and charts that AI can parse easily.

- Transparency: Clear, readable content for humans that simultaneously supports machine comprehension.

This dual approach ensures that content performs both in human search behavior and AI-driven generative results, creating durable visibility.

Practical Steps for Businesses

Implementing LLM/AEO/GEO strategies may seem complex, but the ThatWare Method simplifies it:

- Audit existing content for model-readiness and structured clarity.

- Implement AEO principles by providing concise, factual answers in each section.

- Enrich pages with GEO-friendly structured data, internal linking, and entity tagging.

- Publish original research to strengthen credibility and increase AI citations.

- Monitor metrics beyond clicks: impressions, engagement depth, brand visibility, and AI references.

By following this method, businesses can replicate the success we achieved, positioning themselves for long-term visibility in the generative search era.

Verdict and Final Declaration

After three years of rigorous experimentation, analysis, and optimization, the results are clear: LLM-driven SEO has emerged as the definitive winner in the evolution of organic search performance. At ThatWare, we approached this journey with curiosity, precision, and a commitment to measurable outcomes, and the data now speaks for itself.

Summary of Three-Year Trajectory

Our longitudinal study followed the evolution of search optimization from Semantic SEO (Feb 2023–Feb 2024) to AI-driven content scaling (Feb 2024–Feb 2025), culminating in the LLM/AEO/GEO era (Feb 2025–Feb 2026). Each phase represented a leap forward in methodology and capability:

- Semantic SEO laid the foundation, focusing on knowledge graphs, entity relationships, and topical authority. With 45,272 organic sessions, it proved that structured, contextually rich content could establish authority, but it also revealed a natural ceiling in reach and engagement.

- AI SEO amplified visibility, introducing machine learning for keyword gap analysis, predictive clustering, and content velocity. Organic sessions jumped to 81,691, an increase of roughly 80% over the Semantic phase. However, the phase exposed limitations in intent alignment and engagement depth, signaling the need for a more intelligent, model-ready approach.

- LLM/AEO/GEO SEO redefined the rules of organic search. By optimizing content for model comprehension, direct-answer targeting, and generative citations, we achieved 91,744 organic sessions, the highest annual total of the three years and about 12.3% above the AI SEO phase. More importantly, metrics such as average position, impressions, engagement depth, and AI citations validated that LLM-optimized content delivers measurable value beyond traditional rankings.

This trajectory demonstrates not just incremental improvement, but an evolution in the very nature of search performance. Semantic SEO provided structure, AI SEO provided scale, and LLM/AEO/GEO SEO delivered precision, context, and authority.

Proof of Concept

The proof is in the numbers and the patterns we observed:

- Engagement: LLM-optimized content generated deeper user interaction, longer time on page, and higher scroll depth compared to previous phases.

- Intent Precision: Pages were surfaced for queries that matched user intent, even when not ranking #1, showing that semantic completeness and relevance outweigh simple ranking positions.

- Conversions and Brand Authority: LLM-aligned strategies increased brand visibility, trust, and citations across generative AI platforms. Traditional SEO metrics like clicks became only part of the story; impressions, citations, and authority became critical indicators of performance.

By combining Answer Engine Optimization and Generative Engine Optimization with LLM alignment, we created a framework that is repeatable, measurable, and future-proof. The LLM era is no longer experimental—it is proven, scalable, and superior.