
How clarity, credibility signals, and contextual depth define quality in AI ranking systems

The Shift from Cleverness to Comprehension

For years, content marketing has carried a persistent belief: witty, creative writing ranks better. The logic feels intuitive—if a headline makes people laugh or a paragraph sounds smart, it must be “good content,” right? In the old internet, where attention was the currency and clicks were the scoreboard, cleverness often looked like a shortcut to performance.

But generative engines have changed the rules.

Today, discovery doesn’t rely only on humans scanning blue links and picking what feels interesting. Increasingly, people get answers through AI-generated summaries, featured responses, and conversational search experiences. In that environment, “clever” doesn’t automatically translate to “valuable.” In fact, cleverness can become a liability—because it often sacrifices the very thing generative systems need most: interpretability.

Generative engines—whether they show up as AI answers in search, chat-based discovery, or summarization systems—don’t “appreciate” content the way humans do. They don’t reward charm, humor, or a poetic metaphor unless those elements support meaning. Instead, these systems evaluate content through a different lens:

- Do you make sense quickly?

- Can your claims be trusted?

- Is the topic explained with enough context to be safely reused in an answer?

That’s why the new hierarchy isn’t: catchy → viral → visible.

It’s closer to: clear → credible → context-rich → reusable.

Here’s the central thesis:

Generative engines reward content that is clear, credible, and contextually complete, because those qualities reduce ambiguity and improve answer reliability.

In other words, AI ranking systems aren’t just selecting what sounds good—they’re selecting what they can confidently extract, summarize, and present without risking misunderstanding or misinformation.

This article will break down the three signals that matter most:

- Why clarity is machine-readable quality

Clarity isn’t just for humans. It helps machines identify what your content means, how it’s structured, and which parts are safe to reuse.

- How credibility is inferred algorithmically

You don’t “tell” a generative engine you’re trustworthy—you demonstrate it through signals like evidence, specificity, consistency, and transparency.

- Why contextual depth outperforms surface-level optimization

Thin content may target a keyword, but deep content answers the question behind the question—which is exactly what generative engines are designed to do.

If your goal is to stay visible in a world where answers are generated, not simply clicked, the lesson is simple: clarity beats cleverness. Not because creativity is bad—but because in AI-driven ranking, meaning is what scales.

How Generative Engines Actually “Read” Content

(Setting the right mental model before tactics)

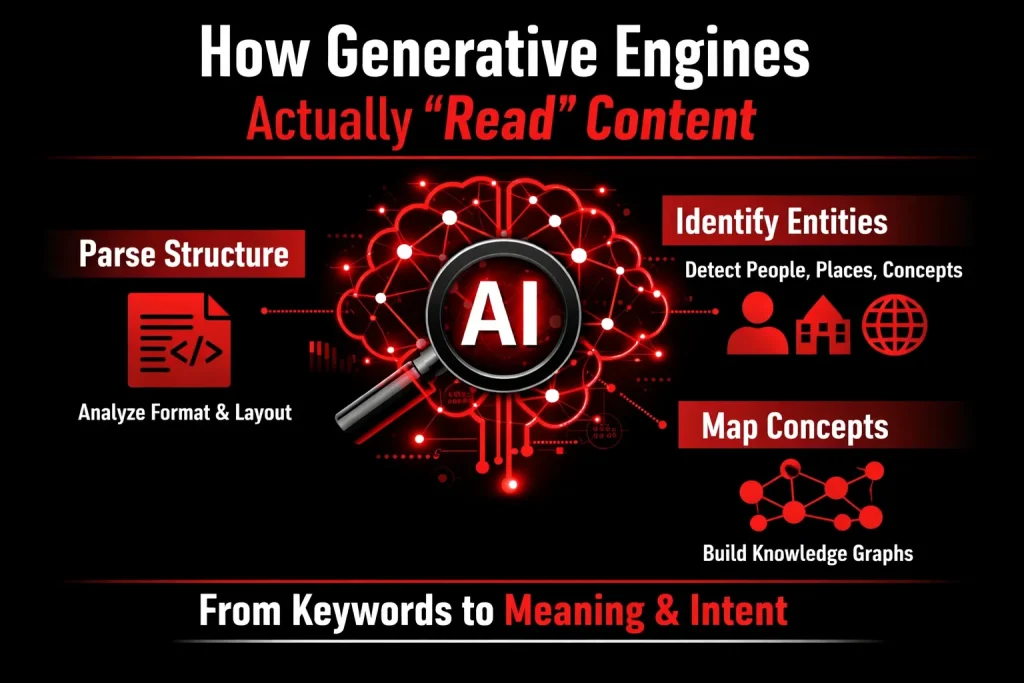

To understand why clarity consistently outperforms cleverness, we first need to correct a common assumption: generative engines do not read content the way humans do. They don’t appreciate humor, originality, or rhetorical flair unless those elements are anchored in clear meaning. What they evaluate instead is how reliably meaning can be extracted.

From Keyword Matching to Meaning Extraction

Search and ranking systems have undergone a fundamental shift in how they interpret content.

Then:

Early search engines relied heavily on keyword matching. If a page repeated the right phrases often enough, it could rank—even if the content itself lacked depth or coherence.

Now:

Generative and AI-driven systems operate on semantic understanding and intent modeling. The progression looks like this:

- Keywords → surface signals

- Semantic relationships → how concepts connect

- Intent modeling → what the user is actually trying to understand or accomplish

Instead of asking “Does this page contain the keyword?”, generative engines ask:

“Does this content clearly explain the concept, and does it align with the user’s intent?”

To answer that, these systems perform several behind-the-scenes processes:

1. Parsing Structure

Generative engines analyze how content is organized:

- Headings and subheadings

- Paragraph boundaries

- Lists, summaries, and emphasis

Clear structure makes content easier for machines to segment and helps them identify which ideas are primary, which are supporting, and how arguments progress.

2. Identifying Entities and Relationships

AI systems extract:

- Named entities (people, concepts, tools, processes)

- Relationships between those entities (cause–effect, comparison, hierarchy)

For example, it’s not enough to mention “generative engines” and “content quality.” The system looks for how those ideas are connected and whether that relationship is consistently explained.

3. Mapping Concepts into Knowledge Graphs

Extracted entities and relationships are mapped into internal knowledge representations. Content that aligns cleanly with existing knowledge structures is easier to:

- Verify

- Summarize

- Reuse in generated answers

The clearer the conceptual mapping, the higher the confidence score.
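To make "explicit relationships" concrete, here is a toy sketch in Python. It is an illustration under heavy assumptions, not how any generative engine works: real systems use learned language models, while this uses a regular expression and a hand-picked list of relation verbs (`reduces`, `improves`, `causes`, `leads to`). The hypothetical `extract_triples` function records a (relation, object) pair only when a sentence states the relationship outright. A sentence that relies on implication produces nothing.

```python
import re

# Toy illustration only: production systems use learned models, not a regex.
# The relation verbs are a hand-picked, hypothetical list.
RELATION = re.compile(
    r"^(?P<subject>[\w\s-]+?)\s+"
    r"(?P<relation>reduces|improves|causes|leads to)\s+"
    r"(?P<object>[\w\s-]+?)\.?$",
    re.IGNORECASE,
)


def extract_triples(sentences: list[str]) -> dict[str, list[tuple[str, str]]]:
    """Map each subject to the (relation, object) pairs stated explicitly about it."""
    graph: dict[str, list[tuple[str, str]]] = {}
    for sentence in sentences:
        match = RELATION.match(sentence.strip())
        if match:  # implicit or figurative sentences simply do not match
            subject = match["subject"].lower()
            graph.setdefault(subject, []).append(
                (match["relation"].lower(), match["object"].lower())
            )
    return graph


print(extract_triples([
    "Clear structure reduces ambiguity.",
    "Wordplay keeps things lively, if you catch the drift.",
]))
# prints {'clear structure': [('reduces', 'ambiguity')]}
```

Only the first sentence produces an entry. The second may be charming, but it states no relationship the sketch can extract. This is a small-scale version of the problem described above.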

Why Clever Content Creates Risk for AI Systems

What feels engaging or impressive to humans can be problematic for machines.

Clever content often introduces hidden risks, such as:

- Ambiguity

Wordplay, double meanings, or vague references force the system to infer intent rather than extract it.

- Metaphors without grounding

Analogies that aren’t explicitly explained may sound insightful but lack concrete semantic anchors.

- Implicit assumptions

When ideas are implied rather than stated, AI systems struggle to fill in the gaps accurately.

From a machine’s perspective, these are not stylistic choices—they are uncertainty generators.

Because of this, generative systems prioritize:

- Interpretability

Can the meaning be extracted directly without guessing?

- Low-risk inference

Is the content unlikely to produce incorrect or misleading outputs when reused?

- Consistent meaning across contexts

Does the same concept retain the same definition throughout the content?

If a passage can be interpreted in multiple ways, the system must choose—or avoid using it altogether. In ranking and answer generation, avoidance often means reduced visibility.

Key Takeaway

If a machine has to guess what you mean, your content becomes unreliable.

Generative engines don’t penalize creativity, but they favor clarity over cleverness whenever clarity makes meaning explicit, verifiable, and safe to reuse. In an AI-driven search ecosystem, reliability is the foundation of visibility.

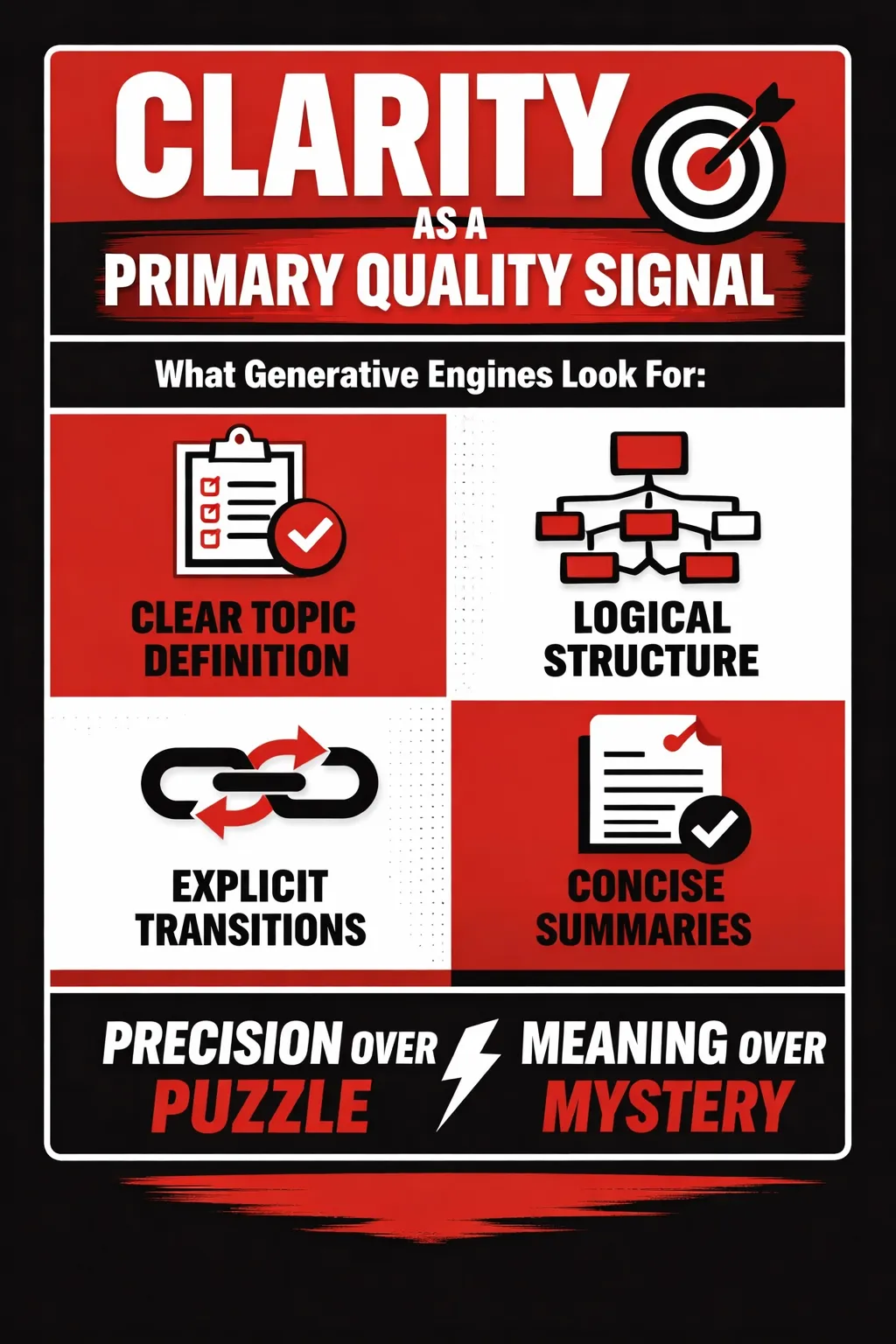

Clarity as a Primary Quality Signal

(Clarity is not simplicity—it’s precision)

When people hear that “clarity” matters in AI-driven search, they often assume it means dumbing content down. In reality, generative engines reward the opposite. Clarity is not about being basic; it is about being precise, unambiguous, and structurally intelligible. For AI systems that must interpret, summarize, and reuse information at scale, clarity functions as a core quality signal.

What “Clarity” Means to Generative Engines

To a generative engine, clarity is the degree to which meaning can be extracted without inference or guesswork. Unlike human readers, AI systems do not appreciate nuance that is implied but not stated. They look for explicit signals that reduce uncertainty.

First, clarity begins with a clear topic definition. High-quality content makes it immediately obvious what problem is being addressed and within what scope. When a topic is precisely framed, the engine can confidently classify the content and match it to relevant queries.

Second, generative engines favor explicit relationships between ideas. Statements that clearly explain cause and effect, comparisons, dependencies, or hierarchies are easier for models to interpret. Phrases like “because,” “this leads to,” “in contrast,” or “as a result” help machines understand how concepts connect, not just that they co-exist.

Third, clarity depends on a logical progression of thought. Ideas should build on one another in a predictable sequence: definitions before analysis, causes before consequences, principles before examples. This ordering lets each later claim rest on concepts that have already been established, which makes it easier for a model to interpret.

Finally, clarity is reinforced through a predictable structure. Headings, sections, and summaries act as signposts, helping generative engines segment the content into coherent, retrievable units. Well-structured content is easier to parse, summarize, and extract answers from.

Structural Clarity Signals

Structure is one of the most visible indicators of clarity for AI ranking systems. Generative engines rely heavily on document organization to understand importance and hierarchy.

Descriptive H2 and H3 headings are critical. Headings that clearly state what the section explains—rather than using clever or vague phrasing—allow models to map content to specific intents and subtopics.

Maintaining one idea per paragraph further strengthens clarity. Dense paragraphs that mix multiple concepts force AI systems to disentangle meaning, increasing the chance of misinterpretation. Focused paragraphs, on the other hand, create clean semantic boundaries.

Explicit transitions such as “therefore,” “for example,” “however,” and “because” guide both human readers and machines through the reasoning process. These transitions function as logical connectors that signal how one idea flows into the next.

Lastly, summaries and recaps provide reinforcement. They restate key points in condensed form, which makes the main takeaways unambiguous and easier for generative engines to extract as definitive answers or snippets.

Linguistic Clarity Signals

Beyond structure, clarity is also embedded in language choices. Generative engines respond strongly to wording that minimizes ambiguity.

They favor concrete language over abstract buzzwords. Terms like “leverage,” “optimize,” or “innovative solutions” offer little semantic value unless clearly defined. Specific language—actions, mechanisms, outcomes—creates stronger meaning signals.

Another important signal is defining terms before using them. When specialized concepts are introduced with clear definitions, models can anchor subsequent references correctly, reducing contextual drift.

Reducing pronoun ambiguity is also essential. Excessive use of “this,” “that,” or “it” without clear antecedents makes it difficult for AI to track references across sentences. Repeating the key noun may feel redundant to humans, but it dramatically improves machine comprehension.

Finally, short, declarative sentences are especially effective for core ideas. While stylistic variation is fine, key claims benefit from direct statements that clearly assert meaning without hedging or complexity.
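Two of these signals, pronouns without a clear antecedent and overlong core sentences, are easy to check mechanically before publishing. The Python sketch below is a hypothetical drafting aid built on simple heuristics. The pronoun list, the follow-on verb list, and the 25-word limit are all assumptions, and the sketch does not reproduce how any generative engine scores text.

```python
import re

# Hypothetical drafting aid: rough heuristics for the signals described above,
# not a model of how any generative engine actually scores text.
PRONOUN_OPENERS = {"it", "this", "that", "these", "those"}
# Words suggesting the pronoun stands alone ("This is...") rather than
# introducing a noun ("This strategy...").
VERB_FOLLOWERS = {"is", "was", "are", "were", "means", "makes", "helps",
                  "shows", "can", "will", "does"}


def split_sentences(text: str) -> list[str]:
    """Naively split on sentence-ending punctuation followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]


def flag_clarity_issues(text: str, max_words: int = 25) -> list[tuple[str, str]]:
    """Return (reason, sentence) pairs for sentences worth a second look."""
    issues = []
    for sentence in split_sentences(text):
        words = [w.strip(".,;:!?\"'").lower() for w in sentence.split()]
        if words and words[0] in PRONOUN_OPENERS and (
            len(words) == 1 or words[1] in VERB_FOLLOWERS
        ):
            issues.append(("pronoun without clear antecedent", sentence))
        if len(words) > max_words:
            issues.append(("long sentence for a core claim", sentence))
    return issues


draft = ("This is important. Generative engines prefer verifiable claims. "
         "This strategy tends to improve lead-to-demo conversion.")
for reason, sentence in flag_clarity_issues(draft):
    print(f"{reason}: {sentence}")
# prints: pronoun without clear antecedent: This is important.
```

The third sentence passes because “This strategy” names its subject, which is the fix recommended above: repeat the key noun.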

Why This Matters to AI Ranking Systems

Clarity is not a stylistic preference—it directly impacts how generative engines evaluate quality.

Clear content improves entity recognition, allowing models to accurately identify topics, concepts, and relationships. It strengthens topic confidence scoring, signaling that the content reliably addresses a specific subject rather than touching it superficially. Most importantly, clarity enhances answer extraction accuracy, making the content safer and more useful for AI-generated responses.

In short, when your content is clear, generative engines do not have to guess. And in AI-driven search, content that eliminates guesswork is content that gets rewarded.

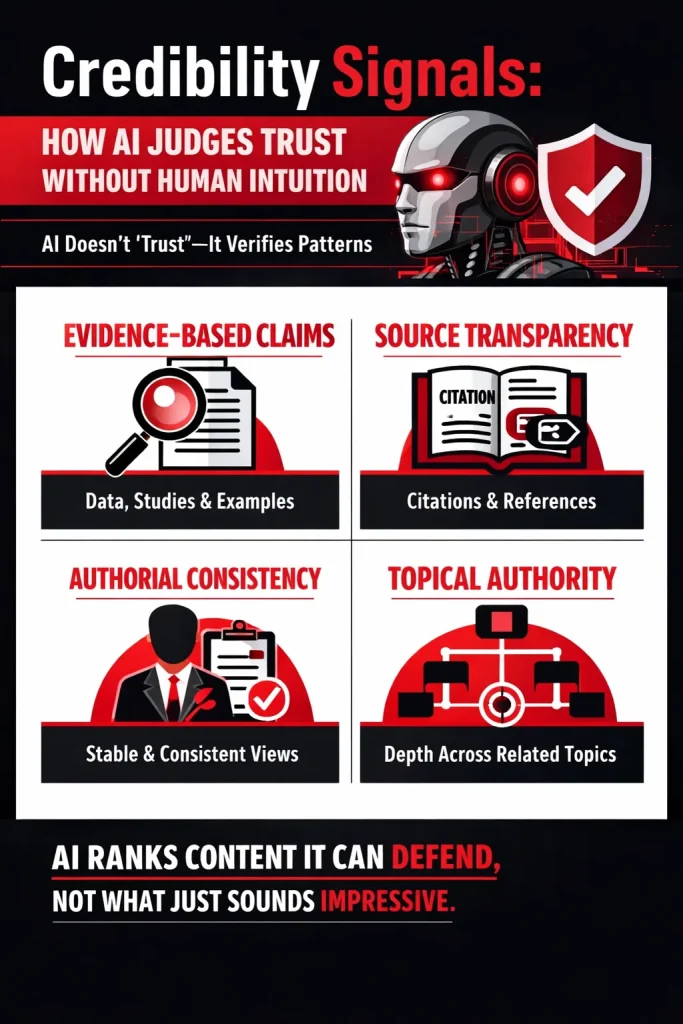

Credibility Signals: How AI Judges Trust Without Human Intuition

(AI doesn’t “trust”—it verifies patterns)

Unlike human readers, generative engines do not form opinions, feel persuaded, or “believe” an author. They evaluate credibility through repeatable, verifiable signals embedded directly in the content. In other words, AI does not trust intent—it measures evidence.

This distinction is critical. Many content strategies still rely on brand reputation, tone, or clever positioning. Generative systems, however, focus on what can be validated, cross-checked, and consistently interpreted.

What Credibility Means in AI Evaluation

Credibility, from an AI perspective, is not primarily about who you are, but about what your content demonstrates.

- A well-known brand does not automatically outrank a lesser-known publisher.

- Authority is not assumed; it is inferred from content patterns.

Generative engines assess whether the content itself shows:

- Subject-matter understanding

- Logical coherence

- Alignment with established knowledge

- Internal consistency over time

In short, credibility is earned within the text, not borrowed from external perception alone.

Core Credibility Signals Generative Engines Look For

1. Evidence-Based Claims

Generative systems strongly favor content that supports assertions with proof.

This includes:

- Quantitative data

- Research findings or studies

- Real-world examples

- Clear causal or logical reasoning

Unsupported claims introduce uncertainty. When AI cannot trace why something is true, it treats the statement as unreliable—even if it sounds confident or persuasive.

Claims that can be explained are safer than claims that are merely stated.

2. Source Transparency

AI systems reward content that is open about where information comes from.

Credibility signals increase when content includes:

- Citations or references

- Named experts, organizations, or publications

- Links to original research or primary sources

Source transparency allows generative engines to:

- Cross-reference information

- Validate alignment with trusted knowledge bases

- Reduce the risk of misinformation propagation

Even when sources are not directly linked, clearly naming them strengthens semantic trust.

3. Authorial Consistency

Generative engines evaluate whether an author or domain maintains stable, non-contradictory viewpoints over time.

Inconsistencies such as:

- Conflicting claims across articles

- Shifting definitions of the same concept

- Opposing conclusions without explanation

weaken credibility signals.

Consistency does not mean rigidity—it means:

- Clear reasoning for changes

- Transparent updates or revisions

- Logical progression of ideas

From an AI perspective, predictable reasoning equals reliability.

4. Topical Authority

Credibility is amplified when content demonstrates depth across an entire subject area, not just a single article.

Generative engines favor publishers who:

- Cover related subtopics comprehensively

- Address foundational and advanced concepts

- Answer follow-up and adjacent questions naturally

One-off articles rarely establish authority. Instead, AI looks for topical ecosystems that indicate sustained expertise rather than isolated insights.

Why Clever Content Often Weakens Credibility

While clever writing may engage humans, it frequently undermines AI credibility signals.

Common issues include:

- Jokes and wordplay, which introduce semantic ambiguity

- Opinion-heavy content without evidence or reasoning

- Vague or exaggerated claims that cannot be verified

Clever phrasing often prioritizes style over precision. For generative engines, this creates uncertainty—and uncertainty reduces rankability.

When meaning must be inferred instead of understood directly, credibility suffers.

Key Insight

AI ranks content it can defend, not content that merely sounds impressive.

In an ecosystem where answers are generated, summarized, and reused, defensibility matters more than flair. Credibility emerges from clarity, evidence, consistency, and depth—signals that machines can evaluate at scale.

For content creators, the implication is clear:

Write to be validated, not admired.

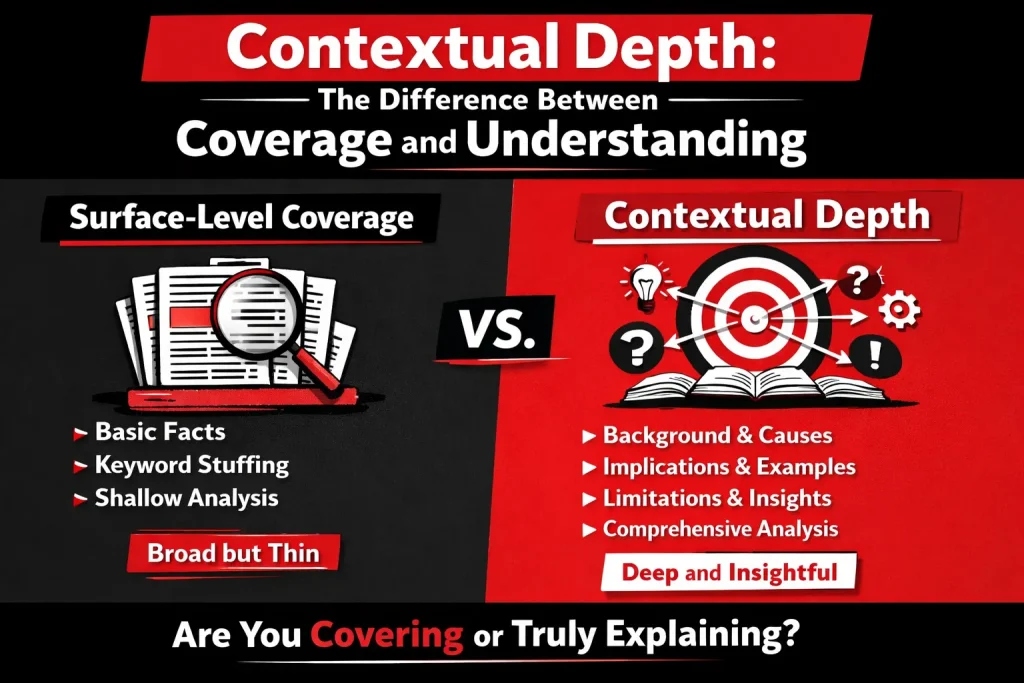

Contextual Depth: The Difference Between Coverage and Understanding

(This is where most content fails)

Most content on the internet focuses on coverage—touching many points quickly—rather than understanding, which requires exploring how those points connect, function, and matter. Generative engines are designed to detect this difference. They don’t just assess what topics you mention; they evaluate how completely you explain them.

Contextual depth is the signal that separates surface-level articles from content that AI systems consider reliable enough to reference, summarize, or rank prominently.

What Contextual Depth Really Means

Contextual depth is often misunderstood, which leads to ineffective content strategies.

- It is not length

Long content can still be shallow if it repeats ideas, pads paragraphs, or avoids explanation. Generative engines quickly detect redundancy.

- It is not keyword density

Repeating variations of a keyword without expanding the underlying idea adds no semantic value. Modern AI systems prioritize meaning, not repetition.

- It is conceptual completeness

Contextual depth means a topic is explained thoroughly enough that:

- Its purpose is clear

- Its mechanisms are understood

- Its implications are explored

- Its limitations are acknowledged

In short, the content demonstrates understanding, not just awareness.

Components of Contextual Depth

Generative engines tend to reward content that naturally includes the following elements:

- Background and definitions

Establishing foundational context ensures the topic is anchored. Clear definitions reduce ambiguity and help AI systems accurately map entities and concepts.

- Causes and mechanisms

Explaining why something exists or how it works signals expertise. This moves content beyond description into explanation, which is critical for AI comprehension.

- Implications and outcomes

Content with depth explores consequences—what changes, improves, or fails because of the concept. This helps generative engines connect your content to real-world relevance.

- Edge cases and limitations

Acknowledging where an idea doesn’t apply increases trust. AI systems interpret this nuance as a credibility signal, not a weakness.

- Practical examples or applications

Examples ground abstract ideas in reality, reinforcing meaning and making the content easier to reuse in AI-generated answers.

Together, these components create a complete semantic picture, rather than a fragmented overview.

How Generative Engines Detect Depth

Generative engines don’t “read” depth emotionally—they infer it algorithmically.

- Semantic clustering of related concepts

Deep content naturally includes interconnected ideas that belong to the same conceptual domain. AI systems detect these clusters as signals of topical authority.

- Co-occurrence of foundational and advanced ideas

When basic concepts appear alongside more advanced or nuanced explanations, it suggests genuine expertise rather than surface familiarity.

- Coverage of adjacent questions users might ask

High-quality content anticipates follow-up questions. Generative engines favor content that can support multiple queries without needing external clarification.

Depth, in this sense, is measured by how self-sufficient the content is.

Why Shallow Content Loses Visibility

Shallow content fails not because it’s short or poorly written, but because it’s incomplete.

- It can’t answer follow-up questions

If content only addresses a single, narrow angle, generative systems can’t confidently extend or summarize it.

- It lacks reinforcement signals

Without examples, explanations, or supporting ideas, claims appear weak and isolated.

- It fails multi-intent satisfaction

Modern search queries often bundle multiple intents—learning, comparison, application. Shallow content typically satisfies only one, reducing its overall usefulness.

As generative engines prioritize reliability and reuse, content that lacks contextual depth becomes increasingly invisible.

In essence, contextual depth transforms content from something that merely mentions a topic into something that truly explains it.

And in an AI-driven ecosystem, understanding is what earns visibility.

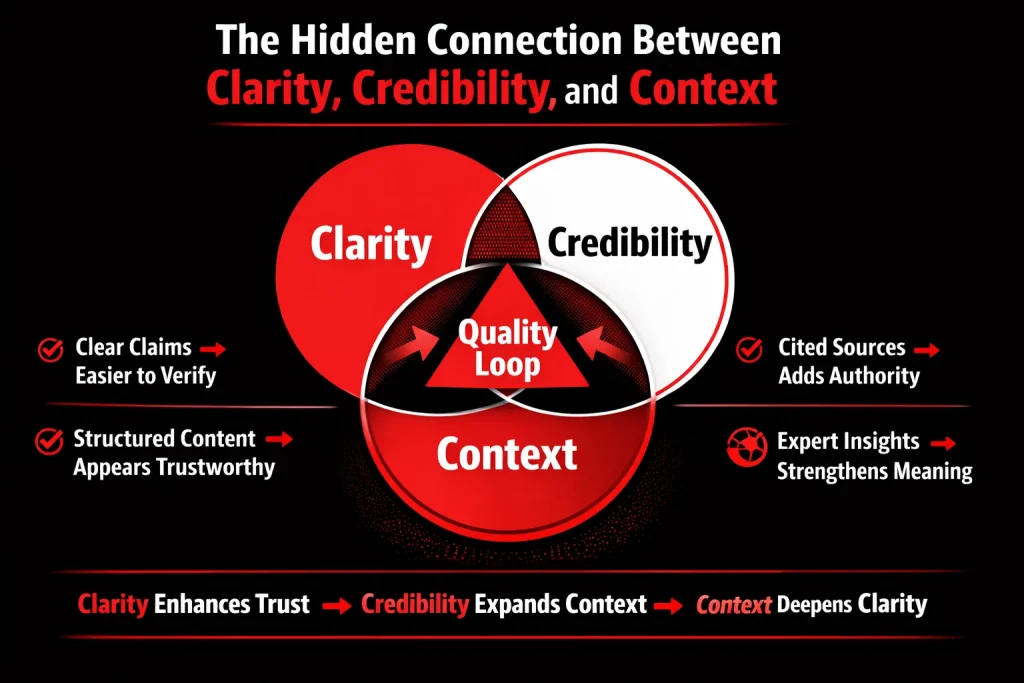

The Hidden Connection Between Clarity, Credibility, and Context

These signals compound, not compete.

Most people treat clarity, credibility, and contextual depth like separate checkboxes—something you “add” to content after writing it. Generative engines don’t read that way. They evaluate these signals as a connected system, where each element strengthens the others. When they work together, they create something AI systems love: high-confidence understanding.

Think of it as a loop:

Clarity → makes credibility easier to detect → credibility expands contextual depth → depth reinforces clarity again.

That loop is what makes content more quotable, more retrievable, and more dependable for AI-generated answers.

Clarity Enables Credibility

Clear claims are easier to verify. Structured arguments appear more trustworthy.

Credibility doesn’t start with citations—it starts with clear, testable meaning. If a generative engine can’t confidently interpret what you’re claiming, it can’t confidently trust it.

Clear claims are easier to verify

A vague claim like:

- “This strategy works really well for most businesses”

…is hard to validate. “Works” how? Under what conditions? For what outcome?

But when you make it clear:

- “This strategy tends to improve lead-to-demo conversion rates for B2B SaaS companies with inbound traffic, because it reduces decision friction at the evaluation stage.”

Now the engine has:

- Defined scope (B2B SaaS, inbound traffic)

- Defined outcome (lead-to-demo conversion)

- Defined mechanism (reduces decision friction)

That specificity becomes machine-verifiable, because it can be checked against:

- related documents

- known patterns

- evidence you provide later in the piece
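One way to enforce this while drafting is to treat a claim as a small record that must state its scope, outcome, and mechanism before it can be written out. The Python sketch below is a hypothetical template, not a standard tool. The `Claim` class and its field names simply mirror the breakdown above, and the sentence pattern assumes an “improves” claim like the example.

```python
from dataclasses import dataclass
from typing import Optional


# Hypothetical drafting template; fields mirror the scope / outcome / mechanism
# breakdown above and are not taken from any real tool.
@dataclass
class Claim:
    subject: str
    outcome: Optional[str] = None    # what improves, e.g. a named metric
    scope: Optional[str] = None      # who or what the claim applies to
    mechanism: Optional[str] = None  # why the outcome follows

    def missing_parts(self) -> list[str]:
        """Name the parts a reader, or a machine, would have to guess."""
        return [part for part in ("outcome", "scope", "mechanism")
                if not getattr(self, part)]

    def render(self) -> str:
        """Write the claim out, refusing to do so while it is underspecified."""
        missing = self.missing_parts()
        if missing:
            raise ValueError(f"claim is underspecified: missing {', '.join(missing)}")
        # The sentence pattern assumes an "improves" claim like the example above.
        return (f"{self.subject} tends to improve {self.outcome} for {self.scope}, "
                f"because it {self.mechanism}.")


vague = Claim("This strategy")
print(vague.missing_parts())  # prints ['outcome', 'scope', 'mechanism']

clear = Claim(
    "This strategy",
    outcome="lead-to-demo conversion rates",
    scope="B2B SaaS companies with inbound traffic",
    mechanism="reduces decision friction at the evaluation stage",
)
print(clear.render())
```

Calling `render()` on the vague claim raises an error. This is deliberate: the template will not produce a sentence until every part a reader would otherwise have to guess has been filled in.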

Structured arguments appear more trustworthy

Generative systems reward content that looks like organized reasoning, not a stream of thoughts. A structured argument signals:

- stable meaning

- lower hallucination risk

- better extractability for answers

When your content follows an explainable logic (problem → cause → solution → evidence → limitations), you’re doing something powerful: you’re making it easy for AI to summarize you without distorting your meaning.

Clarity, then, becomes the foundation credibility sits on.

Credibility Strengthens Context

Sources and evidence expand topical coverage. Expert framing introduces nuance.

Once clarity is present, generative engines look for the next thing: proof and grounding. Credibility doesn’t just raise trust—it also increases the amount of useful context your content contains.

Sources and evidence expand topical coverage

When you add evidence—data, citations, quotes, case results—you naturally introduce:

- related concepts

- linked entities (tools, organizations, studies)

- supporting mechanisms

That expands your content’s semantic footprint: the content now connects to more questions a user might ask.

Example: If you cite a study about cognitive load, you automatically bring in:

- attention limits

- comprehension patterns

- decision fatigue

…and that increases contextual depth without you trying to “stuff” keywords.

This is why credibility is not just a trust signal—it’s a coverage multiplier.

Expert framing introduces nuance

Credible content often includes nuance like:

- “This works best when…”

- “A common exception is…”

- “If your situation is X, do Y instead…”

Nuance is incredibly valuable to generative engines because it:

- reduces overgeneralization

- prevents incorrect summaries

- makes the content safe to reuse as an answer

A confident but simplistic claim is risky. A clear claim with expert nuance is reliable.

So credibility doesn’t just make you look authoritative. It makes your content safer for AI to surface.

Context Reinforces Clarity

Examples clarify abstractions. Depth reduces misinterpretation.

Here’s the twist: contextual depth isn’t only about being thorough—it’s also about being understood correctly.

Examples clarify abstractions

Abstract explanations are fragile. They’re easy to misunderstand and easy for AI to summarize incorrectly.

When you include examples, you anchor meaning in reality:

- You show what the concept looks like in practice

- You remove ambiguity about interpretation

- You make your intended meaning “stick”

For instance, “write with clarity” can mean ten different things. But if you show:

- Before: “Optimize content for generative search.”

- After: “Define the reader’s problem in one line, explain the mechanism in three steps, and back each step with a proof point or example.”

Now “clarity” is not a slogan. It’s operational.

Depth reduces misinterpretation

Depth creates guardrails. When you cover:

- assumptions

- limitations

- edge cases

- “what this does not mean”

…you reduce the chance that a system extracts the wrong takeaway.

Generative engines prefer content that’s not just informative, but hard to misread.

And when misinterpretation risk goes down, clarity scores go up again—because the meaning becomes stable across multiple contexts.

Result: A Self-Reinforcing Quality Loop Generative Engines Favor

When clarity, credibility, and context work together, they create an ecosystem of quality:

- Clarity makes your claims interpretable

- Credibility makes them defensible

- Context makes them complete and hard to distort

That combination produces the best possible outcome for AI ranking systems:

high confidence + high usefulness + low ambiguity.

In other words, you’re not just writing content. You’re building an answer the machine feels safe using.

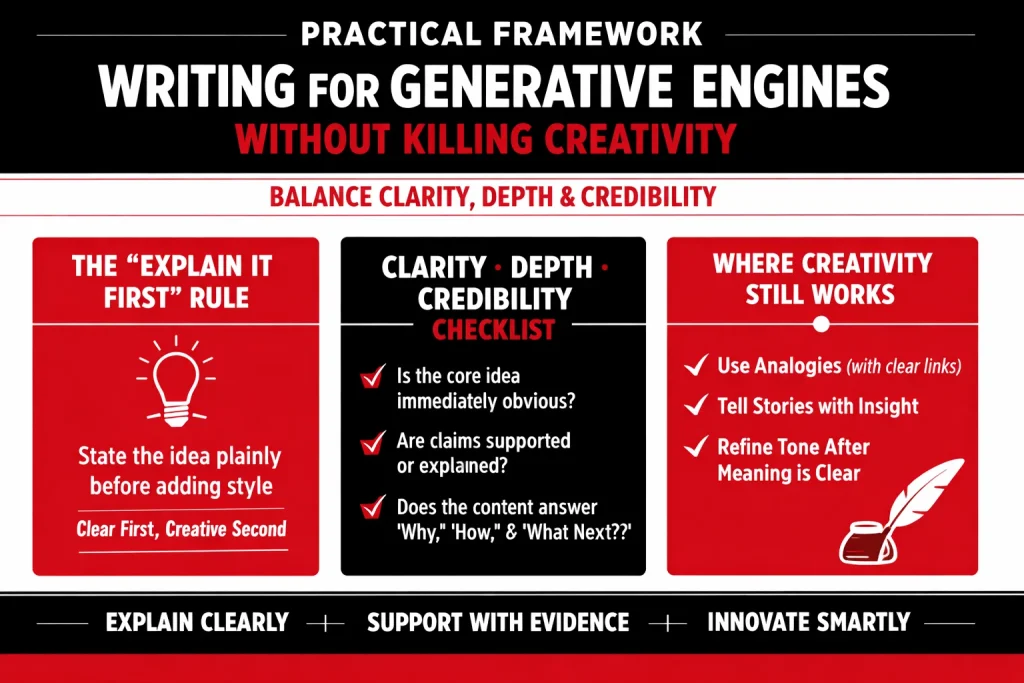

Practical Framework: Writing for Generative Engines Without Killing Creativity

If you want your content to perform in an AI-first world, you don’t need to abandon creativity—you need to sequence it correctly. Generative engines reward writing they can interpret, verify, and reuse confidently. Creativity still works, but only after you’ve made meaning unmistakable.

Here’s a framework that keeps your writing human, while making it machine-friendly.

The “Explain It First” Rule

State the idea plainly before stylizing it.

Think of this like building a foundation before decorating the house. If your first paragraph is too clever, poetic, or abstract, you force both humans and machines to guess what you mean. Guessing is exactly what generative systems avoid.

How to apply it:

- Open with the plain claim. One sentence. Direct.

- Add the reason. One sentence explaining why it’s true or important.

- Then bring in style. Metaphor, story, punchy phrasing—now it lands.

Example

- ❌ Too clever first: “The internet doesn’t read; it senses.”

- ✅ Explain it first: “Generative engines rank content that is easy to interpret and verify. That’s why clarity and supporting evidence outperform clever phrasing.”

- ✅ Then stylize: “You can still be poetic—but only after you’ve told the machine what the poem is about.”

This structure makes your content instantly extractable, quotable, and trustworthy.

The Clarity–Depth–Credibility Checklist

Before you publish, run your draft through this quick filter. It’s not about perfection—it’s about removing ambiguity and increasing confidence.

1) Is the core idea immediately obvious?

Ask: If someone reads only the headline + first 5 lines, do they know what the post is about?

Quick fixes

- Put your thesis in the first paragraph.

- Use headings that say what the section means, not clever titles that hide it.

- Replace vague openers (“In today’s world…”) with specific ones.

Mini-test: If your intro could fit any topic, it’s not clear enough.
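The quick fixes and the mini-test can be turned into a rough pre-publish check. In this Python sketch, the `intro_check` function, the list of generic openers, and the idea of passing in a few topic terms are all illustrative assumptions. A passing result only means the intro names its topic, not that it is well written.

```python
# Hypothetical pre-publish check for item 1; the opener list and the
# topic-term approach are illustrative assumptions, not a standard.
VAGUE_OPENERS = ("in today's world", "in this day and age", "now more than ever")


def intro_check(post: str, topic_terms: list[str], lines: int = 5) -> list[str]:
    """Flag intros that open generically or never name the topic."""
    intro_lines = [line.strip() for line in post.splitlines() if line.strip()][:lines]
    # Lowercase and normalize curly apostrophes so "today’s" matches "today's".
    intro = " ".join(intro_lines).lower().replace("\u2019", "'")
    problems = []
    if intro.startswith(VAGUE_OPENERS):
        problems.append("opens with a generic phrase")
    if not any(term.lower() in intro for term in topic_terms):
        problems.append("topic terms missing from the first lines")
    return problems


terms = ["generative engines", "clarity"]
print(intro_check("In today’s world, content is everything.\nEveryone wants to stand out.", terms))
# prints ['opens with a generic phrase', 'topic terms missing from the first lines']
print(intro_check("Generative engines reward clear, verifiable content.\nThis post explains why.", terms))
# prints []
```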

2) Are claims supported or explained?

Generative engines treat unsupported claims like noise. You don’t always need citations, but you do need support.

What counts as support:

- Evidence (data, sources, studies)

- Concrete examples (“Here’s what this looks like in practice…”)

- Clear reasoning (“Because X happens, Y tends to follow.”)

Upgrade pattern:

- Claim → Why → Proof/Example → Implication

3) Does the content answer “why,” “how,” and “what next”?

This is the easiest way to build contextual depth without writing an essay.

- Why does this matter? (motivation + context)

- How does it work? (mechanism + steps)

- What should the reader do next? (actions + application)

Example flow

- Why: “Clarity reduces misinterpretation and improves retrieval.”

- How: “Use explicit definitions, structured headings, and examples.”

- What next: “Audit one post today using this checklist and rewrite the intro.”

If you hit these three, your post becomes more “answerable” for AI systems.

Where Creativity Still Works

Creativity isn’t banned. It just needs guardrails—so it enhances understanding instead of replacing it.

1) Analogies (when explained)

Analogies can be powerful because they compress complex ideas into familiar frames. But generative engines struggle when analogies are left hanging.

Use this format:

- Analogy → Translation → Practical takeaway

Example

“Think of clarity like clean plumbing: it’s not exciting, but it keeps everything flowing. In writing, that means defining terms and building logic step-by-step. The takeaway: make your first paragraph impossible to misread.”

The “translation” line is what makes it AI-safe and human-friendly.

2) Storytelling (when tied to insights)

Stories keep readers engaged—but generative engines won’t reward a story unless it delivers a clear, extractable insight.

Rule: Story is the vehicle, not the destination.

Best practice:

- Start with the insight (or state it immediately after the hook)

- Use the story to demonstrate it

- End with a reusable principle

Example structure

- Insight: “Clever intros often reduce ranking confidence.”

- Story: “We rewrote a witty opener into a direct thesis and the page started earning AI snippets.”

- Principle: “Be clever second. Be clear first.”

3) Voice and tone (after meaning is clear)

Your tone can absolutely be bold, playful, sharp, or poetic—as long as the meaning doesn’t depend on it.

Do this:

- Keep key statements literal

- Put style around them, not inside them

Example

- Literal: “Generative engines prefer content with verifiable claims.”

- Voice: “You can’t charm an algorithm into trusting you. You have to show your work.”

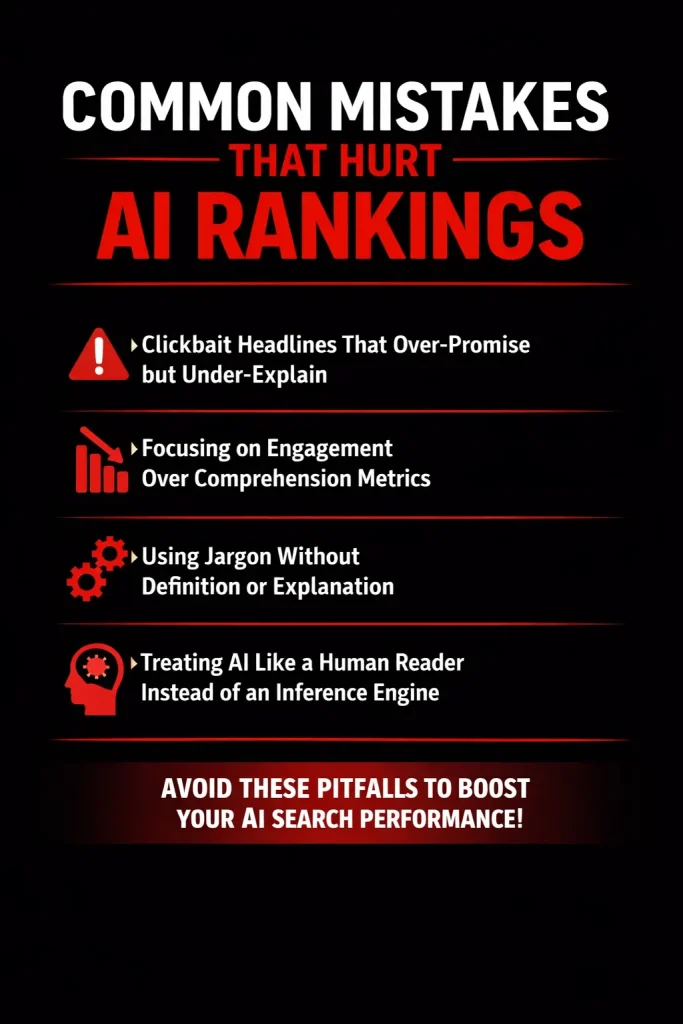

Common Mistakes That Hurt AI Rankings

As generative engines evolve, many content creators are still optimizing for human reactions alone—clicks, curiosity, and emotional pull—while ignoring how AI systems interpret and evaluate content. The result is often content that performs well socially but fails to earn sustained visibility in AI-driven search and answer engines.

Below are the most common mistakes that quietly undermine AI rankings.

1. Writing Headlines That Over-Promise but Under-Explain

Click-driven headlines are designed to provoke curiosity, not convey meaning. While this approach may increase initial clicks, it creates a disconnect between headline intent and content delivery.

Generative engines evaluate:

- Whether the headline accurately represents the content

- How quickly the core topic is clarified

- If the promised value is actually fulfilled

When headlines are vague, sensational, or metaphor-heavy, AI systems struggle to confidently associate the page with a specific informational intent. This reduces:

- Topic confidence

- Answer extractability

- Long-term visibility in AI summaries

Example problem: “Why Everything You Know About Content Is Wrong”

Why it fails: The headline lacks a clear subject, scope, or outcome—forcing the system to infer meaning rather than recognize it.

What works better: “Why Generative Engines Reward Clarity Over Clever Content”

Clarity in headlines isn’t boring—it’s a relevance signal.

2. Prioritizing Engagement Metrics Over Comprehension

High engagement does not equal high understanding.

Many creators optimize content to:

- Increase time on page

- Encourage scrolling

- Trigger emotional reactions

However, generative engines optimize for something different:

- Can this content answer a question accurately?

- Can it be summarized without distortion?

- Does it reduce ambiguity?

Content designed purely for engagement often:

- Delays explanations

- Uses narrative padding

- Buries the core idea

This creates friction for AI systems that rely on early clarity and logical progression to assess usefulness.

Key insight: AI systems reward content that explains efficiently, not content that performs theatrically.

3. Using Jargon Without Definition

Jargon assumes shared knowledge. AI systems cannot safely make that assumption.

When specialized terms are introduced without:

- Definitions

- Context

- Relationships to known concepts

…the system’s confidence in interpreting the content drops.

This impacts:

- Entity recognition

- Semantic linking

- Contextual completeness

Even when a term is widely used in an industry, generative engines favor content that defines it before expanding on it. Doing so signals expertise and improves interpretability.

Best practice:

- Introduce the term

- Define it in plain language

- Then explore its complexity

Clarity of meaning always outranks stylistic efficiency.

4. Treating AI Like a Human Reader Instead of an Inference Engine

This is the most fundamental mistake.

Human readers:

- Infer meaning from tone

- Fill in gaps intuitively

- Tolerate ambiguity

Generative engines:

- Extract meaning explicitly

- Penalize unclear relationships

- Avoid uncertain interpretations

Content written on the assumption that “the reader will get it” often:

- Leaves logic unstated

- Relies on implication

- Skips foundational explanations

For AI systems, unstated logic is missing logic.

Critical shift in mindset: You are not writing for AI—but your content must be interpretable by AI without guesswork.

Closing Insight

Most AI ranking failures are caused not by poor writing but by unclear thinking.

When headlines clarify, explanations come early, terms are defined, and ideas are logically connected, generative engines gain confidence in your content. And confidence, not cleverness, is what earns visibility.

The Future: Why Clarity Will Matter Even More

Clarity isn’t just a writing preference anymore—it’s becoming an infrastructure requirement for how information survives and spreads in an AI-first internet. As generative engines increasingly sit between creators and audiences, they’ll keep favoring content that is easy to interpret, validate, and reuse without introducing risk.

1) The rise of AI-generated answers (and the “zero-click” web)

Search is shifting from “ten blue links” to direct answers. Whether it’s an AI overview, a chat-style response, or a voice assistant, more users will get what they need without visiting your site.

That changes the game:

- You’re no longer only competing for clicks.

- You’re competing to become source material for answers.

Generative engines can’t reliably use content they can’t confidently parse. Clever, vague, or overly stylized writing creates uncertainty. Clear content—where the main point is explicit, the reasoning is transparent, and the structure is scannable—becomes easier to extract, assemble, and present as an answer.

In a zero-click world, clarity increases the odds your work is chosen as the reference behind the answer—even when the user never lands on your page.

2) Increased scrutiny of misinformation (risk management drives ranking)

Generative systems have a problem humans don’t: when they get something wrong, they can scale that wrongness instantly. So platforms are under constant pressure to reduce hallucinations, misinformation, and misattribution.

As that scrutiny rises, engines will lean harder on signals that reduce risk:

- Claims that are clearly stated (not implied)

- Content that distinguishes facts from opinions

- Nuance and limitations where needed

- Evidence, sources, and traceable logic

Clarity helps engines decide:

- What are you claiming?

- How strong is the claim?

- Is it generalizable or conditional?

- Can it be supported or cross-checked?

Clever content can be entertaining, but if it’s interpretively “slippery,” it becomes harder to verify—and that makes it less safe to elevate. In an environment where misinformation is expensive, clarity becomes a ranking advantage.

3) Preference for content that can be summarized, quoted, and reused safely

Generative engines don’t just index; they transform. They summarize, paraphrase, cite, and combine ideas from multiple sources. The content most likely to be elevated is content that can be safely repackaged.

That means three big preferences:

A) Content that can be summarized

If your article has a clear hierarchy—thesis → key points → supporting detail—an engine can compress it without losing meaning. If your argument is scattered or purely narrative, the system has to guess what matters.

Clarity creates “summary-friendly” structure:

- Strong headings

- Tight topic focus

- Clear definitions

- Explicit takeaways

B) Content that can be quoted

Generative engines love quote-worthy lines, but not in the poetic sense—in the precision sense. A good quote is a stable unit of meaning.

Clear, quotable content tends to be:

- Specific rather than grand

- Definitive where appropriate

- Carefully scoped (no exaggerated universals)

- Free of undefined jargon

A clever line might trend socially. A clear line gets reused across AI answers, snippets, and citations.

C) Content that can be reused safely

Safety is the quiet filter behind everything. “Reusable” content is content that won’t become misleading when taken out of context.

Engines favor content that includes:

- Context around claims

- Boundaries and conditions (“in these cases…”, “when X is true…”)

- Examples that anchor the concept

- Neutral, verifiable language

The more your content can travel without breaking, the more likely it is to be selected and amplified.

Prediction: The clearer your thinking, the more visible your content becomes

This is the real shift: visibility is no longer just about publishing more or being more clever. It’s about being more understandable.

In the future, content will increasingly be judged by how well it functions as reliable input for AI systems:

- Can it be understood quickly?

- Can it be validated?

- Can it be safely reused?

Clarity isn’t boring. It’s how your ideas become portable—and in an AI-mediated internet, portability is reach.

Conclusion: Clarity Is the New Competitive Advantage

As generative engines increasingly mediate how information is discovered, ranked, and reused, one truth becomes unavoidable: they don’t reward cleverness—they reward understanding. Witty phrasing, stylistic flourishes, and rhetorical complexity may impress human readers momentarily, but for AI-driven systems, they often introduce ambiguity rather than value. What consistently performs well is content that communicates ideas with precision, structure, and intent.

This reality calls for a fundamental mindset shift in how we create content. The guiding question is no longer “How do I sound smart or creative?” but rather “How clearly do I explain this so it can be understood, trusted, and applied?” Clarity signals confidence. Explanation signals expertise. Context signals depth. Together, they form the language generative engines are designed to recognize and reward.

In an AI-driven search world, clarity isn’t boring. It’s powerful. It is the competitive advantage that turns content into knowledge, visibility into authority, and ideas into lasting impact.