
Why Algorithms Trust Architected Brands More Than Optimized Pages

The Silent Failure of Modern SEO

There is an uncomfortable truth that most SEO agencies rarely acknowledge: the majority of SEO “wins” are temporary. Rankings spike, traffic graphs rise, reports look impressive—and then, quietly, everything starts slipping. A core update rolls out, competitors adapt, search behavior shifts, and what once looked like a success story becomes another case of diminishing returns. This is not because SEO no longer works. It’s because most SEO strategies were never designed to last.

Modern SEO has created an illusion of progress by equating rankings with success. Pages rank, keywords move up, impressions increase—but visibility is mistaken for trust. Rankings are a surface-level outcome, not a durable asset. When algorithms change, fragile strategies are exposed almost instantly. Sites that were “optimized” fall apart because they were never structurally sound to begin with. They were tuned for a moment in time, not built for evolution.

At the heart of this problem lies a short-term, project-based mindset. SEO is often treated like a campaign: a defined start date, a checklist of actions, and a promise of results within a few months. Pages are optimized one by one. Keywords are assigned mechanically. KPIs are chased aggressively—traffic, rankings, clicks—without asking a deeper question: What system are we building underneath these metrics? In most cases, the answer is none. Optimization happens in isolation, disconnected from brand, user behavior, and long-term authority.

This mindset no longer aligns with how search engines actually work. Search engines no longer “rank pages” in the traditional sense—they evaluate systems. Modern algorithms are not static rulebooks waiting to be gamed; they are learning entities. They observe patterns over time. They analyze consistency, behavior, relationships, and trust signals across an entire digital presence. In this environment, trust cannot be forced through tactics. It cannot be switched on with better keywords or more links. Trust is not optimized. It is accumulated.

This is where ThatWare draws a clear line. ThatWare refuses to chase algorithms because chasing assumes the algorithm is always ahead and the brand is always reacting. Instead, ThatWare designs environments where algorithms naturally evolve in the brand’s favor. The focus shifts from short-lived optimizations to long-term ecosystem engineering—where content, brand signals, user journeys, and entity authority work together as a coherent system.

The transition is fundamental. It is a move away from treating SEO as a deliverable and toward designing search ecosystems as permanent assets. In a world where algorithms learn continuously, the brands that win are not the ones that optimize harder—but the ones that build systems worthy of trust.

Why SEO Campaigns Are Structurally Flawed

The fundamental flaw in most SEO campaigns is not execution—it is the way they are conceptualized. Traditional SEO operates on linear thinking: a clear input is applied with the expectation of a predictable output. Choose the right keywords, create content around them, build backlinks, and rankings should follow. For years, this model appeared to work, reinforcing the belief that SEO is a controllable, step-by-step process. But this linear framework no longer reflects how modern search ecosystems function.

Search today is not a straight line from keyword to ranking. It is a dynamic, multi-dimensional system where hundreds of signals interact simultaneously. Content does not exist in isolation. Backlinks are no longer simple votes. User behavior feeds back into algorithmic learning loops. Brand perception shapes how much trust a site earns. Yet many SEO campaigns still operate as if search engines were static machines waiting to be fed the right inputs. This mismatch between reality and strategy is why so many campaigns fail to produce durable results.

At the core of this failure is an outdated cause-and-effect mindset. SEO teams still assume that if they control enough individual variables—on-page optimization, link volume, content frequency—they can control outcomes. In reality, algorithms are no longer reacting; they are learning. They continuously observe patterns across time, measuring consistency, satisfaction, and reliability. Rankings are not the result of a single action but the byproduct of long-term systemic signals.

This shift became inevitable as search engines evolved from rule-based systems to learning systems. Early algorithms relied heavily on explicit rules: match keywords, count links, reward optimization. Modern algorithms operate through machine learning feedback loops. They analyze how users interact with content, how brands are referenced across the web, how entities relate to each other, and how these signals behave over months and years. Behavioral reinforcement plays a central role. If users consistently trust a brand, engage deeply, and return repeatedly, algorithms learn to reinforce that outcome.

Long-term pattern recognition is what separates durable visibility from temporary rankings. Algorithms are no longer impressed by isolated spikes in performance. They look for stability. They look for coherence. They look for signals that persist even when surface-level variables change. SEO campaigns, by design, struggle to produce these patterns because they are optimized for short-term movement, not long-term memory.

This is where most brands fall into the volatility trap. SEO campaigns are often built around exploiting current ranking factors—what works right now. Pages are over-optimized. Link strategies are scaled aggressively. Content is produced to satisfy algorithms rather than users. Initially, results appear. Then an update arrives. Suddenly, the same tactics that drove growth become liabilities. Sites drop not because they violated guidelines, but because they were overfitted to a moment in time.

Overfitting is a familiar concept in machine learning: a model fits its training data so closely that it performs poorly on data it has not seen. The same failure maps closely onto SEO. When a site is optimized too precisely for known ranking factors, it loses adaptability. As algorithms mature, those factors are reweighted, contextualized, or replaced. Tactics that once worked begin to decay. The site has no underlying system to support it, no ecosystem to absorb change. What remains is fragility disguised as performance.

The key insight is simple but transformative: campaigns expire, ecosystems compound. A campaign ends when the tactics stop working. An ecosystem grows stronger with time. While campaigns chase rankings, ecosystems build trust. While campaigns react to updates, ecosystems benefit from them. In a learning-based search environment, only systems designed for evolution can survive—and only ecosystems can turn trust into a permanent competitive advantage.

What Is a Search Ecosystem?

To understand why traditional SEO is failing, we must first redefine what search success actually looks like today. A search ecosystem is not a collection of optimized pages or a bundle of SEO tasks. It is a living, interconnected system designed to be understood, trusted, and reinforced by algorithms over time.

At its core, a search ecosystem is a network of content, signals, entities, and behaviors that operate together rather than in isolation. Content does not exist as standalone blog posts—it functions as structured knowledge. Brand signals do not appear randomly—they reinforce identity and credibility. User behaviors are not accidental—they reflect intentional journey design. Each element feeds the others, creating a system where trust compounds instead of resetting with every update.

Unlike traditional SEO strategies, a search ecosystem continuously reinforces trust signals. Algorithms observe consistency across time, platforms, and interactions. When content aligns with brand identity, when users behave predictably and positively, and when entities are clearly defined, search engines reduce uncertainty. This reduction of uncertainty is critical, because modern algorithms reward reliability far more than short-term performance spikes. Most importantly, a true search ecosystem is independent of individual ranking factors or single algorithm updates. It does not rely on exploiting a loophole; it benefits from alignment with how search engines are built to learn.

How Search Engines Interpret Ecosystems

Search engines no longer interpret websites as disconnected URLs. They interpret entities. Entity recognition allows algorithms to understand who you are, what you are known for, and how you relate to other concepts, brands, and topics. In a well-designed ecosystem, the brand becomes an identifiable entity with clear topical boundaries and authority.

Consistency across surfaces further reinforces this understanding. Search engines observe how a brand appears across its website, content platforms, mentions, citations, and user interactions. When messaging, tone, expertise, and intent remain coherent, algorithms gain confidence. Inconsistent branding, scattered content, or contradictory signals introduce doubt—and doubt suppresses trust.

Predictability and reliability also play a crucial role. Algorithms favor environments where user satisfaction outcomes are consistent. If users regularly engage, stay, return, and convert, the system learns that the brand delivers dependable value. Over time, this predictability becomes a powerful ranking advantage that no single optimization tactic can replicate.

Search Ecosystem vs. SEO Campaign

The contrast between a traditional SEO campaign and a search ecosystem is stark:

| SEO Campaign | Search Ecosystem |
|---|---|
| Page-based | Entity-based |
| Short-term | Compounding |
| Reactive | Self-reinforcing |
| Keyword-driven | Intent-driven |
| Fragile | Resilient |

SEO campaigns react to algorithm changes. Ecosystems absorb them. Campaigns focus on pages; ecosystems build identity. Campaigns decay when tactics stop; ecosystems continue to grow even in silence.

Why Ecosystems Align With How Algorithms Actually Learn

Modern algorithms learn longitudinally. They do not reward isolated wins—they reward patterns. Search ecosystems create stable, reinforcing patterns that algorithms can trust over time. This alignment is why ecosystems outperform campaigns, not just in rankings, but in durability, visibility, and long-term growth.

Pillar 1: Architecting Content as an Intelligence Layer

Most brands still treat content like a warehouse of “assets”—blogs, landing pages, case studies, PDFs—each created for a campaign, a keyword cluster, or a quarterly target. The problem is that algorithms no longer interpret content as a collection of isolated pages. They interpret it as a continuous stream of signals. In other words: content isn’t just marketing material anymore. It’s training data.

1) Content is no longer “assets” — it’s training data

Modern search systems consume content the way a learning model consumes examples: looking for patterns, consistency, and reinforcement over time. They’re not only reading what you publish; they’re learning what you consistently stand for.

Algorithms evaluate:

- What topics you return to repeatedly

- How consistently you define concepts

- Whether your explanations match user intent

- How your content connects across pages

- What your audience does after consuming it

This changes the role of content from “something you post” to “something that teaches.” Publishing is output. Teaching is structure. Publishing is scattered. Teaching is cumulative.

A brand that publishes randomly trains confusion. A brand that teaches systematically trains trust. That is the difference between writing content and building a content intelligence layer.

2) Topical depth vs topical coverage

Most SEO content strategies are built on coverage: “Let’s create 50 articles around this topic.” On paper, that sounds like authority. In reality, it often produces thin, repetitive posts—surface-level explanations that mimic competitor pages and add little new understanding.

Algorithms have become extremely good at detecting this. Surface-level content fails because it signals:

- Low informational originality

- Weak topical mastery

- Shallow intent satisfaction

- High probability of user dissatisfaction

Instead, search engines reward topical depth: content that makes the algorithm confident that your site is not just mentioning a topic, but understanding it.

This is where semantic completeness matters. Semantic completeness means your content answers not only the primary query, but the surrounding conceptual questions a user would naturally have. It explains relationships, trade-offs, edge cases, examples, and implications. It reduces uncertainty. It resolves confusion. It behaves like a true knowledge resource.

And this is why topic authority can be viewed as a probability model: the algorithm is constantly estimating the likelihood that your brand is a trustworthy source on a topic. That likelihood increases when your content demonstrates depth, consistency, and clarity across an ecosystem—not when you merely “cover” a keyword.
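To make the "probability model" framing concrete, here is a toy sketch. It is an analogy only, not a description of how any search engine scores sources: it assumes each user interaction on a topic can be reduced to a satisfied or unsatisfied outcome, and it uses a Beta-Bernoulli update, a standard way to estimate a probability from accumulating evidence. The class name, the uniform prior, and the interaction counts are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class TopicTrust:
    """Toy Beta-Bernoulli estimate of how likely a source is to satisfy users on one topic.

    Illustrative analogy only; this is not how any search engine scores sources.
    """

    alpha: float = 1.0  # prior pseudo-count of satisfying outcomes (uniform prior)
    beta: float = 1.0  # prior pseudo-count of unsatisfying outcomes

    def observe(self, satisfied: bool) -> None:
        # Each observed outcome updates the posterior; evidence accumulates over time.
        if satisfied:
            self.alpha += 1
        else:
            self.beta += 1

    @property
    def mean(self) -> float:
        # Posterior mean: the current best estimate of the probability.
        return self.alpha / (self.alpha + self.beta)

    @property
    def variance(self) -> float:
        # Posterior variance: shrinks as consistent evidence accumulates.
        total = self.alpha + self.beta
        return (self.alpha * self.beta) / (total ** 2 * (total + 1))


deep = TopicTrust()  # many interactions across a deep topic cluster (hypothetical)
for satisfied in [True] * 45 + [False] * 5:
    deep.observe(satisfied)

shallow = TopicTrust()  # a handful of interactions on a thin page (hypothetical)
for satisfied in [True] * 9 + [False] * 1:
    shallow.observe(satisfied)

print(f"deep:    mean={deep.mean:.3f} variance={deep.variance:.4f}")
print(f"shallow: mean={shallow.mean:.3f} variance={shallow.variance:.4f}")
```

Both sources satisfy 90% of observed interactions, yet the one backed by more consistent evidence ends with a higher estimate (about 0.885 vs 0.833) and a variance roughly five times smaller. That is the intuition behind "depth and consistency raise the likelihood": more evidence means less uncertainty.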

3) Content architecture principles

If content is training data, the next question is: training data for what? For the algorithm to learn who you are, what you know, and what you should be trusted for.

That requires architecture—not just production.

A strong content ecosystem is built on three principles:

a) Core entities vs supporting entities

Every brand has core entities—your primary services, frameworks, solutions, and concepts. Supporting entities are the subtopics that reinforce those core entities: use cases, methodologies, comparisons, FAQs, risks, tools, and outcomes.

Most brands make the mistake of writing supporting topics without establishing the core entity authority first. The result is scattered content that never consolidates into a clear identity.

Content ecosystems should flow like this:

- Establish core entities (pillar hubs)

- Expand with supporting entities (clusters)

- Reinforce connections between them (internal linking + narrative alignment)
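A minimal sketch of how that flow could be checked on an existing site, assuming you already have a map of which URLs are pillar hubs, which cluster pages belong to each pillar, and which internal links exist. The URLs and the dictionary layout are hypothetical. The check flags clusters that do not link up to their pillar and pillars that do not link down to their clusters.

```python
# Hypothetical site map: pillar hub -> its supporting cluster pages.
pillars = {
    "/ai-seo/": ["/ai-seo/entity-optimization/", "/ai-seo/semantic-search/"],
    "/technical-seo/": ["/technical-seo/core-web-vitals/", "/technical-seo/crawl-budget/"],
}

# Hypothetical internal links: page -> set of pages it links to.
links = {
    "/ai-seo/": {"/ai-seo/entity-optimization/", "/ai-seo/semantic-search/"},
    "/ai-seo/entity-optimization/": {"/ai-seo/"},
    "/ai-seo/semantic-search/": set(),  # missing link back to its pillar
    "/technical-seo/": {"/technical-seo/core-web-vitals/"},  # missing one cluster
    "/technical-seo/core-web-vitals/": {"/technical-seo/"},
    "/technical-seo/crawl-budget/": {"/technical-seo/"},
}


def audit_hub_and_spoke(pillars, links):
    """Return (missing_up, missing_down) link problems in a pillar/cluster model."""
    missing_up = []  # cluster pages that do not link back to their pillar
    missing_down = []  # pillar pages that do not link to one of their clusters
    for pillar, clusters in pillars.items():
        for cluster in clusters:
            if pillar not in links.get(cluster, set()):
                missing_up.append((cluster, pillar))
            if cluster not in links.get(pillar, set()):
                missing_down.append((pillar, cluster))
    return missing_up, missing_down


up, down = audit_hub_and_spoke(pillars, links)
print("Clusters not linking to their pillar:", up)
print("Pillars not linking to a cluster:", down)
```

In practice the `links` map would come from a site crawl; the audit logic stays the same regardless of how it is collected.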

b) Knowledge graphs, not blog calendars

A blog calendar asks: “What should we publish next week?”

A knowledge graph asks: “What does our ecosystem need to become undeniable?”

Calendars optimize for time. Knowledge graphs optimize for meaning.

When you design content like a knowledge graph, you’re mapping:

- What concepts connect to what

- Which pages should act as anchors

- Where the gaps are in your topical model

- How users and algorithms will traverse your information

This is how you move from “more content” to “structured authority.”
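The mapping exercise can be sketched as a small graph problem. Assuming you model concepts as nodes, the relationships you intend to make explicit as edges, and record which concepts already have a published page, two questions fall out directly: which concepts have no page yet (gaps), and which published pages cannot be reached from your anchor pages by following those relationships. All concept names below are hypothetical.

```python
from collections import deque

# Hypothetical topical model: concept -> related concepts it should connect to.
topic_model = {
    "search ecosystems": ["entity authority", "brand signals", "user journeys"],
    "entity authority": ["structured data", "knowledge graphs"],
    "brand signals": ["co-occurrence", "citations"],
    "user journeys": ["pogo-sticking"],
    "structured data": [],
    "knowledge graphs": [],
    "co-occurrence": [],
    "citations": [],
    "pogo-sticking": [],
}

# Concepts that currently have a published page (hypothetical).
published = {"search ecosystems", "entity authority", "brand signals",
             "structured data", "co-occurrence", "pogo-sticking"}

anchors = ["search ecosystems"]  # pages meant to act as entry points


def reachable_from(anchors, graph, allowed):
    """Breadth-first traversal that only steps through published concepts."""
    queue = deque(a for a in anchors if a in allowed)
    seen = set(queue)
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, []):
            if neighbor in allowed and neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen


gaps = sorted(set(topic_model) - published)
stranded = sorted(published - reachable_from(anchors, topic_model, published))
print("Gaps (no page yet):", gaps)
print("Published but unreachable from anchors:", stranded)
```

One missing page ("user journeys") leaves the published "pogo-sticking" page stranded: a gap in the topical model is also a gap in how users and crawlers traverse it.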

c) Internal narrative consistency

Algorithms notice contradictions and fragmentation. If one page says your approach is “data-driven automation” and another says it’s “human-first creativity,” and a third doesn’t define the approach at all, the system becomes incoherent.

Consistency doesn’t mean repeating the same words. It means maintaining a stable identity:

- Consistent definitions

- Consistent framing

- Consistent positioning

- Consistent promises and outcomes

A coherent narrative across content is one of the strongest signals of brand trust.

4) Content velocity and predictability

A major misconception in SEO is that publishing more frequently is always better. In reality, consistency beats intensity.

Algorithms are learning systems. They reward predictable signal patterns. When a brand publishes in bursts—20 articles in a month, then silence for three months—it trains instability. When a brand publishes steadily, it trains reliability.

This is why content velocity should be engineered for predictability, not volume. The goal is not to flood the index. The goal is to maintain signal stability over time.

Signal stability matters because it suggests:

- The brand is actively maintaining its knowledge

- The ecosystem is alive, not abandoned

- The information is being updated and reinforced

- The brand is consistently invested in user satisfaction

Frequency hacks might spike short-term visibility. But stable velocity builds long-term trust.
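One simple way to quantify signal stability in your own publishing is the coefficient of variation (standard deviation divided by mean) of the gaps between publish dates: lower values mean a steadier cadence. This is an internal diagnostic assumed for illustration, not a metric search engines are known to compute, and the dates are hypothetical.

```python
from datetime import date
from statistics import mean, pstdev


def cadence_cv(publish_dates):
    """Coefficient of variation of day gaps between consecutive posts.

    0.0 means perfectly regular; higher values mean burstier publishing.
    """
    ordered = sorted(publish_dates)
    gaps = [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]
    if len(gaps) < 2:
        raise ValueError("need at least three publish dates")
    average = mean(gaps)
    if average == 0:
        raise ValueError("all posts share one date; cadence is undefined")
    return pstdev(gaps) / average


# Steady: one post every 14 days.
steady = [date(2024, 1, 1), date(2024, 1, 15), date(2024, 1, 29),
          date(2024, 2, 12), date(2024, 2, 26)]

# Bursty: four posts in four days, then almost three months of silence.
bursty = [date(2024, 1, 1), date(2024, 1, 2), date(2024, 1, 3),
          date(2024, 1, 4), date(2024, 4, 1)]

print(round(cadence_cv(steady), 2))  # 0.0
print(round(cadence_cv(bursty), 2))
```

The bursty schedule publishes the same number of posts but scores well above 1, which mirrors the "20 articles, then silence" pattern described above.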

5) The ThatWare approach to content ecosystems

ThatWare does not build content like a set of articles. ThatWare builds content like an intelligence system.

Instead of asking: “What should we rank for?”

ThatWare asks: “What should the algorithm learn about us?”

This shift changes everything.

ThatWare designs content ecosystems that compound authority by:

- Defining core entities and constructing pillar intelligence hubs

- Building supporting clusters that deepen semantic completeness

- Aligning every piece with a consistent internal narrative

- Maintaining stable velocity to train long-term reliability

- Designing content pathways that guide both users and algorithms

The result is a transition from “publishing content” to building topical intelligence hubs—central resources that continuously expand, reinforce, and strengthen the brand’s position in the algorithm’s memory.

Because when content becomes an intelligence layer, the brand stops chasing rankings—and starts shaping the environment that produces them.

That’s the difference between SEO campaigns and search ecosystems.

One tries to win this month. The other builds assets that keep winning—even when algorithms evolve.

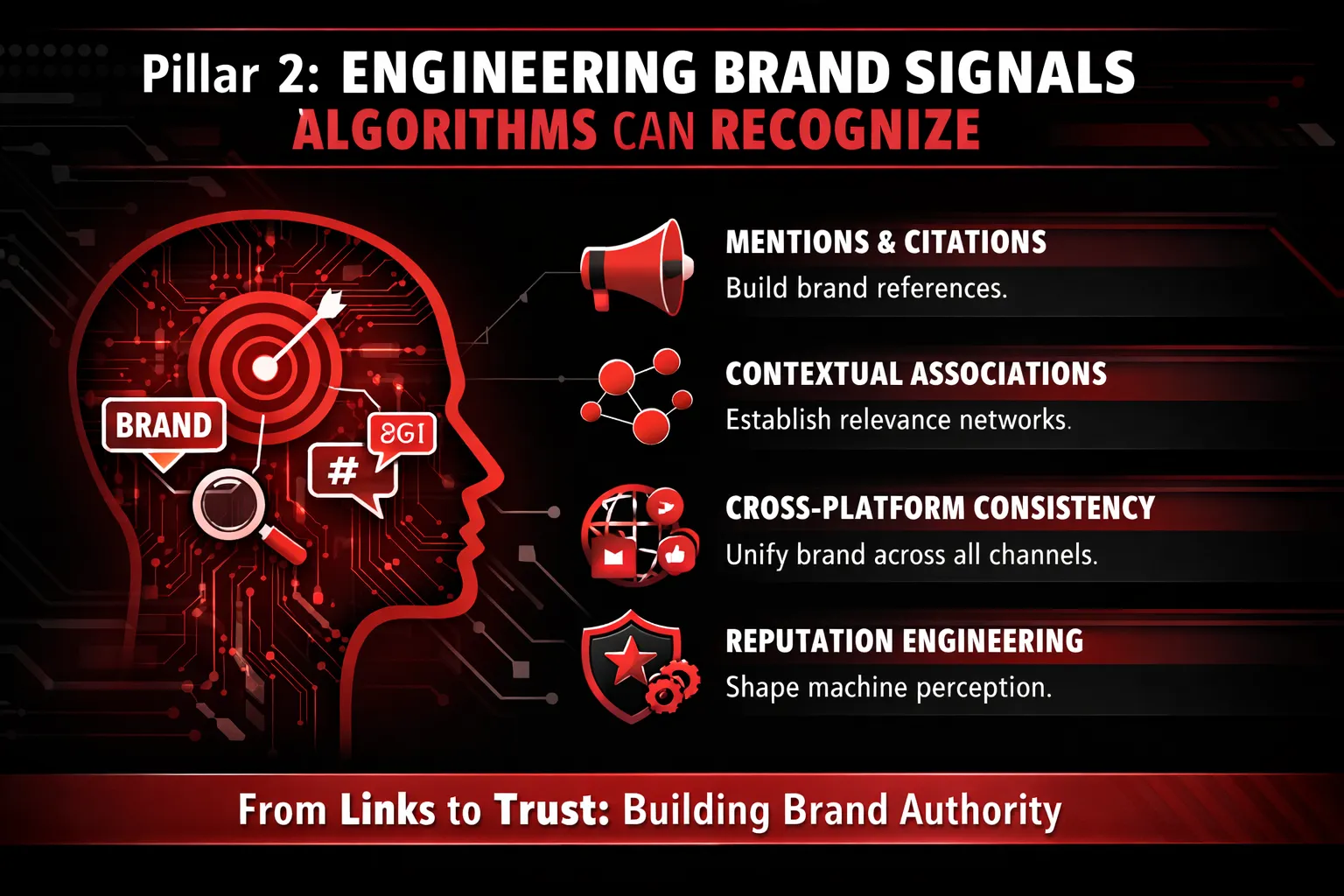

Pillar 2: Engineering Brand Signals Algorithms Can Recognize

Most brands think “branding” is what humans feel—logos, tone, colors, taglines, aesthetics. But search algorithms don’t experience your brand the way people do. They don’t see your design. They don’t feel your messaging. They don’t interpret your intent the way a customer might.

Algorithms infer branding.

And they infer it the same way they infer credibility, authority, and trust: through patterns.

1) Algorithms don’t “see” branding — they infer it

Search engines build a probabilistic understanding of who you are by tracking how you show up across the web. Not through your visuals—but through mentions, associations, and co-occurrence.

- Mentions tell algorithms you exist and are being referenced.

- Associations tell algorithms what category you belong to.

- Co-occurrence tells algorithms who you are frequently discussed with—and what topics repeatedly surround your name.

If your brand name repeatedly appears next to specific concepts (“AI SEO,” “performance marketing,” “search ecosystems”), algorithms begin forming a stable identity model around you. If your brand name appears inconsistently across unrelated contexts, that identity becomes noisy and unreliable.
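To make co-occurrence concrete, here is a minimal sketch that counts, across text snippets, which topic phrases appear in the same snippet as a brand name. Real systems operate at far larger scale and use entity linking rather than plain string matching; the brand name "Acme Analytics", the snippets, and the topic list are hypothetical.

```python
import re
from collections import Counter


def brand_cooccurrence(snippets, brand, topics):
    """Count how often each topic phrase appears in the same snippet as the brand."""
    brand_pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    counts = Counter()
    for text in snippets:
        if not brand_pattern.search(text):
            continue  # only snippets that actually mention the brand count
        lowered = text.lower()
        for topic in topics:
            if topic.lower() in lowered:
                counts[topic] += 1
    return counts


snippets = [
    "Acme Analytics shared a framework for search ecosystems at the summit.",
    "A review of AI SEO tools, including Acme Analytics.",
    "Acme Analytics on search ecosystems and entity authority.",
    "Unrelated post about performance marketing budgets.",
]
topics = ["search ecosystems", "AI SEO", "performance marketing", "entity authority"]

counts = brand_cooccurrence(snippets, "Acme Analytics", topics)
print(counts.most_common())
```

"Performance marketing" scores zero because it only appears in a snippet that never mentions the brand: proximity, not mere presence on the web, is what builds the association.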

This is why branded search has quietly become one of the strongest trust accelerators. When people search for your brand name directly, it signals something that algorithms deeply value: intent with specificity. It suggests recognition, recall, and demand. And in a world where search engines are optimizing for user satisfaction and reduced risk, brands with growing branded search demand often get rewarded—not because of vanity, but because brand demand reduces uncertainty.

In other words: when the market “pulls” for you, algorithms listen.

2) Brand signals beyond backlinks

For years, SEO trained the world to believe backlinks are the currency of authority. But as algorithms matured, they expanded their understanding of credibility far beyond link graphs.

Today, brand strength is reinforced through signals that don’t always look like traditional SEO assets:

- Citations: Your brand referenced in industry lists, reports, directories, podcasts, webinars, event pages, community roundups, and case studies—even without a clickable link.

- Contextual mentions: Mentions surrounded by meaning—where the text clearly explains what you do, who you help, and why you matter.

- Platform consistency: Uniform brand identity across your website, LinkedIn, YouTube, Medium, PR placements, Google Business Profile, and other third-party profiles.

A link without context can be weak. A mention with strong context can be powerful.

Because algorithms are not just counting links anymore—they’re interpreting relationships.

3) Cross-surface brand reinforcement

Modern search doesn’t happen only on Google. Discovery is fragmented across surfaces: social platforms, YouTube, Reddit, newsletters, podcasts, AI assistants, marketplaces, and communities. But what’s interesting is this: even when discovery happens elsewhere, algorithms still learn from it.

That’s why brand signals must be reinforced across surfaces:

- Search: Your website, SERP presence, structured data, entity clarity

- Social: Consistent positioning, expertise proof, community signals

- Content platforms: Repeated topical authority, contextual brand references

- PR: Third-party validation, association with credible entities

When these surfaces reinforce the same narrative, algorithms receive a clear message: this brand is stable, predictable, and trustworthy.

But when branding is fragmented—different messaging on different platforms, inconsistent naming, vague positioning, conflicting claims—algorithms get confused. And confusion is costly.

Because algorithmic trust isn’t just about authority. It’s also about risk management. Search engines are constantly asking: If we send users to this brand, will they be satisfied? Is this a reliable choice?

Fragmentation increases uncertainty. And uncertainty reduces reward.
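On the search surface, one concrete way to declare "this is the same brand everywhere" is schema.org `Organization` markup in JSON-LD, where the `sameAs` property lists the brand's official profiles on other platforms. The sketch below assembles such a block in Python. Every name and URL is a placeholder, and the markup should be read as an aid to entity disambiguation, not as a guaranteed ranking lever.

```python
import json

# Placeholder brand details; replace with your real, consistent values.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Brand",
    "url": "https://www.example.com/",
    "logo": "https://www.example.com/logo.png",
    "description": "Example Brand designs search ecosystems for B2B companies.",
    # sameAs ties the website entity to the brand's profiles on other surfaces.
    "sameAs": [
        "https://www.linkedin.com/company/example-brand",
        "https://www.youtube.com/@examplebrand",
        "https://medium.com/@examplebrand",
    ],
}

# Wrap the JSON in the script tag that goes into the page's HTML.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(organization, indent=2)
    + "\n</script>"
)
print(script_tag)
```

The same name and description should appear on each profile listed in `sameAs`; the markup reinforces consistency but cannot substitute for it.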

4) Reputation engineering vs reputation management

Most brands practice reputation management: reacting to what exists. Fixing what looks bad. Responding to reviews. Publishing PR when needed.

But the brands that win long-term practice something more strategic: reputation engineering.

Reputation engineering means deliberately designing how the brand appears in machine memory.

It includes:

- Entity clarity: The algorithm knows exactly what you are, what you do, and what category you belong to.

- Signal coherence: Your brand’s topics, claims, and associations align everywhere—no contradictions, no identity drift.

- Narrative repetition: The same core positioning shows up across content, mentions, and profiles until it becomes statistically undeniable.

This is how brands become “trusted defaults.” Not because they look polished—but because their presence is structurally consistent.
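Signal coherence can be audited before it is engineered. A minimal sketch, assuming you have collected the brand name and a one-line category description as they appear on each surface (the platforms and values below are hypothetical): normalize each field and report any surface that drifts from the majority version.

```python
from collections import Counter

# Hypothetical snapshot of how the brand is presented on each surface.
profiles = {
    "website": {"name": "Example Brand", "category": "AI-driven SEO agency"},
    "linkedin": {"name": "Example Brand", "category": "AI-driven SEO agency"},
    "youtube": {"name": "ExampleBrand Official", "category": "AI-driven SEO agency"},
    "directory": {"name": "Example Brand", "category": "Web design studio"},
}


def normalize(value):
    # Ignore case and extra whitespace so only real differences are flagged.
    return " ".join(value.lower().split())


def find_drift(profiles, field):
    """Return surfaces whose value for `field` differs from the majority value."""
    values = {surface: normalize(data[field]) for surface, data in profiles.items()}
    canonical, _ = Counter(values.values()).most_common(1)[0]
    return sorted(surface for surface, value in values.items() if value != canonical)


for field in ("name", "category"):
    print(field, "drift:", find_drift(profiles, field))
```

Using the majority value as the reference is a simplifying assumption; in practice you would compare against the positioning you have deliberately chosen.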

5) Why ThatWare focuses on brand gravity, not link velocity

Traditional SEO often obsesses over link velocity—how fast you can acquire backlinks. It’s a numbers game. And like most numbers games, it can produce short-term results that don’t hold.

ThatWare plays a different game: brand gravity.

Brand gravity is the force that pulls:

- mentions toward you,

- associations toward you,

- and demand toward you.

It’s what happens when your positioning becomes sharp, your content becomes unavoidable, and your ecosystem becomes coherent enough that algorithms don’t need to “guess” who you are.

ThatWare doesn’t optimize for algorithms. ThatWare designs ecosystems where algorithms evolve in our favor. And in that ecosystem, links are not the strategy—they’re a side effect of being consistently recognized, repeatedly referenced, and structurally trusted.

Because when your brand becomes gravity, you don’t chase authority. Authority starts coming to you.

Pillar 3: Designing User Journeys That Train Algorithms

If you still think SEO is mainly about keywords, metadata, and backlinks, you’re optimizing for an older internet. Modern search is powered by systems that learn. And the loudest teacher in that learning system isn’t your content—it’s your users.

Search engines don’t just read pages anymore. They watch what happens after the click. They observe how real people behave, how long they stay, whether they return, whether they continue searching, and whether they show signs of satisfaction. In other words: user journeys have become the training ground where algorithms decide who deserves trust.

1) User Behavior Is Algorithm Feedback

Every click is a signal, but the click alone is not the verdict. The algorithm is tracking what follows:

- Clicks: Did the user choose you when other options existed?

- Dwell time: Did they stay long enough to suggest value, or bounce immediately?

- Return behavior: Did they come back to your site later, search your brand again, or revisit deeper pages?

- Next-step behavior: Did they continue exploring, or did they hit “back” and choose another result?

These behaviors form what you can call satisfaction loops—a chain of actions that indicates whether the search intent was actually fulfilled. When users consistently find what they need with you (and don’t need to keep hunting elsewhere), the algorithm learns a simple lesson:

“This brand resolves intent reliably.”

Over time, that reliability becomes a trust advantage. Not because you told the algorithm you’re credible, but because thousands of user journeys proved it.

2) Why UX Is Now an SEO Primitive

For years, UX was treated as separate from SEO—nice to have, but not essential. Today, UX isn’t decoration. UX is infrastructure. It directly shapes the behavioral signals the algorithm sees.

Yes, Core Web Vitals matter. But they’re just the baseline. Most brands are chasing speed and layout metrics while ignoring the bigger reality:

SEO is now driven by intent satisfaction modeling.

That means the engine is evaluating:

- Did the page answer the user’s real question quickly?

- Did it reduce uncertainty or increase clarity?

- Did it guide the user to the next logical step?

- Did the user feel done after consuming the content?

A page can load fast and still fail search. A page can be technically perfect and still produce confusion, friction, or disappointment. And those outcomes create behavioral patterns—fast exits, pogo-sticking, repeated searches—that tell algorithms your result didn’t resolve intent.

That’s why UX has become an SEO primitive: because it determines whether your content is a dead-end or a pathway.

3) Journey-Based Architecture: From Awareness → Trust → Conversion

Most SEO is built like a library: thousands of pages, scattered, optimized independently, each trying to rank for something. But search ecosystems aren’t libraries. They’re guided experiences.

A search ecosystem is designed like a journey:

- Awareness: The user discovers you for the first time through a broad, curiosity-driven query.

- Trust: The user evaluates whether you are credible, different, and worth listening to.

- Conversion: The user takes a meaningful action—subscribe, book a call, request a quote, start a trial, buy.

The mistake most brands make is trying to convert at the awareness stage. They rank a blog post and immediately push a CTA like “Buy now” or “Schedule a call.” The user isn’t ready. They bounce. The algorithm sees rejection patterns.

Instead, ecosystems use content sequencing, not isolated pages.

Sequencing means every piece of content has a job:

- This page answers the initial question.

- That page deepens the understanding.

- Another page resolves objections.

- Another page proves credibility with examples.

- Another page makes action feel safe and logical.

It’s not just internal linking for SEO. It’s journey design for behavioral outcomes.

When you architect content as a path, you don’t just increase pages per session—you increase confidence per session. And confidence is the true driver of conversion and algorithmic trust.
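The sequencing idea can be expressed as a simple rule. The sketch below is hypothetical throughout: it tags each page with a journey stage and a primary call to action, then flags pages whose CTA asks for a commitment more than one stage ahead of the page, such as a "book a call" button on an awareness article.

```python
from enum import IntEnum


class Stage(IntEnum):
    AWARENESS = 1
    TRUST = 2
    CONVERSION = 3


# Hypothetical mapping from CTA type to the stage it belongs to.
CTA_STAGE = {
    "read_next_guide": Stage.AWARENESS,
    "view_case_study": Stage.TRUST,
    "subscribe": Stage.TRUST,
    "book_call": Stage.CONVERSION,
    "buy_now": Stage.CONVERSION,
}

# Hypothetical pages: (url, page stage, primary CTA).
pages = [
    ("/blog/what-is-a-search-ecosystem/", Stage.AWARENESS, "read_next_guide"),
    ("/blog/entity-seo-basics/", Stage.AWARENESS, "book_call"),
    ("/case-studies/saas-growth/", Stage.TRUST, "book_call"),
    ("/pricing/", Stage.CONVERSION, "book_call"),
]


def premature_ctas(pages, max_jump=1):
    """Flag pages whose CTA is more than `max_jump` stages ahead of the page."""
    flagged = []
    for url, stage, cta in pages:
        if CTA_STAGE[cta] - stage > max_jump:
            flagged.append((url, stage.name, cta))
    return flagged


print(premature_ctas(pages))
```

Allowing a one-stage jump lets trust-stage pages such as case studies invite a call, while awareness articles are steered toward the next piece of content instead.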

4) Behavioral Consistency as Trust Currency

Search engines don’t need you to be perfect. They need you to be predictably satisfying.

That’s why behavioral consistency has become trust currency. When a brand consistently produces:

- Lower bounce rates (because intent is fulfilled)

- Lower pogo-sticking (because users don’t keep hunting)

- Higher depth (because users naturally continue)

- Higher return visits (because trust is formed)

…the algorithm builds confidence that showing your result is a low-risk decision. It’s essentially saying:

“If I send users here, they’ll likely be satisfied.”

The enemy of this trust is pogo-sticking—when users click your result, don’t find what they want, go back, and choose someone else. Pogo-sticking is not just a UX issue. It’s an SEO decay signal. It tells the algorithm your page looked relevant but didn’t deliver value.
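Search engines do not publish how they measure this, but you can approximate the pattern in your own data. A minimal sketch, assuming a hypothetical log of search-landing sessions that records seconds on site and whether the visitor went back to the results page (in practice usually only approximated, for example from very short single-page sessions): count a quick return as a pogo-stick and compute the rate per landing page. The 10-second threshold is an arbitrary illustrative choice.

```python
from collections import defaultdict

# Hypothetical search-landing sessions: (landing page, seconds on site, went back to SERP?)
sessions = [
    ("/guide/entity-seo/", 240, False),
    ("/guide/entity-seo/", 8, True),
    ("/guide/entity-seo/", 180, False),
    ("/blog/seo-tips/", 5, True),
    ("/blog/seo-tips/", 6, True),
    ("/blog/seo-tips/", 95, False),
]

QUICK_RETURN_SECONDS = 10  # illustrative threshold, not an industry standard


def pogo_rate_by_page(sessions, threshold=QUICK_RETURN_SECONDS):
    """Share of sessions per landing page that went back to the results quickly."""
    totals = defaultdict(int)
    pogos = defaultdict(int)
    for page, seconds, back_to_serp in sessions:
        totals[page] += 1
        if back_to_serp and seconds < threshold:
            pogos[page] += 1
    return {page: pogos[page] / totals[page] for page in totals}


rates = pogo_rate_by_page(sessions)
for page, rate in sorted(rates.items()):
    print(f"{page}: {rate:.0%}")
```

A page with a high rate here is the "looked relevant but didn't deliver" case: a candidate for clearer answers up front rather than more text.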

Reducing pogo-sticking is rarely about adding more text. It’s about designing content and UX so the user experiences:

- Immediate clarity

- Strong alignment with intent

- Clean structure and progression

- Helpful next steps

In other words: you’re not just giving information—you’re completing the user’s search mission.

5) ThatWare’s Philosophy

Most SEO teams still optimize pages. ThatWare designs journeys.

Because pages can rank and still fail. Pages can be optimized and still bleed trust. Pages can even win traffic and still lose authority if user behavior signals disappointment.

ThatWare works from a different premise:

We don’t optimize pages for users. We design journeys that algorithms observe users trusting.

That’s the shift from SEO campaigns to search ecosystems.

An ecosystem is not built around what you want to rank for—it’s built around how users think, move, hesitate, compare, and commit. It anticipates the next question before the user asks it. It reduces friction before friction appears. It guides users from curiosity to confidence.

And when the algorithm repeatedly observes that trust forming—click after click, visit after visit—it does what learning systems always do:

It reinforces what works.

That is how brands become algorithm-resilient. Not by chasing the search engine, but by building experiences so satisfying that the search engine has no choice but to trust them.

Pillar 4: Building Entity Authority Instead of Keyword Authority

For years, SEO was treated like a keyword game. Pick the right phrases, place them strategically, build a few links, and you could climb the SERPs. That era is fading fast—not because keywords don’t matter, but because keywords were never the real goal. They were merely the language layer humans used to communicate intent. Search engines used keywords as a proxy because that’s all they could reliably interpret at scale.

Now, the proxy is no longer necessary.

1) The death of keyword-centric SEO

Keyword-centric SEO collapses when you realize a keyword is not a destination—it’s a symptom. People don’t want “best CRM software.” They want better sales workflows, cleaner pipelines, and faster follow-ups. They don’t want “AI SEO services.” They want consistent growth that doesn’t disappear after an update.

Keywords are simply the outward expression of deeper intent. Treating them as goals leads to shallow strategies: pages created to rank for phrases, content written to match word patterns, and optimization that feels mechanically correct but contextually hollow.

Search engines have moved past that. They increasingly interpret content through entities, meaning real-world “things” such as brands, people, concepts, products, locations, and frameworks, along with the relationships between them. This shift is sometimes described as entity-first indexing: instead of assessing whether your page matches a query string, the algorithm tries to understand whether your brand is a credible entity within a topic space.

In other words: search isn’t trying to match words anymore. It’s trying to recognize meaning.

2) What entity authority really means

Entity authority is not “domain authority” repackaged. It’s a more fundamental form of trust. It answers three critical questions in the algorithm’s mind:

- Who are you? Are you a legitimate, consistent, identifiable entity? Do you show up the same way everywhere? Is your identity clear?

- What are you known for? Not what you claim, but what the internet consistently associates you with. Are you a generalist with scattered relevance, or a specialist with deep, defensible expertise?

- Who associates with you? What reputable entities mention you, cite you, collaborate with you, or appear in the same context as you? Algorithms infer trust through relational credibility.

This is why brands with strong presence and consistent narratives often outrank “better optimized” pages. They aren’t winning because they have the perfect keyword density. They’re winning because search engines can confidently model them as a trustworthy entity.

3) Entity relationships and topical dominance

Once authority becomes entity-based, the real competitive game becomes relationship design.

Think of a search ecosystem like a map. The center of the map is your brand entity. Around it are clusters of related entities—subtopics, use cases, products, frameworks, customer problems, and supporting concepts. Your goal is not to produce isolated pages; your goal is to build a connected structure where every piece of content strengthens the algorithm’s understanding of what you are and where you belong.

Two powerful concepts drive this:

Parent-child entity modeling

Your primary entity (for example, ThatWare itself) should have clearly defined child entities: core services, methodologies, industries, outcomes, and proprietary frameworks. Each child entity should be supported by deeper content and connected internally so the ecosystem reads like a structured knowledge network rather than a random blog.

Contextual adjacency

Algorithms learn through proximity and repetition. If your brand repeatedly appears in the context of specific topics, concepts, and outcomes, the association becomes stronger over time. This is how you “train” the algorithm—ethically—through consistent, meaningful context.

Over time, your ecosystem stops competing at the page level and starts competing at the category level. That is topical dominance.
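Parent-child modeling and internal reinforcement can be made concrete with a small audit. The sketch below is a minimal illustration. It assumes each entity has one hub page and that a crawl already maps every URL to the internal links on that page. The `EntityNode` class, the `missing_links` function, the brand name and all URLs are hypothetical placeholders. They are not part of any ThatWare tool or real site. The audit flags every parent-child pair whose hub pages do not link to each other in both directions.

```python
from dataclasses import dataclass, field


@dataclass
class EntityNode:
    """One entity in a hypothetical site map: the brand, a service, a framework, etc."""
    name: str
    url: str  # URL of the hub page that represents this entity (placeholder)
    children: list["EntityNode"] = field(default_factory=list)


def missing_links(node: EntityNode, links: dict[str, set[str]]) -> list[tuple[str, str]]:
    """Return (from_url, to_url) pairs where a parent hub and a child hub
    do not link to each other in both directions.

    `links` maps each page URL to the set of internal URLs it links to.
    """
    gaps = []
    for child in node.children:
        if child.url not in links.get(node.url, set()):
            gaps.append((node.url, child.url))  # parent does not point down to the child
        if node.url not in links.get(child.url, set()):
            gaps.append((child.url, node.url))  # child does not point back up to the parent
        gaps.extend(missing_links(child, links))  # walk deeper layers of the hierarchy
    return gaps


# Hypothetical hierarchy and crawl data, for illustration only.
brand = EntityNode("Example Brand", "/", [
    EntityNode("Technical SEO", "/services/technical-seo/", [
        EntityNode("Schema audits", "/services/technical-seo/schema-audits/"),
    ]),
    EntityNode("Content strategy", "/services/content-strategy/"),
])
links = {
    "/": {"/services/technical-seo/", "/services/content-strategy/"},
    "/services/technical-seo/": {"/", "/services/technical-seo/schema-audits/"},
    "/services/technical-seo/schema-audits/": {"/services/technical-seo/"},
    "/services/content-strategy/": set(),
}
print(missing_links(brand, links))  # → [('/services/content-strategy/', '/')]
```

In this example the content-strategy hub never links back to the brand's home page, so it is the only gap reported. The audit checks both directions by design. A downward link makes the child discoverable. The upward link is meant to reinforce where the child sits within the parent entity.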

4) Structuring entity clarity

Entity authority doesn’t happen by accident. It must be structured.

At minimum, your ecosystem needs:

- A strong About foundation

Clear narrative, verifiable identity, consistent positioning, leadership visibility, and mission clarity. Not fluffy brand writing—structured clarity that removes ambiguity.

- Schema and structured data

Schema doesn’t create authority, but it reduces misunderstanding. It helps machines interpret what you are: organization, services, authors, reviews, FAQs, products, locations, and relationships.

- Content depth and internal reinforcement

Depth signals competence. Internal linking signals structure. When a topic is supported by multiple layers—beginner, intermediate, advanced—algorithms see it as a knowledge ecosystem, not a ranking attempt.

- Knowledge panel eligibility

You don’t “apply” for a knowledge panel; you become eligible through consistent entity signals across trusted sources. The goal isn’t the panel itself. The goal is what the panel represents: high-confidence entity recognition.
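The schema point above can be shown with a minimal sketch. It assumes a site that wants to describe its organization with JSON-LD. The Python below builds a schema.org `Organization` object and wraps it in a `<script type="application/ld+json">` tag. The property names (`name`, `url`, `logo`, `sameAs`, `knowsAbout`) are standard schema.org vocabulary. The `organization_jsonld` helper and every value passed to it are hypothetical placeholders. Markup like this reduces ambiguity; it does not create authority on its own.

```python
import json


def organization_jsonld(name, url, logo, same_as, knows_about):
    """Build a schema.org Organization object and return it as a JSON-LD <script> tag.

    This helper is hypothetical; only the property names are standard schema.org terms.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        # sameAs links this entity to its profiles elsewhere, supporting one consistent identity.
        "sameAs": same_as,
        # knowsAbout declares the topics the organization wants to be associated with.
        "knowsAbout": knows_about,
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


# Placeholder values: replace with the organization's real, verifiable details.
snippet = organization_jsonld(
    name="Example Brand",
    url="https://www.example.com/",
    logo="https://www.example.com/logo.png",
    same_as=["https://www.linkedin.com/company/example-brand"],
    knows_about=["Technical SEO", "Structured data", "Content strategy"],
)
print(snippet)
```

One way to support the consistent identity described under the About foundation is to keep the `sameAs` list aligned with the profiles the brand actually maintains. Check the output with a structured-data validator before deploying it.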

5) ThatWare’s approach to entity ecosystems

ThatWare doesn’t treat SEO as keyword placement. ThatWare treats search as a learning environment.

Instead of asking, “Which keywords should we rank for?” ThatWare asks:

- What should the algorithm know about this brand one year from now?

- What associations should be inevitable?

- What entity relationships must be built so trust becomes self-reinforcing?

ThatWare designs entity ecosystems where content, brand signals, user journeys, and structured data work together as a coherent system. Because when an algorithm understands you clearly—and sees the internet consistently confirming that understanding—you don’t need to chase updates. Updates become irrelevant noise.

That’s the shift: from keyword authority that expires, to entity authority that compounds.

SEO is a project. Ecosystems are permanent assets.

ThatWare doesn’t optimize for algorithms. ThatWare designs ecosystems where algorithms evolve in our favor.

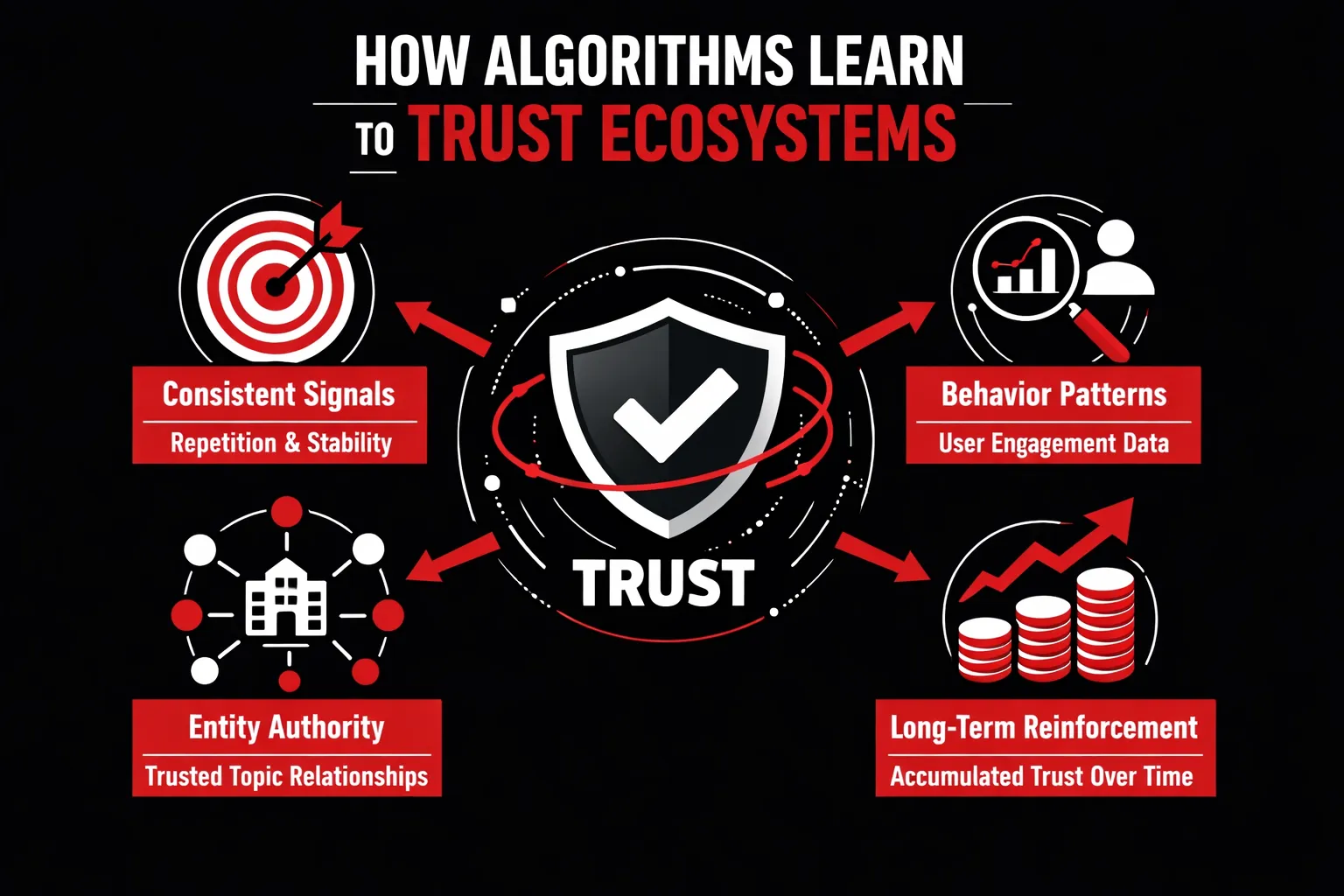

How Algorithms Learn to Trust Ecosystems

Search engine trust is often misunderstood as a vague or abstract concept, but at its core, algorithmic trust is mathematical. Algorithms do not “believe” in brands—they calculate confidence. Every crawl, every user interaction, every content update feeds into a growing statistical model that attempts to answer a single question: Is this source reliably valuable over time? In this context, trust is not earned through isolated optimizations, but through patterns that remain stable across months and years.

Trust as Statistical Confidence

Algorithms develop trust the same way learning systems build confidence in any signal: through repetition without contradiction. When a brand consistently publishes content that aligns with its core topics, maintains coherent messaging, and satisfies user intent, it reduces uncertainty in the algorithm’s model. Each reinforcing signal strengthens the probability that future content from the same source will also be valuable.

This process is longitudinal. Algorithms don’t evaluate signals in isolation; they observe how signals behave over time. A single high-performing page can attract attention, but only sustained consistency builds confidence. When content depth, brand mentions, user engagement, and entity signals reinforce one another month after month—without sudden reversals or thematic drift—the algorithm’s trust score compounds. Inconsistency, on the other hand, introduces noise, forcing the system to reassess risk.
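A toy Beta-Bernoulli model can illustrate trust as confidence that grows through repetition without contradiction. This is an analogy only. It assumes each observed signal can be scored as either consistent or contradictory. No search engine documents its trust evaluation this way, and the `beta_confidence` function is hypothetical. The sketch shows two effects. As consistent evidence accumulates, the posterior mean rises and its uncertainty (standard deviation) narrows. Contradictions hold confidence back.

```python
from math import sqrt


def beta_confidence(consistent: int, contradictory: int,
                    prior_a: float = 1.0, prior_b: float = 1.0) -> tuple[float, float]:
    """Toy Beta-Bernoulli model returning (posterior mean, posterior standard deviation).

    Toy analogy only: not how any search engine is documented to compute trust.
    `consistent` counts observations confirming the source is valuable;
    `contradictory` counts observations that do not. Beta(1, 1) is a flat prior.
    """
    a = prior_a + consistent
    b = prior_b + contradictory
    mean = a / (a + b)
    variance = (a * b) / ((a + b) ** 2 * (a + b + 1))
    return mean, sqrt(variance)


for label, ok, bad in [("one strong page", 1, 0),
                       ("20 consistent signals", 20, 0),
                       ("10 consistent, 10 contradictory", 10, 10)]:
    mean, sd = beta_confidence(ok, bad)
    print(f"{label:32} mean={mean:.2f} sd={sd:.2f}")
```

With these inputs the sketch prints means of 0.67, 0.95 and 0.50, with standard deviations of 0.24, 0.04 and 0.10. A single strong page yields a hopeful but uncertain estimate. Sustained consistency yields a high, tight estimate, and mixed signals leave the model unsure.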

Why Ecosystems Reduce Algorithmic Uncertainty

Search ecosystems are powerful because they are predictable. Not repetitive in a shallow sense, but structurally reliable. An ecosystem signals to the algorithm that the brand understands its domain, its audience, and its role within the broader knowledge graph. Predictability matters more than novelty because algorithms are designed to minimize risk. Novelty introduces uncertainty; predictability lowers it.

When content, internal linking, brand signals, and user journeys are architected as a cohesive system, the algorithm encounters fewer contradictions. The brand behaves the same way across surfaces, topics, and timeframes. This lowers the risk the algorithm associates with the brand, making it safer to surface that brand more frequently and for more competitive queries. In contrast, tactic-driven SEO often creates fragmented signals, such as bursts of optimization followed by silence, which increase uncertainty and suppress long-term visibility.

The Compounding Advantage of Ecosystems

Once an ecosystem reaches a certain threshold of trust, it becomes self-reinforcing. New content is indexed faster. Rankings stabilize more quickly. Minor fluctuations have less impact. This is not favoritism—it is efficiency. The algorithm has learned that the ecosystem consistently produces reliable outcomes, so it allocates attention and visibility accordingly.

This is also why competitors cannot simply “copy” trust. They can replicate tactics, keywords, or formats, but they cannot replicate history. Trust is a time-weighted asset, built through sustained alignment between content, behavior, and authority. Ecosystems accumulate this alignment gradually, turning search visibility into a durable advantage rather than a fragile win.

In the end, algorithms don’t reward effort—they reward certainty. And certainty is only possible when a brand stops optimizing in fragments and starts designing systems that learn, reinforce, and evolve over time.

Why Ecosystems Win During Algorithm Updates

Every major algorithm update follows the same pattern. Panic spreads across the SEO industry, rankings fluctuate wildly, and brands scramble to diagnose what went wrong. Pages are re-optimized, links are disavowed, content is rewritten. Yet beneath this chaos, a quieter phenomenon consistently unfolds: some brands don’t just survive updates—they emerge stronger. The difference is not luck, budget, or timing. It is structure.

Algorithm updates are designed to punish tactics, not systems. Updates target over-optimization, thin content, artificial link patterns, and short-term manipulations that distort search quality. Campaign-driven SEO strategies are especially vulnerable because they rely on isolated tactics that algorithms can easily invalidate. When one signal loses weight, the entire strategy collapses. Ecosystem-driven brands, however, are not built on single points of failure. Their visibility is distributed across content depth, brand signals, user behavior, and entity authority. When an update recalibrates one signal, the system absorbs the change instead of breaking.

This is why ecosystem brands often gain during updates. As competitors lose visibility due to fragile optimizations, algorithms look for safer, more reliable alternatives. Brands with consistent topical depth, strong behavioral signals, and clear entity recognition become statistically lower-risk choices. Updates are not rewards—they are recalibrations. And recalibration favors brands that reduce uncertainty for the algorithm over time.

This is where the idea of “update-proof SEO” is often misunderstood. It does not mean rankings never fluctuate. It means that ranking changes stop being existential threats. When visibility is anchored in an ecosystem, traffic does not depend on a handful of keywords or pages. Discovery happens across multiple queries, intents, and surfaces. Even when individual positions shift, overall presence remains stable. In some cases, rankings matter less because the brand itself becomes the destination—searched for, referenced, and trusted independent of exact positions.

At this stage, visibility becomes inevitable. Not because of domination or manipulation, but because the ecosystem continuously reinforces itself. Users engage, return, and search again. Content supports multiple stages of intent. Brand signals compound across platforms. Algorithms observe these patterns over time and respond by expanding exposure, not retracting it.

ThatWare’s experience consistently confirms this pattern. Brands built on ecosystem architecture rarely fear updates; they often benefit from them. While others react, ThatWare-designed systems continue accumulating trust. Updates become moments of consolidation rather than disruption. This is the ultimate advantage of designing search ecosystems: when algorithms change, the system doesn’t need to adapt—the algorithm adapts to the system.

From SEO Agencies to Ecosystem Architects

The SEO industry is facing a quiet but undeniable identity crisis. For years, success was defined by access—to tools, to data, to ranking factors, to shortcuts others didn’t yet understand. Today, that advantage has eroded. Tools are commoditized. Data is abundant. AI can generate keywords, content outlines, and even optimization recommendations in seconds. What once differentiated SEO agencies now looks increasingly interchangeable.

This dependency on tools has led to a deeper problem: metric obsession. Rankings, traffic, impressions, and click-through rates dominate conversations, often at the expense of strategic clarity. When metrics become the goal rather than the signal, SEO turns reactive. Teams optimize to move numbers, not to build systems. As a result, strategies become fragile—overfitted to dashboards instead of grounded in how search engines actually learn and evaluate trust over time.

In this environment, the role of the SEO agency must evolve. The future does not belong to technicians who execute isolated tactics. It belongs to search ecosystem architects—strategists who understand that search visibility is an emergent outcome of well-designed systems. This role demands strategic systems thinking: the ability to see how content, brand signals, user behavior, and entity authority reinforce one another across time and platforms. It is not about doing more SEO; it is about designing structures that continuously generate trust.

Search ecosystem architects operate at the intersection of disciplines. They combine SEO intelligence with brand strategy, UX design, behavioral psychology, content architecture, and data science. They understand that algorithms do not evaluate silos—they observe patterns across an entire digital footprint. Every touchpoint becomes part of a feedback loop that either strengthens or weakens algorithmic confidence in a brand.

This is where ThatWare’s positioning becomes clear. ThatWare does not operate as a traditional SEO agency because optimization alone is no longer sufficient. ThatWare doesn't optimize for algorithms. ThatWare designs ecosystems where algorithms evolve in our favor. The focus is not on chasing updates, exploiting loopholes, or inflating short-term metrics. It is on building adaptive, intelligent systems that align with how modern search engines learn.

In a landscape defined by constant change, tactics expire quickly. Systems endure. The future of search belongs to those who stop thinking like optimizers and start thinking like architects.

Conclusion: Stop Renting Rankings. Start Owning Search Gravity

For years, brands have been conditioned to believe that visibility can be bought, optimized, and scaled on demand. Rankings go up, traffic flows in, and dashboards look healthy—until they don’t. The true cost of this temporary visibility is rarely calculated. Financially, brands pour continuous budgets into re-optimization, recovery audits, and reactive fixes every time the algorithm shifts. Strategically, they remain trapped in a cycle of dependency, never building anything that compounds. And at the brand level, erosion sets in: inconsistent messaging, shallow authority, and a digital presence that feels fragmented to both users and algorithms.

When visibility is rented rather than owned, growth becomes fragile. Every algorithm update feels like a threat. Every competitor move creates anxiety. This is not a sustainable way to build a brand in search. It’s a treadmill—fast, exhausting, and ultimately going nowhere.

The alternative is to invest in permanent assets. Search ecosystems are not campaigns; they are growth engines. They are built by architecting content that teaches, brand signals that reinforce identity, user journeys that generate satisfaction, and entity authority that algorithms recognize over time. Unlike isolated optimizations, ecosystems compound. They strengthen with age. Each interaction, mention, and piece of content reinforces the next. Instead of reacting to algorithms, ecosystems shape how algorithms perceive and trust the brand.

This is the shift that defines long-term winners in search. SEO is a project. Ecosystems are permanent assets. Projects end. Assets appreciate. One demands constant maintenance; the other creates momentum. When brands stop chasing rankings and start building search gravity, visibility becomes more stable, more defensible, and far less dependent on short-term tactics.

The path forward is not about doing more SEO—it’s about thinking differently about search altogether. Reframe search as an architectural challenge, not a tactical one. Choose systems over hacks, coherence over checklists, and long-term trust over short-term gains. ThatWare doesn’t optimize for algorithms. ThatWare designs ecosystems where algorithms evolve in our favor. And in an era where trust is learned, not awarded, that difference is everything.