Search is undergoing the most significant transformation since the invention of Google.

For over two decades, digital visibility depended on Search Engine Optimization (SEO), the practice of ranking web pages on search engines like Google and Bing. Today, however, users increasingly receive answers directly from AI systems such as ChatGPT, Google Gemini, Microsoft Copilot, and Perplexity.

Instead of showing ten blue links, these systems generate direct answers synthesized from multiple sources.

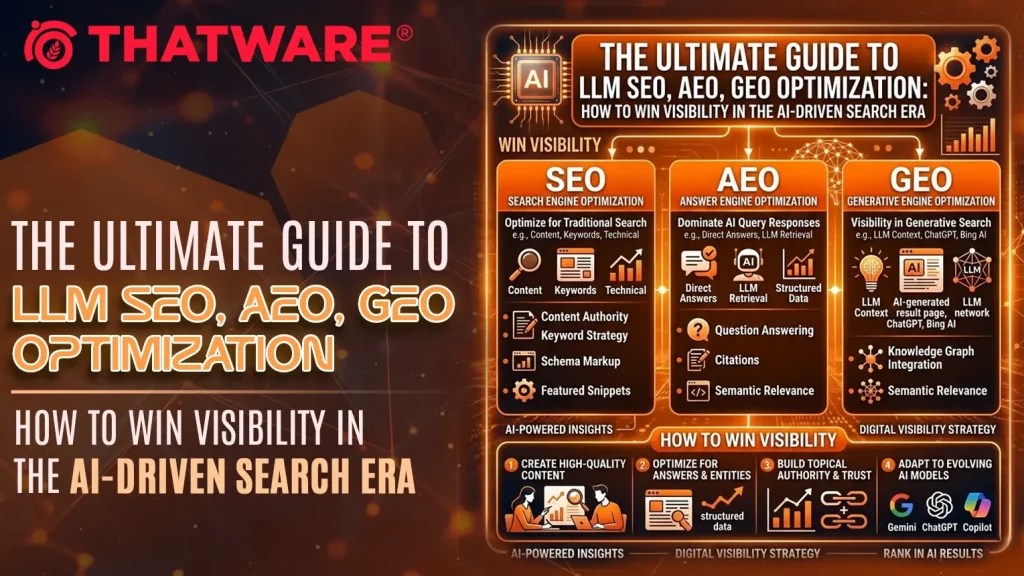

This shift has created a new optimization discipline that combines:

- SEO (Search Engine Optimization)

- AEO (Answer Engine Optimization)

- GEO (Generative Engine Optimization)

- LLM Optimization (Large Language Model visibility)

Together, these strategies determine whether your content becomes part of the AI knowledge layer powering modern search.

In this guide, we will explore how businesses, publishers, and marketers can optimize their websites for both traditional search engines and generative AI systems.

Understanding the Evolution of Search

Search technology has changed dramatically over the past three decades. What began as simple systems that matched words has developed into advanced platforms that can understand meaning, context, and even generate complete answers. This transformation has changed the way people discover information online and has also changed how businesses create and structure digital content.

In the early days of the internet, search engines focused mainly on locating pages that contained specific keywords. Over time, improvements in artificial intelligence and natural language processing allowed search systems to better understand what users actually meant when they searched for something. Today we are entering a new phase where search engines and AI assistants can generate answers rather than simply showing lists of links.

To understand this transformation, it helps to examine the three major generations of search. Each generation reflects a different way that machines interpret user queries and retrieve information from the web.

Keyword Search

The first generation of search was built around keywords. Search engines looked for exact matches between the words typed by a user and the words appearing on web pages. If a page contained the same keywords as the query, it had a chance to appear in the search results.

During the early internet era in the 1990s and early 2000s, search engines functioned primarily as indexing systems. They crawled websites, stored the content in large databases, and returned pages that contained the relevant terms. The process was fairly simple and focused almost entirely on word matching.

One of the companies that dramatically improved keyword search was Google. Its PageRank algorithm evaluated the authority of a page by examining how many other pages linked to it and how authoritative those linking pages were. Pages with more high-quality backlinks were considered more trustworthy and were more likely to appear near the top of the results.
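The link-based idea can be sketched as a short power iteration. This is a simplified illustration, not Google’s production algorithm: the damping factor of 0.85, the iteration count, and the tiny link graph are all assumptions made for the example.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank over a dict mapping page -> list of pages it links to."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page starts each round with a small baseline share.
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page passes its rank on, split evenly across its outgoing links.
                share = damping * rank[page] / len(targets)
                for t in targets:
                    new_rank[t] += share
            else:
                # A page with no outgoing links spreads its rank over all pages.
                for t in pages:
                    new_rank[t] += damping * rank[page] / n
        rank = new_rank
    return rank

# Hypothetical link graph: three pages link to "home", which links to "about".
links = {
    "home": ["about"],
    "about": ["home"],
    "blog": ["home"],
    "contact": ["home"],
}
ranks = pagerank(links)
for page in sorted(ranks, key=ranks.get, reverse=True):
    print(f"{page}: {ranks[page]:.3f}")
```

In this toy graph, "home" ends up with the highest score because it receives the most links, and "about" scores well because its single inbound link comes from a highly ranked page.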

How Keyword Search Systems Worked

In this first generation, the search process followed a basic sequence.

First, search engine crawlers scanned websites and collected page content.

Second, the engine stored the text of those pages in a searchable index.

Third, when a user entered a query, the engine looked for pages containing those same words.

Finally, the engine ranked results based on keyword presence, link signals, and simple site factors.
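The sequence above can be sketched with a small inverted index. This is a minimal illustration under stated assumptions: the page URLs and text are made up, and the ranking uses raw term counts only, leaving out link signals and site factors.

```python
import re
from collections import defaultdict

def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def build_index(pages):
    """Map each word to the set of page URLs that contain it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            index[word].add(url)
    return index

def search(index, pages, query):
    """Return pages containing every query word, ranked by raw term count."""
    words = tokenize(query)
    if not words:
        return []
    matches = set.intersection(*(index.get(w, set()) for w in words))

    def score(url):
        tokens = tokenize(pages[url])
        return sum(tokens.count(w) for w in words)

    return sorted(matches, key=lambda u: (-score(u), u))

# Hypothetical crawled pages.
pages = {
    "example.com/a": "Cheap hotels in Paris. Find cheap hotels near the center.",
    "example.com/b": "Affordable accommodations in Paris for families.",
    "example.com/c": "Cheap flights and hotels.",
}
index = build_index(pages)
print(search(index, pages, "cheap hotels"))               # ['example.com/a', 'example.com/c']
print(search(index, pages, "affordable accommodations"))  # ['example.com/b']
```

Because matching is purely lexical, the page about cheap hotels is never returned for the query about affordable accommodations, even though the two mean nearly the same thing. Repeating a term also raises a page’s score, which is why this kind of ranking invited manipulation.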

Because the systems relied heavily on exact word matches, many website owners tried to manipulate rankings by placing keywords repeatedly throughout their pages. This practice became known as keyword stuffing. Some sites also created low-quality backlinks solely to increase rankings.

Limitations of Keyword Search

Although keyword-based search worked reasonably well in the early web environment, it had several limitations.

One major limitation was that the system could not understand meaning. If a user searched for a phrase using different wording than the page used, the search engine might fail to return the relevant page. For example, a page optimized for the phrase “cheap hotels” might not appear when someone searched for “affordable accommodations.”

Another problem was the inability to interpret intent. A query such as “apple” could refer to the fruit, the technology company, or even a music label. Keyword-based systems had difficulty distinguishing between these possibilities.

As the internet grew and users expected more accurate results, search engines needed a better way to interpret language and context.

Semantic Search

The second generation of search introduced semantic understanding. Instead of focusing only on keywords, search engines began analyzing the meaning behind queries and the relationships between concepts.

This shift occurred gradually through a series of technological improvements. A key moment came in 2013, when Google launched the Hummingbird update. Hummingbird allowed the search engine to interpret entire phrases and understand how words related to each other within a sentence.

Later developments such as RankBrain (2015) and BERT (2019) improved this capability even further. These systems used machine learning to analyze language patterns and determine what users were actually trying to find.

The Shift from Keywords to Meaning

Semantic search introduced a new approach to interpreting queries. Instead of matching words exactly, search engines began analyzing context and intent.

For example, a user searching for “best places to visit in winter” might receive results about travel destinations even if the pages did not contain that exact phrase. The system could recognize related concepts such as winter tourism, ski resorts, or seasonal travel guides.

Search engines also began using entities to understand information. An entity is a clearly defined concept such as a person, company, place, or product. For instance, Google, New York City, or Elon Musk can all be treated as entities.

By mapping relationships between entities, search engines could better understand how topics connected with each other.

The Role of the Knowledge Graph

Another important development in semantic search was the introduction of the knowledge graph, which Google launched under the name Knowledge Graph in 2012. A knowledge graph is a database that stores information about entities and their relationships.

When a user searches for a well-known entity, search engines can display structured information panels containing key facts. For example, a search for a company may show its founding date, leadership, and headquarters location.

This system allows search engines to move beyond simple page listings and begin delivering direct information within the search results.
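One common way to picture a knowledge graph is as a set of subject, predicate, object triples. The sketch below is a toy model for illustration only: the class, method names, and predicate labels are assumptions, not the structure of any real search engine’s graph.

```python
class KnowledgeGraph:
    """Toy entity store built from (subject, predicate, object) triples."""

    def __init__(self):
        self.triples = []

    def add(self, subject, predicate, obj):
        self.triples.append((subject, predicate, obj))

    def panel(self, entity):
        """Collect the facts stored about one entity, like an information panel."""
        return {p: o for s, p, o in self.triples if s == entity}

    def connected_to(self, entity):
        """Find entities that point at `entity` through any relationship."""
        return sorted({s for s, _, o in self.triples if o == entity})

kg = KnowledgeGraph()
kg.add("Google", "type", "Company")
kg.add("Google", "founded", "1998")
kg.add("Google", "headquarters", "Mountain View")
kg.add("PageRank", "developed_by", "Google")
kg.add("Hummingbird", "developed_by", "Google")

print(kg.panel("Google"))
print(kg.connected_to("Google"))
```

The `panel` lookup mirrors how an information panel gathers key facts about one entity, while `connected_to` shows how relationships let a system move from one entity to related ones.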

Improvements in User Experience

Semantic search significantly improved the quality of results. Search engines could now interpret conversational queries, long phrases, and complex questions. Users no longer needed to type precise keyword combinations to obtain useful results.

Another benefit was the rise of featured snippets and quick answers. When a search engine identified a clear answer within a webpage, it could display that answer directly in the results page.

These improvements marked an important transition from basic keyword matching to intelligent query interpretation.

However, even semantic search still relied on the traditional search model. Users typed queries and received lists of links. The responsibility of reading those pages and synthesizing information remained with the user.

The next generation of search would change that model entirely.

Generative AI Search

The newest phase of search is defined by generative artificial intelligence. Instead of simply retrieving pages, modern AI systems can analyze multiple sources of information and generate complete responses to user questions.

Generative search systems operate more like digital assistants than traditional search engines. When a user asks a question, the AI interprets the request, gathers relevant information, and produces a synthesized answer.

Examples of platforms using this approach include:

- ChatGPT

- Google AI Overviews

- Perplexity AI

- Microsoft Copilot

These systems represent a fundamental shift in how people interact with information online.

How Generative AI Search Works

Generative search systems typically follow a multi-step process.

First, the AI interprets the user’s query and determines the underlying intent. This involves analyzing the language used in the question and identifying relevant entities and concepts.

Second, the system retrieves information from trusted sources such as web pages, databases, or previously indexed content.

Third, the AI analyzes the collected information and identifies the most relevant facts and explanations.

Finally, the system synthesizes this knowledge into a coherent response that directly answers the user’s question.

Instead of presenting ten blue links, the AI produces a structured answer that may include summaries, explanations, and citations.

Retrieval-Augmented Generation

Many modern AI search systems use a technique known as retrieval-augmented generation (RAG). This approach combines large language models with real-time information retrieval.

The language model generates the answer, but it grounds that answer in retrieved external sources, which helps keep the information accurate and up to date. The system may also provide citations that link back to the original sources.

This method helps AI platforms produce more reliable answers while still benefiting from the language capabilities of large models.
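The retrieve-then-generate flow can be sketched in a few functions. This is a minimal illustration under stated assumptions: retrieval here is simple word overlap rather than a real search index, the documents are made up, and `fake_generate` is a hypothetical stand-in for a call to an actual language model.

```python
import re

def tokenize(text):
    """Return the set of lowercase word tokens in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, documents, k=2):
    """Rank documents by word overlap with the query and keep the top k matches."""
    q = tokenize(query)
    scored = []
    for doc in documents:
        overlap = len(q & tokenize(doc["text"]))
        if overlap:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: (-pair[0], pair[1]["url"]))
    return [doc for _, doc in scored[:k]]

def build_prompt(query, sources):
    """Assemble the prompt a language model would receive, with numbered sources."""
    lines = ["Answer the question using only the sources below. Cite them as [n].", ""]
    for i, doc in enumerate(sources, start=1):
        lines.append(f"[{i}] {doc['url']}: {doc['text']}")
    lines += ["", f"Question: {query}"]
    return "\n".join(lines)

def answer(query, documents, generate):
    """Retrieve sources, pass them to the model, and return the answer with citations."""
    sources = retrieve(query, documents)
    text = generate(build_prompt(query, sources))
    return {"answer": text, "citations": [doc["url"] for doc in sources]}

def fake_generate(prompt):
    # Stand-in for a real model call: it only reports how many sources it was given.
    count = sum(1 for line in prompt.splitlines() if line.startswith("["))
    return f"(model output grounded in {count} sources)"

# Hypothetical indexed content.
documents = [
    {"url": "example.com/geo", "text": "Generative engine optimization structures content for AI answers."},
    {"url": "example.com/seo", "text": "Search engine optimization improves rankings in search results."},
    {"url": "example.com/cooking", "text": "Slow roasting brings out flavor in vegetables."},
]
result = answer("What is generative engine optimization?", documents, fake_generate)
print(result)
```

The unrelated cooking page never reaches the model, and the returned citations list exactly the sources the answer was built from. That is the property that lets a generated answer link back to the pages it drew on.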

Changes in User Behavior

Generative search is changing how people look for information. Users increasingly ask complete questions instead of typing short keyword phrases.

For example, instead of searching for “digital marketing strategy,” a user might ask, “What are the best digital marketing strategies for small businesses in 2026?”

AI systems are designed to handle these natural language questions and provide conversational responses.

This shift means that users often obtain the information they need without clicking through multiple websites.

Implications for Websites and Content Creators

The rise of generative search has significant implications for website owners, publishers, and marketers.

In traditional search, the primary goal was to rank highly in search engine results. Visibility depended on appearing within the top positions for relevant keywords.

In the generative search environment, visibility depends on being selected as a source. Content must be clear, authoritative, and structured in a way that AI systems can easily extract and interpret.

Instead of simply ranking pages, AI systems select pieces of information that can be incorporated into generated answers. This means that content needs to function as a reliable knowledge source.

Websites that provide well-structured explanations, clear definitions, and trustworthy information are more likely to be referenced by AI systems.

The New Role of Authority

Generative search systems prioritize sources that demonstrate credibility and expertise. Content that includes citations, expert authorship, and accurate data is more likely to be used when generating answers.

As a result, authority signals have become increasingly important. Search engines and AI models evaluate factors such as domain reputation, content quality, and external references when determining which sources to trust.

This emphasis on reliability encourages the creation of higher quality information across the web.

The Transition to an AI-Driven Search Ecosystem

The progression from keyword search to semantic search and finally to generative AI search illustrates how dramatically information discovery has evolved.

Keyword search focused on matching words.

Semantic search focused on understanding meaning.

Generative search focuses on delivering complete answers.

Each stage has improved the ability of machines to interpret human language and provide useful information.

For website owners and digital marketers, this evolution means that optimization strategies must continue to adapt. Traditional search engine optimization remains important, but it is no longer the only factor influencing visibility.

Content must now be designed not only for human readers but also for intelligent systems that analyze and synthesize information.

Websites that structure their content clearly, demonstrate expertise, and provide reliable knowledge are more likely to become part of the information ecosystem powering modern AI-driven search.

What Is Generative Engine Optimization (GEO)?

Generative Engine Optimization (GEO) is the process of structuring and presenting digital content so that artificial intelligence systems can easily extract, understand, and reference it when generating answers for users. As AI-powered platforms become a common way for people to search for information, the way content is created and optimized is changing rapidly.

Traditional search engines typically display a list of links in response to a query. Users then choose which pages to visit and read through the information themselves. Generative search systems work differently. Instead of simply listing pages, these systems analyze information from multiple sources and generate a direct answer to the user’s question.

Platforms such as ChatGPT, Perplexity AI, Google AI Overviews, and Microsoft Copilot are examples of tools that rely on this approach. When a user asks a question, these systems retrieve relevant knowledge from the web and synthesize it into a structured response.

Because of this change, simply ranking in search results is no longer enough. Content must also be written in a way that AI systems can easily interpret and incorporate into their generated responses. This is where Generative Engine Optimization becomes important.

Unlike traditional search engine optimization, which focuses heavily on keyword targeting and ranking positions, GEO emphasizes information architecture and machine readability. The goal is to make content understandable not only for human readers but also for artificial intelligence systems that analyze and extract knowledge from web pages.

Key Objective of GEO

The central objective of Generative Engine Optimization is to make digital content easier for AI systems to process and reference. This involves organizing information in ways that help machines identify key ideas, definitions, and relationships between concepts.

One important aspect is machine readability. Content that is well structured, logically organized, and clearly written is easier for AI systems to interpret. Clear headings, concise explanations, and well-defined sections allow AI models to locate relevant information quickly.

Another objective is semantic structure. Instead of focusing solely on keywords, GEO encourages writers to organize content around meaningful topics and entities. This helps AI systems understand how different concepts are related and how they fit within a broader knowledge framework.

Authority is also a major component of GEO. AI systems tend to prioritize information from credible and trustworthy sources. Content that includes expert insights, accurate data, and references to reliable organizations is more likely to be selected when an AI system generates an answer.

Finally, content should be easily extractable. AI systems often identify short passages or summaries that directly answer a question. When information is presented clearly and concisely, it becomes easier for these systems to extract and use it in generated responses.

When these elements are implemented effectively, a webpage can function as a reliable knowledge source for AI systems. Instead of simply attracting visitors through search rankings, the content becomes part of the information layer that AI assistants rely on when responding to user queries.

Why GEO Matters for Businesses

Generative Engine Optimization is becoming increasingly important because AI assistants are quickly evolving into major discovery channels. Many users now prefer asking questions directly to AI systems rather than browsing through multiple search results.

For example, users might ask questions such as:

- What is the best CRM software for small businesses?

- Explain generative engine optimization.

- What are the safest investment options?

AI systems can respond to these questions instantly by summarizing information gathered from multiple sources. As a result, users often receive the information they need without visiting several websites.

This shift has major implications for businesses and content creators. If a company’s website is not optimized for generative engines, its content may never be included in these AI-generated answers. Even if the site ranks well in traditional search results on platforms like Google, it may still lose visibility in AI-driven search experiences.

Organizations that invest in GEO early can gain several advantages. One of the most important benefits is higher citation frequency. When AI systems regularly reference a company’s content in generated responses, the brand gains credibility and exposure.

Another benefit is increased brand authority. Being cited by AI systems signals that the information is reliable and trustworthy, which can strengthen a brand’s reputation in its industry.

GEO can also contribute to a stronger presence in knowledge graphs and entity databases. As AI systems recognize a brand or topic as an authoritative source, it becomes more integrated into the broader ecosystem of machine-readable information.

Finally, optimizing for generative engines improves discovery across multiple AI platforms. Instead of relying solely on traditional search engines, businesses can reach users through conversational interfaces, AI assistants, and emerging AI search tools.

As generative AI continues to reshape how information is accessed online, GEO is becoming an essential strategy for maintaining digital visibility and ensuring that valuable content remains part of the answers people receive.

The Four Pillars of AI Search Optimization

As search technology evolves toward AI-driven discovery, organizations must rethink how they approach digital visibility. Traditional search engine optimization is no longer sufficient on its own. Modern search systems increasingly rely on artificial intelligence to interpret queries, retrieve information, and generate direct answers for users.

To succeed in this environment, businesses need a comprehensive framework that addresses both traditional search engines and AI-powered answer systems. This framework can be understood through four key pillars: Search Engine Optimization, Answer Engine Optimization, Generative Engine Optimization, and LLM Optimization. Each pillar focuses on a different aspect of how AI systems discover, interpret, and present information.

Together, these four layers ensure that content is not only discoverable but also understandable and usable by modern AI-driven search platforms.

1. Search Engine Optimization (SEO)

Search Engine Optimization remains the foundation of online visibility. Even as artificial intelligence becomes more prominent in search experiences, many AI systems still rely on traditional search engine indexes to discover and retrieve information from the web.

Search engines such as Google continue to crawl and index billions of pages. Generative AI systems often pull information from these indexed sources before synthesizing answers for users. This means that strong SEO practices are still essential for ensuring that content can be discovered in the first place.

Core SEO Components

Effective SEO begins with a solid technical foundation. Websites must be structured in a way that allows search engines and AI crawlers to access and interpret their content easily.

Important technical elements include site architecture, crawlability, page speed, and mobile optimization. A well-organized site structure helps search engines understand how different pages relate to each other, while proper crawlability ensures that bots can navigate and index content without obstacles.

Page speed and mobile optimization also play critical roles in modern search performance. Since a large portion of internet traffic now comes from mobile devices, search engines prioritize websites that deliver fast and responsive user experiences.

Structured data is another key component of technical SEO. Schema markup provides additional context about webpage content, allowing machines to interpret information more accurately. This structured format helps search engines understand elements such as articles, products, organizations, and frequently asked questions.

Internal linking is equally important. By connecting related pages through logical links, websites help both users and search engines navigate their content more effectively. This strengthens topical authority and improves the overall discoverability of important pages.

Content Quality and E-E-A-T

Technical optimization alone is not enough to succeed in modern search environments. Content quality has become one of the most important factors in search visibility.

Google’s Search Quality Rater Guidelines describe a framework known as E-E-A-T, which stands for Experience, Expertise, Authoritativeness, and Trustworthiness. These criteria help evaluate whether a piece of content comes from a credible and reliable source.

Experience refers to whether the author has real-world knowledge of the topic. Expertise measures the depth of knowledge demonstrated in the content. Authoritativeness reflects the reputation of the website or author within a particular field. Trustworthiness focuses on the accuracy and reliability of the information provided.

AI systems also appear to favor these signals when deciding which sources to reference. Content that demonstrates expert insight, accurate data, and credible references is more likely to be cited by generative models when answering user queries.

2. Answer Engine Optimization (AEO)

Answer Engine Optimization focuses on helping search systems deliver clear and direct responses to user questions. Instead of simply displaying a list of links, answer engines aim to provide immediate answers that solve the user’s problem quickly.

Examples of answer engine outputs include featured snippets, voice search responses, and AI-generated summaries. These results often appear at the top of search pages and provide concise explanations extracted from high-quality content.

The Ideal AEO Content Format

To increase the likelihood that content will be selected for these responses, it must follow a structure that answer engines can easily interpret.

One of the most effective formats follows a simple pattern: question, direct answer, and supporting context.

For example, a section might begin with a clear question such as “What is Generative Engine Optimization?” Immediately after the question, the content should provide a short definition that directly answers it. This concise explanation is then followed by additional context that expands on the concept.

This format allows search engines and AI systems to quickly identify the core response while still providing deeper information for readers who want to learn more.

Best Practices for AEO

Several content strategies can improve answer engine performance. FAQ sections are particularly effective because they mirror the way users naturally ask questions. Question-based headings also help search engines identify specific topics within a page.

Concise definitions and step-by-step explanations make it easier for answer engines to extract information and display it as featured responses. Structured summaries at the beginning or end of sections can also improve clarity and increase the chances of appearing in direct answer results.

By organizing content around clear questions and straightforward answers, websites can significantly improve their visibility in answer-driven search environments.

3. Generative Engine Optimization (GEO)

Generative Engine Optimization focuses on how artificial intelligence systems retrieve, analyze, and synthesize information from the web. Unlike traditional search engines, which rank pages based on relevance signals, generative engines extract knowledge fragments from multiple sources and combine them into a single response.

Platforms such as ChatGPT and Perplexity AI use this approach to generate answers that summarize information from across the internet.

How Generative Engines Extract Content

Generative AI systems typically process information through several stages. The first stage involves content discovery, where the system identifies relevant webpages or data sources related to the user’s query.

The second stage is information extraction. During this phase, the AI scans the content to locate key facts, explanations, and relationships between ideas.

Next comes knowledge synthesis. The system evaluates multiple sources and integrates the most relevant information into a coherent understanding of the topic.

Finally, the AI generates a response that answers the user’s question in a clear and conversational format.

Content that is logically structured and clearly explained performs best in this pipeline because it allows AI systems to extract useful information efficiently.

Designing AI-Extractable Content

To optimize for generative engines, content must follow clear structural patterns that make information easier for machines to interpret.

One of the most important practices is using hierarchical headings. Clear heading structures help AI models understand how topics and subtopics relate to each other. A well-organized page might include a main heading for the overall topic, followed by subheadings that define concepts, explain benefits, and outline implementation steps.
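A heading hierarchy can be checked automatically. The sketch below uses Python’s standard `html.parser` module to list a page’s `h1` to `h6` headings and flag any that skip a level. The sample HTML and the rule that a heading should never jump more than one level deeper are assumptions chosen for illustration.

```python
from html.parser import HTMLParser

class HeadingOutline(HTMLParser):
    """Collect (level, text) pairs for h1-h6 elements in document order."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._text = []

    def handle_data(self, data):
        if self._level is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.headings.append((self._level, "".join(self._text).strip()))
            self._level = None

def find_skipped_levels(headings):
    """Return headings that jump more than one level deeper than the previous one."""
    problems = []
    previous = 0
    for level, text in headings:
        if level > previous + 1:
            problems.append((level, text))
        previous = level
    return problems

# Hypothetical page markup with one skipped level (h2 straight to h4).
html = """
<h1>Generative Engine Optimization</h1>
<h2>What Is GEO?</h2>
<h2>Benefits</h2>
<h4>Implementation Steps</h4>
"""
outline = HeadingOutline()
outline.feed(html)
print(outline.headings)
print(find_skipped_levels(outline.headings))  # [(4, 'Implementation Steps')]
```

A check like this is easy to run across a whole site before publishing, which keeps the outline that machines see consistent with the structure the writer intended.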

Structured summaries are also valuable. Each major section should include a short paragraph that summarizes the key idea. These summaries often become the passages that AI systems reference when generating answers.

Entity-based writing is another important technique. AI models rely heavily on entities, which are identifiable concepts such as brands, technologies, people, or locations. Clearly mentioning and defining these entities helps AI systems recognize relationships between topics and improves the likelihood that the content will be used in generated responses.

4. LLM Optimization

Large Language Model Optimization focuses on ensuring that AI models can access, understand, and trust a website’s content. Since many modern AI systems rely on large language models to generate responses, optimizing content for these models has become an important part of digital visibility.

Allowing AI Crawlers

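AI companies use dedicated crawlers and robots.txt user agent tokens to collect web data or control how it is used. Examples include GPTBot (OpenAI), PerplexityBot (Perplexity), ClaudeBot (Anthropic), and Google-Extended, which is a robots.txt token that controls whether Google may use a site’s content for its generative AI models rather than a separate crawler.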

If these bots are blocked in a website’s robots.txt configuration, the AI systems may not be able to access the site’s content. As a result, the information may never be considered when AI models generate answers.

Allowing these crawlers to access key content areas ensures that AI platforms can evaluate and incorporate the information when responding to user queries.
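One way to verify crawler access is to test a robots.txt file with Python’s standard `urllib.robotparser` module. The sketch below is illustrative: the robots.txt rules and the URL are made-up examples, and the user agent tokens should be confirmed against each vendor’s current documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: GPTBot is allowed outside /private/,
# PerplexityBot is blocked entirely, everyone else is only kept out of /admin/.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: PerplexityBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

def crawler_access(robots_txt, agents, url):
    """Report, per user agent, whether robots.txt permits fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}

agents = ["GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]
report = crawler_access(ROBOTS_TXT, agents, "https://example.com/guides/geo")
for agent, allowed in report.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Here the blanket `Disallow: /` rule shuts PerplexityBot out of the guide, while agents without their own section fall back to the `*` rules. Running a check like this against key URLs makes accidental blocks visible before they cost visibility.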

Implementing an llms.txt File

Another emerging practice is the use of an llms.txt file. This is a proposed convention rather than an established standard: a Markdown file placed at the root of a website that gives AI systems a short, machine-readable overview of the site.

An llms.txt file typically contains the site name, a brief summary of what the site covers, and curated links to its most important pages, each with a short description. By providing this structured context, the file can help AI systems find and interpret the site’s key content.

Because the practice is still new and support among AI platforms is not yet consistent, llms.txt is best treated as a low-cost supplement to crawlable, well-structured pages rather than a replacement for them.
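A file of this kind can be generated from a simple content inventory. The sketch below assumes the layout described in the public llms.txt proposal (a title line, a short quoted summary, then sections of annotated links). The site name, URLs, and descriptions are hypothetical, and the exact format should be checked against the current proposal.

```python
def build_llms_txt(site_name, summary, sections):
    """Render an llms.txt-style Markdown document.

    sections maps a section heading to a list of (title, url, note) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"

# Hypothetical site inventory.
sections = {
    "Guides": [
        ("What Is GEO", "https://example.com/geo/what-is-geo", "Definition and core concepts"),
        ("GEO vs SEO", "https://example.com/geo/geo-vs-seo", "How the two disciplines differ"),
    ],
    "Reference": [
        ("GEO Measurement Metrics", "https://example.com/geo/metrics", "How to track AI citations"),
    ],
}
llms_txt = build_llms_txt(
    "Example Agency",
    "Guides on search and generative engine optimization.",
    sections,
)
print(llms_txt)
```

Generating the file from the same inventory that drives the site’s navigation keeps the two in sync, so the overview does not drift out of date as pages are added or retired.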

Content Architecture for AI Visibility

A successful Generative Engine Optimization strategy depends heavily on how content is organized across a website. In traditional SEO, many websites relied on publishing individual blog posts that targeted specific keywords. While this approach can still generate traffic, it is less effective in an AI-driven search environment where systems attempt to understand entire topics rather than isolated pages.

To improve AI visibility, websites should focus on building topic clusters. A topic cluster is a structured group of related pages centered around a single core subject. Instead of publishing scattered articles, the site develops a content ecosystem where multiple pieces of content explore different aspects of the same topic.

For example, a website that wants to build authority around Generative Engine Optimization might create a cluster like this:

Core Topic: Generative Engine Optimization

Supporting articles might include:

- What Is GEO

- GEO vs SEO

- GEO Content Framework

- GEO Technical Implementation

- GEO Measurement Metrics

In this structure, the core page introduces the main concept and links to several supporting articles that explore subtopics in greater depth. Each supporting article also links back to the main topic page and to other related articles within the cluster.
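The linking rules in this structure can be audited with a short script. The sketch below is a minimal illustration: the URL paths and the link map are hypothetical, and in practice the map would come from a site crawl.

```python
def audit_cluster(pillar, supporting, links):
    """Check a topic cluster's internal links.

    links maps each page to the set of pages it links to.
    Returns a list of human-readable issues (empty if the cluster is well linked).
    """
    issues = []
    for page in supporting:
        outgoing = links.get(page, set())
        if page not in links.get(pillar, set()):
            issues.append(f"{pillar} does not link to {page}")
        if pillar not in outgoing:
            issues.append(f"{page} does not link back to {pillar}")
        siblings = set(supporting) - {page}
        if not outgoing & siblings:
            issues.append(f"{page} links to no sibling article")
    return issues

# Hypothetical cluster around a core GEO page.
pillar = "/geo"
supporting = ["/geo/what-is-geo", "/geo/geo-vs-seo", "/geo/metrics"]
links = {
    "/geo": {"/geo/what-is-geo", "/geo/geo-vs-seo", "/geo/metrics"},
    "/geo/what-is-geo": {"/geo", "/geo/geo-vs-seo"},
    "/geo/geo-vs-seo": {"/geo", "/geo/what-is-geo"},
    "/geo/metrics": {"/geo/what-is-geo"},
}
for issue in audit_cluster(pillar, supporting, links):
    print(issue)  # /geo/metrics does not link back to /geo
```

Each reported issue points to a specific missing link, which makes gaps in the cluster straightforward to fix.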

This architecture helps search engines and AI systems understand that the website provides comprehensive coverage of the subject. Instead of seeing separate articles, the system recognizes a connected body of knowledge around a specific theme.

Topic clusters also improve user experience. Visitors who land on one article can easily navigate to related information, allowing them to explore the subject in more detail without leaving the site.

From an optimization perspective, this structure strengthens both traditional search rankings and AI extraction. Search engines such as Google reward websites that demonstrate topical authority. At the same time, AI platforms such as ChatGPT and Perplexity AI benefit from clearly structured content that provides complete explanations of a subject.

As a result, topic clusters support two important goals. They help improve SEO authority by strengthening internal linking and topical relevance, and they make it easier for AI systems to extract knowledge fragments when generating answers.

Schema Markup for AI Interpretation

Another important component of AI visibility is structured data. Structured data, commonly implemented through schema markup, provides machines with additional information about the meaning and structure of a webpage.

While human readers can easily understand context and relationships within text, machines require clearer signals. Schema markup provides these signals by labeling different types of content in a standardized format.

Several schema types are particularly useful for AI-optimized content.

Article schema helps identify blog posts, news articles, and informational pages. It provides metadata such as the author, publication date, and headline, which can help search engines interpret the content more accurately.

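FAQ schema is especially useful for answer-driven content. By marking up frequently asked questions and their answers, websites can make it easier for machines to extract concise responses. Google now shows FAQ rich results only for a limited set of authoritative sites, so for most websites the markup mainly serves as machine-readable structure rather than a visible search feature.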

Organization schema provides structured information about a company or institution, including its name, website, logo, and social profiles. This information can contribute to knowledge graph entries and strengthen entity recognition.

Product schema is commonly used by ecommerce websites. It allows machines to understand product names, prices, availability, and reviews, which can improve visibility in search features and AI generated shopping recommendations.

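HowTo schema is useful for instructional content. It outlines step-by-step processes in a machine-readable format. Google retired its HowTo rich results in 2023, so the markup now serves mainly to give crawlers and AI systems an explicit step structure rather than to earn a dedicated search feature.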

Implementing these schema types improves machine interpretation of web content. When search engines and AI systems can clearly identify the purpose and structure of a page, they are more likely to extract information accurately and display it in rich search features or AI generated answers.
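
To make the schema types above more concrete, the following Python sketch builds FAQPage and Article markup as JSON-LD and wraps it in the script tag that would sit in a page template. It is a minimal illustration, not a complete implementation: the helper names (`faq_jsonld`, `article_jsonld`, `as_script_tag`) and the sample question are assumptions made for this example, while the property names (`mainEntity`, `acceptedAnswer`, `headline`, `datePublished`) come from the schema.org vocabulary.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def article_jsonld(headline, author_name, date_published):
    """Build a schema.org Article object with headline, author, and date."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date string
    }


def as_script_tag(data):
    """Wrap a JSON-LD object in the script tag that goes into the page HTML."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    # Placeholder content, purely for illustration.
    faq = faq_jsonld(
        [("What is GEO?", "GEO stands for Generative Engine Optimization.")]
    )
    print(as_script_tag(faq))
```

The generated markup should still be checked in a validator before release, because required and recommended properties differ by schema type.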

Building Authority for AI Citations

In the generative search environment, authority and trustworthiness play a critical role. AI systems aim to provide reliable information to users, so they tend to prioritize sources that demonstrate expertise and credibility.

One of the most effective ways to improve citation probability is through expert authorship. Content written or reviewed by individuals with proven knowledge in a field carries greater credibility. Author profiles, professional credentials, and published expertise can strengthen the perceived authority of the content.

Institutional references also contribute to credibility. When content cites well known organizations, research institutions, or official reports, it signals that the information is grounded in trustworthy sources.

Credible data sources are particularly important in fields such as finance, health, and economics. Content that references reliable statistics, research studies, and verified data points is more likely to be considered trustworthy by AI systems.

Citations from authoritative websites further reinforce credibility. When a page references respected institutions or industry leaders, it strengthens the content’s position as a reliable knowledge source.

For example, financial content often references organizations such as the World Bank and the International Monetary Fund. References to central banks and regulatory authorities can also enhance trust signals.

These references help AI systems evaluate the reliability of the information and increase the likelihood that the content will be cited in generated answers.

Measuring AI Search Performance

As AI driven search becomes more common, traditional SEO metrics alone are no longer enough to measure digital performance. Organizations must begin tracking indicators that reflect how their content performs in AI generated responses.

New measurement frameworks focus on visibility across AI platforms and the frequency with which content is used as a knowledge source.

Key GEO Metrics

One important metric is AI Citation Rate. This measures how often a website is mentioned or referenced in AI generated answers. High citation frequency indicates that the content is considered authoritative and useful for answering user questions.

Another useful metric is AI Visibility Score. This indicator tracks how frequently a brand or website appears across AI platforms such as ChatGPT, Perplexity AI, Google Gemini, and Microsoft Copilot. Monitoring this presence helps organizations understand how visible their content is in AI powered discovery channels.

Answer Extraction Rate is another valuable metric. It measures how frequently pieces of content are extracted and used to generate AI responses. This provides insight into whether the structure and clarity of the content make it suitable for generative systems.

Finally, Knowledge Graph Inclusion evaluates whether a brand, organization, or topic is recognized as an entity within search engines and AI models. Being included in knowledge graphs increases the likelihood that the entity will appear in AI responses and structured search results.

Together, these metrics help organizations evaluate the effectiveness of their AI search optimization strategies and identify opportunities for improvement as generative search continues to evolve.
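
The guide does not prescribe formulas for these metrics, so the Python sketch below shows one possible way to compute two of them from a log of recorded AI answers. The `Observation` record, the function names, and the choice to define each rate as a simple share of recorded answers are assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """One recorded AI answer for a tracked query (hypothetical record)."""
    query: str
    platform: str
    mentioned: bool  # brand named in the answer
    cited: bool      # brand page linked or cited as a source


def citation_rate(observations):
    """Share of recorded answers that cite the brand (0.0 if no data)."""
    if not observations:
        return 0.0
    return sum(o.cited for o in observations) / len(observations)


def visibility_by_platform(observations):
    """Share of recorded answers per platform that mention the brand."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for o in observations:
        totals[o.platform] += 1
        hits[o.platform] += o.mentioned
    return {platform: hits[platform] / totals[platform] for platform in totals}


if __name__ == "__main__":
    # Made-up observations, only to show the call pattern.
    log = [
        Observation("best crm for startups", "ChatGPT", True, True),
        Observation("best crm for startups", "Perplexity", True, False),
        Observation("crm pricing comparison", "ChatGPT", False, False),
    ]
    print(citation_rate(log))
    print(visibility_by_platform(log))
```

Running the same fixed query set every month keeps the rates comparable over time.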

Reporting Framework for AI Optimization

A structured reporting system helps track performance and refine strategies.

Weekly Reports

Weekly monitoring should include:

- keyword performance

- AI citations

- crawl errors

- content indexing status

Monthly Reports

Monthly analysis should review:

- content engagement

- AI answer visibility

- backlink growth

- topic authority

Quarterly Reviews

Strategic reviews should focus on:

- content expansion opportunities

- new AI search platforms

- competitor analysis

- long-term GEO strategy

Here is a sample reporting format:

SEO / AEO / GEO Reporting Format

Deliverable-ready templates for weekly, bi‑weekly, monthly and quarterly reporting

Designed to capture traditional SEO performance + AI visibility measurement

How to use this template

- Duplicate the relevant section for each reporting period.

- Keep Executive Summary to ≤ 10 lines; push detail into tables.

- Always include: deliverables shipped, KPI movement, what changed, what’s next, risks.

Reporting cadence (recommended)

| Frequency | Report | Primary focus |
| --- | --- | --- |
| Weekly | Performance Dashboard | Rankings, traffic, conversions, technical health |
| Bi‑weekly | Content Performance | Page‑level metrics, keyword movement, gaps |
| Monthly | AI Visibility Audit | LLM citations/mentions, AI referrals, entity status |
| Quarterly | Strategic Review | Market analysis, competitive position, strategy changes |

1) Weekly Performance Dashboard (template)

A. Executive Summary

| Item | This week | WoW change | Notes / drivers |
| --- | --- | --- | --- |
| Organic sessions | <#> | <+/-#%> | |
| Organic conversions | <#> | <+/-#%> | |
| AI Search sessions | <#> | <+/-#%> | |
| AI Search conversions | <#> | <+/-#%> | |
| Top wins | e.g., snippet wins, new rankings, content shipped | | |
| Top risks | e.g., indexing issues, CWV regression, compliance blockers | | |

B. KPI Snapshot

| Area | Metric | Target | Actual | Status | Comment |
| --- | --- | --- | --- | --- | --- |
| Technical | Core Web Vitals (LCP/CLS/INP) | <targets> | <values> | 🟢/🟡/🔴 | |
| Indexing | Valid indexed pages | | | 🟢/🟡/🔴 | |
| Visibility | Top 10 keyword count | | | 🟢/🟡/🔴 | |
| AEO | Featured snippet wins | | | 🟢/🟡/🔴 | |
| GEO | AI citations/mentions (monthly rollup) | | | 🟢/🟡/🔴 | |

C. Deliverables shipped (this week)

| Deliverable | Owner | Status | Link | Acceptance notes |
| --- | --- | --- | --- | --- |
| <e.g., llms.txt deployed> | <name> | Done/In progress | <URL> | |
| <e.g., homepage schema update> | <name> | Done/In progress | <URL> | |

2) Bi‑weekly Content Performance (template)

A. Content shipped & updated

| URL / Asset | Type | Primary query | Language/Market | Change type | Result |
| --- | --- | --- | --- | --- | --- |
| <url> | Landing / Article / FAQ | <query> | <DE/NL/ES/…> | New/Update | Up/Flat/Down |

B. Snippet & PAA tracking

| Question | Snippet type | Status (Win/Loss) | Current winner | Next action |
| --- | --- | --- | --- | --- |
| <question> | Paragraph/List/Table | Win/Loss | <competitor> | <action> |

C. Content gaps & next briefs

| Gap / Opportunity | Impact | Recommended asset | Owner | Due |
| --- | --- | --- | --- | --- |
| <gap> | High/Med/Low | <asset> | <name> | <date> |

3) Monthly AI Visibility Audit (template)

A. AI referral traffic (GA4)

| Platform | Sessions | Engaged sessions | Conversions | Conv. rate | Notes |
| --- | --- | --- | --- | --- | --- |
| ChatGPT | | | | | |
| Perplexity | | | | | |
| Gemini / AI Overviews | | | | | |
| Copilot | | | | | |

B. Citation monitoring (standard query set)

| Query | Platform | Brand mentioned? | Cited/linked? | Citation accuracy | Notes |
| --- | --- | --- | --- | --- | --- |
| <query> | ChatGPT/Perplexity/… | Yes/No | Yes/No | Accurate/Partial/Wrong | |

C. Entity recognition status

| Asset | Status | Evidence | Next step |
| --- | --- | --- | --- |
| Organization sameAs links | OK/Needs work | <notes> | |
| Directory profiles (LinkedIn, Crunchbase, registries) | OK/Needs work | <links> | |
| PR mentions / authoritative citations | OK/Needs work | <links> | |

4) Quarterly Strategic Review (template)

A. Market & competitive analysis

| Market | Visibility trend | Top competitors | Strategic notes |
| --- | --- | --- | --- |
| <DE/NL/ES/…> | Up/Flat/Down | <names> | |

B. Strategy adjustments

| What changed | Why | Expected impact | Owner | When |
| --- | --- | --- | --- | --- |
| <change> | <reason> | High/Med/Low | <name> | <date> |

Appendix: Standard KPI definitions

AI Search sessions: GA4 sessions where source/medium matches known AI referrers (ChatGPT, Perplexity, Gemini, Copilot).

AI citations: count of query responses where the brand is cited/linked; track accuracy separately.

Snippet wins: queries where your page holds the featured snippet / PAA answer.
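
The "AI Search sessions" definition above depends on recognizing AI referrers. The Python sketch below shows one way to classify referrer URLs exported from analytics. The hostname list and the function names are assumptions for illustration and should be checked against the referrers that actually appear in your own GA4 data.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against your own referral reports.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer_url):
    """Return the AI platform for a full referrer URL, or None if no match."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, platform in AI_REFERRERS.items():
        # Match the domain itself or any of its subdomains.
        if host == domain or host.endswith("." + domain):
            return platform
    return None


def count_ai_sessions(referrer_urls):
    """Count sessions per AI platform from an iterable of referrer URLs."""
    counts = {}
    for url in referrer_urls:
        platform = classify_referrer(url)
        if platform is not None:
            counts[platform] = counts.get(platform, 0) + 1
    return counts


if __name__ == "__main__":
    sample = [
        "https://chatgpt.com/",
        "https://www.perplexity.ai/search?q=example",
        "https://www.google.com/",
    ]
    print(count_ai_sessions(sample))
```

Matching on the hostname rather than on a substring of the whole URL avoids counting unrelated domains that merely contain one of these names.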

LLM AEO GEO Implementation Checklist

| Step | Action Item | Priority | Dependency | Frequency | Effort Estimate | Verification Criteria |
| --- | --- | --- | --- | --- | --- | --- |
| Day 1: Technical Foundation | Update robots.txt for AI crawlers | High | Technical SEO | One-time | 0.5 hr | Robots shows AI Allow rules |
| | Verify robots.txt live and accessible | High | Technical SEO | One-time | 0.25 hr | 200 OK at /robots.txt |
| | Add basic structured data to homepage | High | Technical SEO | One-time | 0.5 hr | Homepage schema validates |
| Day 2: Identify Quick Wins | Select top 3 pages to optimize this week | High | SEO Lead | One-time | 0.5 hr | List finalized |
| | Define the main question each page should answer | High | Content Strategist | One-time | 0.5 hr | Questions documented |
| | Create per-page optimization checklist | Medium | SEO Analyst | One-time | 0.25 hr | Checklists attached |
| Day 3: Optimize Page #1 | Rewrite Page #1 to Q&A structure | High | Content Team | One-time | 2 hrs | Updated draft published |
| | QA Page #1 for readability and extraction | High | Editor | One-time | 0.5 hr | Editor sign-off complete |
| Day 4: Optimize Pages #2 and #3 | Rewrite Page #2 to Q&A structure | High | Content Team | One-time | 1.5 hrs | Page #2 published |
| | Rewrite Page #3 to Q&A structure | High | Content Team | One-time | 1.5 hrs | Page #3 published |
| | Run quick quality check on both pages | High | Editor | One-time | 0.5 hr | QC notes resolved |
| Day 5: Test & Tracking | Test optimized pages in ChatGPT/Claude/Perplexity | High | SEO Analyst | One-time | 0.75 hr | Results recorded in tracker |
| | Create basic AI visibility tracking sheet | High | SEO Analyst | One-time | 0.25 hr | Sheet link shared |
| | Schedule reminders for weekly/monthly tasks | Medium | Project Manager | One-time | 0.25 hr | Reminders on calendar |

| Step | Action Item | Priority | Dependency | Frequency | Effort Estimate | Verification Criteria |
| --- | --- | --- | --- | --- | --- | --- |
| Step 1.1: Allow AI Crawlers to Access Your Site | Open /robots.txt and back up the existing file | High | Technical SEO | One-time | 0.25 hr | Backup stored in version control or dated copy |
| | Add Allow rule for OAI-SearchBot | High | Technical SEO | One-time | 0.25 hr | Line present and visible at /robots.txt |
| | Add Allow rule for GPTBot | High | Technical SEO | One-time | 0.25 hr | Line present and visible at /robots.txt |
| | Add Allow rule for CCBot (Common Crawl) | High | Technical SEO | One-time | 0.25 hr | Line present and visible at /robots.txt |
| | Add Allow rule for anthropic-ai | High | Technical SEO | One-time | 0.25 hr | Line present and visible at /robots.txt |
| | Add Allow rule for Claude-Web | High | Technical SEO | One-time | 0.25 hr | Line present and visible at /robots.txt |
| | Add Allow rule for PerplexityBot | High | Technical SEO | One-time | 0.25 hr | Line present and visible at /robots.txt |
| | Add Allow rule for GoogleOther | High | Technical SEO | One-time | 0.25 hr | Line present and visible at /robots.txt |
| | Retain Disallow rules for admin/private paths (/admin, /wp-admin, /cart, etc.) | High | Technical SEO | One-time | 0.25 hr | Sensitive paths remain disallowed |
| | Ensure /blog path is crawlable | High | Technical SEO | One-time | 0.25 hr | No disallow matching /blog in robots.txt |
| | Ensure /products path is crawlable | High | Technical SEO | One-time | 0.25 hr | No disallow matching /products in robots.txt |
| | Ensure /services path is crawlable | High | Technical SEO | One-time | 0.25 hr | No disallow matching /services in robots.txt |
| | Validate robots.txt syntax with an online validator | High | Technical SEO | One-time | 0.25 hr | Validator returns no errors |
| | Publish robots.txt and verify at https://yourdomain.com/robots.txt | High | Technical SEO | One-time | 0.25 hr | URL returns 200 and updated content |
| | Document change log with date and editor | Medium | Project Manager | One-time | 0.25 hr | Change record stored in docs |
| | Set calendar reminder to check server logs for AI crawlers in 2–4 weeks | Medium | SEO Lead | One-time | 0.1 hr | Reminder created in calendar |
| Step 1.2: Implement Structured Data | Inventory all Product pages | High | Content Ops | One-time | 1 hr | List of all Product URLs completed |
| | Inventory all Service pages | High | Content Ops | One-time | 1 hr | List of all Service URLs completed |
| | Inventory Organization info (name, address, phone, logo) | High | Content Ops | One-time | 0.5 hr | Organization data sheet complete |
| | Generate JSON-LD for Product (name, description, price, availability, brand, reviews, images) | High | Technical SEO | One-time | 2 hrs | JSON-LD snippet passes Rich Results Test |
| | Generate JSON-LD for Service (name, description, provider, areaServed) | High | Technical SEO | One-time | 1.5 hrs | JSON-LD snippet passes Rich Results Test |
| | Generate JSON-LD for Organization (name, description, address, contact) | High | Technical SEO | One-time | 1 hr | JSON-LD snippet passes Rich Results Test |
| | Implement JSON-LD in <head> of Product templates | High | Web Dev | One-time | 1 hr | View-source shows JSON-LD block on Product pages |
| | Implement JSON-LD in <head> of Service templates | High | Web Dev | One-time | 1 hr | View-source shows JSON-LD block on Service pages |
| | Implement Organization JSON-LD on homepage | High | Web Dev | One-time | 0.5 hr | View-source shows Organization JSON-LD on homepage |
| | Add customer ratings/reviews where available | Medium | Content Ops | One-time | 1 hr | AggregateRating validates without warnings |
| | Attach canonical product images in JSON-LD | Medium | Content Ops | One-time | 0.5 hr | Image URLs valid and accessible |
| | Validate all schema with Google Rich Results Test | High | Technical SEO | One-time | 1 hr | No critical errors; warnings documented |
| | Create schema update SOP for content changes | Medium | Project Manager | One-time | 1 hr | SOP document approved and stored |
| Step 1.3: Verify Everything Works | Confirm robots.txt is publicly accessible | High | Technical SEO | One-time | 0.25 hr | HTTP 200 and correct content |
| | Run crawler access tests using multiple user-agents | High | Technical SEO | One-time | 0.5 hr | Tools show no blocking rules for AI bots |
| | Create /ai-test.html with sample content and JSON-LD | High | Web Dev | One-time | 0.5 hr | Page loads and validates JSON-LD |
| | Submit /ai-test.html URL for indexing (if applicable) | Medium | SEO Lead | One-time | 0.25 hr | URL reflected in index coverage |
| | Monitor server logs for AI crawler hits | Medium | DevOps | One-time | 0.5 hr | Log entries show AI user-agent accesses |
| | Check Google Search Console for crawl/index errors | High | SEO Lead | One-time | 0.5 hr | No critical Coverage errors |
| | Scan site for accidental noindex tags | High | Technical SEO | One-time | 0.5 hr | No unexpected noindex found |
| | Document verification outcomes and issues | Medium | Project Manager | One-time | 0.5 hr | Verification report filed |
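
The crawler access test in Step 1.3 can be approximated offline with Python's standard `urllib.robotparser` module. The sketch below is an illustration under assumptions: `SAMPLE_ROBOTS` is a hypothetical file, `check_access` is a helper name invented here, and the user-agent tokens are simply the ones listed in Step 1.1. Real crawlers may interpret rules slightly differently from this parser, so treat the result as a first check rather than proof.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens listed in Step 1.1 of the checklist.
AI_AGENTS = [
    "OAI-SearchBot", "GPTBot", "CCBot", "anthropic-ai",
    "Claude-Web", "PerplexityBot", "GoogleOther",
]

# Hypothetical robots.txt used only for this example.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
User-agent: PerplexityBot
Disallow: /admin
Allow: /

User-agent: *
Disallow: /admin
Disallow: /cart
"""


def check_access(robots_txt, agents, paths, base="https://yourdomain.com"):
    """Return {(agent, path): allowed} according to the robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        (agent, path): parser.can_fetch(agent, base + path)
        for agent in agents
        for path in paths
    }


if __name__ == "__main__":
    report = check_access(SAMPLE_ROBOTS, AI_AGENTS, ["/blog", "/products", "/admin"])
    for (agent, path), allowed in sorted(report.items()):
        print(f"{agent:15} {path:10} {'allowed' if allowed else 'BLOCKED'}")
```

One design point is worth noting. A crawler follows only the most specific group that matches its user-agent, so Disallow rules for private paths must be repeated inside any group that names an AI crawler. In the sample file, GPTBot is kept out of /admin but not out of /cart, because /cart is disallowed only in the wildcard group. Python's parser also applies the first matching rule within a group, which is why the Disallow line is placed before Allow.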

| Step | Action Item | Priority | Dependency | Frequency | Effort Estimate | Verification Criteria |
| --- | --- | --- | --- | --- | --- | --- |
| Step 2.1: Identify Most Important Customer Questions | Export Google Search Console queries for last 6 months | High | SEO Analyst | One-time | 1 hr | CSV exported and stored |
| | Collect top support ticket themes | High | Support Lead | One-time | 1 hr | List of recurring issues compiled |
| | Review sales call notes for repeated objections | High | Sales Ops | One-time | 1 hr | Top objections documented |
| | Scrape/collect Reddit and forum questions in niche | Medium | Marketing | One-time | 2 hrs | Question list with sources compiled |
| | Compile 30 distinct customer questions | High | Content Strategist | One-time | 2 hrs | Spreadsheet with 30+ questions complete |
| | Tag each question by funnel stage (TOFU/MOFU/BOFU) | High | Content Strategist | One-time | 0.5 hr | All questions labeled by stage |
| | Score questions for business impact (H/M/L) | High | Content Strategist | One-time | 0.5 hr | Scores added to sheet |
| | Score questions for estimated ask volume (H/M/L) | Medium | Content Strategist | One-time | 0.5 hr | Scores added to sheet |
| | Score questions for competition (H/M/L) | Medium | SEO Analyst | One-time | 0.5 hr | Scores added to sheet |
| | Score questions for difficulty to answer (E/M/H) | Medium | Content Strategist | One-time | 0.5 hr | Scores added to sheet |
| | Select top 10 priority questions | High | Content Strategist | One-time | 0.25 hr | Top 10 marked and approved |
| Step 2.2: Audit Your Current Content | Create audit spreadsheet with defined columns | High | SEO Analyst | One-time | 0.5 hr | Template sheet created |
| | List all existing content URLs (blogs, landing, product) | High | Content Ops | One-time | 2 hrs | URL inventory complete |
| | Map each question to existing URL (Yes/Partial/No) | High | Content Strategist | One-time | 2 hrs | Mapping complete |
| | Assign Content Quality Score (1–10) per mapped URL | High | SEO Analyst | One-time | 1.5 hrs | Scores completed |
| | Flag AEO-Ready status per URL (Yes/No) | High | SEO Analyst | One-time | 0.5 hr | AEO-Ready column filled |
| | Set Business Impact and Traffic Potential per URL | High | SEO Analyst | One-time | 1 hr | Columns filled for all URLs |
| | Estimate Update Difficulty (Easy/Med/Hard) | Medium | Content Strategist | One-time | 0.5 hr | Difficulty column filled |
| | Calculate Priority Score using weighted formula | High | SEO Analyst | One-time | 0.25 hr | Priority column computed |
| | Define Action Needed (Reformat/Expand/Create/Optimize) | High | Content Strategist | One-time | 0.5 hr | Actions assigned |
| | Set Status (Not Started/In Progress/Complete) | Medium | Project Manager | One-time | 0.25 hr | Status set for each row |
| | Add Notes on gaps and improvements | Medium | Content Strategist | One-time | 0.5 hr | Notes completed |
| Step 2.3: Identify Quick Wins | Filter audit to QS 6–8, High impact, Easy/Med difficulty, AEO-Ready No | High | SEO Analyst | One-time | 0.25 hr | Filtered view saved |
| | Select 3–5 pages as quick wins | High | SEO Lead | One-time | 0.25 hr | List approved by stakeholders |
| | Create optimization brief for each selected page | High | Content Strategist | One-time | 1 hr | Briefs completed |
| | Schedule Phase 3 transformation for selected pages | Medium | Project Manager | One-time | 0.25 hr | Tasks scheduled in PM tool |
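
Step 2.2 calls for a weighted Priority Score and Step 2.3 for a quick-win filter, but the checklist does not state the weights. The Python sketch below shows one possible scoring scheme. The `WEIGHTS` values, the numeric mapping of Low/Med/High, and the `AuditRow` structure are all hypothetical choices for illustration, while the quick-win conditions are taken directly from the Step 2.3 filter.

```python
from dataclasses import dataclass

LEVEL = {"Low": 1, "Med": 2, "High": 3}
EFFORT = {"Easy": 3, "Med": 2, "Hard": 1}  # easier updates score higher

# Hypothetical weights; the checklist does not specify them.
WEIGHTS = {"impact": 0.4, "traffic": 0.3, "effort": 0.2, "quality_gap": 0.1}


@dataclass
class AuditRow:
    url: str
    quality_score: int       # Content Quality Score, 1-10
    business_impact: str     # Low / Med / High
    traffic_potential: str   # Low / Med / High
    update_difficulty: str   # Easy / Med / Hard
    aeo_ready: bool


def priority_score(row):
    """Weighted score of at most 1.0; higher means optimize sooner."""
    parts = {
        "impact": LEVEL[row.business_impact] / 3,
        "traffic": LEVEL[row.traffic_potential] / 3,
        "effort": EFFORT[row.update_difficulty] / 3,
        "quality_gap": (10 - row.quality_score) / 9,
    }
    return round(sum(WEIGHTS[key] * parts[key] for key in WEIGHTS), 3)


def is_quick_win(row):
    """Apply the Step 2.3 filter: QS 6-8, High impact, Easy/Med, not AEO-ready."""
    return (
        6 <= row.quality_score <= 8
        and row.business_impact == "High"
        and row.update_difficulty in ("Easy", "Med")
        and not row.aeo_ready
    )


if __name__ == "__main__":
    # Made-up rows, only to show the call pattern.
    rows = [
        AuditRow("/pricing", 7, "High", "High", "Easy", False),
        AuditRow("/blog/old-post", 4, "Low", "Med", "Hard", False),
    ]
    for row in sorted(rows, key=priority_score, reverse=True):
        print(row.url, priority_score(row), is_quick_win(row))
```

The same calculation can be done as a spreadsheet formula. What matters is that the weights are agreed once and applied consistently to every row.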

| Step | Action Item | Priority | Dependency | Frequency | Effort Estimate | Verification Criteria |
| --- | --- | --- | --- | --- | --- | --- |
| Step 3.1: Apply the Q&A Content Structure | Set H1 to broad SEO-optimized topic per page | High | Content Team | Recurring | 0.25 hr/page | H1 present and unique per page |
| | Convert main topic into H2 question | High | Content Team | Recurring | 0.25 hr/page | H2 phrased as a question |
| | Write 2–3 sentence direct answer below H2 | High | Content Team | Recurring | 0.5 hr/page | Direct answer exists and is extractable |
| | Add 2–3 paragraphs of supporting context | Medium | Content Team | Recurring | 0.75 hr/page | Context covers key details |
| | Create 3–4 additional H2 sections as related questions | Medium | Content Team | Recurring | 0.75 hr/page | Additional H2s added |
| | Use bullets/numbering for lists and processes | Medium | Content Team | Recurring | 0.25 hr/page | Lists formatted as bullets/steps |
| | Add Key Takeaways or FAQ with 3–5 Q&As | High | Content Team | Recurring | 0.5 hr/page | FAQ section present and concise |
| | Validate header hierarchy (one H1; logical H2/H3) | High | SEO Analyst | Recurring | 0.25 hr/page | Heading outline passes audit |
| Step 3.2: Transform Existing Content | Select 3–5 quick-win pages from audit | High | SEO Lead | One-time | 0.25 hr | List finalized |
| | Rewrite each selected page into Q&A structure | High | Content Team | One-time | 1.5 hrs/page | Updated drafts completed |
| | Insert direct answers under each H2 | High | Content Team | One-time | 0.5 hr/page | Answers present and concise |
| | Optimize readability (short paragraphs, active voice) | Medium | Editor | One-time | 0.5 hr/page | Readability score improved (e.g., Hemingway) |
| | Add relevant internal links to clusters and pillars | High | SEO Analyst | One-time | 0.5 hr/page | 2–4 contextual internal links added |
| | Publish revised pages and update sitemap if needed | High | Web Admin | One-time | 0.25 hr/page | Pages live and in XML sitemap |
| | Log before/after metrics baseline | Medium | SEO Analyst | One-time | 0.25 hr/page | Baseline recorded in tracker |
| Step 3.3: Create New Content for Gaps | Select top missing questions from audit Tier 1 | High | Content Strategist | Recurring | 0.5 hr/month | List approved for month |
| | Draft comprehensive outline per new page | High | Content Team | Recurring | 0.75 hr/page | Outline approved |
| | Write 1000–1500 word article in Q&A format | High | Content Team | Recurring | 3 hrs/page | Draft meets length and structure |
| | Add ‘Why This Matters’ section | Medium | Content Team | Recurring | 0.25 hr/page | Section present and informative |
| | Add ‘How It Works’ / step-by-step section | Medium | Content Team | Recurring | 0.5 hr/page | Numbered steps included |
| | Add ‘Common Mistakes/Considerations’ section | Medium | Content Team | Recurring | 0.5 hr/page | Section present with 3+ points |
| | Add ‘Comparison/Options/Best Practices’ section | Medium | Content Team | Recurring | 0.5 hr/page | Section present with table/bullets |
| | Insert FAQ/Key Takeaways | Medium | Content Team | Recurring | 0.25 hr/page | FAQ present |
| | Have SME review for accuracy | High | Subject Matter Expert | Recurring | 0.5 hr/page | SME sign-off recorded |
| | Implement JSON-LD where relevant | High | Technical SEO | Recurring | 0.25 hr/page | Schema validates |
| | Publish and promote via owned channels | Medium | Marketing | Recurring | 0.5 hr/page | Post live and shared |
| | Add internal links from existing content within 2 weeks | High | SEO Analyst | Recurring | 0.5 hr/page | New content receives ≥3 incoming links |
| Step 3.4: Optimize Product and Service Pages | Rewrite intro to answer ‘What is it and who is it for?’ | High | Content Team | One-time | 0.75 hr/page | Intro answers extracted cleanly |
| | Create Feature → Benefit bullets or table | High | Content Team | One-time | 0.75 hr/page | Table/bullets present with clear benefits |
| | Build comparison table vs 2 competitors | High | Content Team | One-time | 1 hr/page | Comparison table present and factual |
| | Add transparent pricing section with terms | High | Content Team | One-time | 0.5 hr/page | Pricing visible and accurate |
| | Create 5–7 item FAQ addressing objections | High | Content Team | One-time | 0.75 hr/page | FAQ present and relevant |
| | Insert Product/Service JSON-LD schema | High | Technical SEO | One-time | 0.5 hr/page | Schema validates without errors |
| | Retain and optimize CTAs and social proof | Medium | Content Team | One-time | 0.25 hr/page | CTAs above the fold; proof visible |
| | Add specifications table if applicable | Medium | Content Team | One-time | 0.5 hr/page | Specs table complete |
| | Re-validate page in Rich Results Test | High | SEO Analyst | One-time | 0.25 hr/page | Passes with no critical errors |
| Step 3.5: Monthly Content Creation Rhythm | Week 1: Identify 4 questions to answer | High | Content Strategist | Recurring | 0.5 hr/month | List documented in content calendar |
| | Week 1: Review competitor coverage for selected questions | Medium | SEO Analyst | Recurring | 0.5 hr/month | Notes added to briefs |
| | Week 1: Gather unique insights/data/case studies | Medium | Content Team | Recurring | 1 hr/month | Evidence included in briefs |
| | Week 1: Draft outlines for 2–4 pages | High | Content Team | Recurring | 1 hr/month | Outlines approved |
| | Weeks 2–3: Write 2–4 pages | High | Content Team | Recurring | 6 hrs/month | Drafts completed |
| | Weeks 2–3: SME review | High | SME | Recurring | 1 hr/month | SME approvals stored |
| | Week 4: Edit for AI extraction and clarity | High | Editor | Recurring | 1 hr/month | Edits applied; direct answers confirmed |
| | Week 4: Add schema and internal links | High | Technical SEO | Recurring | 0.75 hr/month | Schema validates; links added |
| | Week 4: Publish and promote | Medium | Marketing | Recurring | 0.75 hr/month | Content live and shared |
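
The heading checks in Step 3.1 (exactly one H1, a logical H2/H3 order, H2s phrased as questions) can be automated. The Python sketch below uses only the standard library's `html.parser`. The class and function names are invented for this example, and the rule that an H2 "is a question" if it ends with a question mark is a simplifying assumption.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect (level, text) for every h1-h6 element in document order."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._open = None  # [level, text] while inside a heading

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._open = [int(tag[1]), ""]

    def handle_data(self, data):
        if self._open is not None:
            self._open[1] += data

    def handle_endtag(self, tag):
        if self._open is not None and tag == f"h{self._open[0]}":
            self.headings.append((self._open[0], self._open[1].strip()))
            self._open = None


def audit_headings(html):
    """Return a list of human-readable issues; an empty list means a pass."""
    parser = HeadingCollector()
    parser.feed(html)
    parser.close()
    levels = [level for level, _ in parser.headings]
    issues = []
    if levels.count(1) != 1:
        issues.append(f"expected exactly one H1, found {levels.count(1)}")
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:  # e.g. an H2 followed directly by an H4
            issues.append(f"heading level jumps from H{prev} to H{cur}")
    for level, text in parser.headings:
        if level == 2 and not text.endswith("?"):
            issues.append(f"H2 is not phrased as a question: {text!r}")
    return issues


if __name__ == "__main__":
    page = "<h1>GEO Guide</h1><h2>What is GEO?</h2><h4>Details</h4>"
    for issue in audit_headings(page):
        print(issue)
```

A check like this can run against rendered page HTML before publishing, so the "Heading outline passes audit" criterion has a repeatable test behind it.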

| Step | Action Item | Priority | Dependency | Frequency | Effort Estimate | Verification Criteria |
| --- | --- | --- | --- | --- | --- | --- |
| Step 4.1: Build Reddit Presence | Identify 5–7 relevant subreddits | High | Marketing | One-time | 1 hr | List of target subs with links |
| | Document sub rules, tone, and post types | Medium | Marketing | One-time | 1 hr | Sub rules doc created |
| | Create weekly schedule for answering 2–3 questions | Medium | Marketing | Recurring | 0.25 hr/week | Schedule in calendar |
| | Track engagement (upvotes, replies, saved) | Medium | Marketing | Recurring | 0.25 hr/week | Tracker updated weekly |
| | Avoid promotion; craft helpful, specific answers | High | Marketing | Recurring | 0.5 hr/week | Posts reflect non-promotional guidance |
| Step 4.2: Respond to Specific Reddit Questions | Search Reddit weekly for targeted queries | Medium | Marketing | Recurring | 0.5 hr/week | List of candidate threads captured |
| | Draft 150–300 word answers with first 2–3 sentences direct | High | Marketing | Recurring | 1 hr/week | Answers meet length/tone criteria |
| | Proofread and post with appropriate flair/tags | Medium | Marketing | Recurring | 0.25 hr/week | Posts comply with sub rules |
| | Reply to follow-up questions once per thread | Medium | Marketing | Recurring | 0.25 hr/week | Follow-ups posted where applicable |
| Step 4.3: Systematic Review Collection | Select 2–3 primary review platforms (e.g., G2, Google) | High | Customer Success | One-time | 0.5 hr | Target platforms confirmed |
| | Build post-purchase review request email (Template A) | High | Customer Success | One-time | 1 hr | Template approved |
| | Build 30–60 day value-realized request email (Template B) | High | Customer Success | One-time | 1 hr | Template approved |
| | Build long-term customer request email (Template C) | Medium | Customer Success | One-time | 1 hr | Template approved |
| | Set automation triggers for each email template | High | Marketing Ops | One-time | 1 hr | Automation sends test successfully |
| | Create decision tree for when to ask/not ask | Medium | Customer Success | One-time | 0.5 hr | Decision tree documented |
| | Set monthly review target (≥3 new reviews) | Medium | Customer Success | Recurring | 0.25 hr/month | Target recorded and reported |
| | Configure one gentle reminder after 7 days | Medium | Marketing Ops | One-time | 0.25 hr | Reminder workflow active |
| | Track average rating and monthly volume | Medium | Customer Success | Recurring | 0.25 hr/month | Dashboard reflects ≥4.5 average |
| Step 4.4: Respond to Reviews (Positive & Negative) | Check review platforms twice weekly | High | Customer Success | Recurring | 0.5 hr/week | All new reviews identified within 48 hrs |
| | Respond to positive reviews (50–75 words, specific) | Medium | Customer Success | Recurring | 0.5 hr/week | Positive responses posted within 48 hrs |
| | Respond to negative reviews (75–150 words; offer solution) | High | Customer Success | Recurring | 0.5 hr/week | Negative responses posted within 48 hrs |
| | Take sensitive conversations offline with contact info | High | Customer Success | Recurring | 0.25 hr/week | Offline resolution invites included |
| | Maintain 100% response rate KPI | High | Customer Success | Recurring | 0.25 hr/week | Monthly report shows 100% response rate |
| Step 4.5: Join & Participate in Industry Communities | Identify 5–7 relevant communities (Facebook/Quora/LinkedIn) | High | Marketing | One-time | 1 hr | Community list created |
| | Evaluate join method, activity level, and fit | Medium | Marketing | One-time | 0.5 hr | Notes captured per community |
| | Choose 2–3 primary communities to focus on | High | Marketing | One-time | 0.25 hr | Selection documented |
| | Write non-salesy introduction post | Medium | Marketing | One-time | 0.25 hr | Intro posted and approved |
| | Schedule weekly participation (30–120 min) | Medium | Marketing | Recurring | 0.5 hr/week | Calendar blocked for engagement |
| | Track mentions, referrals, and connections | Medium | Marketing | Recurring | 0.25 hr/week | Tracker updated with outcomes |

| Step | Action Item | Priority | Dependency | Frequency | Effort Estimate | Verification Criteria |

| Step 5.1: Optimize Site Speed | Run PageSpeed Insights for top 10 pages | High | SEO Analyst | Recurring | 1 hr/month | Scores recorded for mobile/desktop |

| Compress and convert images to WebP | High | Web Dev | Recurring | 2 hrs/month | Average image size <100KB where possible | |

| Implement lazy loading for below-the-fold images | High | Web Dev | One-time | 0.75 hr | Lazy load attribute present and working | |

| Minify JS and CSS assets | High | Web Dev | One-time | 1 hr | Minified bundles deployed | |

| Enable code splitting for large bundles | Medium | Web Dev | One-time | 1 hr | Chunks verified via network tab | |

| Configure browser caching and CDN | High | Web Dev | One-time | 1 hr | Cache headers and CDN confirmed | |

| Trim unused third-party scripts | Medium | Web Dev | Recurring | 1 hr/quarter | Removed scripts documented | |

| Optimize web font loading (preload/self-host) | Medium | Web Dev | One-time | 0.75 hr | FOIT/FOUT minimized; preload tags present | |

| Re-test until LCP<2.5s, INP<200ms, CLS<0.1 | High | SEO Analyst | Recurring | 0.5 hr/month | Thresholds met on PSI | |

| Step 5.2: Build an Internal Linking Strategy | Map pillar pages and cluster pages | High | SEO Analyst | One-time | 1 hr | Visual map stored |

| Ensure each key page has ≥3 incoming internal links | High | SEO Analyst | Recurring | 1 hr/month | No orphan pages in crawl report | |

| Add contextual links with varied anchor text | Medium | Content Team | Recurring | 1 hr/month | Anchor text diversity confirmed | |

| Add links within body content (not just footers) | Medium | Content Team | Recurring | 0.5 hr/month | Links placed in relevant sections | |

| Fix broken links and redirect chains | High | Technical SEO | Recurring | 1 hr/month | Zero 404/redirect chain in crawl | |

| Link new content from at least 3 existing pages within 2 weeks | High | SEO Analyst | Recurring | 0.5 hr/page | New pages show ≥3 internal backlinks | |

| Step 5.3: Fix Technical SEO Issues | Crawl site and export error list | High | SEO Analyst | Recurring | 1 hr/quarter | Crawl report archived |

| Normalize URL structure (short, hyphenated, lowercase) | High | Technical SEO | One-time | 2 hrs | URLs meet standard conventions | |

| Optimize unique title tags (≤60 chars) | High | SEO Analyst | Recurring | 2 hrs/month | Titles pass length and uniqueness checks | |

| Write compelling meta descriptions (≤160 chars) | Medium | Content Team | Recurring | 2 hrs/month | Descriptions present and unique | |

| Ensure exactly one H1 per page with logical H2/H3 | High | SEO Analyst | Recurring | 1 hr/month | Heading audits pass | |

| Add descriptive alt text to all images | Medium | Content Team | Recurring | 2 hrs/month | Alt text coverage ≥95% | |

| Fix 404s and shorten redirect chains | High | Technical SEO | Recurring | 1 hr/month | Zero critical 404s/chains | |

| Verify mobile usability (tap targets, font size) | High | SEO Analyst | Recurring | 1 hr/quarter | Mobile-friendly tests pass | |

| Maintain and submit XML sitemap in GSC | High | SEO Analyst | Recurring | 0.5 hr/month | Sitemap index status OK | |

| Re-verify robots.txt and schema validity | High | SEO Analyst | Recurring | 0.5 hr/month | No validation errors reported | |

| Apply canonical tags where duplicates exist | High | Technical SEO | One-time | 1 hr | Canonicalization confirmed | |

| Step 5.4: Build High-Quality Backlinks | Choose 3–4 white-hat link tactics to execute | High | SEO Lead | One-time | 0.5 hr | Tactics documented |

| List 10–15 target sites per tactic | High | SEO Analyst | Recurring | 1.5 hrs/month | Target list compiled | |

| Create 2–3 linkable assets (tools/data/case studies) | High | Content Team | One-time | 8 hrs | Assets published | |

| Send personalized outreach emails | High | SEO Analyst | Recurring | 2 hrs/month | Outreach log maintained | |

| Aim for 3–5 quality links per month | High | SEO Lead | Recurring | – | Monthly KPI reported | |

| Track referring domains and authority growth | Medium | SEO Analyst | Recurring | 0.5 hr/month | Backlink report updated | |

| Avoid paid/spammy link schemes | High | SEO Lead | Recurring | – | No toxic links detected in audits | |

| Step 5.5: Monthly SEO Maintenance Checklist | Week 1: Review GSC impressions/CTR/clicks | High | SEO Analyst | Recurring | 0.75 hr/month | Trends noted and anomalies flagged |

| Week 1: Review GA4 traffic and conversions | High | SEO Analyst | Recurring | 0.75 hr/month | Dashboard updated | |

| Week 2: Refresh declining pages | Medium | Content Team | Recurring | 2 hrs/month | Updated content republished | |

| Week 2: Identify new keyword opportunities | Medium | SEO Analyst | Recurring | 1 hr/month | New targets added to plan | |

| Week 3: Tech spot-check (speed/errors/schema) | High | Technical SEO | Recurring | 1 hr/month | Spot-check log clean | |

| Week 4: Link building and promotion | Medium | Marketing/SEO | Recurring | 1.5 hrs/month | Activities logged | |

| Quarterly: Deep-dive technical audit | High | Technical SEO | Recurring | 4 hrs/quarter | Audit report filed | |

| Escalate urgent issues immediately | High | Project Manager | Recurring | – | Issues tracked and resolved |
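Several of the Step 5.3 checks reduce to simple rules: titles of at most 60 characters, meta descriptions of at most 160 characters, and exactly one H1 per page. The sketch below shows one possible way to automate those three checks with Python's standard library. The names `OnPageParser` and `audit_page` are illustrative assumptions, not part of any SEO tool, and a real audit would normally run through a crawler rather than on a single HTML string.

```python
from html.parser import HTMLParser

# Limits taken from the Step 5.3 rows above.
MAX_TITLE_CHARS = 60
MAX_DESCRIPTION_CHARS = 160


class OnPageParser(HTMLParser):
    """Collects the title text, meta description, and H1 count of one page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = None  # stays None when no meta description exists
        self.h1_count = 0
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "h1":
            self.h1_count += 1
        elif tag == "meta" and (attr_map.get("name") or "").lower() == "description":
            self.description = attr_map.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_page(html):
    """Return a list of human-readable issues for one HTML document."""
    parser = OnPageParser()
    parser.feed(html)
    title = parser.title.strip()
    issues = []
    if not title:
        issues.append("missing title")
    elif len(title) > MAX_TITLE_CHARS:
        issues.append(f"title is {len(title)} chars (limit {MAX_TITLE_CHARS})")
    if parser.description is None:
        issues.append("missing meta description")
    elif len(parser.description) > MAX_DESCRIPTION_CHARS:
        issues.append(
            f"meta description is {len(parser.description)} chars "
            f"(limit {MAX_DESCRIPTION_CHARS})"
        )
    if parser.h1_count != 1:
        issues.append(f"expected exactly one H1, found {parser.h1_count}")
    return issues


# Hypothetical page used only to demonstrate the call.
SAMPLE_PAGE = (
    "<html><head><title>Short title</title>"
    '<meta name="description" content="A short description."></head>'
    "<body><h1>Main heading</h1><h2>Section</h2></body></html>"
)
print(audit_page(SAMPLE_PAGE))
```

An empty list means the page passes all three checks. Uniqueness of titles and descriptions across the site is not covered here, since that needs a comparison over every crawled page.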

| Step | Action Item | Priority | Dependency | Frequency | Effort Estimate | Verification Criteria | Status | Ownership |

| Step 6.1: Test Your AI Visibility | List 10–20 target questions with exact phrasing | High | SEO Analyst | Recurring | 0.5 hr/month | Question bank updated | Open | None |

| | Test each question in ChatGPT, Claude, Perplexity, Gemini | High | SEO Analyst | Recurring | 1.5 hrs/month | Results recorded for all platforms | Open | None |

| | Record citation type/position/competitors per test | High | SEO Analyst | Recurring | 0.5 hr/month | Tracker columns filled completely | Open | None |

| | Capture screenshots of positive citations | Medium | SEO Analyst | Recurring | 0.25 hr/month | Screenshots stored with filenames in tracker | Open | None |

| | Analyze trends MoM for visibility | High | SEO Analyst | Recurring | 0.5 hr/month | MoM change annotated | Open | None |

| Step 6.2: Monitor Traditional Traffic Metrics | Build GA4 dashboard for channels/landing pages/conversions | High | Analytics | One-time | 2 hrs | Dashboard link shared with team | Open | None |

| | Set alerts for ±20% traffic changes | High | Analytics | One-time | 0.5 hr | Alerts firing on thresholds | Open | None |

| | Segment by device and referrer | Medium | Analytics | Recurring | 0.25 hr/month | Segments saved | Open | None |

| | Report monthly on traffic and conversions | High | Analytics | Recurring | 0.75 hr/month | Report distributed | Open | None |

| | Compare quarter-over-quarter trends | Medium | Analytics | Recurring | 0.5 hr/quarter | QoQ table appended | Open | None |

| Step 6.3: Conduct Monthly Performance Reviews | Schedule 2–3 hr monthly review meeting | High | Project Manager | Recurring | 0.25 hr/month | Calendar invite sent | Open | None |

| | Compile KPIs (AI visibility, traffic, content, reviews, links) | High | Project Manager | Recurring | 0.75 hr/month | KPI pack ready | Open | None |

| | Identify top wins and losses | High | Project Manager | Recurring | 0.5 hr/month | Wins/losses section filled | Open | None |

| | Set 3–5 priority actions for next month | High | Project Manager | Recurring | 0.5 hr/month | Action list approved | Open | None |

| | Archive monthly report for longitudinal analysis | Medium | Project Manager | Recurring | 0.25 hr/month | Report stored in shared drive | Open | None |

| Step 6.4: Identify Content Gaps | Audit competitor appearances in AI answers | High | SEO Analyst | Recurring | 0.5 hr/month | Competitor visibility notes added | Open | None |

| | List 15–20 new questions not covered well | High | Content Strategist | Recurring | 1 hr/month | Gap list updated | Open | None |

| | Rate impact and difficulty for each new question | Medium | Content Strategist | Recurring | 0.5 hr/month | Scores present | Open | None |

| | Tier into Quick Wins, Strategic, Later | High | Content Strategist | Recurring | 0.25 hr/month | Tiering complete | Open | None |

| | Create briefs for top 3 Tier 1 topics | High | Content Strategist | Recurring | 1 hr/month | Briefs approved | Open | None |

| | Add internal link targets for planned content | Medium | SEO Analyst | Recurring | 0.25 hr/month | Link plan appended | Open | None |

| Step 6.5: Competitive AEO Intelligence | Select 3–4 main competitors for monitoring | High | SEO Lead | Recurring | 0.25 hr/month | Competitor list confirmed | Open | None |

| | Run head-to-head AI tests for shared questions | High | SEO Analyst | Recurring | 1 hr/month | Results logged per competitor | Open | None |

| | Analyze competitor content structure and authority signals | High | SEO Analyst | Recurring | 0.75 hr/month | Findings summarized | Open | None |

| | Build comparison matrix (Me vs competitors) | Medium | SEO Analyst | Quarterly | 1 hr/quarter | Matrix published | Open | None |

| | List 5–10 tactics to adopt and weaknesses to exploit | High | SEO Lead | Quarterly | 1 hr/quarter | Action list agreed | Open | None |

| | Draft 90-day competitive action plan | High | Project Manager | Quarterly | 1.5 hrs/quarter | Plan approved | Open | None |
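Step 6.1 asks for each test result to be recorded and for visibility trends to be analyzed month over month. As a rough illustration, the tracker can be reduced to a citation rate per month plus the change from the previous month. The field names (`month`, `platform`, `question`, `cited`) and the sample rows below are assumptions made for this sketch and do not come from a real tracker.

```python
from collections import defaultdict


def citation_rate_by_month(results):
    """Map each month to the share of tests in which the site was cited."""
    cited = defaultdict(int)
    total = defaultdict(int)
    for row in results:
        total[row["month"]] += 1
        if row["cited"]:
            cited[row["month"]] += 1
    # Months are "YYYY-MM" strings, so a plain sort is chronological.
    return {month: cited[month] / total[month] for month in sorted(total)}


def month_over_month(rates):
    """Return (month, rate, change in percentage points vs. previous month)."""
    rows = []
    previous = None
    for month, rate in rates.items():
        change = None if previous is None else round((rate - previous) * 100, 1)
        rows.append((month, rate, change))
        previous = rate
    return rows


# Hypothetical tracker rows: one entry per question per platform per month.
SAMPLE_RESULTS = [
    {"month": "2025-01", "platform": "ChatGPT", "question": "q1", "cited": True},
    {"month": "2025-01", "platform": "Claude", "question": "q1", "cited": False},
    {"month": "2025-01", "platform": "Perplexity", "question": "q1", "cited": False},
    {"month": "2025-01", "platform": "Gemini", "question": "q1", "cited": False},
    {"month": "2025-02", "platform": "ChatGPT", "question": "q1", "cited": True},
    {"month": "2025-02", "platform": "Claude", "question": "q1", "cited": False},
    {"month": "2025-02", "platform": "Perplexity", "question": "q1", "cited": True},
    {"month": "2025-02", "platform": "Gemini", "question": "q1", "cited": False},
]

for month, rate, change in month_over_month(citation_rate_by_month(SAMPLE_RESULTS)):
    print(month, f"{rate:.0%}", change)
```

The change is reported in percentage points rather than as a relative percentage, so a move from 25% to 50% reads as +25.0. Either convention works as long as the monthly report uses the same one every time.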

Website Audit Checklist

| Sheet | Total checks | Done | Open | Critical open | High open | Completion % | Owner notes |

| Technical SEO | 35 | 0 | 35 | 3 | 12 | 0.00% | |

| Content & AEO | 23 | 0 | 23 | 1 | 7 | 0.00% | |

| GEO & LLM | 10 | 0 | 10 | 0 | 4 | 0.00% | |

| Analytics & Reporting | 7 | 0 | 7 | 2 | 3 | 0.00% | |

| Overall | 75 | 0 | 75 | 6 | 26 | 0.00% | |

Notes:

- Update Status columns in each sheet.

- Use filters to focus on Critical/High items.

- Export open items into your sprint backlog.
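The summary columns above (Total checks, Done, Open, Critical open, High open, Completion %) can be recomputed from the individual check rows instead of being maintained by hand. The following is a minimal sketch under stated assumptions: each check is a dictionary with `id`, `priority`, and `status` keys, and the priorities and statuses in the sample are invented for illustration, since the sheets shown here do not list them.

```python
from collections import Counter


def summarize(checks):
    """Roll a list of checks up into the summary columns of one sheet."""
    total = len(checks)
    done = sum(1 for check in checks if check["status"] == "Done")
    open_checks = [check for check in checks if check["status"] != "Done"]
    open_by_priority = Counter(check["priority"] for check in open_checks)
    return {
        "total": total,
        "done": done,
        "open": len(open_checks),
        "critical_open": open_by_priority["Critical"],
        "high_open": open_by_priority["High"],
        "completion_pct": round(100 * done / total, 2) if total else 0.0,
    }


def sprint_backlog(checks):
    """Return IDs of open Critical/High checks, Critical first."""
    order = {"Critical": 0, "High": 1}
    urgent = [
        check for check in checks
        if check["status"] != "Done" and check["priority"] in order
    ]
    return [check["id"] for check in sorted(urgent, key=lambda c: order[c["priority"]])]


# Hypothetical statuses and priorities for four checks.
SAMPLE_CHECKS = [
    {"id": "T001", "priority": "Critical", "status": "Done"},
    {"id": "T002", "priority": "High", "status": "Open"},
    {"id": "T003", "priority": "Critical", "status": "Open"},
    {"id": "T004", "priority": "Medium", "status": "Open"},
]

print(summarize(SAMPLE_CHECKS))
print(sprint_backlog(SAMPLE_CHECKS))
```

Running `summarize` once per sheet and once on the combined list reproduces the per-sheet rows and the Overall row, while `sprint_backlog` corresponds to the export step in the notes.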

| Category | Check ID | Check item | Why it matters | How to test | Tooling |

| Crawl & Index | T001 | Robots.txt accessible and not blocking critical sections | Blocking key paths prevents indexing and AI retrieval. | Open /robots.txt; verify Allow/Disallow for key directories and templates. | Browser, robots tester |

| Crawl & Index | T002 | XML sitemap(s) present, valid, and referenced in robots.txt | Improves discovery and ensures canonical URLs are crawled. | Validate sitemap.xml; check status 200, correct URLs; submit to GSC/Bing. | Screaming Frog, GSC |

| Crawl & Index | T003 | Key AI crawlers allowed in robots.txt (GPTBot, ChatGPT-User, PerplexityBot, Google-Extended, ClaudeBot, CCBot) | AI visibility requires crawlability; many hosts block by default. | Check robots.txt user-agent rules; verify not disallowed. | Robots.txt, logs |

| Crawl & Index | T004 | Noindex tags absent on production pages that should rank | Accidental noindex can wipe organic traffic. | Crawl key templates; inspect meta robots and X-Robots-Tag headers. | Screaming Frog |

| Indexing | T005 | Canonical tags present and self-referential on key pages | Prevents duplicate URL versions competing; guides consolidation. | Check canonical points to preferred URL; validate on paginated/filtered pages. | Screaming Frog |

| Indexing | T006 | Correct HTTP status codes (200/301/404) across site | Broken status codes harm crawl efficiency and UX. | Full crawl; resolve 4xx/5xx spikes; ensure proper redirects. | Screaming Frog, logs |

| Indexing | T007 | Redirect chains eliminated (≤ 1 hop) | Chains waste crawl budget and slow users. | Find redirect chains; update links and rules. | Screaming Frog |

| Performance | T008 | Core Web Vitals within targets (LCP, CLS, INP) | Performance impacts rankings and trust; finance sites should be strict. | Check CrUX/Lighthouse; test key templates on mobile. | PageSpeed, Lighthouse |

| Performance | T009 | Image optimization: next-gen formats, responsive sizes, lazy-load below fold | Reduces LCP/INP and bandwidth. | Audit image sizes and formats; ensure srcset and compression. | Lighthouse, DevTools |

| Rendering | T010 | Critical content server-rendered or reliably indexable with JS | If content is only client-rendered, crawlers may miss it. | Compare the raw HTML source against the rendered DOM; use URL Inspection. | GSC, DevTools |

| International | T011 | Hreflang implemented correctly for all languages/markets | Prevents wrong-language ranking and improves international targeting. | Validate hreflang annotations, return tags, canonicals and sitemap hreflang. | Hreflang tools, GSC |

| International | T012 | Locale-specific domains/subdirectories consistently mapped and tracked | Avoids attribution issues and trust disconnect across markets. | Review architecture, redirects, canonicals, and analytics filters. | GA4, GSC |

| Structured Data | T013 | Schema markup validates (no errors) on core templates | Enables rich results and answer extraction. | Run schema validation for pages; fix missing required fields. | Rich Results Test, Schema Markup Validator |

| Structured Data | T014 | Organization schema includes sameAs links to official profiles | Reinforces entity recognition for AI systems. | Check Organization JSON-LD; add sameAs URLs. | Schema validator |

| Structured Data | T015 | FAQPage schema for FAQ modules; answers concise | Targets People Also Ask (PAA) boxes, featured snippets, and AI extraction. | Validate FAQPage JSON-LD; ensure Q/A match on-page content. | Schema validator |

| Security | T016 | HTTPS enforced, HSTS enabled, no mixed content | Trust and security are baseline ranking/UX requirements. | Check http→https redirect; run mixed content scan. | SecurityHeaders, DevTools |

| Security | T017 | Cookie consent and privacy policy accessible and compliant | Legal compliance; also a trust signal. | Check footer links; test consent banner behavior. | Manual |

| UX | T018 | Mobile friendliness and responsive layout across breakpoints | Mobile-first indexing and conversion. | Test key templates on mobile devices; fix viewport and tap targets. | Lighthouse, manual |

| Logs | T019 | Server logs available for crawl diagnostics | Needed to validate bot access and crawl patterns. | Ensure log retention, bot identification, and access process. | Server logs |

| Crawl & Index | T020 | Pagination handled (rel=next/prev not required but canonical strategy consistent) | Avoids duplicate indexing and thin pages. | Review paginated series; ensure unique titles and canonicals. | Manual, Screaming Frog |

| Crawl & Index | T021 | Faceted navigation controlled (parameter handling, noindex/canonicals) | Prevents crawl traps and index bloat. | Audit parameter URLs; set rules in GSC/Bing; add canonicals/noindex where needed. | GSC, Screaming Frog |

| Indexing | T022 | 404 page returns 404 status and offers helpful navigation | UX + correct indexing signals. | Test 404 responses; ensure not returning 200. | Browser |

| Indexing | T023 | Soft 404s eliminated | Soft 404s reduce quality signals. | Find ‘soft 404’ in GSC; fix templates. | GSC |

| Performance | T024 | Font loading optimized (critical fonts preloaded, font-display: swap) | Improves LCP and layout stability. | Audit font requests; set font-display. | DevTools |

| Performance | T025 | Third-party scripts audited and minimized | Reduces INP and CLS. | Audit tag manager and vendors. | Lighthouse |

| Rendering | T026 | Structured data rendered in initial HTML (not injected late) | Ensures parsers detect schema. | View-source for JSON-LD; ensure present. | View-source |

| Security | T027 | Security headers set (CSP, X-Frame-Options, etc.) | Hardens site; trust signal. | Run security header scan; apply best practices. | SecurityHeaders |

| Technical | T028 | Sitemap includes lastmod and only canonical 200 URLs | Avoids wasting crawl budget. | Validate sitemap entries vs crawl. | Screaming Frog |

| Technical | T029 | Broken internal links fixed | Improves crawl paths and UX. | Crawl for 4xx internal links. | Screaming Frog |

| Technical | T030 | Internal redirecting links updated to final URLs | Avoids wasted crawl and slow UX. | Crawl for 3xx internal links; update. | Screaming Frog |