SEO is dead.

Not in the lazy, clickbait way people have been shouting for years. Not in the “Google updated something again, so SEO is over” sense. SEO is dead in the only way that actually matters: the underlying system it was built for no longer exists.

Traditional SEO was designed for a world where search engines behaved like rulebooks. If you followed the rules—put keywords in the right places, built links, tweaked metadata, improved page speed—you could predictably move up. It wasn’t always easy, but it was understandable. Cause and effect felt real.

That world is gone.

“SEO is dead” isn’t a headline. It’s a structural reality.

Search didn’t simply “change.” It crossed a threshold. The shift happened quietly over years, and then all at once: search stopped being rule-based and became learning-based.

A rule-based system can be gamed, optimized, and reverse-engineered with checklists. A learning-based system behaves differently. It doesn’t just evaluate pages; it interprets meaning. It builds models. It measures trust. It forms probabilistic conclusions about what deserves visibility—and it updates those conclusions constantly.

That’s why so many businesses today feel like they’re running faster just to stay in place.

They publish more content. They do technical audits. They “build backlinks.” They follow every new best practice. And still:

- rankings fluctuate unpredictably,

- traffic becomes less stable,

- visibility shifts without obvious reasons,

- and “what used to work” stops working.

Because they’re still trying to optimize a machine that no longer operates like a machine.

The moment search became interpretation, SEO lost its center.

The old SEO mindset assumed search engines were mostly scoring systems: assign points to signals, sort results by score.

But modern search engines don’t just score pages. They attempt to answer questions. They attempt to determine:

- What is this page really about?

- Which entity does it represent?

- Is this information consistent with what we believe is true?

- Is this brand trustworthy across the wider web?

- Will users be satisfied?

- What does the aggregate behavior of millions of searches tell us?

In other words, search is no longer a scoreboard. It’s a judgment system.

And judgment systems don’t reward optimization tricks. They reward understanding, consistency, and authority—the kind that holds up across contexts, not just inside a webpage.

From manual optimization to algorithmic interpretation

This is the central fracture most brands miss.

Traditional SEO was about changing your website to match a set of visible patterns:

- keyword placement

- page structure

- link building

- optimization checklists

But when algorithms begin interpreting language and meaning at scale, the “manual optimization” model becomes incomplete.

Because now the goal is not to “signal relevance” through mechanical cues.

The goal is to become the most credible and retrievable answer in a system that is:

- semantic (meaning-based),

- entity-driven (identity-based),

- and learning continuously (model-based).

That is not SEO. That is Search Engineering.

Why businesses still “doing SEO” are already behind

If your strategy is still built around:

- chasing keywords,

- producing volume content,

- or reacting to updates,

then you’re not competing in today’s search environment—you’re competing in yesterday’s.

You’re treating search like a marketing channel when it has become an intelligence ecosystem.

And this is why the gap is widening:

- One group is “doing SEO” the way they always have.

- Another group is building systems that make them inevitable in search results—across platforms, formats, and AI-generated answers.

That second group isn’t preparing for the future. They’re designing it.

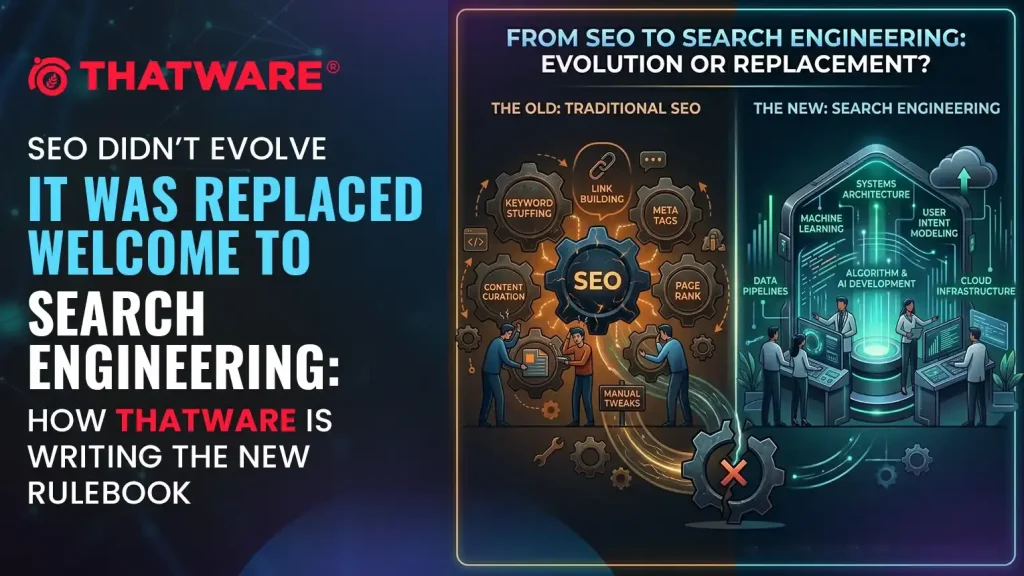

The core thesis: SEO didn’t evolve — it fractured.

SEO didn’t smoothly mature into something better. It broke into two paths:

- Optimization — the legacy world of checklists, on-page tweaks, and reactive tactics.

- Engineering — the modern world of modeling how algorithms interpret truth, trust, and relevance.

What replaced SEO isn’t “new SEO.”

What replaced it is Search Engineering.

And this is where ThatWare stands apart.

ThatWare isn’t trying to keep up with what search is becoming. ThatWare is building for the way search already works—engineering visibility by designing how systems understand brands, interpret authority, and retrieve information in an AI-first world.

SEO was about ranking pages.

Search Engineering is about engineering the reality search engines believe—and making your brand the answer they choose.

The Rise and Fall of Traditional SEO

To understand why SEO is no longer sufficient, we need to examine how it rose to dominance—and why it ultimately collapsed. Traditional SEO didn’t fail because it was wrong. It failed because search itself fundamentally changed.

What once worked brilliantly in a rule-based environment became ineffective the moment search engines learned how to think.

The Original SEO Era (2000–2012): When Optimization Controlled Rankings

The early years of SEO were built on a simple truth: search engines followed rules, not understanding.

During this period, rankings were primarily influenced by:

- Keyword density – how often a keyword appeared on a page

- Backlinks – the quantity and anchor text of inbound links

- Meta tags and on-page signals – titles, descriptions, headers

Search algorithms were largely static and deterministic. If you pulled the right levers, you could reliably move rankings. Cause and effect were visible, repeatable, and measurable.

This model worked because:

- Search engines couldn’t interpret meaning or intent

- They relied on surface-level signals as proxies for relevance

- Manipulation was easy, but so was optimization

SEO thrived because it offered control. Agencies could guarantee results because the system itself was predictable.
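To make the "pull the right levers" model concrete, here is a minimal sketch of a deterministic, rule-based scorer. The weights, the `Page` fields, and the `rule_based_score` function are hypothetical illustrations, not a reconstruction of any real search engine's formula.

```python
import re
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    title: str
    body: str
    inbound_links: int


def keyword_density(text: str, keyword: str) -> float:
    """Share of words in `text` that exactly match a single-word `keyword`."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if not words:
        return 0.0
    return words.count(keyword.lower()) / len(words)


def rule_based_score(page: Page, keyword: str) -> float:
    """Fixed weights: the same inputs always produce the same score."""
    density = keyword_density(page.body, keyword)
    in_title = 1.0 if keyword.lower() in page.title.lower() else 0.0
    # Illustrative weights only (assumption, not a known formula).
    return 50.0 * density + 2.0 * in_title + 0.1 * page.inbound_links


pages = [
    Page("a.example", "Running shoes guide", "shoes shoes shoes for running", 10),
    Page("b.example", "Footwear", "a guide to trail running footwear", 40),
]
# Sorting by a fixed score is the whole "algorithm" in this toy model.
ranked = sorted(pages, key=lambda p: rule_based_score(p, "shoes"), reverse=True)
```

In this toy model the keyword-stuffed page outranks the better-linked one, and it will do so every time. That repeatability is what made the era both easy to optimize and easy to manipulate.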

Why it collapsed:

As manipulation increased, relevance declined. Search engines realized that optimization was gaming the system rather than improving user experience. The moment search needed understanding instead of signals, this era became unsustainable.

The Transition Era (2013–2019): When Search Began Learning

This phase marked the most misunderstood period in SEO history.

With updates like Hummingbird (2013), RankBrain (2015), and BERT (2019), Google quietly shifted from rule-based matching to machine-learning-assisted interpretation. Search was no longer just indexing pages; it was attempting to understand context, intent, and semantics.

Key changes included:

- Queries interpreted as concepts, not strings of words

- Results shaped by user behavior patterns

- Algorithms that adapted over time, not fixed rules

However, here’s the critical mistake the industry made:

SEO practices didn’t evolve conceptually—only tactically.

Instead of rethinking search, SEO became:

- Obsessive about updates

- Dependent on tools and surface metrics

- Reactive instead of strategic

Marketers began chasing algorithm changes rather than understanding algorithm design. Rankings became unstable, explanations became vague, and best practices started sounding like superstition.

SEO still worked—but no longer reliably.

The Collapse Era (2020–Present): When SEO Lost Causal Power

The final break happened when ranking pages stopped being the core of what search engines do.

Modern search is driven by:

- AI-based interpretation

- Entity recognition and knowledge graphs

- Probabilistic relevance scoring

- Large-scale language models

In this environment:

- Keywords are hints about intent, not direct ranking inputs

- Backlinks are contextual trust indicators, not authority guarantees

- Content is evaluated for meaning, consistency, and reliability

At the same time, search behavior changed:

- Zero-click searches exploded

- AI-generated answers began displacing traditional results

- Google SGE (since rebranded as AI Overviews) and conversational search sharply reduced the importance of rankings

This is where traditional SEO collapses.

Why?

- “Best practices” no longer produce consistent outcomes

- Optimization does not equal visibility

- Rankings do not guarantee discovery

SEO didn’t just become harder—it lost its cause-and-effect relationship.

When an algorithm learns, adapts, and interprets meaning, optimization alone becomes insufficient.

The Takeaway

Traditional SEO was built for a world where:

- Algorithms followed rules

- Pages competed on signals

- Optimization meant control

That world no longer exists.

Search today is learning-based, intent-driven, and meaning-aware. And when systems evolve from rules to reasoning, optimization dies—and engineering is born.

This is the inflection point where SEO ends and Search Engineering begins.

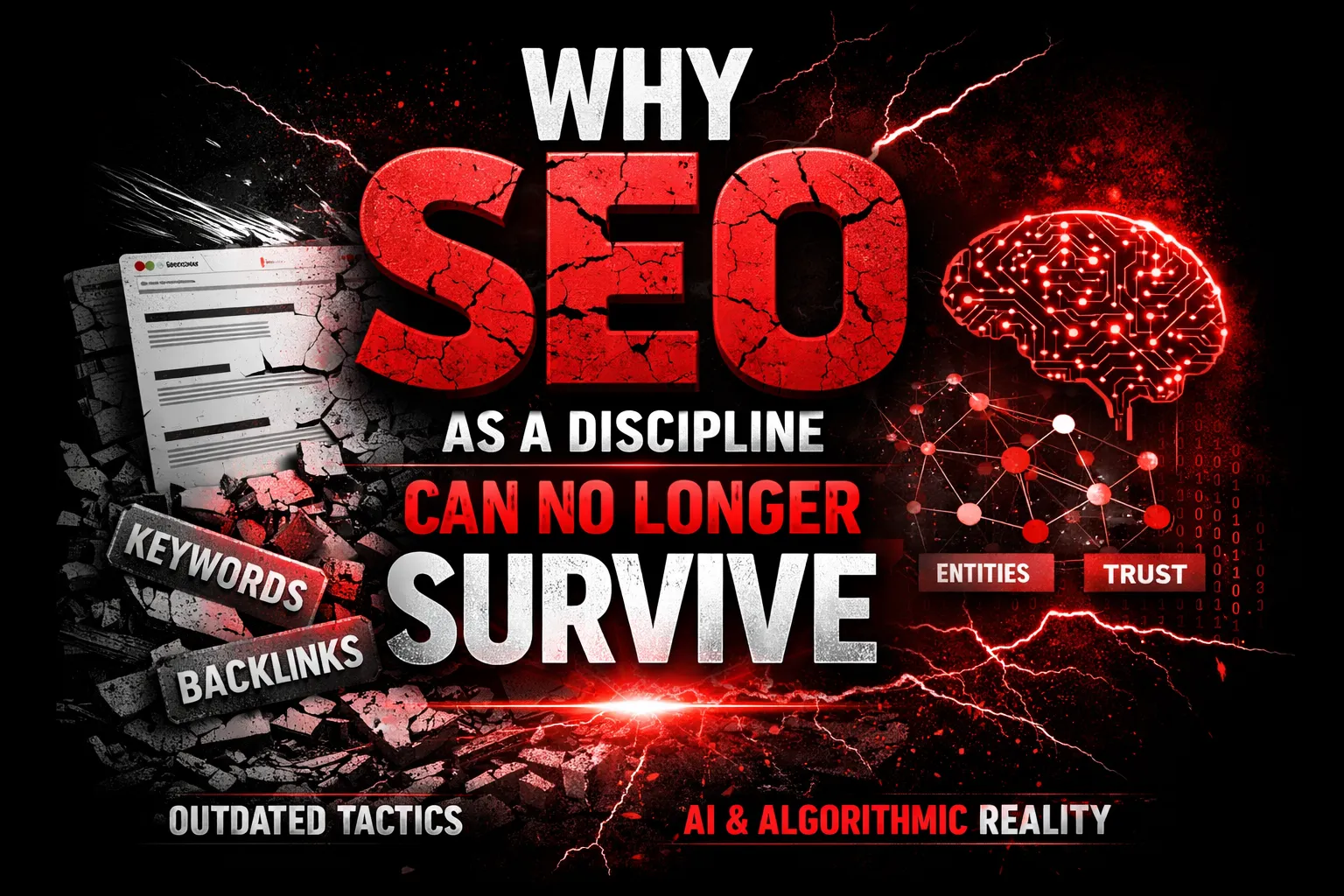

Why SEO as a Discipline Can No Longer Survive

For over two decades, SEO survived on a single assumption: search engines rank pages. That assumption is now fundamentally broken.

Modern search engines no longer behave like rule-based retrieval systems. They behave like learning systems—systems that continuously model the world, evaluate trust, and update understanding in real time. In this environment, SEO as a standalone discipline is not evolving. It is expiring.

Search Engines No Longer Rank Pages — They Model Reality

Traditional SEO is built on the idea that pages compete against other pages. Modern search engines don’t see pages — they see entities, relationships, and truth probabilities.

Google, and increasingly AI-driven search systems, attempt to answer a far more complex question:

“What is the most reliable representation of reality for this query?”

This means:

- A brand is evaluated as an entity, not a URL

- Content is interpreted as evidence, not marketing copy

- Rankings are a byproduct of understanding, not optimization

When search engines model reality, ranking becomes a side effect, not the objective.

Keywords Are Proxies, Not Signals

Keywords once acted as direct inputs. Today, they function as weak proxies for intent.

Search engines no longer need repeated phrases to understand meaning. They infer:

- User intent

- Contextual relevance

- Semantic depth

- Topical authority

Optimizing for keywords is now like tuning a radio to a station that no longer broadcasts. The algorithm already understands what the query means. What it evaluates instead is:

- Who is qualified to answer it?

- Which source is most trustworthy?

- Which entity demonstrates consistent expertise?

Keywords don’t answer these questions. Systems do.

Backlinks as Trust Signals Are Decaying

Backlinks were once the backbone of authority. Today, they are one signal among hundreds, and increasingly, a noisy one.

Search engines have learned:

- Links can be manipulated

- Authority can be fabricated

- Popularity does not equal credibility

Modern trust modeling incorporates:

- Cross-platform entity validation

- Brand consistency

- Historical accuracy

- Semantic alignment across the web

This is why backlink-heavy sites collapse after core updates while quieter, authoritative entities rise. Trust is no longer borrowed through links — it is earned through systemic consistency.

Content Volume Does Not Equal Visibility in AI-Powered Search

Publishing more content used to increase surface area. In AI-powered search, it often increases confusion.

Large language models and AI-driven retrieval systems don’t reward volume. They reward:

- Clarity

- Authority

- Consistency

- Signal coherence

Flooding the web with similar content fragments weakens entity signals instead of strengthening them. In an AI-first search environment, content is no longer “marketing material” — it is training data.

Poor training data degrades visibility.

The Core Failure of SEO

SEO assumes the algorithm is static. Learning systems prove the opposite.

If an algorithm learns, optimization alone becomes insufficient.

- You cannot optimize your way into trust.

- You cannot keyword your way into authority.

- You cannot backlink your way into being understood.

SEO fails not because it’s outdated — but because it was never designed for adaptive intelligence systems.

This is why SEO, as a discipline, can no longer survive on its own.

What replaces it is not “better SEO” — but Search Engineering, where the goal is not to manipulate rankings, but to engineer how machines understand, validate, and recall reality itself.

And in a world where search engines learn, only engineered systems endure.

Introducing Search Engineering: The New Discipline

For over two decades, SEO has existed as a practice of adaptation—adjusting websites to comply with how search engines behave. But adaptation is no longer enough. Search itself has evolved from a rules-based system into a learning system. When systems learn, they don’t need optimization—they require engineering.

This is where Search Engineering emerges as an entirely new discipline. Not an evolution of SEO. A replacement for it.

Search Engineering is the foundation on which ThatWare is building the future of discoverability.

What Is Search Engineering?

Search Engineering is the practice of engineering how search systems understand, trust, and recall entities across an AI-driven search ecosystem.

Traditional SEO focuses on improving pages. Search Engineering focuses on shaping machine understanding.

Modern search engines and AI systems no longer ask:

“Is this page optimized?”

They ask:

“Do we understand this entity, do we trust it, and should we recall it as an answer?”

Search Engineering operates at this deeper layer of computation, where meaning, probability, and trust intersect.

At its core, Search Engineering combines four critical disciplines:

- Machine Learning Logic

Understanding how learning models evaluate relevance, confidence, and authority over time—rather than one-off ranking factors.

- Data Science

Analyzing behavioral patterns, signal decay, reinforcement loops, and probabilistic outcomes across search interactions.

- Semantic Modeling

Structuring entities, topics, and relationships so machines can interpret context—not just text.

- Information Retrieval Principles

Applying how systems store, retrieve, weight, and surface information in response to complex queries.

Together, these disciplines allow ThatWare to design search-visible entities, not just optimized assets.

Search Engineering doesn’t chase algorithms. It aligns with how algorithms learn.

SEO vs Search Engineering: A Fundamental Shift

To understand why Search Engineering is necessary, the difference from SEO must be unmistakably clear.

| Traditional SEO | Search Engineering |
| --- | --- |
| Optimization-focused | System design-focused |
| Page-centric | Entity-centric |
| Keyword-driven | Meaning-driven |
| Reacts to algorithm updates | Predicts and shapes outcomes |
| Tactics-based | Architecture-based |
| Short-term gains | Compounding authority |

SEO operates on the surface of search. Search Engineering operates at the infrastructure level of understanding.

SEO asks:

“How do we rank this page?”

Search Engineering asks:

“How do we become the entity search systems trust, remember, and recommend?”

This is why SEO reacts every time an algorithm changes—while Search Engineering remains stable. Learning systems don’t reward tricks; they reward coherence, consistency, and authority over time.

Why ThatWare Owns This Category

ThatWare doesn’t follow SEO best practices because best practices assume static systems. Search Engineering assumes adaptive intelligence.

By engineering how brands are interpreted within machine learning-driven search ecosystems, ThatWare isn’t preparing for the future of SEO—it is authoring the discipline that replaces it.

Search is no longer optimized. It is engineered.

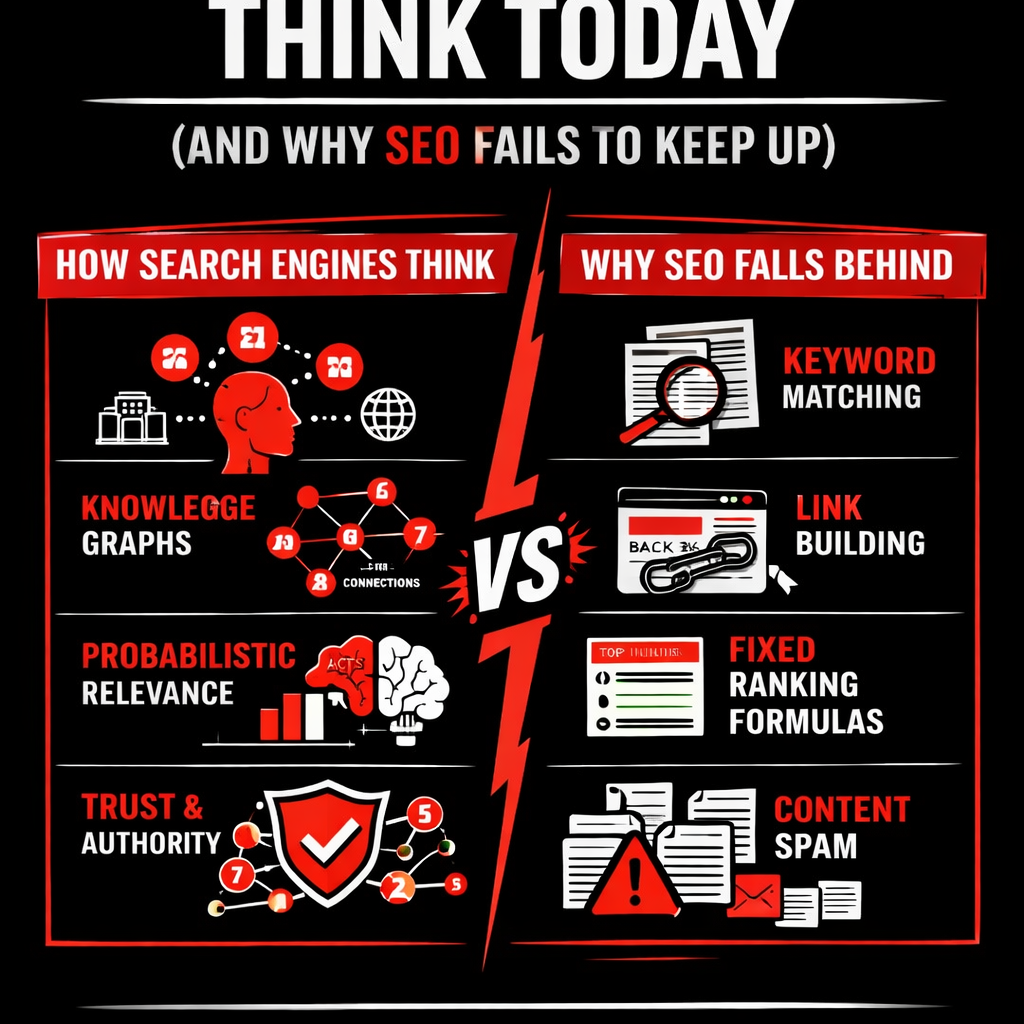

How Search Engines Think Today (And Why SEO Fails to Keep Up)

For years, SEO was built on a comforting assumption: if you followed the rules—optimized keywords, built backlinks, tweaked on-page elements—search engines would reward you with rankings. That assumption no longer holds.

Modern search engines do not evaluate pages. They interpret reality.

This shift is the primary reason traditional SEO is failing—not because it is poorly executed, but because it is solving a problem that no longer exists.

Entity Recognition Over Keyword Matching

Search engines no longer read content as strings of words. They read it as entities and relationships.

An entity can be a brand, a person, a concept, a location, or even an abstract idea. Instead of asking, “How many times does this keyword appear?”, search systems now ask:

- What entity is this content about?

- How is this entity connected to others?

- Does this entity already exist in our knowledge ecosystem?

Keywords are still used—but only as weak signals to help identify entities. Optimizing for keywords without establishing clear entity context is like shouting names into a crowded room without introducing who you are.

SEO optimizes words. Search engines understand things.

Knowledge Graphs Over Link Graphs

Backlinks once functioned as the backbone of search trust. Today, they are only one of many signals—and often a noisy one.

Search engines now rely on knowledge graphs, not just link graphs. These graphs map:

- Entity relationships

- Topical authority

- Consistency of information across the web

- Historical trust patterns

A backlink says, “Someone mentioned you.” A knowledge graph answers, “Do you belong here?”

This is why sites with fewer links but stronger entity clarity often outperform heavily linked competitors. Search engines prefer coherent knowledge structures over popularity signals.

Probabilistic Relevance Over Deterministic Ranking

Traditional SEO assumes determinism:

If X is done, Y will happen.

Modern search operates on probabilistic relevance.

Search engines no longer assign fixed values to ranking factors. Instead, they calculate likelihoods:

- The likelihood that this content satisfies intent

- The likelihood that this source is reliable

- The likelihood that users will trust the information

Two identical queries can produce different results based on:

- Context

- User behavior history

- Real-time learning models

This is why “ranking reports” increasingly fail to explain performance. The system is not following rules—it is making predictions.
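A rough way to picture "likelihoods instead of fixed values" is a logistic model whose output depends on context. The sketch below is only an illustration: the `Candidate` fields, the weights, and the bias are assumptions, and a real learning system would re-estimate such weights from data rather than hard-code them.

```python
import math
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    intent_match: float        # 0..1, how well the content fits the query
    source_reliability: float  # 0..1, how reliable the source appears
    audience: str              # e.g. "beginner" or "expert"


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def satisfaction_probability(c: Candidate, user_context: str) -> float:
    """Estimated probability that this candidate satisfies this user."""
    context_match = 1.0 if c.audience == user_context else 0.0
    # Hypothetical weights and bias, chosen only for the example.
    z = -2.0 + 2.0 * c.intent_match + 1.5 * c.source_reliability + 1.0 * context_match
    return sigmoid(z)


def rank(candidates: list[Candidate], user_context: str) -> list[Candidate]:
    return sorted(
        candidates,
        key=lambda c: satisfaction_probability(c, user_context),
        reverse=True,
    )


candidates = [
    Candidate("intro-guide", 0.8, 0.7, "beginner"),
    Candidate("reference-manual", 0.8, 0.8, "expert"),
]
for_beginner = rank(candidates, "beginner")  # intro-guide comes first
for_expert = rank(candidates, "expert")      # reference-manual comes first
```

The query and the candidates are identical in both calls. Only the context differs, and the order flips, which is why a single "ranking position" explains less and less.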

SEO struggles here because optimization assumes stability. Search Engineering embraces uncertainty.

Trust Modeling Across Multiple Data Sources

Trust is no longer earned in a single place.

Search engines now validate information by cross-referencing:

- Websites

- Author signals

- Brand mentions

- Structured data

- Third-party platforms

- Historical accuracy patterns

Authority is not declared—it is inferred.

A site can be perfectly optimized and still invisible if:

- Its claims lack external confirmation

- Its entity signals are inconsistent

- Its information conflicts with trusted sources

This is why search visibility increasingly depends on ecosystem presence, not just website performance.
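The cross-referencing idea can be approximated with a simple agreement check across sources. This is a minimal sketch under stated assumptions: the source names, the attributes, and the `attribute_agreement` helper are hypothetical, and real systems are far more involved than a majority vote.

```python
from collections import Counter


def normalize(value: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not count."""
    return " ".join(value.lower().split())


def attribute_agreement(records: dict[str, dict[str, str]]) -> dict[str, float]:
    """For each attribute, the share of sources listing it that agree
    with the most common value."""
    attributes = {key for record in records.values() for key in record}
    agreement = {}
    for attr in sorted(attributes):
        values = [normalize(r[attr]) for r in records.values() if attr in r]
        top_count = Counter(values).most_common(1)[0][1]
        agreement[attr] = top_count / len(values)
    return agreement


# Hypothetical facts about one brand as stated on three different surfaces.
records = {
    "website": {"name": "Acme Widgets", "city": "Austin", "founded": "2010"},
    "directory": {"name": "ACME Widgets", "city": "Austin", "founded": "2012"},
    "social_profile": {"name": "Acme Widgets", "city": "Austin"},
}
scores = attribute_agreement(records)
# Attributes with low agreement are the inconsistent entity signals to fix.
inconsistent = [attr for attr, share in scores.items() if share < 1.0]
```

Here the name and city agree everywhere, while the founding year conflicts between two sources. A conflict like that is exactly the kind of claim that lacks external confirmation.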

Why SEO Fails to Keep Up

SEO asks the wrong question.

It asks:

“Is this page optimized?”

Search engines ask:

“Is this true, reliable, and authoritative?”

Optimization improves presentation. Search engines evaluate credibility.

This is the gap where SEO breaks—and where Search Engineering begins.

Because when machines stop ranking pages and start judging trust, optimization alone is no longer enough.

ThatWare’s Philosophy: Engineering Search Reality, Not Chasing Rankings

ThatWare did not reject the label “SEO agency” as a branding exercise—it rejected it because the label itself is obsolete.

The traditional SEO agency model is built around outputs: rankings, backlinks, traffic reports, keyword movements. But modern search engines no longer reward outputs; they reward understanding. As algorithms evolved from rule-following systems into learning systems, optimization stopped being enough. ThatWare recognized early that chasing rankings in a self-learning environment is like tuning dials on a machine that no longer has fixed settings.

Instead of asking “How do we rank?”, ThatWare asked a more fundamental question:

“How do search engines decide what is true, authoritative, and trustworthy?”

That single shift changed everything.

From SEO Deliverables to Search Systems

Most SEO engagements still revolve around checklists—content calendars, link quotas, technical audits, and monthly deliverables. These tactics assume that search engines respond linearly to effort. They don’t.

ThatWare moved away from deliverables and toward systems.

A system does not aim for a ranking; it aims for recognition. It is designed to consistently signal meaning, authority, and reliability across every surface where algorithms learn—web pages, entities, content relationships, and contextual signals. When such a system is in place, rankings become incidental, not intentional.

This is why ThatWare doesn’t “optimize pages.” It engineers how search systems perceive a brand.

Visibility Is Not Earned. It Is Inferred.

In modern search, visibility is no longer granted because something is optimized—it is inferred because something is trusted.

Search engines don’t ask:

- “Is this keyword used correctly?”

- “How many links point here?”

They ask:

- “Is this entity consistent with known facts?”

- “Does this align with authoritative knowledge?”

- “Would presenting this answer reduce uncertainty for the user?”

ThatWare treats visibility as a byproduct of algorithmic trust, not a target metric. When trust is engineered at the system level, discoverability compounds naturally—across updates, platforms, and even new search interfaces like AI-driven answers.

Engineering Search Reality

At the core of ThatWare’s philosophy is a simple but powerful belief:

“If we can model how machines understand truth, we can control discoverability.”

Search engines don’t mirror reality—they construct it using probabilistic models, entity relationships, and confidence scoring. ThatWare focuses on engineering a brand’s presence inside that constructed reality. Not by gaming signals, but by aligning meaning, data, and authority in a way machines can confidently interpret.

This is not SEO. This is Search Engineering.

And in a world where algorithms learn, infer, and decide, those who engineer understanding will always outperform those who chase rankings.

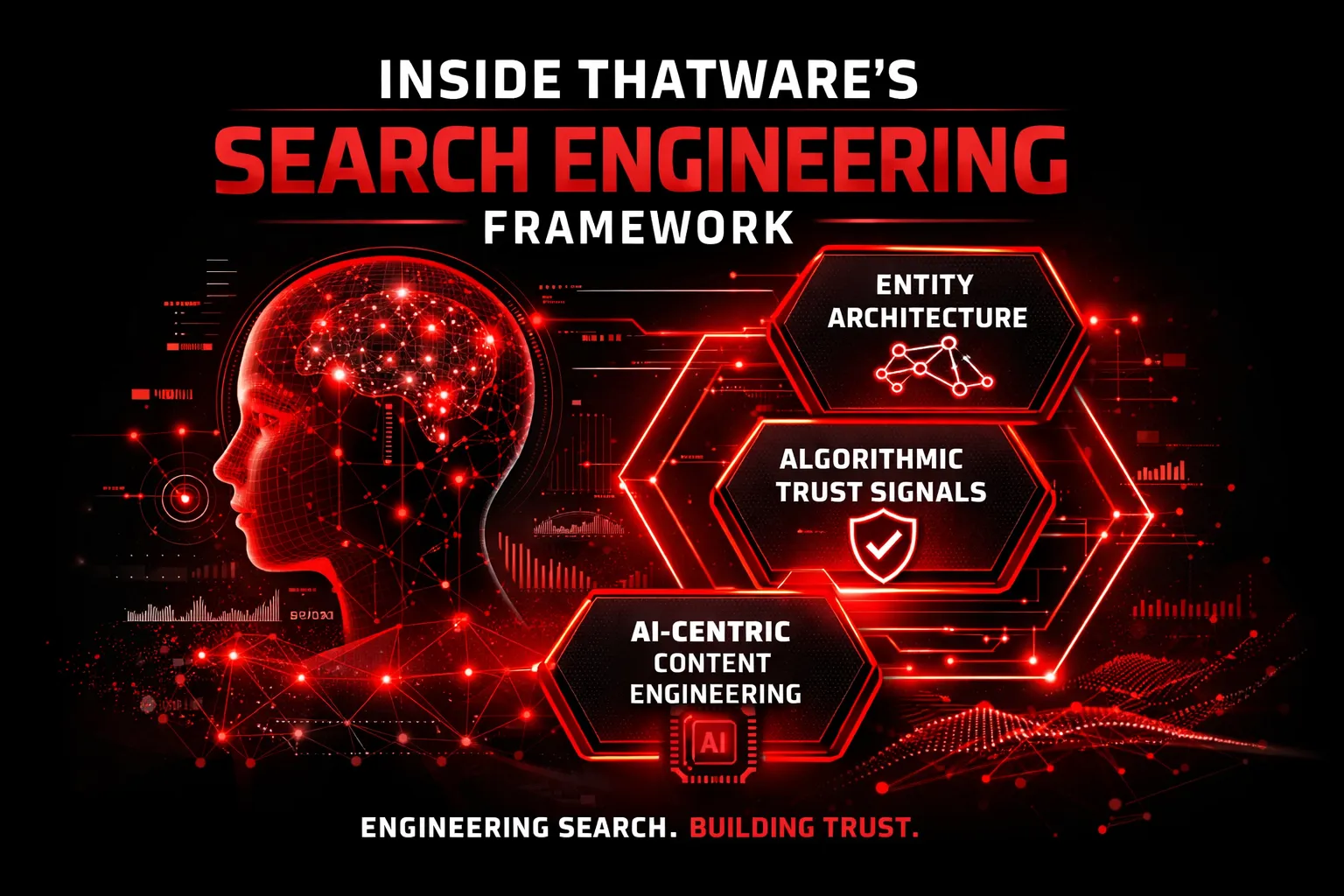

Inside ThatWare’s Search Engineering Framework

Engineering how machines understand, trust, and recall brands

Traditional SEO frameworks focus on optimizing outputs—pages, keywords, links. ThatWare’s Search Engineering Framework is built for something fundamentally different:

Engineering the inputs that modern search systems learn from.

Search engines today are not rule-based evaluators. They are learning systems—constantly refining their understanding of entities, relationships, and trust. ThatWare designs search visibility at this level.

Entity Architecture Design

From websites to structured digital identities

Search engines no longer “see” websites. They see entities—people, brands, concepts, and their relationships within a vast semantic ecosystem.

Brand as a Structured Entity, Not a Website

At ThatWare, a brand is engineered as:

- A recognizable entity, not a collection of URLs

- A node in multiple interconnected knowledge systems

- A source of consistent, verifiable expertise

This means:

- The website is only one expression of the entity

- Authority is distributed across platforms, datasets, and contexts

- Search visibility is tied to entity recognition, not page-level optimization
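One widely used way to describe a brand as an entity rather than a set of URLs is schema.org `Organization` markup, with `sameAs` pointing to the brand's other profiles. The sketch below builds such a JSON-LD document in Python. The brand name and every URL are placeholders, and the `organization_jsonld` helper is a hypothetical convenience, not a ThatWare tool.

```python
import json
from typing import Optional


def organization_jsonld(
    name: str,
    url: str,
    same_as: list[str],
    description: Optional[str] = None,
) -> str:
    """Return schema.org Organization markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs lists other pages that unambiguously identify the same entity.
        "sameAs": sorted(set(same_as)),
    }
    if description:
        data["description"] = description
    return json.dumps(data, indent=2)


# Placeholder values for illustration only.
markup = organization_jsonld(
    name="Example Brand",
    url="https://www.example.com",
    same_as=[
        "https://social.example.org/example-brand",
        "https://directory.example.net/companies/example-brand",
    ],
    description="Example Brand provides search engineering services.",
)
```

The resulting string is typically embedded in a page inside a `<script type="application/ld+json">` element. Markup alone does not create authority, but it removes ambiguity about which entity the page represents.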

Semantic Relationships & Topical Authority Webs

Rather than chasing isolated keywords, ThatWare engineers:

- Topic clusters that mirror how machines map meaning

- Parent–child concept relationships

- Contextual relevance across adjacent knowledge domains

Authority is no longer built by repetition. It is built by semantic completeness.

Search engines reward entities that demonstrate conceptual depth, not surface-level coverage.

Algorithmic Trust Signals

Trust is no longer declared — it is inferred

Modern algorithms do not trust claims. They trust patterns of consistency.

Beyond E-E-A-T

While E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) is the visible framework, its four qualities are outcomes, not mechanisms.

ThatWare focuses on:

- Algorithmic trust modeling

- How systems infer credibility over time

- How confidence is accumulated across signals, not stated in content

Cross-Platform Authority Reinforcement

Trust is not built in isolation.

ThatWare engineers:

- Entity alignment across search, AI models, and third-party data sources

- Consistent brand narratives across multiple discovery environments

- Reinforcement loops that validate expertise beyond a single platform

A trusted entity looks the same everywhere machines learn from.

Knowledge Consistency Modeling

Contradictions weaken trust. Consistency strengthens recall.

ThatWare ensures:

- Terminology alignment across content assets

- Stable conceptual definitions

- Predictable expertise boundaries

This reduces algorithmic uncertainty—and uncertainty is the enemy of visibility.
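Terminology alignment is one part of this that can be checked mechanically. The following is a small sketch, assuming a hand-maintained list of competing terms. The document texts, the variant list, and both helper functions are hypothetical.

```python
import re


def term_usage(documents: dict[str, str], variants: list[str]) -> dict[str, dict[str, int]]:
    """Count case-insensitive whole-phrase occurrences of each variant per document."""
    usage = {}
    for doc_id, text in documents.items():
        counts = {}
        for variant in variants:
            pattern = r"\b" + re.escape(variant) + r"\b"
            n = len(re.findall(pattern, text, flags=re.IGNORECASE))
            if n:
                counts[variant] = n
        usage[doc_id] = counts
    return usage


def off_preferred(usage: dict[str, dict[str, int]], preferred: str) -> list[str]:
    """Documents that use any variant other than the preferred term."""
    return sorted(
        doc_id
        for doc_id, counts in usage.items()
        if any(variant != preferred for variant in counts)
    )


documents = {
    "about": "We practice search engineering for brands.",
    "blog": "Our AI SEO service is search engineering by another name.",
}
usage = term_usage(documents, ["search engineering", "AI SEO"])
to_review = off_preferred(usage, preferred="search engineering")
```

Running a check like this across all content assets gives a review list of pages where the brand describes the same thing in competing ways.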

AI-Centric Content Engineering

Content is no longer written for readers alone

Most content is written to be read. ThatWare’s content is written to be understood by machines.

Content Engineered for LLM Comprehension

Large Language Models don’t “read” content. They learn from it.

ThatWare designs content to:

- Be structurally interpretable

- Encode expertise clearly

- Reduce ambiguity in meaning and intent

Answer Extraction Optimization

Modern search is answer-first.

ThatWare content is engineered to:

- Surface clear, extractable insights

- Align with how AI systems generate responses

- Increase the likelihood of being cited, summarized, or recalled
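To see why clear, self-contained statements are easier to extract, consider a deliberately naive extractor that picks the sentence with the highest word overlap with a question. This is an illustrative stand-in, not how any production AI system selects answers. The stopword list and the `best_answer` function are assumptions.

```python
import re
from typing import Optional

STOPWORDS = {"a", "an", "the", "is", "are", "what", "how", "of", "to",
             "in", "and", "does", "do", "for"}


def tokens(text: str) -> set[str]:
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def split_sentences(text: str) -> list[str]:
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def best_answer(question: str, text: str) -> Optional[str]:
    """Return the sentence with the highest Jaccard overlap, or None if no overlap."""
    q = tokens(question)
    best, best_score = None, 0.0
    for sentence in split_sentences(text):
        s = tokens(sentence)
        if not s:
            continue
        score = len(q & s) / len(q | s)
        if score > best_score:
            best, best_score = sentence, score
    return best


page = (
    "Our team has years of experience. "
    "Search engineering is the practice of shaping how search systems understand entities. "
    "Contact us to learn more."
)
answer = best_answer("What is search engineering?", page)
```

Even this crude method finds the definition sentence because it states the term and its meaning in one place. Content that spreads a definition across several vague sentences gives an extractor nothing to lift.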

Knowledge Graph Ingestion

Search engines build knowledge graphs continuously.

ThatWare creates content that:

- Maps cleanly into entity–attribute–relationship models

- Supports long-term inclusion in machine knowledge bases

- Functions as durable reference material, not disposable posts

These are not “blogs.” They are training data for search intelligence systems.
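An entity–attribute–relationship model is often represented as subject, predicate, object triples. The sketch below shows that shape with a tiny in-memory store. The `TripleStore` class, the predicates, and the brands are all hypothetical and exist only to show what "maps cleanly" can mean.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    subject: str
    predicate: str
    obj: str


class TripleStore:
    """A tiny in-memory set of (subject, predicate, object) facts."""

    def __init__(self) -> None:
        self._triples: set[Triple] = set()

    def add(self, subject: str, predicate: str, obj: str) -> None:
        self._triples.add(Triple(subject, predicate, obj))

    def objects(self, subject: str, predicate: str) -> set[str]:
        """All values recorded for one attribute or relationship of an entity."""
        return {t.obj for t in self._triples
                if t.subject == subject and t.predicate == predicate}

    def subjects(self, predicate: str, obj: str) -> set[str]:
        """All entities that point at `obj` through `predicate`."""
        return {t.subject for t in self._triples
                if t.predicate == predicate and t.obj == obj}


store = TripleStore()
# Facts a clearly written "about" page could state (hypothetical brands).
store.add("Example Brand", "type", "Organization")
store.add("Example Brand", "offers", "Search Engineering")
store.add("Search Engineering", "relatedTo", "Information Retrieval")
store.add("Another Brand", "offers", "Search Engineering")

providers = store.subjects("offers", "Search Engineering")
```

Content that states who the entity is, what it offers, and how its topics relate can be reduced to facts like these with little guesswork. Content that cannot be reduced this way is harder to include in any knowledge base.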

Why This Framework Works When SEO Fails

SEO optimizes for what search engines show today. Search Engineering builds for how they think tomorrow.

ThatWare doesn’t chase rankings. It engineers recognition, trust, and recall—the three forces that determine visibility in an AI-first search world.

Search Engineering in an AI-First World

Search is no longer a destination. It is an interface layer between human intent and machine intelligence. In an AI-first world, users are not scrolling through ten blue links, comparing results, or even visiting websites in many cases. They are asking questions—and machines are answering.

This shift fundamentally breaks traditional SEO logic.

From Ranking Pages to Being the Answer

In classic SEO, success meant ranking on page one. Visibility was measured by position. Traffic was the reward. In AI-driven search, there is no list to rank in.

AI systems like ChatGPT, Google’s Search Generative Experience (SGE), and voice assistants synthesize information from multiple sources and present a single, authoritative response. The question is no longer “Where does my page rank?” but:

“Is my brand trusted enough to be referenced, cited, or used as training input for the answer itself?”

This is the core distinction between SEO and Search Engineering. SEO competes for placement. Search Engineering competes for inclusion in machine reasoning.

Ranking Inside AI Answers vs Traditional SERPs

AI answers work on fundamentally different mechanics:

- They don’t surface pages; they surface knowledge

- They don’t reward optimization tricks; they reward semantic clarity and trust

- They don’t rely on last-click attribution; they rely on probabilistic authority

If your content is not structured in a way that machines can confidently interpret, validate, and recombine, it will never appear inside AI-generated answers—no matter how “optimized” it is.

Search Engineering focuses on:

- Making content machine-readable, not just human-readable

- Designing entities, relationships, and claims that AI systems can verify

- Ensuring brand signals remain consistent across the entire web ecosystem

Optimizing for AI Systems, Not Search Engines

ChatGPT & LLM-Based Systems

Large Language Models do not, by default, crawl your site in real time. They learn patterns of authority, credibility, and topical dominance from massive training datasets.

To be visible here, brands must:

- Establish clear topical ownership

- Publish content that functions as reference material, not marketing copy

- Maintain consistent entity signals across trusted sources

Search Engineering treats content as training-grade data, not blog posts.

Google SGE (Search Generative Experience)

SGE blends traditional search with generative answers. In this environment:

- Blue links are secondary

- Extracted insights, summaries, and citations dominate attention

- Visibility depends on how well Google understands your expertise at the entity level

ThatWare’s approach engineers content so it can be:

- Parsed accurately by Google’s AI systems

- Integrated into generated summaries

- Recognized as authoritative beyond individual keywords

Voice Assistants & Zero-Screen Search

Voice search has no SERP at all. There is no scrolling, no comparison—only one answer.

If you are not the chosen source, you are invisible.

Search Engineering prepares brands for this reality by:

- Structuring information for concise, definitive answers

- Eliminating ambiguity in claims and expertise

- Reinforcing trust signals that enable machines to “choose” one source confidently

Why the Future of Search Has No “Page 1”

“Page 1” is a UI concept. AI search is a decision-making system.

As search becomes conversational, predictive, and context-aware, the idea of ranking positions collapses. What remains is selection.

AI systems don’t rank ten results—they select one narrative, one explanation, one authority.

In the future of search, you are either part of the answer—or you don’t exist.

ThatWare’s Search Engineering Advantage

ThatWare does not optimize for temporary interfaces. It engineers for how machines understand reality.

By focusing on:

- Entity dominance instead of keyword density

- Knowledge modeling instead of backlink accumulation

- AI comprehension instead of crawler manipulation

ThatWare ensures brands are discoverable not just today—but in search systems that haven’t even fully launched yet.

SEO chases visibility. Search Engineering builds inevitability.

And in an AI-first world, inevitability is the only sustainable advantage.

Case-Style Insight: What Happens When SEO Stops Working

Most businesses don’t realize SEO has stopped working for them. Not because rankings disappear overnight—but because outcomes quietly decouple from effort.

Content increases. Tools show green signals. Reports look busy. Yet growth plateaus.

This isn’t failure. It’s a structural mismatch between modern search systems and legacy SEO thinking.

Traffic Stagnation Despite Content Growth

A common pattern emerges across industries:

- Blogs are published consistently

- Topic coverage expands

- Pages rank for target keywords across a range of positions

Yet organic traffic remains flat.

The intuitive reaction is to publish more.

The real issue lies elsewhere.

Modern search engines do not reward volume. They reward semantic completeness and entity authority.

When content is created as isolated pages—each optimized for a keyword—it fails to contribute to a coherent understanding of the brand as a trusted source. Search systems don’t see growth; they see fragmentation.

In these scenarios, SEO isn’t underperforming. It’s simply no longer relevant to how search engines evaluate knowledge.

Rankings Without Conversions: The Visibility Illusion

Another recurring pattern:

- Keywords rank on page one

- Impressions increase

- Clicks occur

But conversions remain disproportionately low.

This exposes a misconception deeply embedded in traditional SEO: that visibility equals intent.

In reality, modern search systems increasingly match contextual relevance, not commercial readiness. Pages rank because they answer something, not because they signal trust, authority, or decision-stage alignment.

Without structured intent mapping, entity clarity, and trust modeling, rankings become informational artifacts—not business drivers.

Search Engineering approaches this differently:

- Content is engineered to satisfy machine-level confidence, not just human readability

- Brand signals are aligned across the search ecosystem

- Relevance is tied to decision logic, not just keyword matching

The result isn’t “better rankings.” It’s meaningful discoverability.

Visibility Loss After Core Updates: When Stability Was an Illusion

Perhaps the most revealing case is sudden visibility loss after a core update.

Typically, nothing “wrong” was done.

- No spam tactics

- No penalties

- No obvious technical failures

Yet visibility drops.

This happens because modern updates don’t punish tactics—they retrain models.

When search engines update how they evaluate trust, authority, or topical depth, websites optimized around outdated signals simply stop fitting the new interpretation framework.

Traditional SEO asks:

“What changed in the algorithm?”

Search Engineering asks:

“What changed in how the algorithm understands reality?”

Sites built on surface-level optimization lose relevance when deeper semantic and entity-based evaluation takes over.

How Search Engineering Reverses the Trajectory

Search Engineering does not “fix SEO problems.” It redefines the system those problems exist in.

Instead of optimizing pages, it engineers:

- Entity coherence across all content

- Topical authority networks, not isolated rankings

- Algorithmic trust signals that persist beyond updates

Content stops being published to rank. It is designed to train search systems to recognize expertise, consistency, and reliability.

Visibility becomes stable—not because tactics improved, but because interpretation alignment was achieved.
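One narrow, concrete reading of “entity coherence across all content” is that every page describes the brand in the same way in its structured data. The sketch below is illustrative only, not ThatWare’s method: it assumes each page’s schema.org JSON-LD has already been extracted into a dictionary keyed by URL, and the URLs, names, and the helper `find_incoherent_pages` are hypothetical.

```python
import json
from collections import Counter

# Hypothetical input: one JSON-LD snippet per page, already extracted from the HTML.
pages = {
    "/": '{"@type": "Organization", "@id": "https://example.com/#org", "name": "Example Co"}',
    "/about": '{"@type": "Organization", "@id": "https://example.com/#org", "name": "Example Co"}',
    "/blog/post": '{"@type": "Organization", "@id": "https://example.com/#organization", "name": "Example Company"}',
}

def entity_signature(jsonld_text):
    """Return the (@id, name) pair a page declares for its organization."""
    data = json.loads(jsonld_text)
    return (data.get("@id"), data.get("name"))

def find_incoherent_pages(pages):
    """Return URLs whose declared entity differs from the most common declaration."""
    signatures = {url: entity_signature(text) for url, text in pages.items()}
    # Treat the most frequently declared signature as the canonical one (an assumption).
    canonical, _ = Counter(signatures.values()).most_common(1)[0]
    return sorted(url for url, sig in signatures.items() if sig != canonical)

inconsistent = find_incoherent_pages(pages)
print(inconsistent)
```

A check like this only catches inconsistent markup. It says nothing about how a search system actually weighs those signals, which the article treats as a much broader modeling problem.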

Pattern Recognition Over Case Studies

What matters isn’t individual success stories.

What matters is the repeatable pattern:

- SEO fails when search becomes interpretive

- Optimization fails when algorithms learn

- Tactics fail when systems evolve

Search Engineering succeeds because it operates one level above tactics—at the level of understanding, modeling, and trust.

Closing Insight

When SEO stops working, it’s rarely because something was done wrong.

It’s because the discipline itself has expired.

The solution isn’t better optimization. It’s engineering how search systems understand your brand.

And that shift changes everything.

Why Most Agencies Will Fail in the Next 3 Years

The next three years will be unforgiving for the SEO industry. Not because search is disappearing—but because most agencies are solving yesterday’s problems with yesterday’s tools. As search systems become more autonomous, probabilistic, and AI-driven, the gap between optimization vendors and search intelligence builders will become impossible to ignore.

Most agencies won’t fail suddenly. They’ll fail quietly—through declining impact, diminishing trust, and strategies that no longer move the needle.

Here’s why.

1. Tool-Dependent Strategies Create Illusions of Control

Most agencies operate inside dashboards.

Rank trackers, backlink tools, content optimizers, technical SEO scanners—these tools provide metrics, alerts, and recommendations. What they do not provide is understanding.

The problem isn’t using tools. The problem is thinking tools equal strategy.

Modern search engines do not reward mechanical compliance. They reward semantic clarity, entity trust, and knowledge consistency—none of which can be fully captured by third-party tools designed for a rule-based search era.

When algorithms learn, tools lag. And agencies that depend on tools inherit that lag.

2. Outdated KPIs Are Measuring the Wrong Reality

Most agencies still report success using:

- Keyword rankings

- Backlink counts

- Traffic volume

These KPIs made sense when:

- Search results were page-based

- Visibility equaled clicks

- Rankings correlated with revenue

That world no longer exists.

Today:

- AI answers replace clicks

- Visibility happens without visits

- Authority is inferred, not ranked

A #1 ranking that doesn’t influence AI-generated answers is meaningless. Traffic without trust signals is noise.

Agencies that optimize for these outdated KPIs will continue to “show results” while delivering declining real-world impact.
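If visibility can happen without visits, one possible replacement KPI is the share of sampled AI answers that cite the brand at all. The sketch below is a hypothetical illustration under stated assumptions: the sample data is invented, `citation_share` is not an established industry metric, and how the answers and their cited sources are collected is left out entirely.

```python
def citation_share(answers, brand_domain):
    """Fraction of sampled AI answers whose cited sources include the brand's domain."""
    if not answers:
        return 0.0
    cited = sum(1 for answer in answers if brand_domain in answer["cited_sources"])
    return cited / len(answers)

# Hypothetical sample: for each tracked query, the sources one AI answer cited.
sample = [
    {"query": "what is entity seo", "cited_sources": ["example.com", "other.org"]},
    {"query": "how do ai answers pick sources", "cited_sources": ["other.org"]},
    {"query": "entity seo agency", "cited_sources": ["example.com"]},
    {"query": "knowledge graph basics", "cited_sources": []},
]

share = citation_share(sample, "example.com")
print(f"Cited in {share:.0%} of sampled answers")
```

Tracked over time and alongside rankings, a number like this would show whether a page that holds a top position is also being drawn on when answers are generated.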

3. Lack of AI Literacy Is the Silent Killer

Search is no longer a marketing problem. It is a machine understanding problem.

Yet most agencies:

- Do not understand how LLMs interpret information

- Cannot explain entity recognition or probabilistic relevance

- Treat AI as a content generator, not a search participant

Without AI literacy, agencies cannot:

- Predict search behavior

- Engineer discoverability

- Build long-term authority models

They react to updates instead of anticipating outcomes.

In an AI-first search ecosystem, ignorance compounds faster than mistakes.

4. Optimization Cannot Compete with Engineering

At their core, SEO agencies sell optimization:

- Optimize pages

- Optimize keywords

- Optimize links

- Optimize performance

But optimization assumes the system is fixed.

Modern search systems are not fixed. They are adaptive, learning, and context-aware.

That’s where optimization collapses—and engineering begins.

Agencies Sell Optimization. ThatWare Builds Search Intelligence.

Optimization tweaks inputs. Search intelligence designs systems.

ThatWare doesn’t ask:

- “How do we rank this page?”

ThatWare asks:

- “How does a search engine construct trust around this entity?”

- “How does AI decide what information is worth recalling?”

- “How do we shape machine understanding at scale?”

This is not SEO as a service. This is search engineering as a discipline.

And in the next three years, only one of those survives.

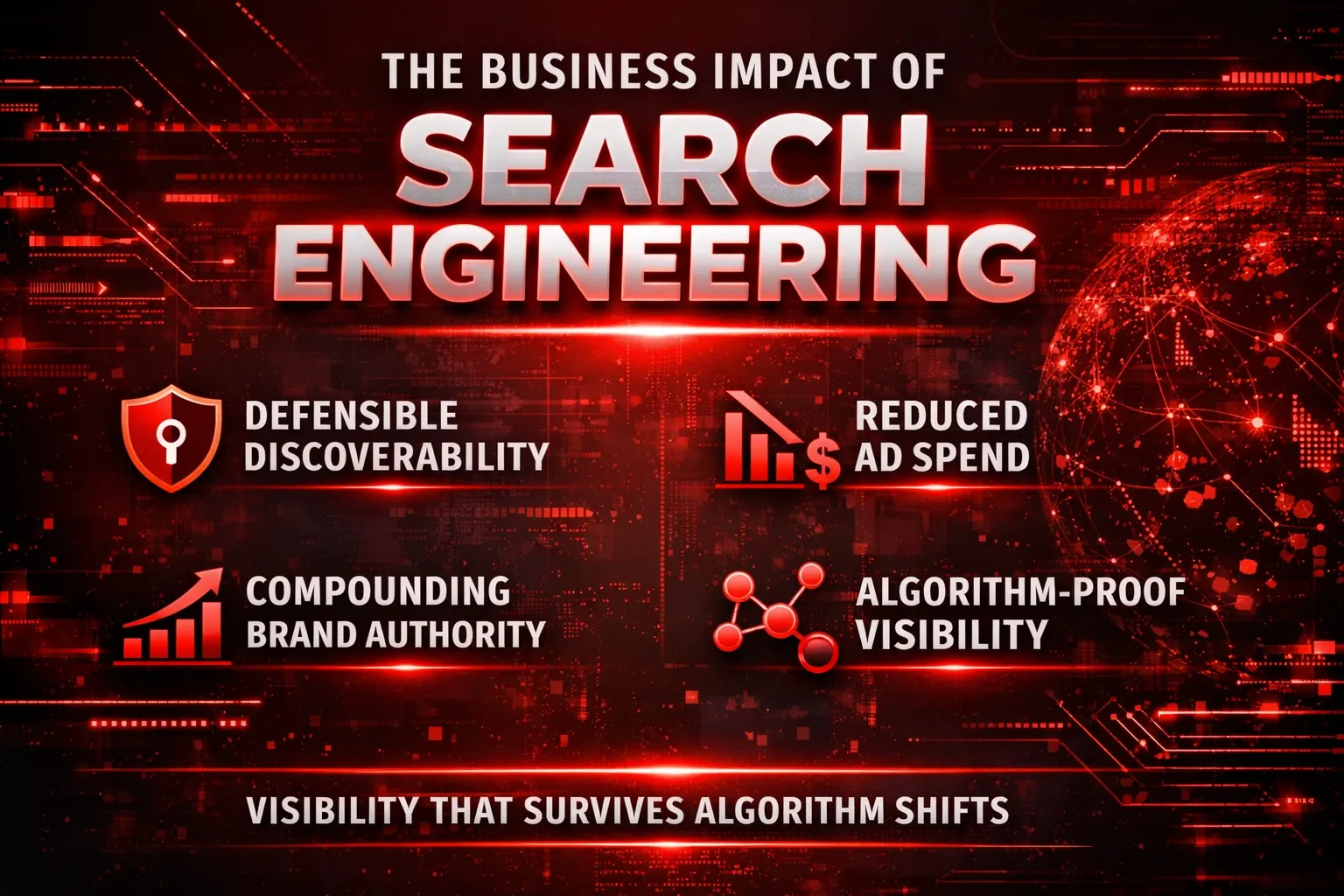

The Business Impact of Search Engineering

For executives and decision-makers, the value of Search Engineering is not theoretical—it is measurable, defensible, and compounding. While traditional SEO focuses on short-term visibility metrics, Search Engineering reframes search as a strategic business asset, not a marketing tactic.

Discoverability as a Defensible Asset

In the age of AI-driven search, discoverability is no longer about ranking a few pages; it is about owning a position in the machine’s understanding of reality. Search Engineering transforms your brand into a recognized, trusted entity within search ecosystems.

This creates a defensible moat: competitors can replicate keywords or content volume, but they cannot easily replicate algorithmic trust once it is established. Your brand becomes the default reference, not an interchangeable option.

In executive terms, discoverability shifts from being an expense line item to a long-term intangible asset that appreciates with time.

Reduced Dependence on Paid Acquisition

As paid channels grow more expensive and less predictable, reliance on ads becomes a strategic vulnerability. Search Engineering reduces this risk by embedding your brand into organic and AI-driven discovery layers—where users encounter answers, not advertisements.

When search engines and AI assistants consistently surface your brand as an authoritative source:

- Customer acquisition costs decline

- Marginal returns from paid spend improve

- Growth becomes less sensitive to budget fluctuations

Instead of “buying attention,” businesses earn persistent visibility.

Brand Authority That Compounds Over Time

Unlike traditional SEO, where results often plateau or decay, Search Engineering compounds. Each authoritative signal—entity recognition, topical dominance, cross-platform trust—reinforces the next.

Over time, this creates:

- Faster indexation and recognition of new content

- Greater retained trust during algorithm updates

- Increased likelihood of being cited, summarized, or recommended by AI systems

The result is a brand that doesn’t just rank. It accumulates authority, much as a strong brand builds equity over time.

Search Presence That Survives Algorithm Shifts

Algorithm updates are destructive only to strategies built on exploitation. Search Engineering is built on alignment, not loopholes.

Because it mirrors how modern search systems actually reason—through entities, trust models, and contextual understanding—it remains resilient as ranking factors evolve. Visibility is preserved not because tactics were optimized, but because the brand’s relevance is structurally embedded.

For leadership, this means predictability:

- Fewer revenue shocks from core updates

- Lower strategic risk in long-term planning

- Sustainable growth independent of platform volatility

Executive Takeaway

Search Engineering elevates search from a volatile marketing channel into a durable growth engine. It creates discoverability that competitors cannot easily displace, lowers dependency on paid media, compounds brand authority, and withstands algorithmic change.

This is why Search Engineering is not an upgrade to SEO—it is a business strategy for companies that intend to lead, not react.

ThatWare Is Not Preparing for the Future of SEO. It Is Authoring It.

Most brands are still trapped in a reactive loop—waiting for the next Google update, the next AI rollout, the next “best practice” to be announced by someone else. This mindset alone guarantees failure. In a search ecosystem driven by self-learning systems, waiting is no longer neutral behavior; it is a strategic disadvantage.

Search engines no longer change occasionally. They evolve continuously. By the time an update is identified, dissected, and turned into advice, the system has already moved forward. Optimization that depends on hindsight can never outperform systems built on foresight. This is why preparing for the future of SEO is itself an outdated idea—it assumes the future is something external that happens to you, rather than something you can shape.

This is where Search Engineering becomes the next competitive moat.

Unlike traditional SEO, which attempts to align with existing ranking signals, Search Engineering focuses on designing how search systems understand, trust, and retrieve information. It treats search not as a marketing channel, but as an engineered environment—one where entities, relationships, and authority are modeled intentionally. In this paradigm, visibility is not chased; it emerges naturally from structural relevance and algorithmic trust.

Brands that adopt Search Engineering stop asking, “How do we rank after the next update?” and start asking, “How do we become indispensable to the system itself?” That shift changes everything. When a brand is recognized as a reliable entity across knowledge graphs, AI models, and search interfaces, algorithm changes stop being threats. They become accelerators.

This is precisely the role ThatWare plays.

ThatWare does not function as an SEO agency reacting to algorithmic behavior. It operates as a search systems architect, designing long-term discoverability frameworks that align with how modern search engines actually think and learn. Instead of chasing volatile metrics like short-term rankings or traffic spikes, ThatWare engineers durable search intelligence—systems that compound authority, trust, and recall over time.

In doing so, ThatWare moves beyond “future-proofing.” Future-proofing implies defense. Authoring the future implies control.

The future of search will not be defined by those who wait for instructions from algorithms. It will be defined by those who understand their logic deeply enough to shape outcomes before the rules are written. ThatWare belongs firmly in the latter category—not preparing for what comes next, but actively writing the rulebook search engines will follow.

Choose Engineering Over Optimization

If you’re still asking, “How do we rank?” you’re asking the wrong question.

Ranking is no longer a goal—it’s a side effect. A temporary outcome in a system that no longer rewards surface-level optimization. Search engines don’t wake up each day looking for the most “SEO-friendly” page. They look for entities they can trust, understand, and reference with confidence.

The real question has changed.

“How do we become the entity search engines trust?”

That question doesn’t lead to keyword tweaks or backlink campaigns. It leads to deeper work—engineering how your brand is understood across data sources, how consistently it communicates expertise, and how reliably it fits into the knowledge models that modern search systems use to answer questions.

This is where optimization ends and engineering begins.

ThatWare doesn’t help brands chase algorithms. It helps them align with how algorithms think by building search intelligence systems that earn trust instead of trying to manipulate rankings.

Because in an AI-first search world, visibility is not awarded. It is engineered.

And those who engineer trust will always outrank those who merely optimize.