The Quiet Death of Keyword SEO

For more than two decades, SEO has been explained in deceptively simple terms: find the right keywords, optimize pages around them, and climb the rankings. This mental model became so deeply ingrained that, for many teams, it still defines how SEO success is measured and pursued. Keywords are researched, mapped, and inserted; rankings are tracked obsessively; traffic charts are celebrated. Yet beneath this familiar routine, something fundamental has already broken.

The SEO Myth That Refuses to Die

The belief that SEO equals keywords plus rankings persists because it once worked—and because its outputs are easy to measure. Keywords provide clarity, rankings provide validation, and traffic provides visible progress. But this belief has also shaped content strategies that are increasingly misaligned with how search engines actually work today.

The symptoms are everywhere: websites that rank but fail to convert, pages that attract traffic but never build authority, and brands that are visible yet forgettable. Content becomes fragmented into keyword-driven silos, each page optimized to win a query rather than explain a concept. The result is traffic without trust and rankings without real authority—visibility that doesn’t compound.

What Actually Changed (And Why Most Missed It)

What most teams missed is that search engines quietly stopped behaving like document-retrieval systems. They no longer operate primarily by asking, “Does this page match the query?” That question belonged to an era when search was about indexing and matching text.

Today, search engines ask a far more complex question: “Do we trust this source to reason correctly about the topic?” Modern search systems evaluate how consistently, accurately, and coherently a source explains ideas across contexts. Pages are no longer judged in isolation; they are treated as evidence of underlying understanding. This shift—from matching words to evaluating reasoning—redefines what optimization even means.

Defining the New SEO Paradigm

In this new landscape, SEO is no longer a mechanical exercise. It is better understood as intelligence engineering, knowledge reliability optimization, and machine–human trust alignment. The goal is not merely to be relevant, but to be dependable—to demonstrate thinking that machines can validate and humans can believe.

The core truth is simple but uncomfortable: search engines no longer rank content—they assess cognition, consistency, and credibility. And SEO, as a discipline, is being rebuilt around that reality.

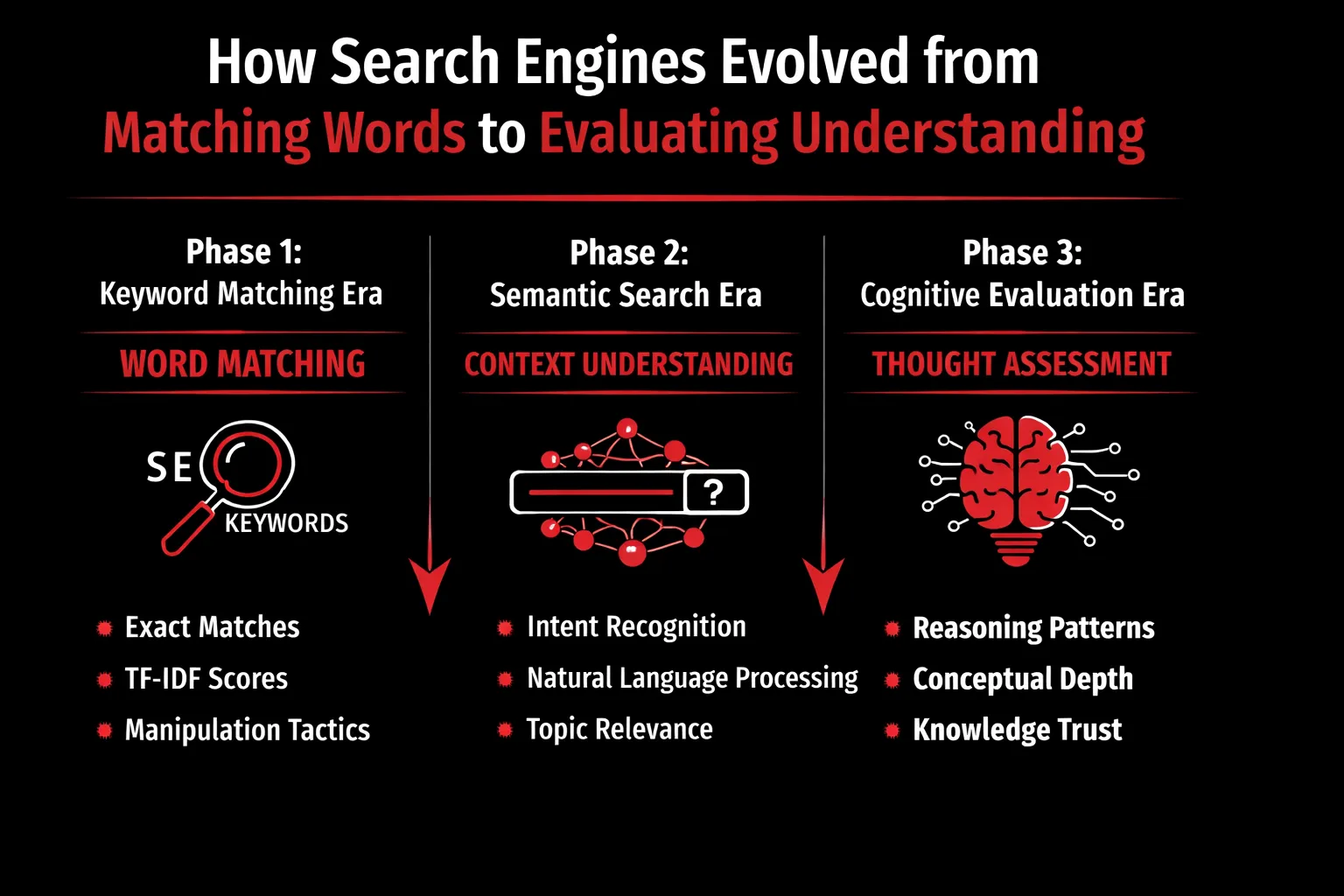

How Search Engines Evolved from Matching Words to Evaluating Understanding

Search engines did not become “intelligent” overnight. Their evolution mirrors a gradual shift from mechanical matching to meaning interpretation, and finally to reasoning and trust evaluation. Understanding this progression is essential to grasp why modern SEO is no longer about inserting the right keywords, but about demonstrating genuine understanding.

Phase 1: The Keyword Matching Era

In the earliest phase of search, engines operated as large-scale text matchers. Weighting schemes like TF-IDF (Term Frequency–Inverse Document Frequency) scored a term by how often it appeared on a page, discounted by how many documents in the index also contained it. The assumption was simple: if a page repeated the same terms a user searched for, it was likely relevant.
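To make the TF-IDF idea above concrete, here is a minimal sketch in Python. It assumes the simplest textbook form of the formula (raw term frequency times the log of inverse document frequency) and whitespace tokenization. The function name `tf_idf` and the three sample documents are hypothetical and exist only for illustration; production search systems used more elaborate variants.

```python
import math
from collections import Counter


def tf_idf(docs):
    """Return one {term: weight} dict per document (textbook TF-IDF)."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    # Document frequency: the number of documents that contain each term.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        total = len(tokens)
        scores.append({
            # Term frequency on this page, discounted for common terms.
            term: (count / total) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return scores


# Hypothetical pages: the first one repeats its target phrase.
docs = [
    "cheap flights cheap flights cheap flights book now",
    "how to find flights and compare airline prices",
    "a guide to airline baggage rules",
]
scores = tf_idf(docs)
print(scores[0]["flights"], scores[1]["flights"])
```

In this sketch the page that repeats "flights" scores higher for that term than the page that uses it once, which is exactly the weakness that keyword stuffing exploited.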

Pages were treated as isolated documents, evaluated independently of the broader site, author, or brand. There was little understanding of context, intent, or meaning—only statistical correlation between query terms and page text.

This environment made manipulation easy and profitable. Keyword stuffing, exact-match domains, repetitive anchor text, and thin pages optimized for single phrases worked because the system lacked interpretive depth. Search engines were not asking whether the content was right or useful—only whether it matched. SEO, at this stage, was largely a mechanical exercise.

Phase 2: Semantic & Contextual Search

As the web grew and manipulation scaled, keyword matching proved insufficient. This led to a major shift toward semantic and contextual understanding, marked by updates such as Hummingbird (2013), RankBrain (2015), and BERT (2019).

Hummingbird allowed Google to process queries as whole ideas rather than isolated words. RankBrain introduced machine learning to interpret unfamiliar or ambiguous queries. BERT further advanced this by enabling search engines to understand language nuances, such as prepositions, context, and sentence structure.

The goal shifted from matching strings to understanding intent. Search engines became better at identifying what users meant, not just what they typed.

However, semantic optimization still had limits. Many SEO strategies simply evolved into synonym stuffing, topic clustering without depth, or rephrasing existing content. While the engines understood language better, they still largely evaluated what was said, not how well it was understood.

Phase 3: Cognitive Evaluation (The Current Era)

Today, search engines function less like indexes and more like reasoning systems. They evaluate whether a source demonstrates real understanding of a topic by analyzing conceptual coherence, logical consistency, and topical depth across content.

Instead of judging pages in isolation, engines assess how ideas connect across a site, how consistently concepts are explained, and whether explanations reflect sound reasoning. A page is no longer an endpoint—it is an evidence node supporting (or undermining) the credibility of the broader entity behind it.

In this era, SEO success depends on demonstrating intelligence that can be trusted—not just by humans, but by machines designed to recognize reliable thinking.

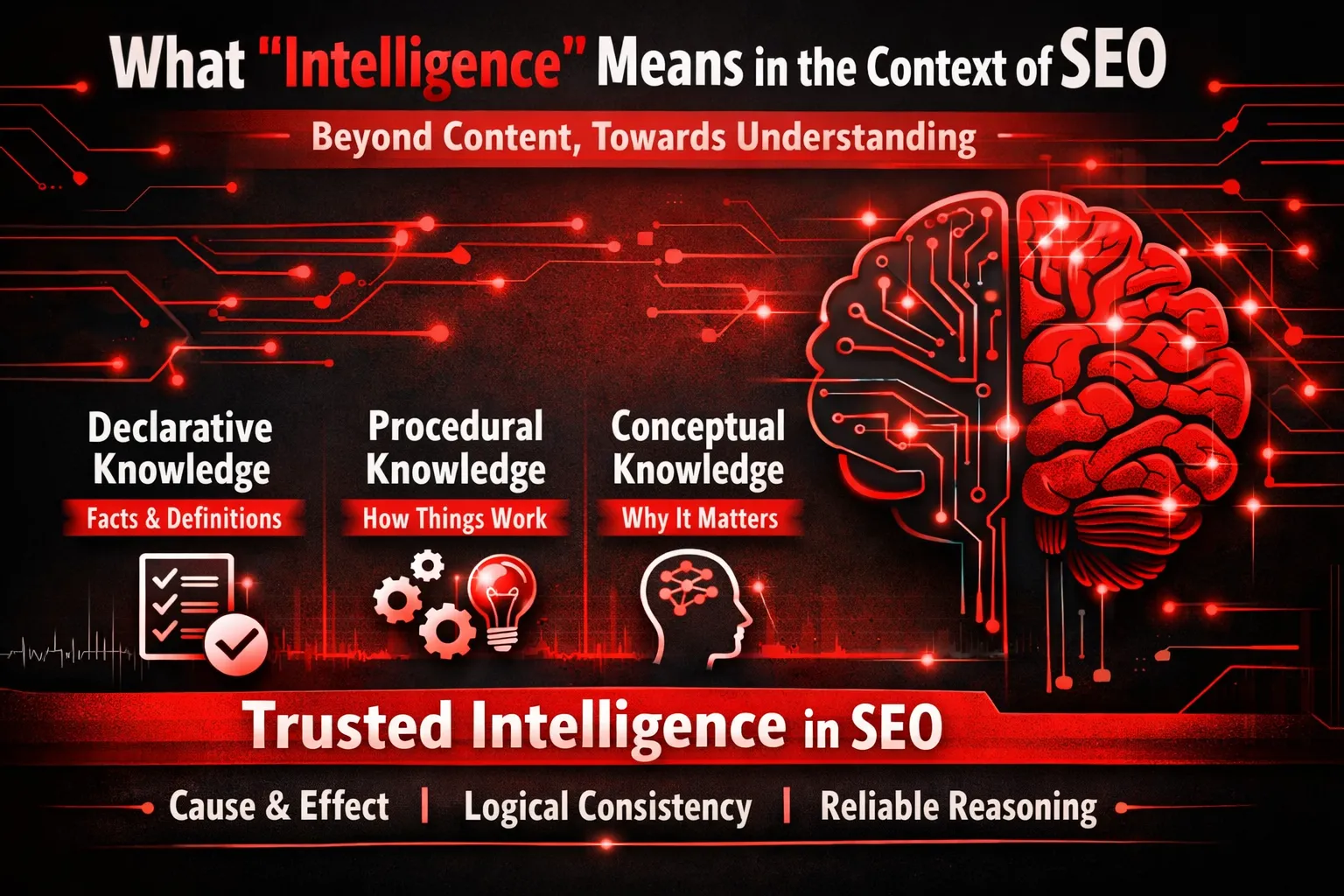

What “Intelligence” Means in the Context of SEO

When we say search engines now evaluate intelligence rather than content, we are not referring to word count, publishing frequency, or how many blog posts a brand produces in a month. Intelligence, in modern SEO, is about how well a source understands a topic, explains it consistently, and reasons about it over time. This shift fundamentally changes what authority looks like.

Intelligence ≠ Content Volume

For years, SEO rewarded scale. More pages meant more keywords, more entry points, and more chances to rank. That logic no longer holds. Publishing hundreds of articles does not automatically translate into authority if those articles repeat surface-level ideas or paraphrase what already exists elsewhere.

Search engines now distinguish between thin expertise at scale and deep reasoning expressed clearly. Thin expertise looks impressive in volume but collapses under scrutiny—definitions change from page to page, explanations lack causality, and insights never move beyond the obvious. Deep reasoning, on the other hand, may exist across fewer pages, but each page reinforces the same understanding, builds upon prior ideas, and demonstrates genuine comprehension of the subject.

Authority is no longer cumulative by quantity; it is compounded by clarity and coherence.

The Three Layers of Search Intelligence

Modern search systems evaluate content across multiple layers of understanding:

- Declarative Knowledge

This is the foundational layer—facts, definitions, and basic statements. For example, explaining what SEO is or defining a technical term. Almost every site can produce declarative knowledge, which makes it the weakest differentiator.

- Procedural Knowledge

This layer explains how things work. It includes processes, workflows, step-by-step explanations, and implementation details. Procedural knowledge signals experience, but it can still be copied or imitated.

- Conceptual Knowledge

This is where true intelligence emerges. Conceptual knowledge explains why something works, how different ideas relate to each other, and what underlying principles govern outcomes. It connects dots instead of listing steps.

Search engines increasingly prioritize this third layer because it is hardest to fake and easiest to validate over time.

Why Search Engines Prefer Conceptual Depth

Machines are no longer passive readers; they are evaluators of reasoning. At a conceptual level, search engines analyze:

- Cause-and-effect relationships rather than isolated claims

- Predictable reasoning paths instead of random tips

- Consistent explanations across contexts, queries, and formats

If a source explains why something matters in one article but contradicts itself elsewhere, trust erodes. Conceptual depth provides stability—machines can anticipate how you would answer related questions, even if you haven’t published that exact page yet.

Intelligence as Pattern Stability

Ultimately, intelligence in SEO is about pattern stability. Trust emerges when your explanations do not conflict across pages, your perspective remains logically aligned with observable reality, and your conclusions consistently follow from your premises.

Search engines are not just indexing topics; they are modeling how you think. They look for repeatable thinking patterns, not isolated keyword matches. When your content reflects stable, coherent reasoning, it signals something far more valuable than optimization—it signals intelligence worth trusting.

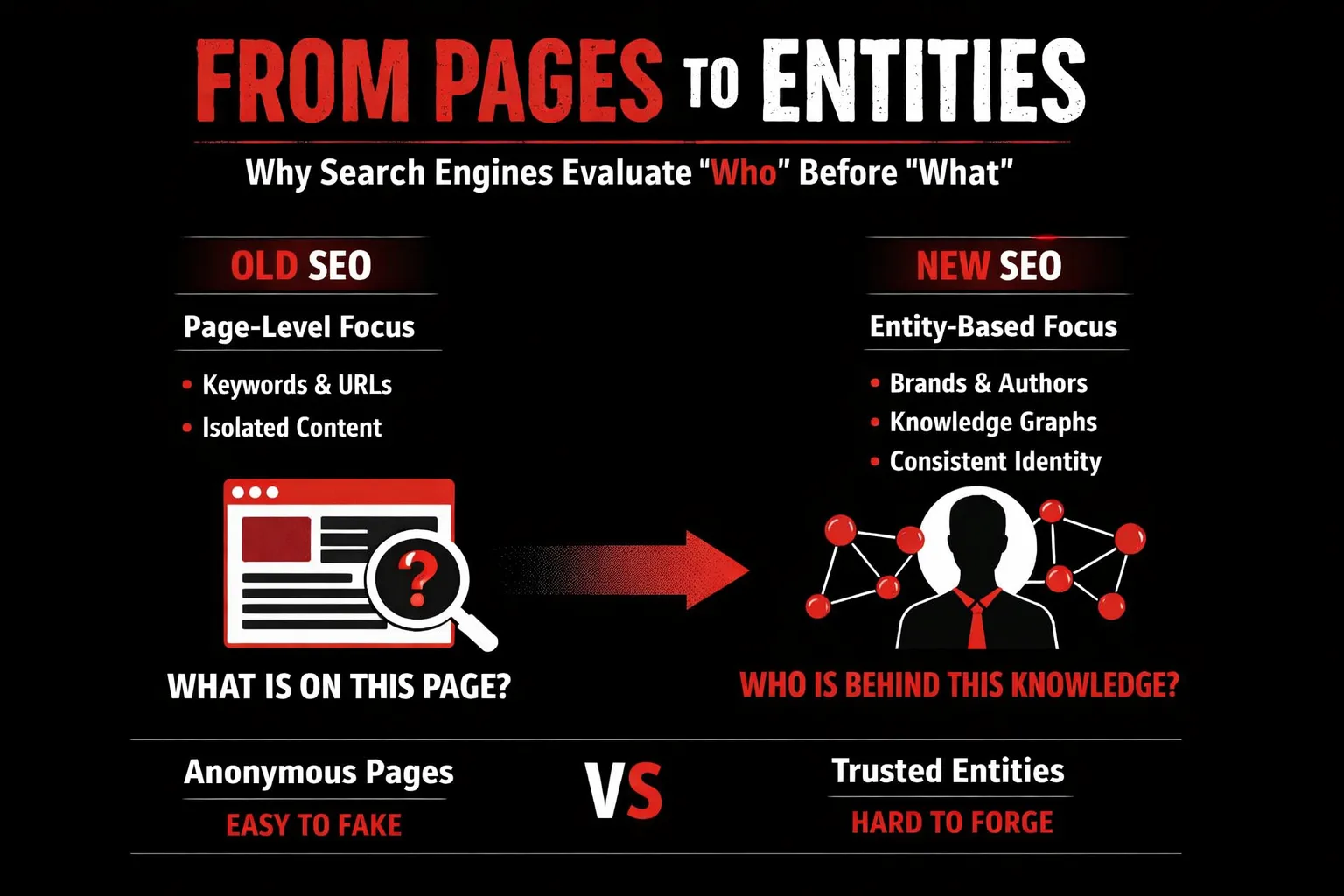

From Pages to Entities: Why Search Engines Evaluate “Who” Before “What”

For years, SEO operated on the assumption that pages were the unit of trust. Optimize a page well enough—right keywords, enough backlinks, decent structure—and it could rank, even if the source behind it was weak or inconsistent. That era is effectively over. Modern search engines have learned a hard lesson: pages are easy to manipulate, but identities are not.

The Decline of Page-Level Trust

A page can be rewritten overnight. It can be optimized, over-optimized, or spun into something it was never meant to be. This made page-level evaluation unreliable at scale. As manipulation increased, search engines shifted their focus upward—from what a page says to who is saying it.

Entities, unlike pages, carry history. They accumulate behavior, consistency, and context over time. While a page can be faked, an entity that repeatedly demonstrates expertise across multiple touchpoints is far harder to fabricate convincingly. This is why page-level trust has declined—and entity-level trust has taken its place.

What Is an Entity in Modern Search?

In modern search systems, an entity is a uniquely identifiable “thing” that exists independently of any single page. This includes brands, authors, products, organizations, and even well-defined concepts.

What makes entities powerful is persistence. An entity is not confined to one URL—it is represented across:

- Content published on-site

- Mentions across the web

- Structured data and schema

- External references, citations, and associations

Search engines build a unified understanding of an entity by connecting these signals. The result is a persistent digital identity that can be evaluated, remembered, and compared over time.
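Of the signals listed above, structured data is the one a team controls most directly. The following is a minimal sketch, assuming a hypothetical publisher called "Example Co" and a hypothetical author "Jane Doe", of how an article can be tied to its author and organization with schema.org JSON-LD. The helper functions are illustrative, not part of any library; the `@type` values and the `sameAs`, `author`, and `publisher` properties come from the schema.org vocabulary.

```python
import json


def organization(name, url, same_as):
    """Describe the publishing entity; sameAs lists profiles of the same entity."""
    return {
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }


def article(headline, author_name, publisher):
    """Attach an article to an identifiable author and publisher."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": publisher,
    }


# All names and URLs below are placeholders.
publisher = organization(
    "Example Co",
    "https://www.example.com",
    ["https://www.linkedin.com/company/example-co"],
)
markup = article("How Internal Linking Works", "Jane Doe", publisher)
json_ld = json.dumps(markup, indent=2)
print(f'<script type="application/ld+json">\n{json_ld}\n</script>')
```

Reusing the same publisher and author descriptions on every page is one practical way to keep on-site signals pointing at a single entity.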

How Entity Confidence Is Built

Entity confidence doesn’t come from one great article. It comes from pattern consistency. Search engines look for:

- Consistent messaging across topics and formats

- Repeated demonstration of expertise in a specific domain

- Clear topical focus sustained over time

When an entity explains the same concepts in compatible ways across multiple contexts, it signals reliable reasoning. That reliability is what search engines learn to trust.

Why Intelligence Is Always Entity-Attached

Intelligence without identity is noise. Search engines do not trust anonymous insight, no matter how well-written it appears. They trust identifiable, repeatable sources whose thinking can be observed, validated, and cross-checked.

In today’s SEO landscape, intelligence is not evaluated in isolation. It is always attached to someone or something. And the stronger, clearer, and more consistent that entity is, the more likely its intelligence will be trusted—by machines and humans alike.

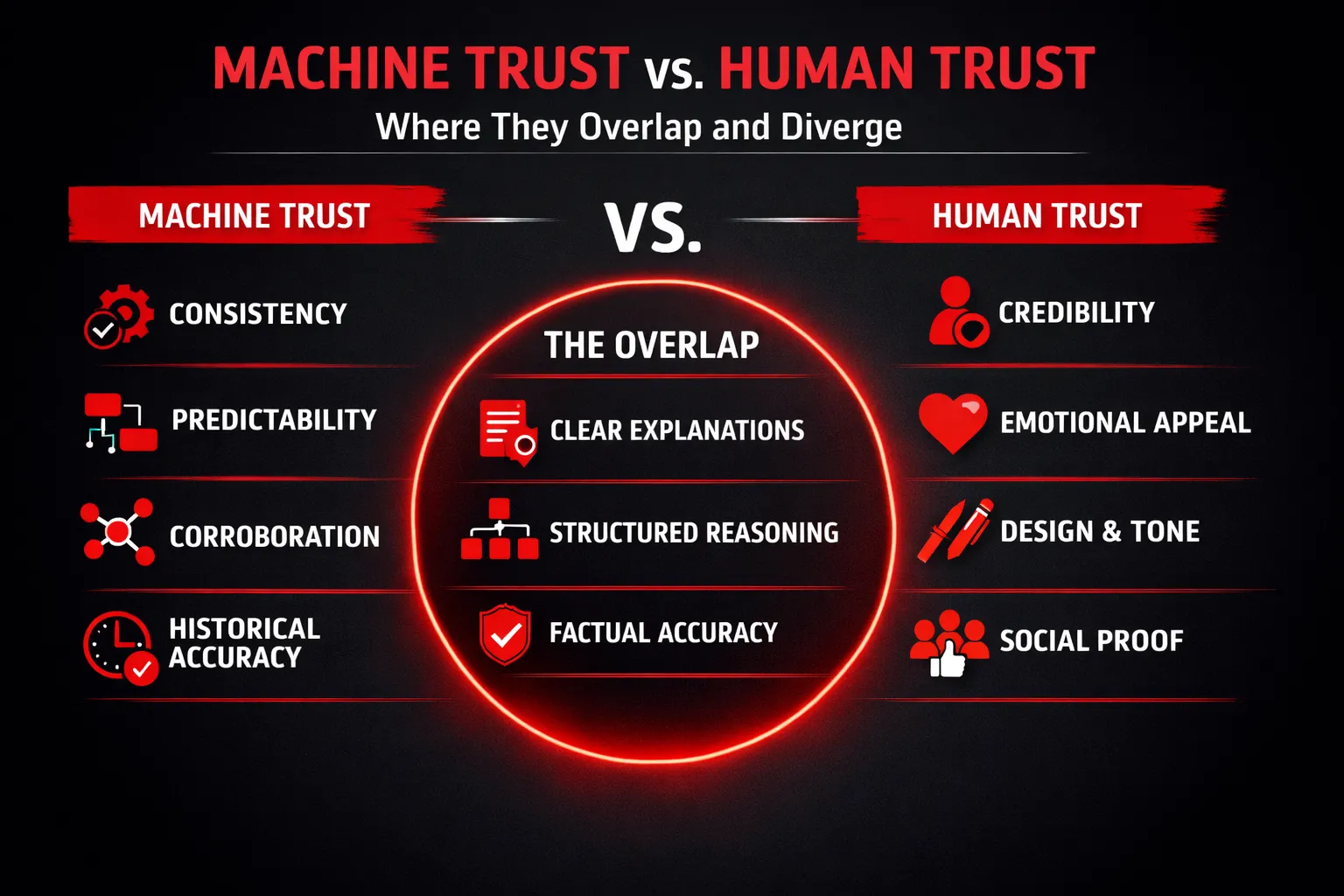

Machine Trust vs Human Trust: Where They Overlap and Diverge

Trust has always been at the heart of search—but the nature of that trust has fundamentally changed. In the past, SEO focused almost entirely on human persuasion: attract clicks, reduce bounce rates, and look authoritative enough to convince users. Today, that equation is incomplete. Before a human ever sees your content, a machine has already decided whether it deserves to be shown. Understanding the difference between how humans trust and how machines trust—and where those two systems intersect—is now essential to modern SEO.

How Humans Decide Trust

Human trust is intuitive, emotional, and often subconscious. When a user lands on a page, they make rapid judgments based on signals that feel credible rather than those that are objectively verifiable.

Visual design plays a major role. Clean layouts, readable typography, and professional UI create an immediate sense of legitimacy. Tone and language matter just as much—confident, clear, and authoritative writing builds reassurance, while vague or overly promotional language erodes it. Humans also rely heavily on social proof: testimonials, brand mentions, case studies, author bios, and recognizable logos. These signals answer an implicit question in the user’s mind: “Do others trust this source?”

In short, humans trust what looks credible, sounds confident, and feels familiar.

How Machines Decide Trust

Machines operate on a completely different logic. They don’t respond to aesthetics or persuasion. Search engines and AI systems evaluate trust through patterns and reliability over time.

Consistency is a primary signal. Machines look for stable definitions, aligned explanations, and non-contradictory viewpoints across pages. Predictability matters: trustworthy sources explain topics in a way that aligns with known facts and expected outcomes. Corroboration is critical—claims that match other trusted sources strengthen confidence, while isolated or extreme assertions weaken it. Over time, historical accuracy becomes decisive. Sources that are repeatedly correct gain trust; those that mislead or fluctuate lose it.

Machines don’t ask, “Does this look authoritative?” They ask, “Has this source proven reliable?”

The Overlap Zone

The most powerful SEO happens in the overlap between machine trust and human trust. Clear explanations benefit both. Structured reasoning—step-by-step logic, defined terms, and causal clarity—makes content easier for machines to interpret and humans to understand. The absence of exaggeration is critical: measured, precise language builds credibility across both audiences.

This overlap zone is where intelligence becomes visible.

Why SEO Must Satisfy Both

Machine trust enables visibility. Without it, your content never reaches the user. Human trust enables conversion. Without it, visibility produces no value. Intelligence—clear, consistent, and reliable reasoning—is the bridge between the two. Modern SEO succeeds not by choosing between humans and machines, but by earning the trust of both simultaneously.

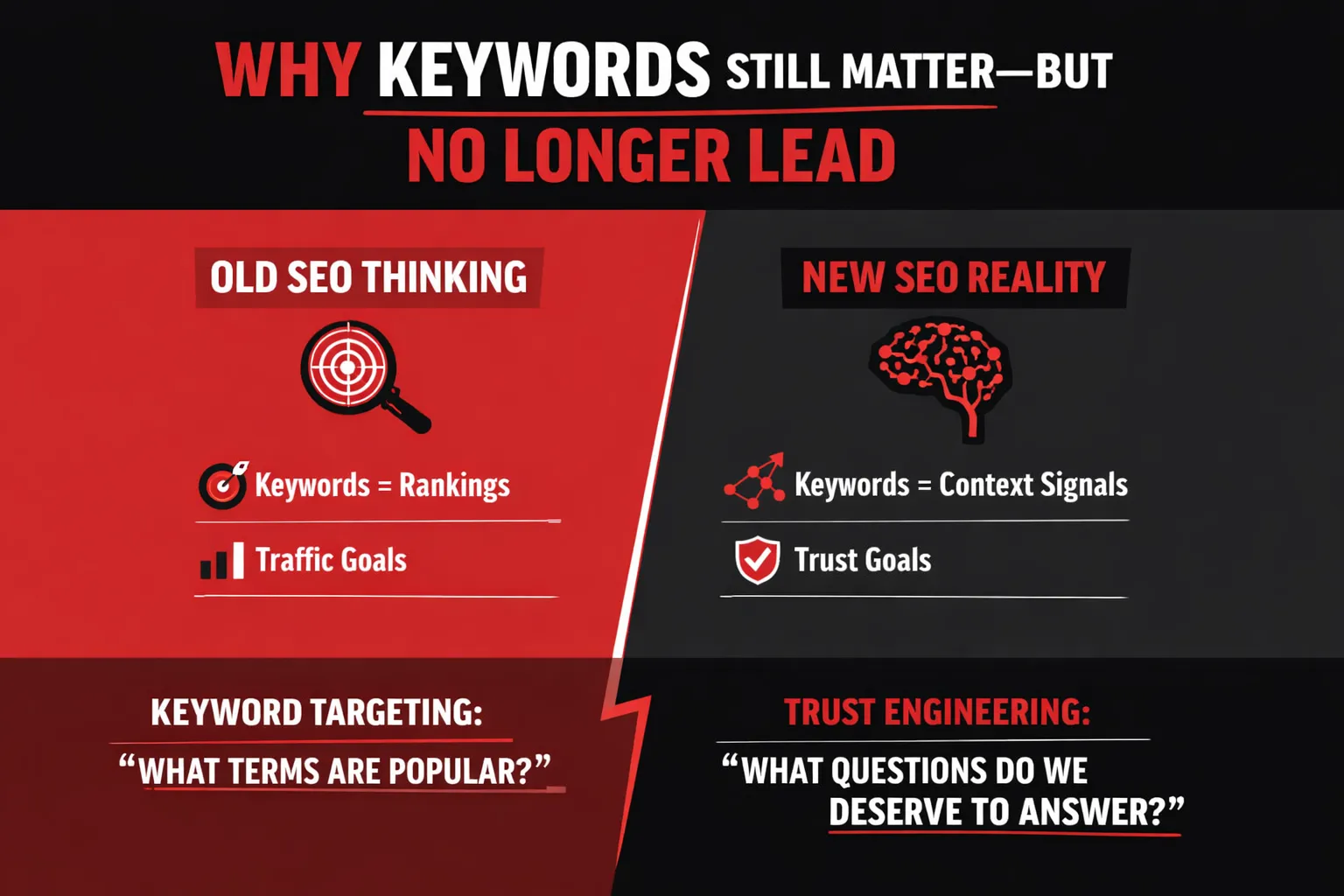

Why Keywords Still Matter—But No Longer Lead

Keywords are not dead—but their role in SEO has been fundamentally redefined. In modern search, keywords no longer act as ranking levers. Instead, they function as entry signals—the starting point that helps search engines recognize what domain of knowledge a piece of content belongs to. The mistake many brands make is assuming that because keywords still exist, they still lead. They don’t.

Keywords as Entry Signals, Not Ranking Drivers

Search engines use keywords primarily to identify the topic space a page is attempting to address. They help systems determine whether a piece of content is about “technical SEO,” “brand trust,” or “AI search behavior.” Once that topic is recognized, keywords largely step out of the decision-making process.

Authority is not granted because a keyword appears frequently or prominently. It is granted when a source demonstrates consistent, reliable understanding of the topic across multiple contexts. In other words, keywords open the door—but intelligence decides whether you’re allowed to stay in the room.

The Role of Keywords in Cognitive SEO

In cognitive SEO, keywords serve a structural role, not a competitive one. They help define topical boundaries, signaling what a piece of content will and will not cover. This allows search engines to accurately position your content within a broader knowledge graph.

Keywords also help machines categorize context. When used naturally and consistently, they reinforce semantic clarity, ensuring that your explanations align with the correct conceptual neighborhood. However, their value lies in supporting understanding—not in forcing relevance.

The Danger of Keyword-First Thinking

When keywords lead strategy, content fractures. Teams create multiple pages targeting slight keyword variations, resulting in content fragmentation. Explanations become repetitive, shallow, or—worse—contradictory. Over time, this produces conflicting interpretations of the same topic across a site, eroding machine trust.

Search engines don’t penalize this immediately—but they do stop believing you.

Reframing Keyword Research

Modern SEO requires a shift in mindset. Instead of asking, “What keywords should we target?” the more powerful question is:

“What questions should we be trusted to answer?”

Keywords should emerge naturally from clear, authoritative answers—not dictate them. In the era of cognitive search, trust is built by depth, coherence, and consistency—not by keyword dominance.

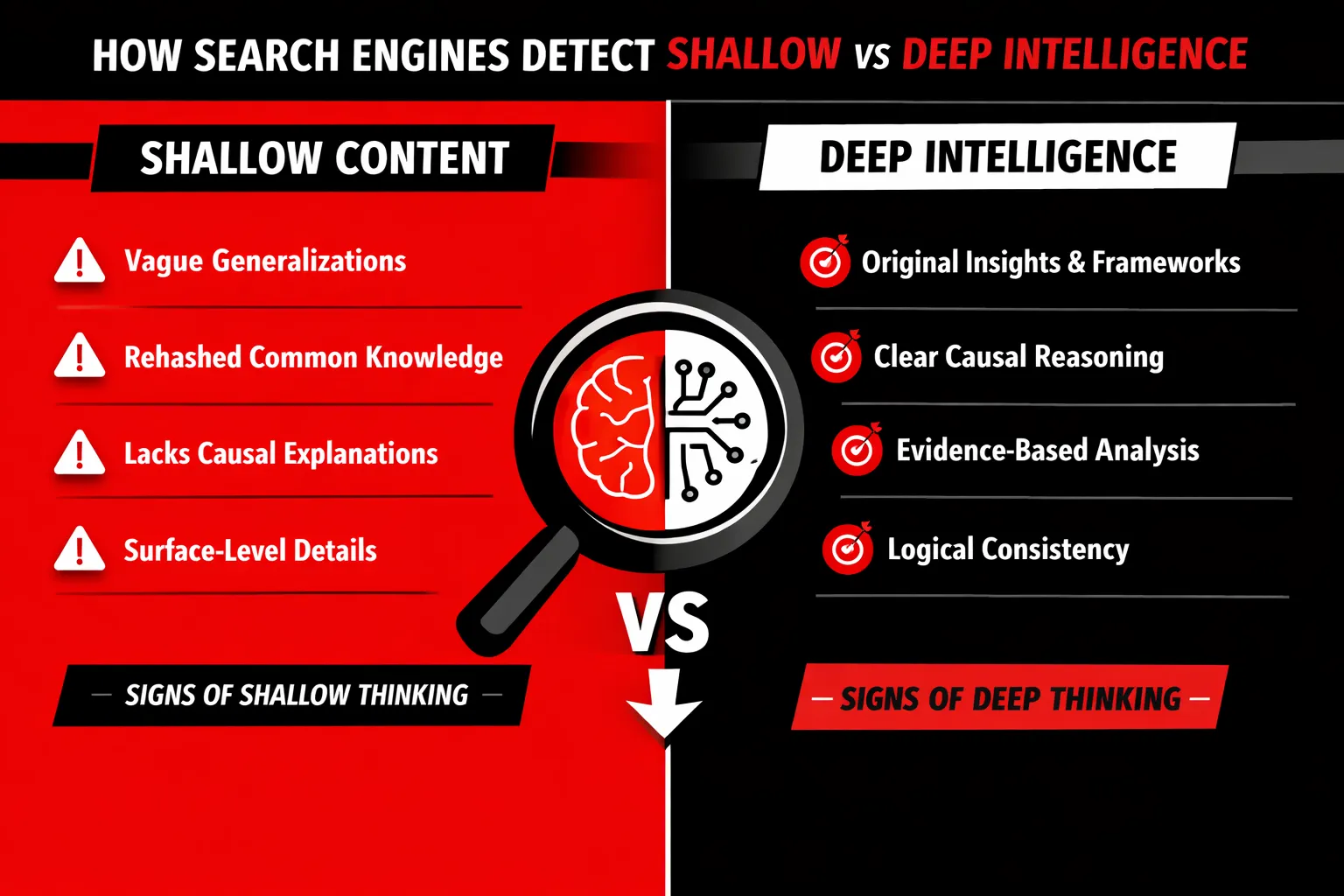

How Search Engines Detect Shallow vs Deep Intelligence

Search engines no longer evaluate content at face value. They evaluate how well a source thinks. Beneath rankings and visibility lies a deeper filtering system—one that separates shallow information from genuinely reliable intelligence. This distinction determines not just where a page appears, but whether a source deserves to be trusted repeatedly across queries, contexts, and time.

Signals of Shallow Content

Shallow content is not defined by length or formatting. It is defined by cognitive weakness.

One of the most common signals is over-generalization. When content makes broad claims without boundaries—“SEO is all about quality content” or “AI changes everything”—it offers no precision for machines to evaluate. Search engines look for specificity because intelligence operates within constraints, not slogans.

Another strong indicator is the absence of causal explanation. Shallow content describes what happens but avoids explaining why. For example, stating that “internal linking improves rankings” without explaining how it influences crawl paths, entity reinforcement, or semantic clustering leaves a conceptual gap. Machines interpret this as incomplete reasoning.

Finally, reworded common knowledge is a major red flag. Content that simply paraphrases what already exists across the web adds no new understanding. Even if grammatically polished, it lacks informational gain. Search engines are designed to detect redundancy at scale, and repetition without insight signals low intellectual contribution.
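Redundancy between two passages can be estimated in many ways. As a rough illustration, the sketch below uses word shingles and Jaccard similarity, a standard near-duplicate technique. It is not a claim about how any particular search engine measures informational gain, and the function names and sample sentences are hypothetical.

```python
def shingles(text, k=3):
    """Return the set of k-word sequences (shingles) in a text."""
    words = text.lower().split()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b, k=3):
    """Share of shingles two texts have in common (0.0 to 1.0)."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa and not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


original = "internal linking helps search engines discover pages on your site"
reworded = "internal linking helps search engines discover pages across your website"
distinct = "crawl budget is spent on urls reachable from pages already known"

print(jaccard(original, reworded))  # substantial overlap
print(jaccard(original, distinct))  # no shared three-word sequences
```

A lightly reworded sentence keeps most of its shingles, so it scores far closer to the original than a sentence that contributes a different idea. Editors can run a check like this across drafts to spot pages that restate each other.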

Signals of Deep Intelligence

Deep intelligence is detectable because it demonstrates thinking, not just writing.

One of its strongest markers is the presence of original frameworks. When content introduces a new way to organize ideas, explain relationships, or classify concepts, it shows interpretive effort. Machines recognize frameworks as evidence of independent reasoning rather than extraction.

Another signal is clearly stated assumptions. Intelligent content reveals the conditions under which its claims hold true. By clarifying context—industry, scale, constraints—it reduces ambiguity. This clarity increases predictability, which search engines associate with trust.

Lastly, logical progression matters. Deep content moves step by step, building ideas in sequence rather than stacking claims randomly. When conclusions follow premises cleanly, machines can model the reasoning path. This coherence is a critical trust signal.

Cross-Page Consistency Checks

Search engines do not evaluate pages in isolation. They perform cross-page intelligence audits.

If one page defines SEO as a technical discipline and another frames it purely as content marketing, the inconsistency weakens entity trust. Search systems check whether your pages agree with each other conceptually, not just topically.

Stable definitions across topics reinforce reliability. Consistency signals that the source is not reacting opportunistically to keywords but operating from a unified understanding.

The Role of Temporal Trust

Intelligence is not judged once—it is judged over time.

Search engines track historical accuracy. When a source repeatedly publishes information that ages well, the trust it has earned compounds. Conversely, recurring errors, exaggerated predictions, or constantly shifting stances degrade credibility.

Search engines remember past mistakes because intelligence is cumulative. Trust, once broken, requires far more evidence to rebuild. In modern SEO, being consistently right matters more than being momentarily visible.

Deep intelligence endures. Shallow content expires.

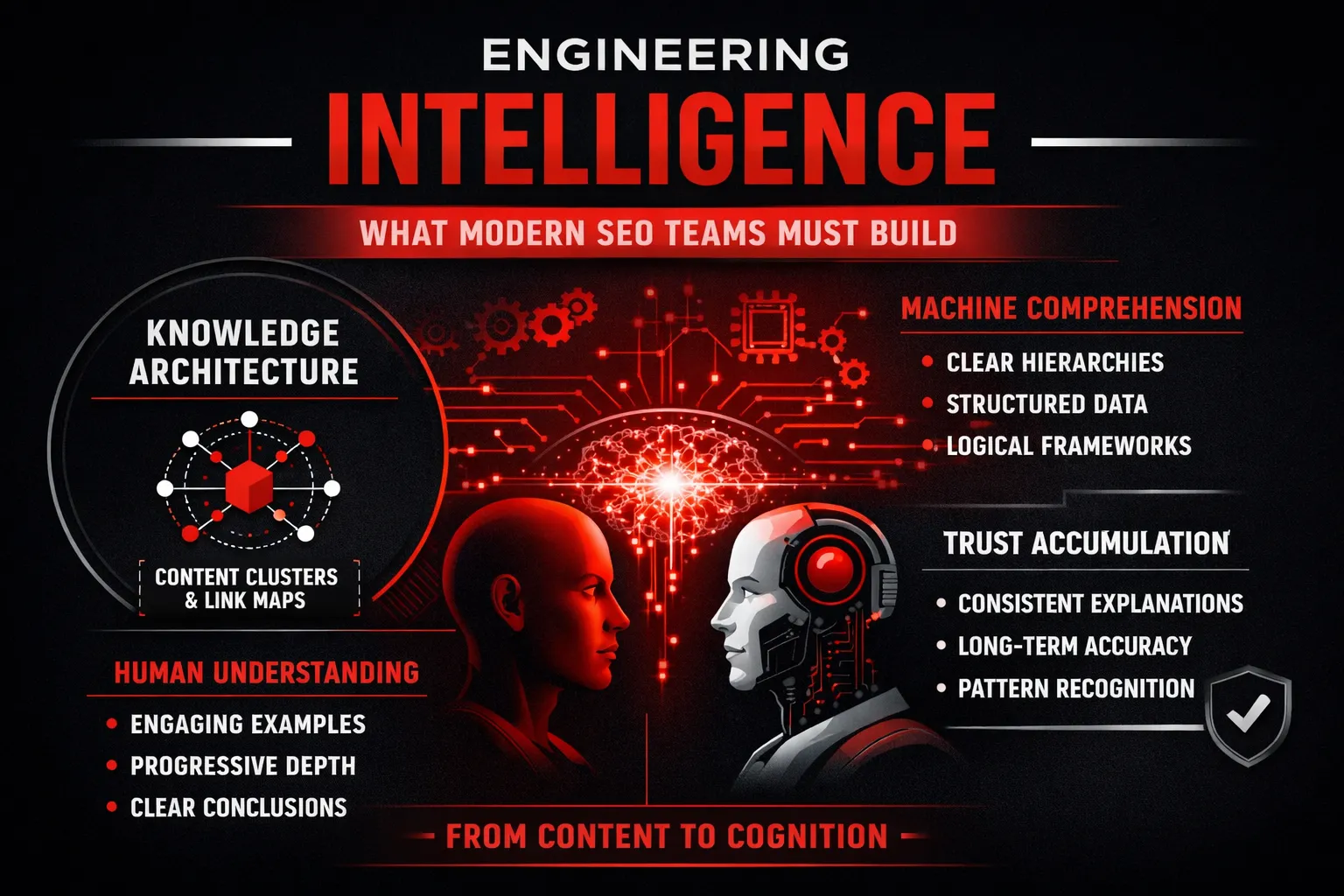

Engineering Intelligence: What Modern SEO Teams Must Build

If SEO has shifted from ranking pages to earning trust, then modern SEO teams are no longer content producers—they are intelligence architects. The goal is not to publish more pages, but to engineer a system of knowledge that search engines and humans can reliably understand, validate, and return to over time.

This requires moving beyond traditional content strategy into something deeper: intentional intelligence design.

SEO as Knowledge Architecture

Modern SEO works less like publishing and more like building a knowledge system. Individual blog posts are no longer standalone assets; they function as nodes within a larger conceptual network.

Content clusters should be designed as knowledge systems, not keyword silos. Each piece should answer a specific question while reinforcing a broader understanding of the topic. Instead of repeating similar explanations across multiple pages, each page contributes a distinct layer of meaning—definitions, implications, applications, or edge cases.

Internal linking, in this model, is not only about distributing link equity. It acts as a reasoning path. Links guide both users and machines through a logical progression of ideas, showing how concepts relate, depend on each other, or expand in complexity. A well-structured internal link graph mirrors how an expert would explain the subject step by step, signaling coherence and depth to search engines.
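One way to check whether internal links really form a path is to treat the site as a graph and walk it from a foundational page. The sketch below is an editorial audit aid under stated assumptions, not a model of how search engines evaluate links. It assumes a hypothetical site map given as a dictionary of page to outgoing links, and all URLs and function names are placeholders.

```python
from collections import deque


def click_depths(links, start):
    """Breadth-first search: fewest clicks needed to reach each page from start."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def unreachable_pages(links, start):
    """Pages that no chain of links from start ever leads to."""
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return sorted(all_pages - set(click_depths(links, start)))


# Hypothetical site: page -> pages it links to.
site = {
    "/seo-basics": ["/how-crawling-works", "/what-is-an-entity"],
    "/how-crawling-works": ["/internal-linking"],
    "/what-is-an-entity": ["/internal-linking"],
    "/internal-linking": [],
    "/old-keyword-page": ["/seo-basics"],
}

print(click_depths(site, "/seo-basics"))
print(unreachable_pages(site, "/seo-basics"))
```

Pages that sit many clicks from the core concept, or that cannot be reached from it at all, are candidates for relinking or consolidation.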

Designing for Machine Comprehension

Machines do not infer meaning the way humans do. They rely on structure, clarity, and repetition of ideas across contexts. Designing for machine comprehension means reducing ambiguity.

Clear hierarchies help search engines understand what is foundational versus what is derivative. Core concepts should be consistently treated as such across the site, while supporting ideas remain clearly subordinate.

Explicit definitions are critical. When you define key terms precisely—and reuse those definitions consistently—you reduce interpretive uncertainty. This consistency helps machines build stable associations between concepts and your brand or entity.

Concept reuse reinforces trust. When the same ideas appear across multiple pages with aligned explanations, search engines detect predictable reasoning patterns. Predictability, not novelty, is what machines trust most.

Designing for Human Comprehension

While machines value structure, humans value clarity and progression. Intelligence must be accessible, not overwhelming.

Progressive depth ensures readers can engage at different levels of understanding. Beginners should grasp the fundamentals, while advanced readers can explore deeper layers without friction.

Examples and analogies translate abstract concepts into practical understanding. They anchor intelligence in real-world contexts, increasing credibility and retention.

Clear conclusions matter. Each piece of content should leave the reader with a resolved understanding—not just information, but insight. When humans feel intellectually satisfied, machines often interpret this as quality through engagement and consistency signals.

From Content Strategy to Intelligence Strategy

The final shift is philosophical. Content strategy focuses on output. Intelligence strategy focuses on reliability.

Editorial guidelines must prioritize:

- Reasoning quality: Are arguments logical, structured, and defensible?

- Consistency: Do explanations align across all related content?

- Trust accumulation: Does each new piece reinforce long-term credibility?

When SEO teams build intelligence instead of content, visibility becomes a byproduct. Search engines don’t reward volume—they reward trustworthy thinking, engineered at scale.

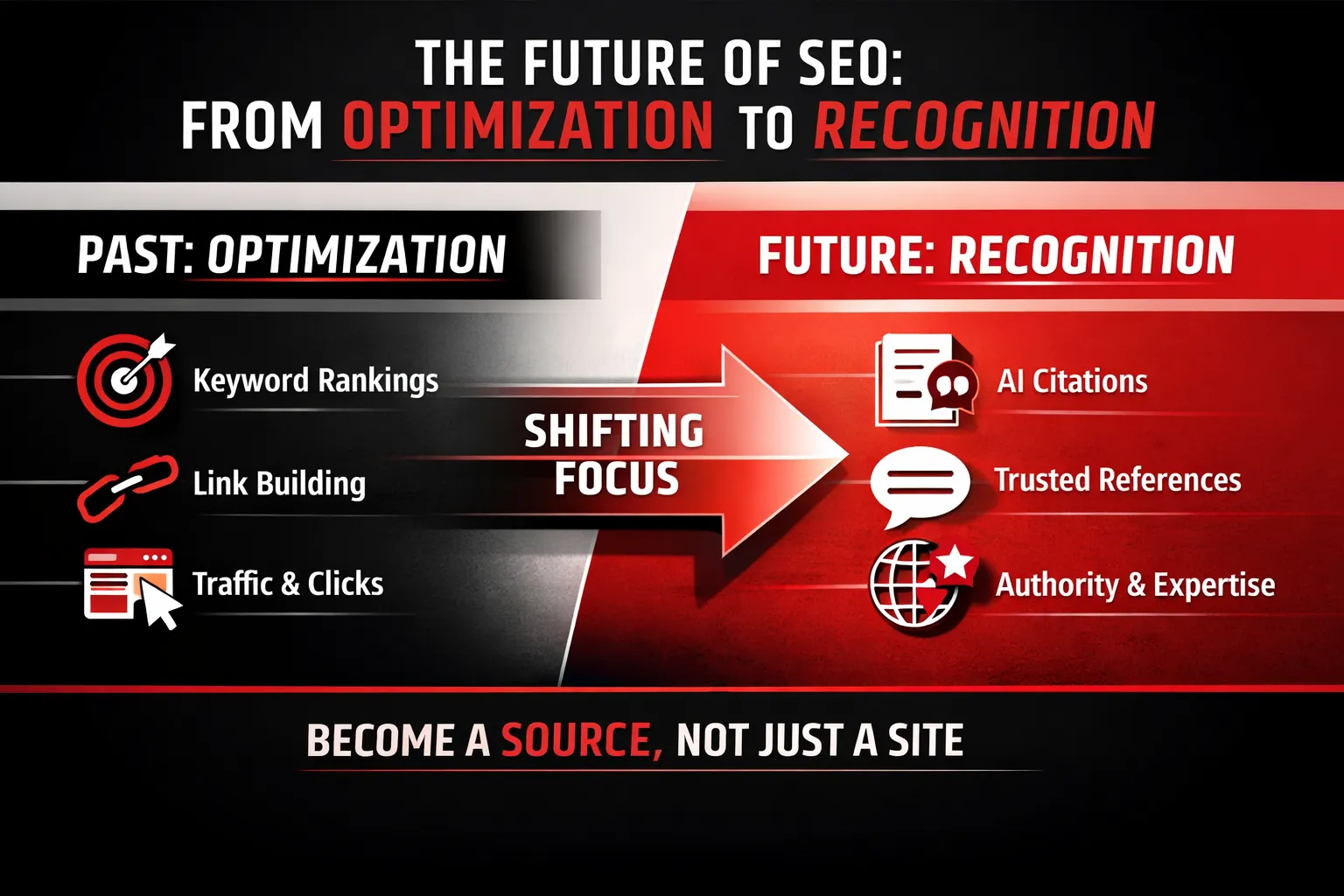

The Future of SEO: From Optimization to Recognition

The future of SEO is not a better algorithmic trick or a smarter optimization tactic—it is a fundamental shift in what success looks like. As search engines evolve from retrieval systems into reasoning engines, ranking is becoming a side effect, not the goal. Visibility no longer depends on where a page appears, but on whether a brand’s intelligence is recognized, trusted, and reused across search and AI systems.

Why Ranking Will Become Secondary

Traditional rankings were designed for a search environment where users clicked through lists of links. That behavior is rapidly disappearing. AI-powered search experiences now generate direct answers, summaries, and explanations, often without sending users to any website at all. This rise of AI answers means the primary interaction happens inside the search interface, not on the destination page.

At the same time, zero-click searches are becoming the norm. Users get what they need immediately—definitions, comparisons, step-by-step guidance—without scrolling past the first screen. In this environment, ranking first matters far less than being understood well enough to be included in the answer itself.

This is where citation-based visibility changes the rules. Search engines and AI systems increasingly rely on a small set of trusted sources to generate responses. If your brand’s knowledge is referenced, paraphrased, or cited, you gain visibility even without traffic. The question shifts from “Are we ranking?” to “Are we being used?”

Recognition as the New KPI

In the new SEO landscape, recognition replaces ranking as the primary KPI. Success is measured by signals such as:

- Being referenced when AI systems explain a topic

- Being quoted in synthesized answers

- Being used as a source across multiple queries and contexts

These signals indicate something far more valuable than a top position: machine trust. Recognition means search systems have identified your brand as a reliable authority whose explanations hold up across use cases. It reflects accumulated confidence, not one-time optimization.

Brands as Knowledge Providers

As this shift accelerates, brands are no longer competing merely as websites—they are competing as knowledge providers. The most visible brands will be those whose content is good enough to function as training data for AI systems and reliable enough to serve as trusted explainers.

In the future of SEO, the winners won’t be the best optimizers. They’ll be the brands that consistently demonstrate intelligence worth recognizing, remembering, and reusing.

Conclusion: SEO as the Discipline of Trustworthy Intelligence

The evolution of SEO can be summarized in three connected shifts. We have moved from keywords to cognition, where matching search terms is no longer enough, and demonstrating genuine understanding has become essential. We have shifted from pages to entities, where isolated URLs hold less value than consistent, recognizable sources of knowledge. And most importantly, we have transitioned from ranking to trust, where visibility is earned not through optimization tricks, but through sustained credibility across time, topics, and contexts.

This shift explains why many traditional SEO strategies feel increasingly ineffective. Producing more content, targeting more keywords, or chasing short-term ranking gains no longer guarantees meaningful visibility. Search engines are no longer acting as simple retrieval systems. They are evaluative systems designed to identify which sources think clearly, explain accurately, and remain consistent as knowledge evolves. In this environment, SEO stops being a marketing tactic and becomes an intellectual discipline.

The core truth is simple but uncomfortable: search engines don’t reward content creation—they reward reliable thinking at scale. They look for patterns of sound reasoning, stable definitions, and trustworthy explanations that hold up across multiple queries and user journeys. Intelligence, not volume, becomes the differentiator.

This is where the future of SEO demands a new question. Instead of asking, “How do we rank this page?”—a question rooted in manipulation and short-term wins—we must ask, “Would a machine trust our reasoning?” That single shift in mindset changes everything: how content is planned, how expertise is expressed, and how authority is built.

In the end, the brands that win search will not be the loudest or the most prolific. They will be the ones whose intelligence is clear, consistent, and worthy of trust—by both humans and machines alike.