
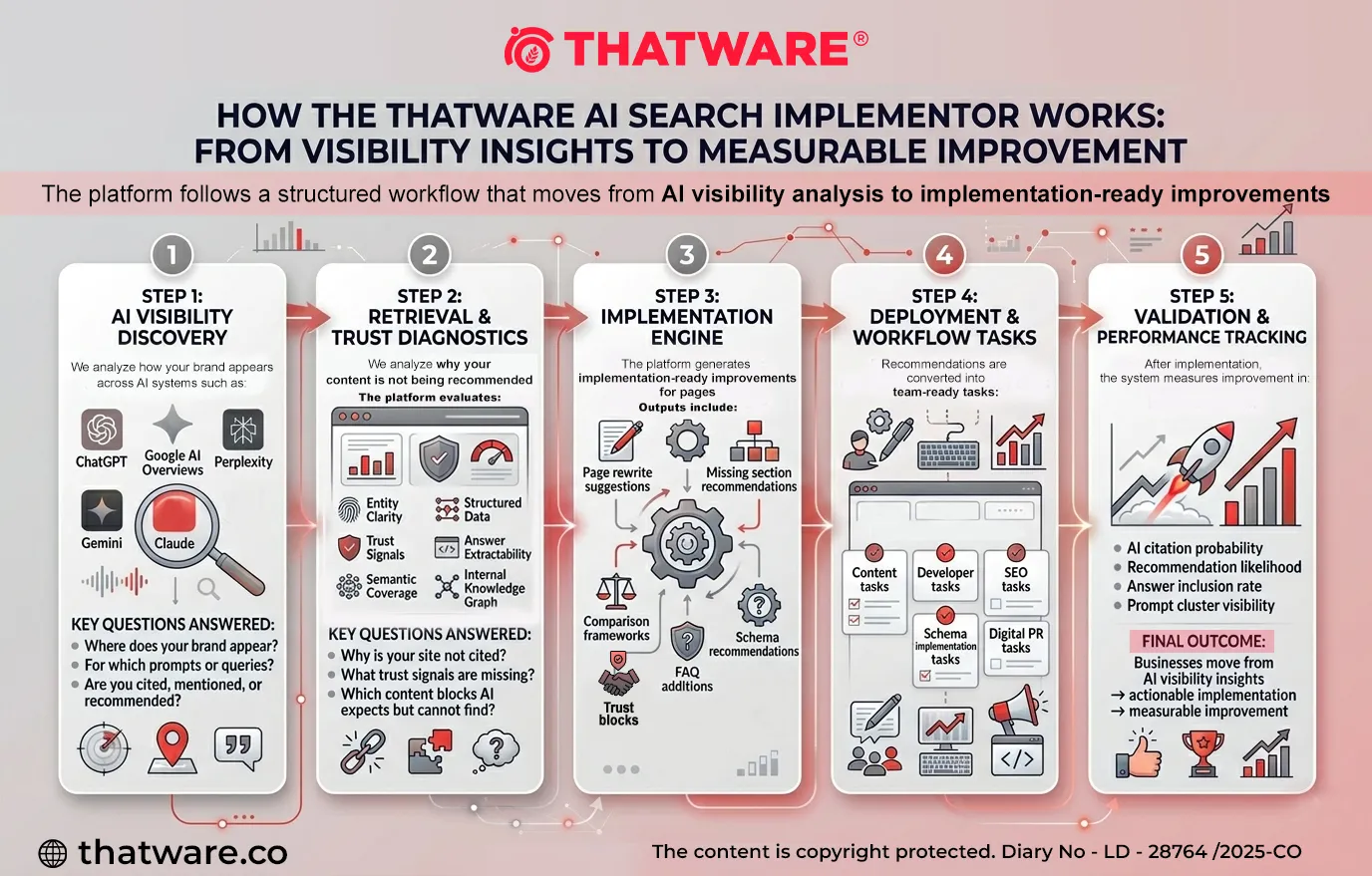

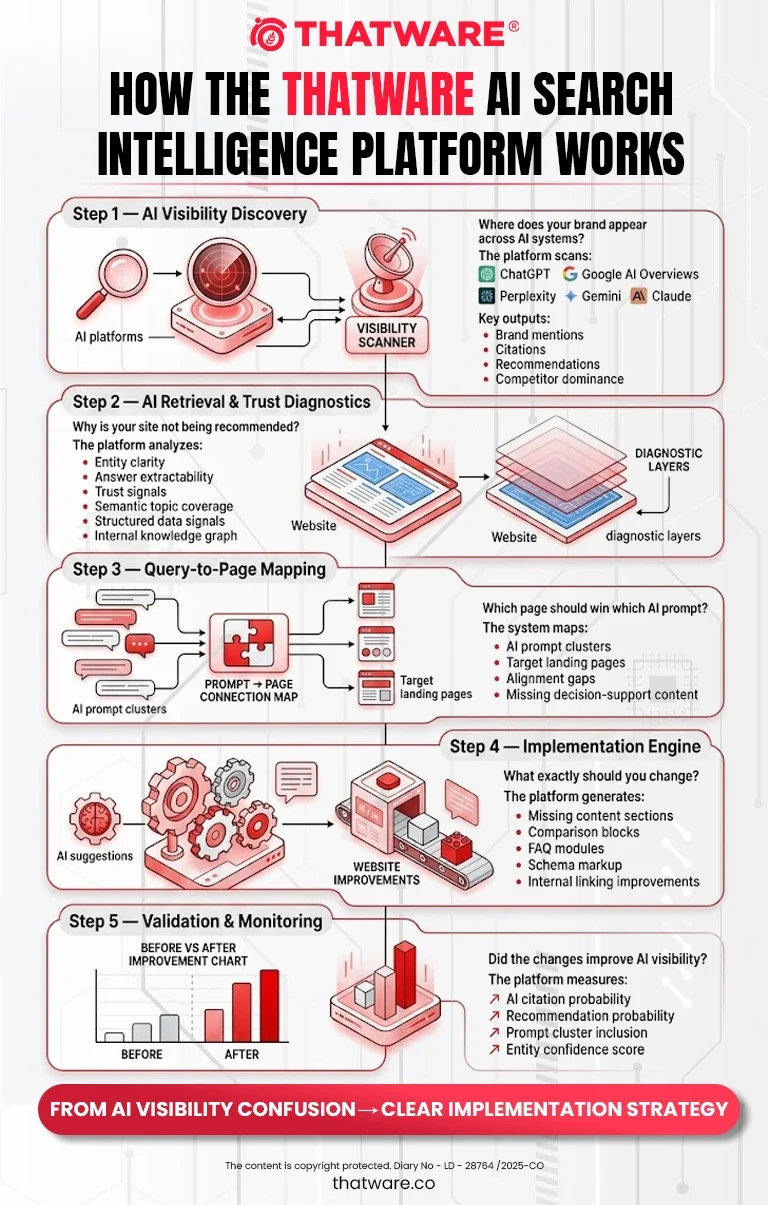

Search is rapidly shifting from traditional result pages to AI-generated answers and recommendations. Users now rely on systems like ChatGPT, Google AI Overviews, and other large language model (LLM) interfaces to discover products, services, and solutions. As a result, visibility is no longer determined only by search engine rankings. It increasingly depends on whether an AI system chooses to cite, recommend, or reference a brand when generating an answer.

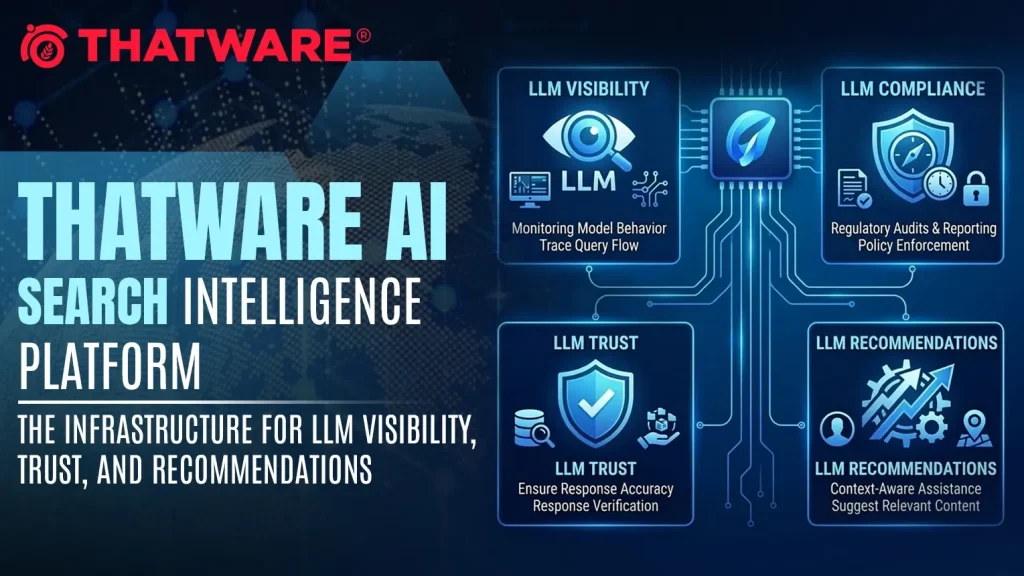

To address this shift, ThatWare has developed the LLM Tool – an AI Answer Visibility & Optimization Platform. The platform is designed to help brands understand how they appear within AI-generated responses and, more importantly, what changes are required to increase the likelihood of being cited, recommended, and trusted by AI systems.

The tool combines AI visibility analysis, retrieval diagnostics, and implementation-ready optimization insights to help organizations adapt their websites and digital assets for the emerging AI search ecosystem.

AI Surfaces Covered

Modern AI search is distributed across multiple platforms, each with its own data retrieval methods, content interpretation patterns, and recommendation behaviors. ThatWare’s LLM Tool evaluates brand visibility and recommendation patterns across the most influential AI-driven systems.

These include:

Google AI Overviews

Google’s AI-generated summaries increasingly shape user decision-making by synthesizing information from multiple sources directly in search results. The tool analyzes whether a brand’s content is being surfaced or referenced in these summaries and identifies opportunities to improve inclusion.

ChatGPT Discovery Behavior

ChatGPT is becoming a major discovery channel for services, tools, and educational content. The platform evaluates how frequently a brand is mentioned, cited, or recommended within ChatGPT-style responses and identifies the content structures that influence these outcomes.

Perplexity AI

Perplexity provides AI-generated answers with cited sources. The LLM Tool monitors whether a brand’s pages are being selected as reference sources and analyzes how competitor content is being prioritized in answers.

Google Gemini

Gemini integrates AI reasoning and knowledge retrieval with Google’s ecosystem. The tool analyzes how Gemini interprets brand content, identifies visibility gaps, and provides recommendations to improve content extractability and authority signals.

Claude

Claude emphasizes structured reasoning and contextual analysis. ThatWare’s tool evaluates content patterns that influence Claude’s response generation, including clarity, trust signals, and content structuring for AI comprehension.

By analyzing visibility across these AI surfaces, the platform provides a holistic understanding of how brands perform within the AI answer ecosystem.

Optimization Focus Areas

The ThatWare LLM Tool is not only designed to track AI visibility but also to optimize digital assets for AI answer generation and recommendation behavior. The platform focuses on several critical areas that determine whether a brand is selected or ignored by AI systems.

Answer Engine Optimization (AEO)

Answer Engine Optimization focuses on structuring content so that AI systems can easily extract and present it as part of generated responses. The tool evaluates whether website pages contain clear definitions, structured explanations, summaries, and decision-support content that AI systems can use when constructing answers.

It identifies missing answer blocks, poorly structured content, and sections that prevent AI extraction.

Generative Engine Optimization (GEO)

Generative Engine Optimization goes beyond traditional SEO by focusing on how AI systems interpret and synthesize information. The LLM Tool analyzes whether content supports AI reasoning through structured comparisons, contextual explanations, and supporting evidence that improves generative outputs.

This helps ensure that content is optimized not just for indexing but for AI reasoning and recommendation contexts.

LLM Citation Probability

One of the most important aspects of AI visibility is whether a page is selected as a source when an AI generates an answer. The platform evaluates citation likelihood by analyzing factors such as:

- factual clarity

- source credibility

- structured content blocks

- expert attribution

- data-backed statements

- references and proof signals

Based on this analysis, the tool identifies improvements that can increase the probability of a page being cited in AI-generated answers.
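To make the idea concrete, the factors above could be combined into a single score. The sketch below is a minimal illustration. It assumes each factor has already been rated on a 0.0 to 1.0 scale. The factor keys, the weights in `CITATION_WEIGHTS`, and the helper names are hypothetical and do not describe ThatWare's actual scoring model.

```python
# Hypothetical weights for the citation factors listed above (an assumption,
# not ThatWare's published model). The weights sum to 1.0.
CITATION_WEIGHTS = {
    "factual_clarity": 0.20,
    "source_credibility": 0.20,
    "structured_blocks": 0.15,
    "expert_attribution": 0.15,
    "data_backed_statements": 0.15,
    "proof_signals": 0.15,
}


def citation_score(ratings: dict[str, float]) -> float:
    """Combine per-factor ratings (each in [0, 1]) into a weighted score in [0, 1].

    Missing factors count as 0, so an absent signal lowers the score.
    """
    for name, value in ratings.items():
        if name not in CITATION_WEIGHTS:
            raise KeyError(f"unknown factor: {name}")
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    return sum(w * ratings.get(name, 0.0) for name, w in CITATION_WEIGHTS.items())


def weakest_factors(ratings: dict[str, float], n: int = 2) -> list[str]:
    """Return the n lowest-rated factors, which are the first candidates to improve."""
    return sorted(CITATION_WEIGHTS, key=lambda name: ratings.get(name, 0.0))[:n]
```

The score alone does not say what to fix. Listing the weakest factors turns the same data into a prioritized list of improvements.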

LLM Recommendation Probability

Beyond citations, AI systems increasingly recommend brands, tools, or providers when users ask questions such as:

- “What is the best X?”

- “Which company should I choose?”

- “What tools are recommended for this problem?”

The LLM Tool evaluates how well a website supports AI recommendation logic, including decision-support content, comparisons, proof elements, and buyer-fit explanations. It then generates actionable suggestions to improve a brand’s chances of being recommended.

AI Answer Visibility

Understanding whether a brand appears in AI-generated responses is essential for measuring digital presence in the new search landscape. The platform tracks visibility across various prompt categories and identifies patterns such as:

- when a brand is mentioned but not cited

- when competitors dominate recommendation queries

- when a brand appears in informational prompts but not commercial ones

These insights help businesses identify high-value visibility gaps in AI search behavior.
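The three patterns above can be detected from a log of captured answers. This minimal sketch assumes a hypothetical `AnswerRecord` shape in which each answer has already been labeled with its prompt category, whether the brand was mentioned or cited, and whether a competitor was recommended. The “more than half of answers” threshold for competitor dominance is an illustrative assumption.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class AnswerRecord:
    """One captured AI answer for one prompt (hypothetical record shape)."""
    prompt_category: str          # e.g. "informational" or "commercial"
    brand_mentioned: bool
    brand_cited: bool
    competitor_recommended: bool


def visibility_gaps(records: list[AnswerRecord]) -> dict[str, list[str]]:
    """Return the gap patterns found in each prompt category."""
    stats: dict[str, dict[str, int]] = defaultdict(
        lambda: {"answers": 0, "mentioned": 0, "cited": 0, "competitor": 0}
    )
    for r in records:
        s = stats[r.prompt_category]
        s["answers"] += 1
        s["mentioned"] += int(r.brand_mentioned)
        s["cited"] += int(r.brand_cited)
        s["competitor"] += int(r.competitor_recommended)

    gaps: dict[str, list[str]] = {}
    for category, s in stats.items():
        flags = []
        if s["mentioned"] and not s["cited"]:
            flags.append("mentioned_not_cited")   # visible but never used as a source
        if s["competitor"] > s["answers"] / 2:
            flags.append("competitor_dominates")  # assumed majority threshold
        if not s["mentioned"] and not s["cited"]:
            flags.append("absent")                # e.g. visible only in other categories
        gaps[category] = flags
    return gaps
```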

AI Retrieval Readiness

AI systems rely on retrieval mechanisms to gather information from the web before generating responses. If a website’s content is not structured properly, AI systems may struggle to extract useful information.

The LLM Tool evaluates a site’s AI retrieval readiness, including:

- content structure and extractability

- entity clarity

- schema and structured data implementation

- internal knowledge graph strength

- trust and authority signals

The platform then recommends improvements that make content easier for AI systems to retrieve, interpret, and include in generated answers.

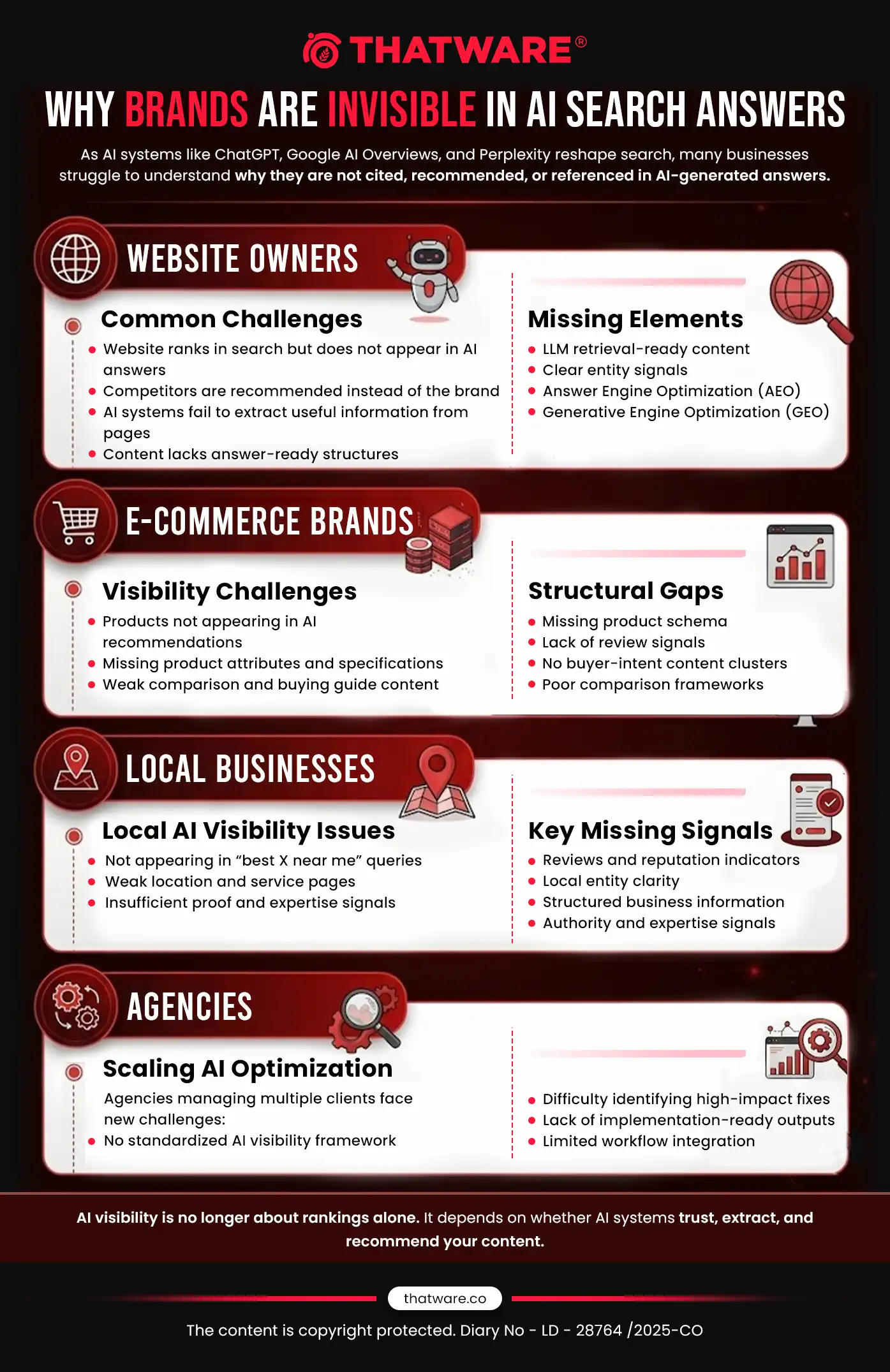

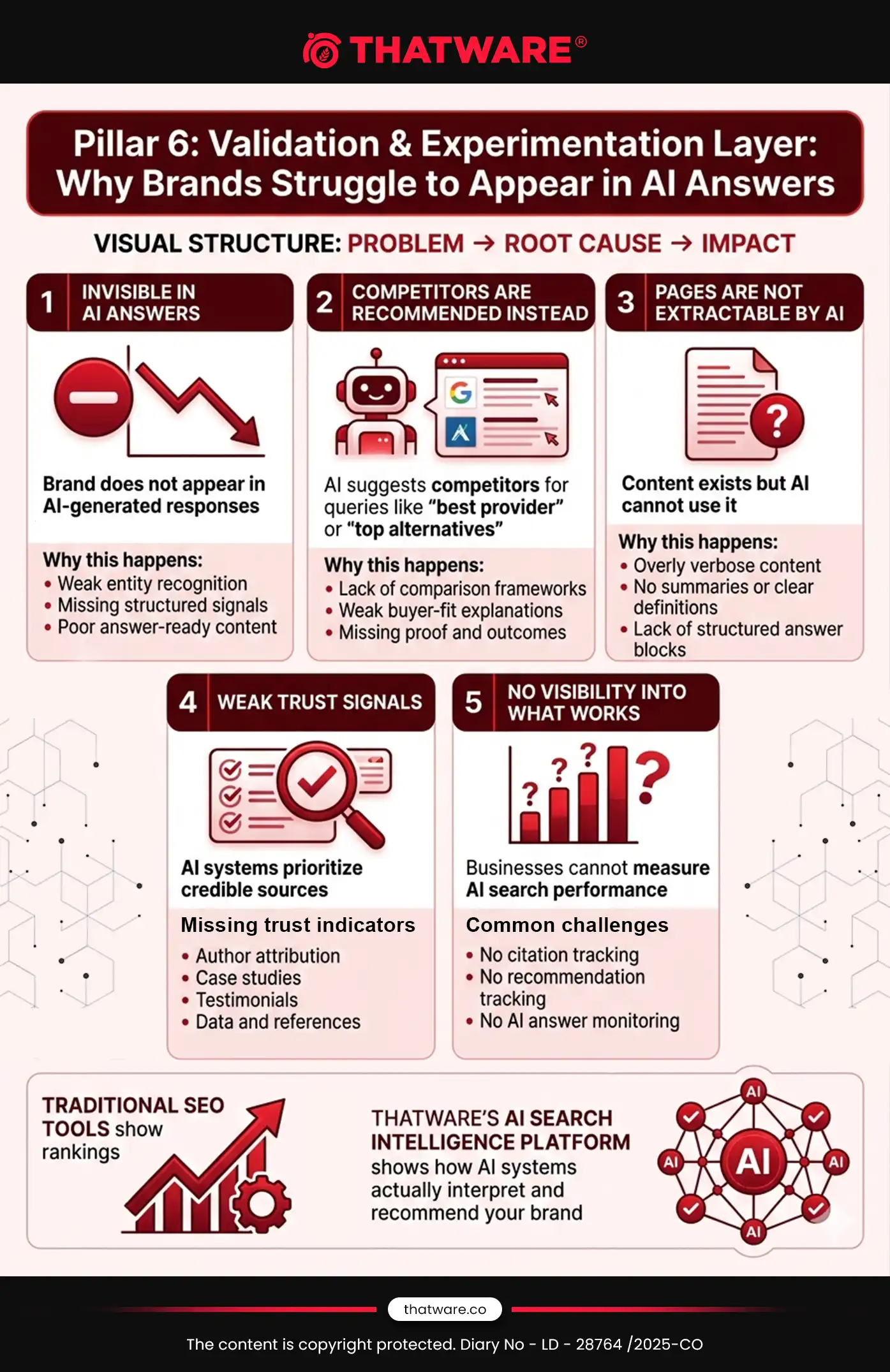

1. Core Problem Areas

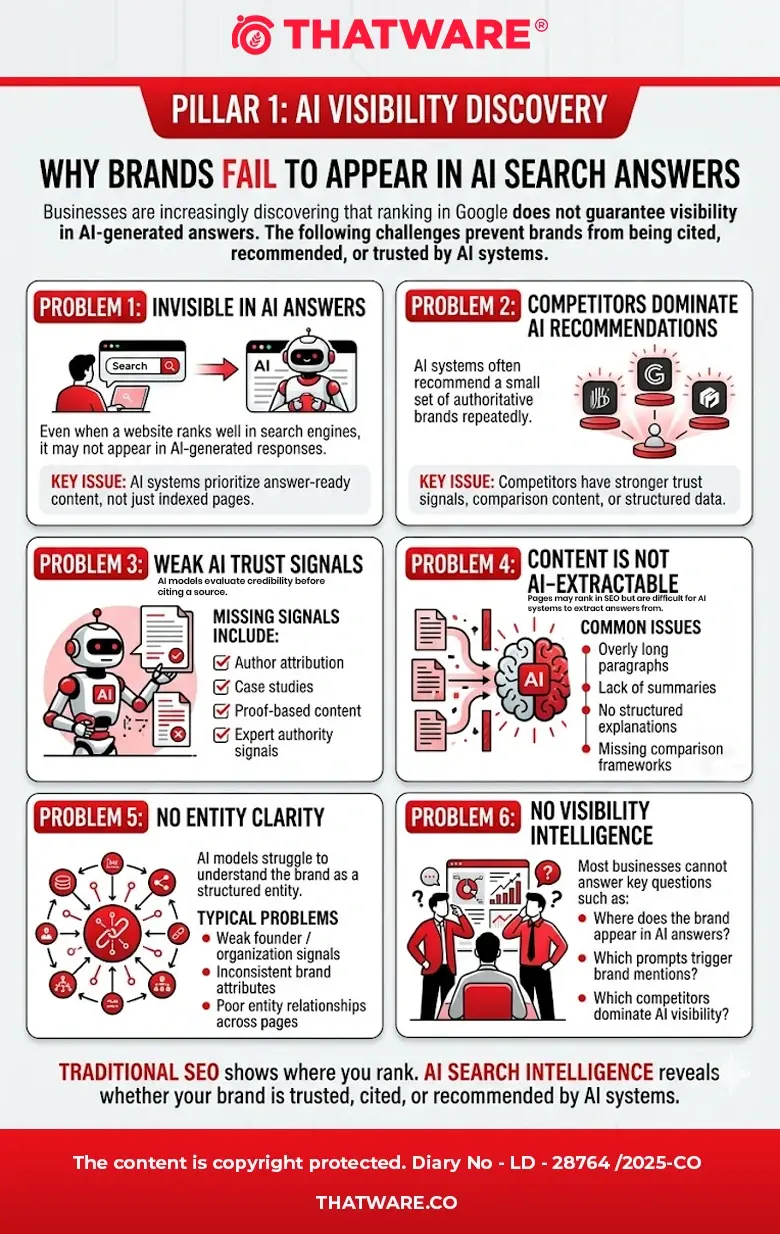

As AI-driven search systems such as Google AI Overviews, ChatGPT, Perplexity, Gemini, and Claude increasingly influence how users discover information, many businesses are experiencing a new visibility challenge. Traditional SEO metrics like rankings and impressions no longer fully explain why some brands are cited, recommended, or surfaced in AI-generated answers, while others are ignored.

Website owners, brands, and agencies now face a new layer of complexity: understanding how AI systems retrieve, evaluate, and recommend content. Most organizations lack the tools to diagnose these issues or implement improvements effectively.

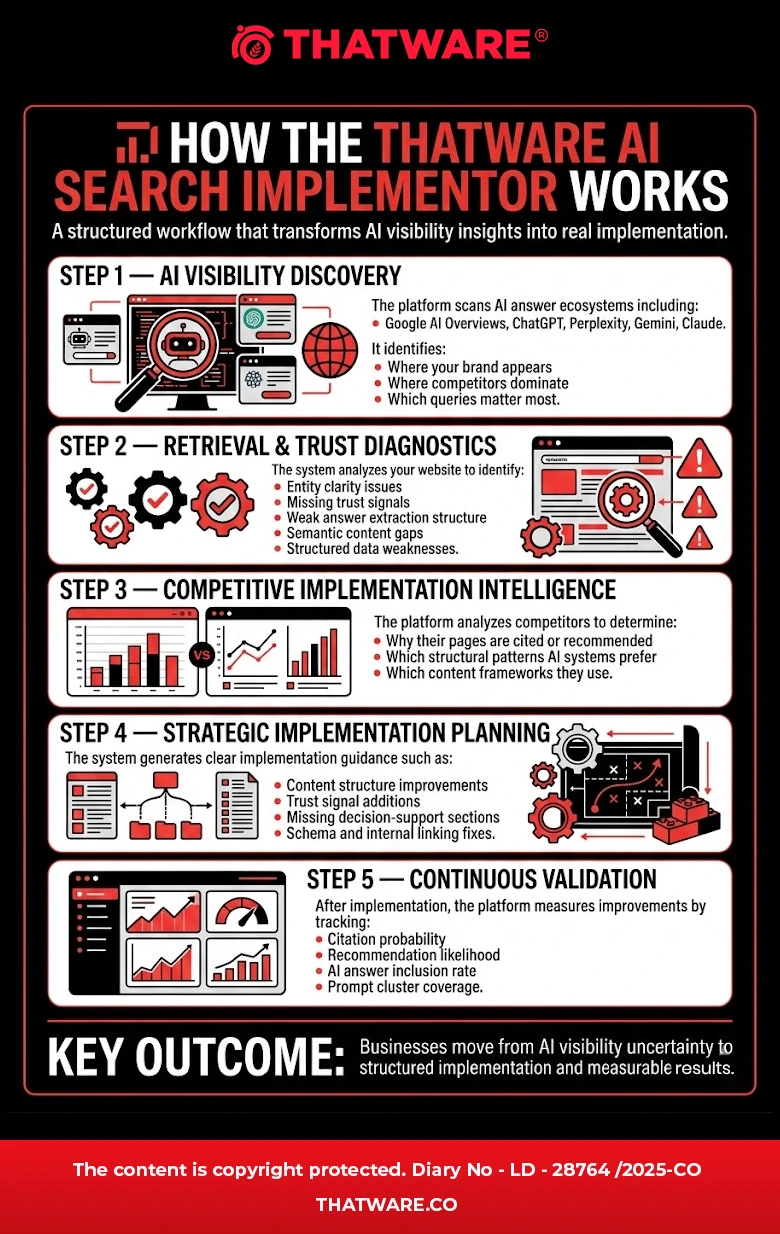

ThatWare’s AI Search Implementor is designed to solve this gap by identifying the root causes of weak AI visibility and generating clear, implementation-ready actions that help websites become more discoverable, trustworthy, and recommendable across AI answer ecosystems.

Below are the key problem areas faced by different stakeholders.

For Website Owners

Many website owners are discovering that their websites may rank in traditional search results yet remain absent from AI-generated answers. AI systems evaluate content differently from traditional search engines, prioritizing factors such as extractability, entity clarity, trust signals, and structured knowledge relationships.

As a result, business owners frequently ask questions such as:

- Why is my website not being cited or referenced in AI answers?

- Why are competitors being recommended by AI systems instead of my brand?

- Which pages on my site are reducing AI trust or retrieval confidence?

- What content elements are missing that prevent AI systems from understanding and using my information?

Commonly missing components include elements related to:

- LLM Retrieval – Content structure that allows large language models to extract clear answers.

- Answer Engine Optimization (AEO) – Formatting information for AI-generated answers.

- Generative Engine Optimization (GEO) – Ensuring content aligns with generative search behaviors.

- LLM Answer Generation Readiness – Structuring content to be easily summarized, cited, and recommended.

Another major challenge for website owners is implementation clarity. Even when issues are identified, it is often unclear:

- What specific improvements should be implemented?

- Which pages should be prioritized?

- What changes can produce measurable improvements within weeks rather than months?

ThatWare’s solution addresses this challenge by diagnosing AI visibility barriers and converting them into actionable improvements that can be implemented immediately.

For E-commerce Brands

E-commerce brands face an even more dynamic challenge. AI systems increasingly influence product discovery and purchasing decisions, recommending products, comparing alternatives, and summarizing buyer options.

However, many online stores find that their products rarely appear in these AI-led recommendation flows.

Common concerns include:

- Why are my products not appearing in AI-generated product recommendations?

- Which product attributes or specifications are missing that prevent AI systems from understanding my products?

- What structured data elements or schema implementations are absent?

- Which trust signals, such as reviews, ratings, or proof elements, are required for recommendation inclusion?

- Do my category or product pages need structural redesign to support AI extraction and comparison?

Another critical gap involves buyer-intent content clusters. AI systems often recommend products based on contextual queries such as:

- “Best laptop for designers”

- “Affordable CRM for small teams”

- “Top alternatives to X”

Many e-commerce websites fail to support these recommendation scenarios because they lack comparison frameworks, buying guides, and decision-support content.

ThatWare’s platform helps e-commerce brands identify these structural gaps and implement improvements that increase the likelihood of product inclusion in AI-driven recommendations and answer summaries.

For Local Businesses

Local businesses are heavily impacted by AI-generated answers because many local queries now surface AI summaries before traditional listings.

Queries such as:

- “Best dentist near me”

- “Top marketing agency in London”

- “Reliable plumber in my area”

are increasingly answered directly by AI systems.

However, many local businesses struggle to appear in these responses due to missing entity signals, proof layers, or location authority indicators.

Typical issues include:

- The business not appearing in “best X near me” AI queries

- Missing trust layers, such as expertise signals, case studies, or verified credentials

- Weakly structured location and service pages

- Insufficient reviews, citations, and entity relationships

AI systems often evaluate local businesses using a combination of signals such as:

- Local entity clarity

- Reputation and reviews

- Proof of expertise

- Structured business information

- Service relevance

ThatWare’s solution helps local businesses strengthen these signals and restructure their digital presence to improve AI visibility in local recommendation and advisory queries.

For Agencies

Digital agencies face a different type of challenge. They must manage AI search optimization across multiple clients, often across different industries and geographies.

For agencies, the key difficulty lies in scaling AI visibility improvements efficiently.

Common questions agencies ask include:

- What AI visibility improvements can be implemented across 50 or more client websites?

- Which recommendations can be integrated directly into team workflows and project management systems?

- Which optimizations deliver the highest ROI and fastest impact for client campaigns?

Agencies need tools that go beyond diagnostics. They require platforms that provide:

- Standardized implementation frameworks

- Scalable optimization workflows

- Prioritized recommendations based on impact

- Task-ready outputs for developers, SEO teams, and content teams

ThatWare’s AI Search Implementor is designed with this scalability in mind. It converts AI visibility insights into structured tasks, implementation frameworks, and prioritized actions, enabling agencies to deploy improvements across large client portfolios efficiently.

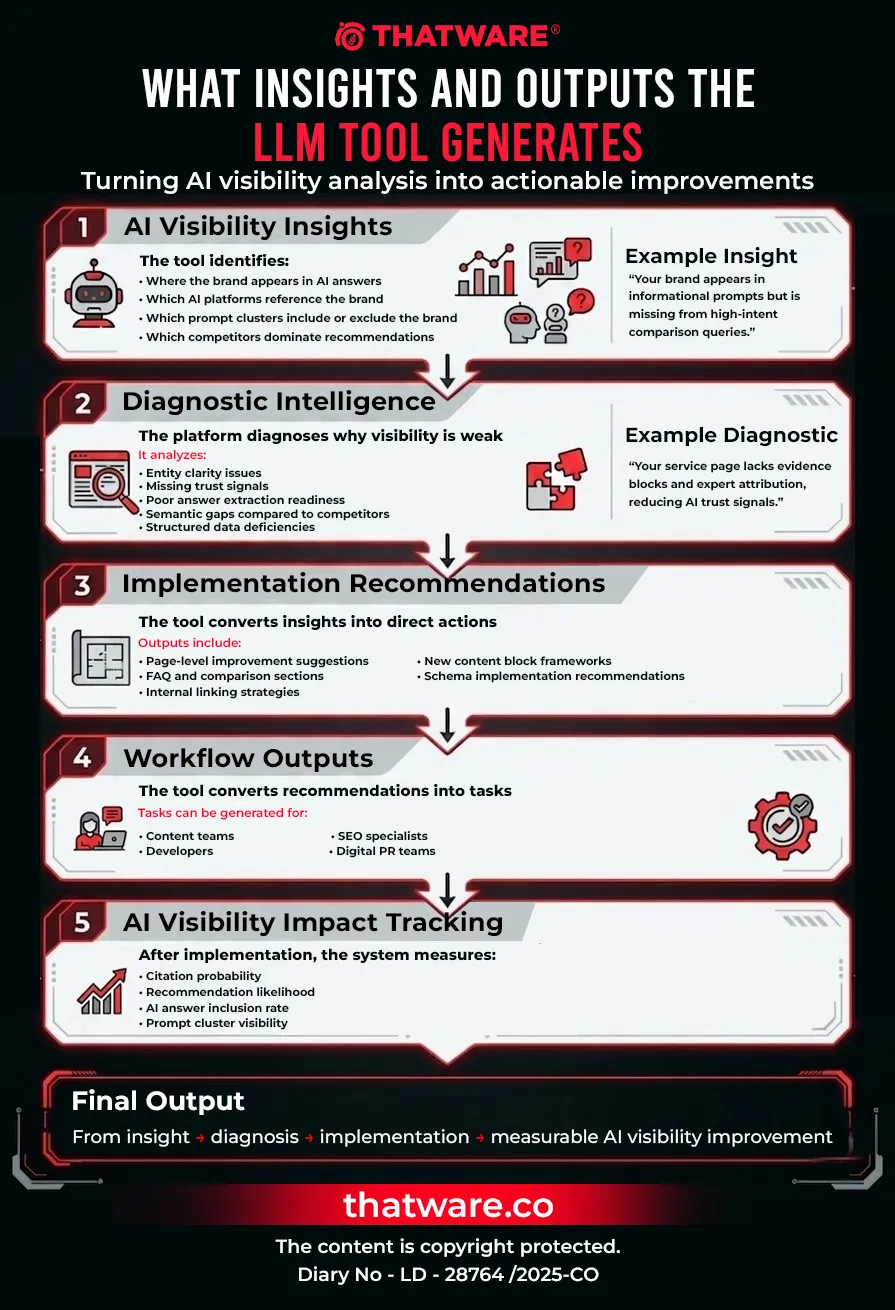

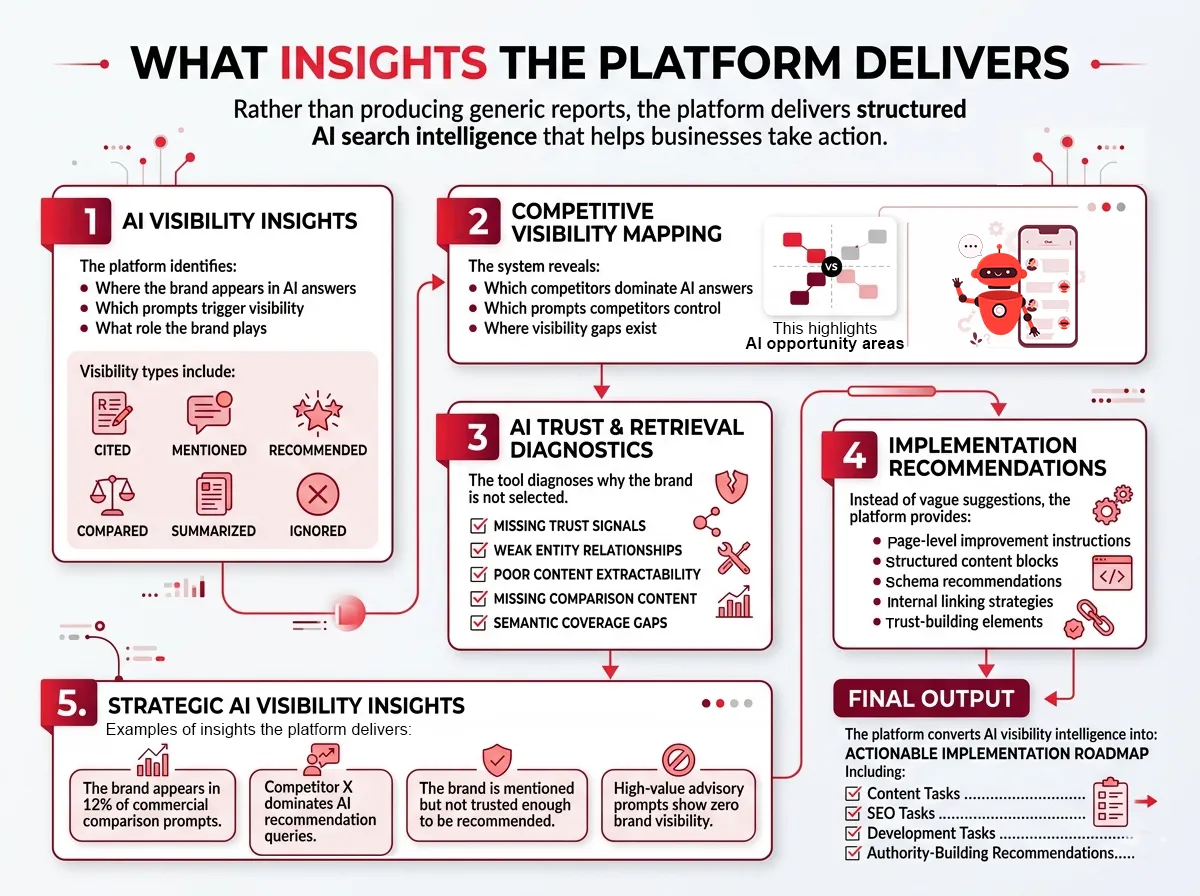

2. Product Vision

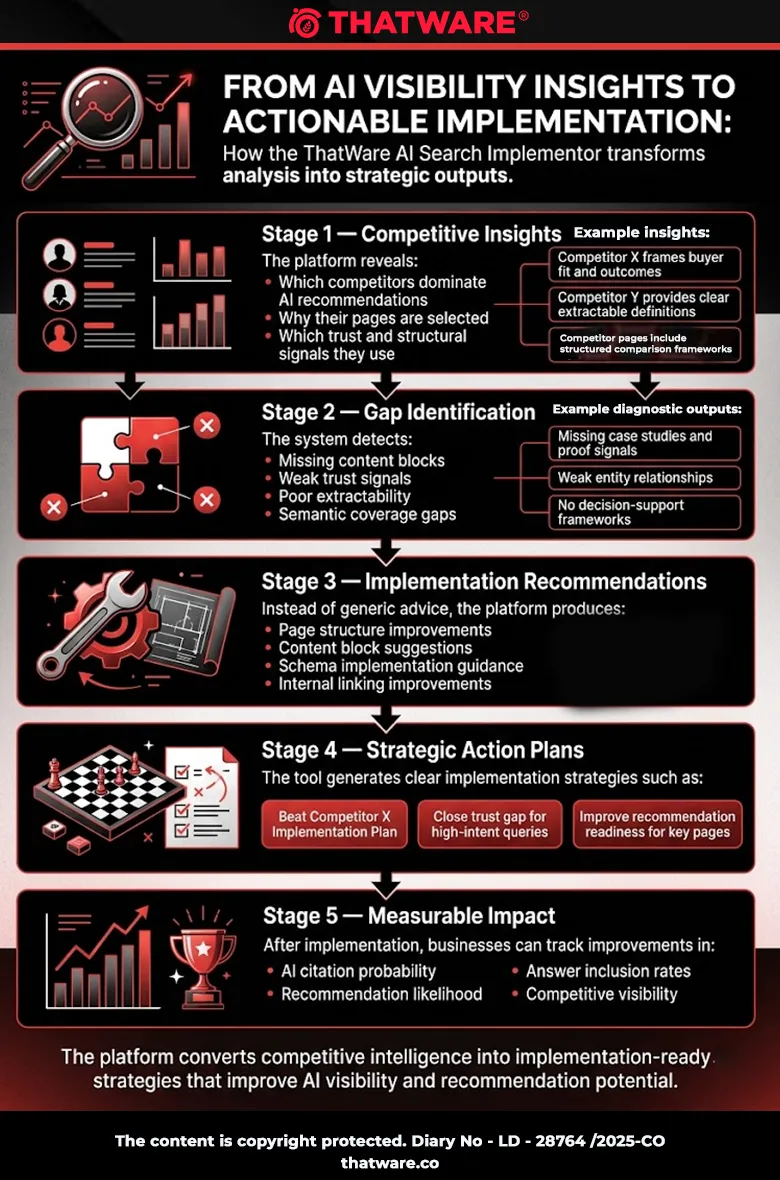

Most tools in the market stop at visibility tracking. They show whether a brand appeared in an AI response or not. While useful, this information alone does not help businesses improve their position in AI ecosystems.

ThatWare’s approach goes much further.

The platform is designed to move users from observation to action, providing clear insights into what needs to change on a website in order to improve its likelihood of being cited or recommended by AI systems.

This vision aligns with ThatWare’s long-standing focus on advanced search science, AI-driven optimization, and implementation-first strategies.

The Core Questions the Platform Must Answer

The product vision is centered around answering four fundamental questions that businesses now face in the AI search era.

1. Where does the brand appear in AI responses?

The tool analyzes how a brand appears across multiple AI-driven answer engines and conversational search platforms. It identifies:

- Which prompts trigger brand mentions

- Which AI systems surface the brand

- Whether the brand is cited, recommended, compared, summarized, or ignored

- Which competitors dominate specific prompt clusters

This visibility layer provides a clear picture of the brand’s AI search footprint.

2. Why does the brand appear — or fail to appear?

Understanding visibility alone is not enough. The platform diagnoses the underlying reasons behind AI inclusion or exclusion.

Through advanced diagnostics, the system evaluates factors such as:

- Entity clarity and brand authority signals

- Content extractability and answer-ready formatting

- Trust signals such as authorship, proof, and case studies

- Structured data and schema completeness

- Semantic coverage compared to competitors

- Internal knowledge graph strength

By identifying these structural and content-related issues, the platform reveals why AI systems trust some pages more than others.

3. What should be implemented to improve AI inclusion?

This is where the platform differentiates itself most strongly.

Rather than providing generic advice such as “improve content quality” or “increase authority,” the tool generates implementation-ready recommendations.

These include:

- Missing content sections and decision-support blocks

- Rewrite suggestions for key service or product pages

- FAQ and comparison frameworks

- Trust and proof elements such as case studies or expert signals

- Structured data recommendations

- Internal linking improvements

- Entity relationship enhancements

Each recommendation is designed to be directly actionable, allowing teams to implement improvements quickly.

4. How can improvements be validated?

Optimization for AI systems must be measurable.

The platform continuously evaluates how implemented changes affect a brand’s AI visibility and recommendation likelihood. It measures indicators such as:

- Citation probability

- Recommendation probability

- Prompt cluster inclusion rates

- Answer extraction readiness

- Entity confidence signals

This feedback loop ensures that users can clearly see whether the changes they implement are actually improving their AI search presence.
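The sketch below illustrates the validation step. It compares prompt cluster inclusion rates between a baseline run and a run captured after changes were deployed. It assumes each run is stored as a hypothetical mapping from cluster name to a list of booleans, with one entry per prompt recording whether the brand was included. The function names are illustrative.

```python
def inclusion_rate(included: list[bool]) -> float:
    """Share of prompts in a cluster where the brand was included (0.0 if empty)."""
    return sum(included) / len(included) if included else 0.0


def compare_runs(
    before: dict[str, list[bool]], after: dict[str, list[bool]]
) -> dict[str, float]:
    """Change in inclusion rate per prompt cluster, in percentage points."""
    clusters = sorted(set(before) | set(after))
    return {
        c: round(100 * (inclusion_rate(after.get(c, [])) - inclusion_rate(before.get(c, []))), 1)
        for c in clusters
    }
```

AI answers vary from one run to the next, so a single before/after difference on a small prompt set can be noise. Repeating each run several times and comparing the averages gives a more reliable signal.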

3. Product Pillars

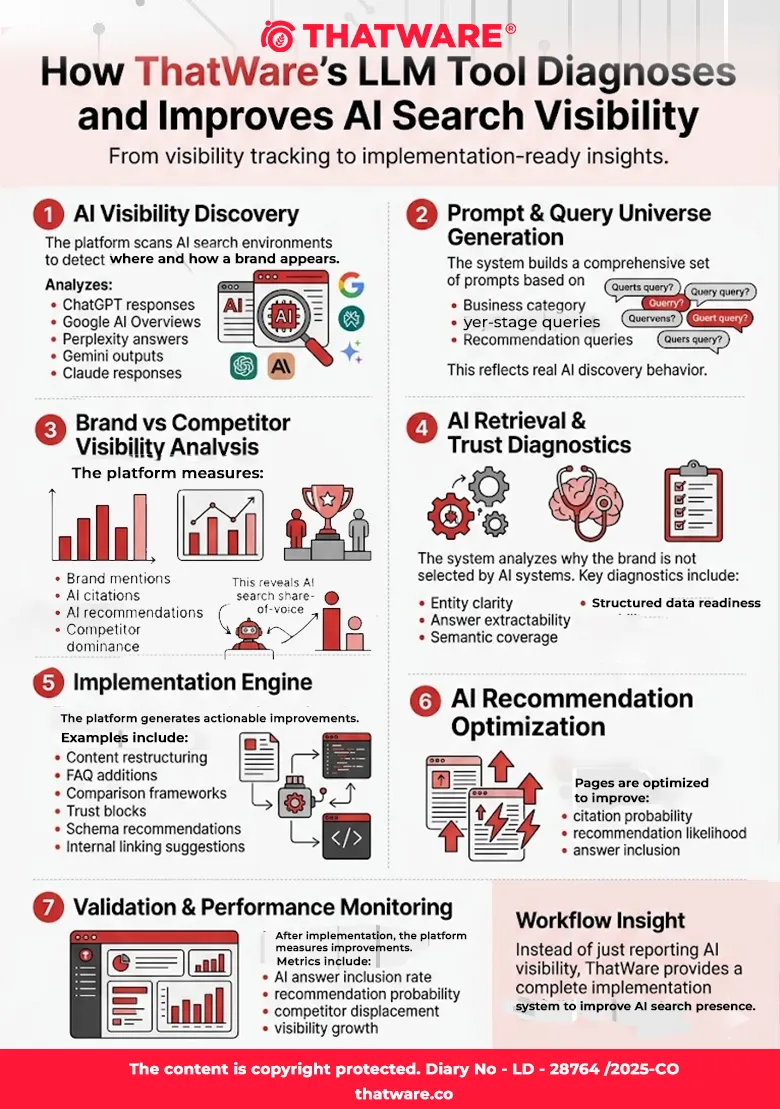

The ThatWare AI Search Implementor platform is designed around seven core product pillars. Each pillar focuses on a critical stage of AI search visibility — from discovery and diagnostics to implementation and validation.

These pillars ensure that the platform does not simply report AI visibility metrics but actively helps businesses understand, fix, and improve their presence across AI answer ecosystems.

The first pillar establishes the foundation of the entire platform: discovering where a brand appears in AI-generated answers and how it is positioned relative to competitors.

Pillar 1: AI Visibility Discovery

Purpose

The AI Visibility Discovery layer is responsible for detecting where and how a brand appears across AI-driven search and answer systems.

Modern search behavior is increasingly mediated by AI assistants such as Google AI Overviews, ChatGPT, Perplexity, Gemini, and Claude. These systems synthesize answers rather than simply displaying links, which means brands must now compete for inclusion inside AI-generated responses.

This pillar enables ThatWare’s platform to systematically analyze AI outputs and determine how frequently, where, and in what context a brand is surfaced.

Rather than relying on traditional ranking metrics, the system evaluates AI answer inclusion patterns, identifying opportunities where a brand is missing or underrepresented.

Key Questions This Pillar Answers

The AI Visibility Discovery system helps businesses understand:

- Where does the brand appear across AI answer ecosystems?

- For which prompts, queries, and user intents does the brand surface?

- What role does the brand play within AI-generated responses?

This layer gives organizations clarity on how AI systems currently perceive and represent their brand.

Visibility Roles

When a brand appears in an AI-generated answer, it may appear in different roles. ThatWare’s platform classifies brand appearances into structured visibility categories:

- Cited: The brand is referenced as a source or authority used by the AI system.

- Mentioned: The brand appears in the response but without strong endorsement.

- Recommended: The AI system actively suggests the brand as a preferred option.

- Compared: The brand appears as part of a comparison with competitors.

- Summarized: The AI system extracts information from the brand’s content and summarizes it in the response.

- Ignored: The brand is absent despite strong relevance to the query.

Understanding these roles helps organizations determine whether they are merely visible or genuinely trusted by AI systems.
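A simplified version of this classification can be sketched with keyword cues. The example below is a rough heuristic that rests on several assumptions. The cue phrases, the `Role` enum, and the `classify` signature are hypothetical. Citations are detected by matching the brand's own domain against the answer's listed sources. The Summarized role is left out because detecting it requires comparing the answer against the brand's page content.

```python
import re
from enum import Enum
from urllib.parse import urlparse


class Role(Enum):
    CITED = "cited"
    MENTIONED = "mentioned"
    RECOMMENDED = "recommended"
    COMPARED = "compared"
    IGNORED = "ignored"


# Hypothetical cue phrases; real answers need far richer signals than this.
RECOMMEND_CUES = ("we recommend", "best option", "top choice", "consider using")
COMPARE_CUES = (" vs ", " versus ", "compared to", "alternative to")


def _same_site(url: str, domain: str) -> bool:
    """True if the URL's host is the domain or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return host == domain or host.endswith("." + domain)


def classify(answer: str, sources: list[str], brand: str, domain: str) -> set[Role]:
    """Assign visibility roles to one AI answer for one brand."""
    roles: set[Role] = set()
    if any(_same_site(url, domain) for url in sources):
        roles.add(Role.CITED)

    # Only sentences that name the brand can recommend or compare it.
    sentences = [
        s for s in re.split(r"(?<=[.!?])\s+", answer.lower()) if brand.lower() in s
    ]
    if any(cue in s for s in sentences for cue in RECOMMEND_CUES):
        roles.add(Role.RECOMMENDED)
    if any(cue in s for s in sentences for cue in COMPARE_CUES):
        roles.add(Role.COMPARED)
    # Named without endorsement or comparison: a plain mention.
    if sentences and not (roles & {Role.RECOMMENDED, Role.COMPARED}):
        roles.add(Role.MENTIONED)
    return roles or {Role.IGNORED}
```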

Competitive Context

AI search is highly competitive, and many answers are dominated by a small set of authoritative brands.

ThatWare’s AI Visibility Discovery layer evaluates competitive dynamics by answering:

- Which competitors dominate AI-generated answers?

- Which prompt clusters are controlled by competitors?

- Which brands are consistently recommended by AI systems?

By mapping competitor visibility patterns, businesses can identify where competitors are winning and where opportunities exist to capture AI attention.

Core Features

To power the AI Visibility Discovery layer, the platform includes several specialized capabilities.

Prompt / Query Universe Generator

The platform automatically generates a comprehensive universe of relevant prompts based on:

- Business category

- Products or services

- Buyer-stage queries

- Recommendation queries

- Comparison queries

- Industry-specific language

This ensures the analysis reflects real AI discovery behavior, not just traditional keyword lists.
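A minimal sketch of such a generator expands query templates across the business's offerings, audiences, and competitors. The templates, the query-type keys, and the `prompt_universe` function are hypothetical. A real prompt universe would also draw on industry language and observed user queries.

```python
from itertools import product

# Hypothetical templates grouped by query type; placeholders are filled below.
TEMPLATES = {
    "buyer_stage": ["how much does {offering} cost", "how to choose {offering}"],
    "recommendation": ["best {offering} for {audience}"],
    "comparison": ["alternatives to {competitor}", "{competitor} vs other {offering}"],
}


def prompt_universe(
    offerings: list[str], audiences: list[str], competitors: list[str]
) -> list[tuple[str, str]]:
    """Expand every template over all combinations; return sorted (query_type, prompt) pairs."""
    prompts: set[tuple[str, str]] = set()
    for query_type, templates in TEMPLATES.items():
        for template, offering, audience, competitor in product(
            templates, offerings, audiences, competitors
        ):
            # str.format ignores unused keywords; the set drops the resulting duplicates.
            prompts.add(
                (query_type, template.format(offering=offering, audience=audience, competitor=competitor))
            )
    return sorted(prompts)
```

Python's `str.format` ignores keyword arguments that a template does not use. A template without an `{audience}` placeholder therefore yields the same prompt for every audience, and collecting prompts in a set removes those duplicates.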

Brand vs Competitor Visibility Scanner

The scanner evaluates how often a brand appears compared to competitors across AI responses.

It identifies:

- Relative visibility share

- Competitor dominance in specific prompt clusters

- Brand inclusion gaps in high-value queries

This creates a clear picture of the brand’s share of voice in AI search.
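Share of voice can be computed from the brands detected in each captured answer. This sketch assumes brand detection has already happened upstream. It defines share of voice as each brand's fraction of all tracked-brand appearances and counts a brand at most once per answer. Both the definition and the `share_of_voice` name are illustrative choices.

```python
from collections import Counter


def share_of_voice(answers: list[list[str]], brands: list[str]) -> dict[str, float]:
    """Each tracked brand's share of all tracked-brand appearances.

    `answers` holds the brands detected in each AI answer; a brand counts at
    most once per answer, and untracked names are ignored.
    """
    tracked = set(brands)
    counts: Counter[str] = Counter()
    for detected in answers:
        counts.update(set(detected) & tracked)
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}
```

Running the same function on each prompt cluster separately shows where a competitor dominates.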

AI Answer Snapshot Archive

The platform captures and stores snapshots of AI-generated answers across different models and prompt scenarios.

This allows users to:

- Review historical AI responses

- Track visibility changes over time

- Analyze how AI models represent their brand

It also helps teams understand how their content is interpreted and summarized by AI systems.

Mention, Citation, and Recommendation Classification

Every detected brand appearance is automatically classified based on its role in the answer.

This classification allows the platform to differentiate between:

- passive mentions

- authoritative citations

- direct recommendations

By separating these roles, ThatWare’s system provides deeper insight into AI trust signals.

Query Intent Clustering

Prompts are grouped into intent-based clusters so organizations can understand visibility patterns across different user journeys.

Instead of analyzing thousands of isolated prompts, the system identifies patterns within structured query groups.

Surface Segmentation

AI queries are segmented by user intent, allowing businesses to understand where they are visible across different stages of the decision journey.

Prompts are categorized into the following segments:

- Informational: Users looking for explanations, definitions, or general knowledge.

- Commercial: Users researching services, providers, or solutions.

- Comparative: Queries comparing multiple options or providers.

- Local: Location-based queries such as “best agency near me.”

- Product Discovery: Queries focused on discovering products or tools.

- Support / Advisory: Users seeking guidance or expert advice.

- Transaction Preparation: Users preparing to purchase or select a provider.

This segmentation helps businesses identify which types of AI queries they currently dominate and which ones represent missed opportunities.
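Assigning prompts to these segments can be approximated with ordered keyword rules. The sketch below is a deliberately simple stand-in, not the platform's method. The patterns in `SEGMENT_RULES` are hypothetical, and the first matching rule wins. A production system would more likely use embedding-based clustering or a trained intent classifier.

```python
import re

# Hypothetical keyword rules, checked in order; the first match wins.
SEGMENT_RULES = [
    ("local", r"\bnear me\b|\bin my area\b|\bnearby\b"),
    ("comparative", r"\bvs\b|\bversus\b|\balternatives? to\b|\bcompar(?:e|ing|ison)\b"),
    ("transaction_preparation", r"\bprice\b|\bpricing\b|\bcost\b|\bquote\b|\bhire\b"),
    ("product_discovery", r"\btools?\b|\bsoftware\b|\bapps?\b|\bproducts?\b"),
    ("commercial", r"\bbest\b|\btop\b|\bproviders?\b|\bagency\b|\bcompany\b|\bservices?\b"),
    ("support_advisory", r"\bshould i\b|\bhow do i\b|\bhelp\b|\badvice\b"),
    ("informational", r"\bwhat is\b|\bwhat are\b|\bwhy\b|\bhow does\b"),
]
_COMPILED = [(name, re.compile(pattern)) for name, pattern in SEGMENT_RULES]


def segment(prompt: str) -> str:
    """Return the first matching segment for a prompt, or 'unclassified'."""
    text = prompt.lower()
    for name, pattern in _COMPILED:
        if pattern.search(text):
            return name
    return "unclassified"
```

Rule order encodes priority. A query such as “best dentist near me” is treated as local even though it also contains the commercial cue “best”.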

Output Example

Instead of providing simple counts of brand mentions, the ThatWare platform delivers actionable insights.

Examples include:

- The brand is visible in 12% of commercial comparison prompts.

- The brand is absent in high-conversion advisory prompts, representing a missed opportunity.

- Competitor X dominates “best provider” AI recommendation queries.

- The brand is mentioned in several answers but not trusted enough to be recommended.

These insights provide the strategic foundation for the platform’s next layers — diagnostics and implementation — which guide businesses on how to improve their AI visibility and recommendation potential.

Pillar 2: AI Retrieval & Trust Diagnostics

One of the most critical challenges in AI search visibility is not simply ranking in traditional search engines—it is being trusted, extracted, and cited by AI systems.

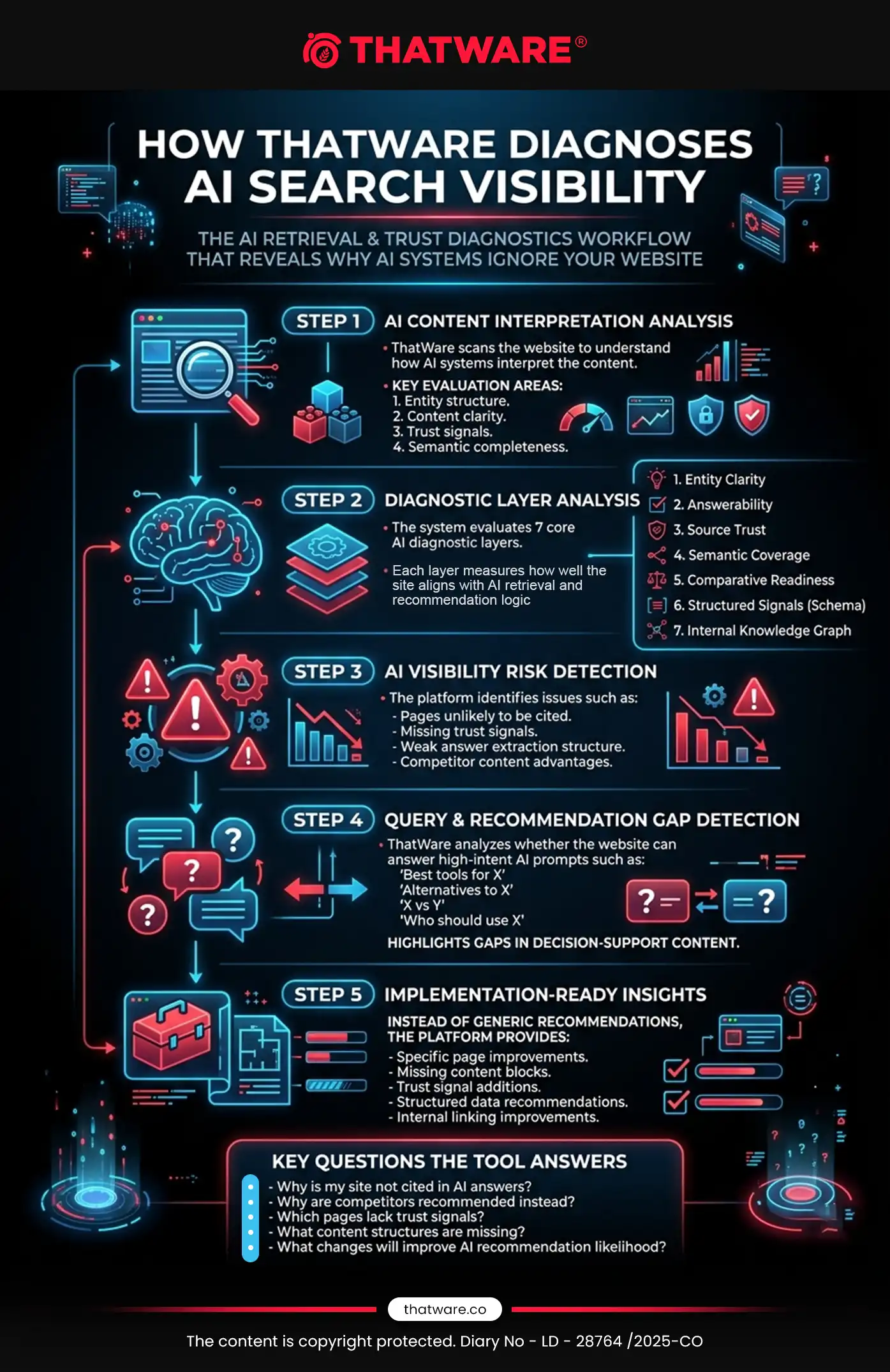

Modern AI systems such as ChatGPT, Google AI Overviews, Perplexity, Gemini, and Claude rely on sophisticated retrieval and reasoning mechanisms. These systems do not simply index pages; they evaluate entity clarity, factual trust, semantic completeness, and answer extractability before referencing or recommending a source.

The AI Retrieval & Trust Diagnostics pillar within the ThatWare platform is designed to uncover why a website fails to appear in AI-generated answers, recommendations, and citations. Instead of delivering generic SEO recommendations, the system analyzes a website through multiple AI-specific diagnostic layers to identify structural, semantic, and trust-related weaknesses that reduce the likelihood of AI inclusion.

This diagnostic framework transforms traditional website analysis into AI-native retrieval intelligence, enabling brands to understand how their content is interpreted by large language models and answer engines.

Diagnostic Layers

1. Entity Clarity

AI systems heavily rely on entity understanding to determine authority and contextual relevance. If a brand’s identity, relationships, and attributes are unclear, AI models struggle to associate the website with relevant queries.

ThatWare’s entity clarity diagnostics analyze whether the website clearly defines the brand as a structured entity within its domain.

The system evaluates:

- Whether the brand identity is clearly defined across pages

- Whether relationships between founder, company, services, and products are logically structured

- Whether entity attributes remain consistent throughout the website

- Whether the website communicates a clear entity hierarchy that AI systems can interpret

Weak entity clarity often leads to situations where a brand is mentioned but not recommended, or indexed but not trusted as a source.

By identifying inconsistencies and missing relationships, the platform helps websites build a stronger entity footprint, making them more recognizable to AI systems.
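One concrete form of this check compares the entity attributes stated on different pages. The sketch assumes that attributes such as company name and founder have already been extracted per URL, for example from footers or existing markup. The normalization rule and the `inconsistent_attributes` function are hypothetical.

```python
from collections import defaultdict


def _normalize(value: str) -> str:
    """Compare values case-insensitively and ignore extra whitespace."""
    return " ".join(value.lower().split())


def inconsistent_attributes(
    pages: dict[str, dict[str, str]]
) -> dict[str, dict[str, list[str]]]:
    """Map attribute -> {normalized value -> URLs} for attributes stated more than one way."""
    seen: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))
    for url, attributes in pages.items():
        for name, value in attributes.items():
            seen[name][_normalize(value)].append(url)
    return {name: dict(values) for name, values in seen.items() if len(values) > 1}
```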

2. Answerability

AI answer engines prioritize content that can be easily extracted into answer-ready units. Pages that are overly verbose, poorly structured, or lacking clear informational segments are less likely to be selected by AI systems.

ThatWare evaluates how easily a page can be transformed into AI answers.

The system analyzes whether pages contain:

- Clear definitions

- Step-by-step explanations

- Structured comparisons

- Practical use cases

- Concise summaries

It also detects pages that are too narrative-heavy or padded with filler, which can reduce their usefulness for answer extraction.

Through answerability diagnostics, the platform identifies content that may rank in search engines but still fails to appear in AI responses due to poor extraction readiness.
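Some answerability signals can be approximated with simple text heuristics. This sketch is crude by design. The regular expressions, the `LONG_PARAGRAPH_WORDS` threshold, and the report keys are hypothetical, and paragraphs are assumed to be separated by blank lines.

```python
import re

LONG_PARAGRAPH_WORDS = 120  # hypothetical threshold for "narrative-heavy"


def answerability_report(text: str) -> dict[str, object]:
    """Crude signals for whether a page contains answer-ready units."""
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    word_counts = [len(p.split()) for p in paragraphs]
    avg_words = sum(word_counts) / len(word_counts) if word_counts else 0.0
    return {
        # "X is a ..." or "X refers to ..." style definitional sentences
        "has_definition": bool(
            re.search(r"\b(?:is|are)\s+(?:a|an|the)\b|\brefers to\b", text, re.I)
        ),
        # numbered lines or "Step N" lines
        "has_steps": bool(re.search(r"^\s*(?:\d+[.)]|step\s+\d+)", text, re.I | re.M)),
        "has_summary": bool(re.search(r"\b(?:in summary|key takeaways?|tl;dr)\b", text, re.I)),
        "avg_paragraph_words": avg_words,
        "narrative_heavy": avg_words > LONG_PARAGRAPH_WORDS,
    }
```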

3. Source Trust

Trust signals play a critical role in determining whether an AI system cites a source. AI models increasingly favor content that demonstrates verifiable authority, credibility, and expertise.

ThatWare evaluates the presence and strength of trust signals across a website.

These signals include:

- Clear author attribution

- External citations and references

- Testimonials and user feedback

- Case studies and proof of outcomes

- Professional credentials and expertise indicators

If a website lacks these credibility signals, AI systems may avoid citing the content even if the information is relevant.

The platform highlights missing proof layers and helps websites strengthen the evidence-based credibility that AI systems prefer.

4. Semantic Coverage

AI systems assess whether a page covers a topic comprehensively. If important subtopics are missing, the system may consider competitor pages more complete and therefore more suitable for answering queries.

ThatWare performs deep semantic analysis to detect coverage gaps.

This includes identifying:

- Missing subtopics within a content cluster

- Areas where competitors provide deeper explanations

- Conceptual gaps that weaken topical authority

By highlighting these gaps, the tool helps businesses expand their content strategically so that their pages better match the full informational scope expected by AI systems.
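At its simplest, coverage gap detection is a set comparison between the subtopics a page covers and those its competitors cover. The sketch assumes subtopics have already been extracted and normalized, for example from headings. The `min_competitors` threshold filters out topics that only one competitor happens to cover and is an illustrative assumption.

```python
from collections import Counter


def coverage_gaps(
    own_topics: set[str], competitor_topics: dict[str, set[str]], min_competitors: int = 2
) -> list[str]:
    """Subtopics covered by at least `min_competitors` competitors but missing from our page."""
    counts = Counter(topic for topics in competitor_topics.values() for topic in topics)
    return sorted(t for t, n in counts.items() if n >= min_competitors and t not in own_topics)
```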

5. Comparative Readiness

Many high-intent queries involve comparisons or decision-making prompts such as:

- “Best tools for X”

- “Alternatives to X”

- “X vs Y”

AI systems frequently use comparison-oriented content to generate recommendations.

ThatWare evaluates whether a website includes decision-support content such as:

- “X vs Y” comparison pages

- “Best X” lists

- “Alternatives to X” resources

- “Why choose X” explanations

- “Who should use X” guidance

If a website lacks these structures, it may struggle to appear in commercial or recommendation-based AI queries.

This diagnostic layer ensures that websites include the decision frameworks AI systems rely on when generating recommendations.
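A first-pass check for these structures can scan a site's page titles for the corresponding patterns. The pattern names and regular expressions below are hypothetical. A title match is only a proxy, because the page still has to contain genuine decision-support content.

```python
import re

# Hypothetical title patterns for each decision-support content type.
DECISION_PATTERNS = {
    "x_vs_y": r"\bvs\b|\bversus\b",
    "best_x": r"\bbest\b",
    "alternatives": r"\balternatives?\b",
    "why_choose": r"\bwhy choose\b",
    "who_should_use": r"\bwho should use\b|\bwho is it for\b",
}


def missing_decision_content(titles: list[str]) -> list[str]:
    """Return the decision-support content types that no page title appears to cover."""
    found = {
        name
        for name, pattern in DECISION_PATTERNS.items()
        if any(re.search(pattern, title, re.I) for title in titles)
    }
    return [name for name in DECISION_PATTERNS if name not in found]
```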

6. Structured Signal Readiness

Structured data helps AI systems interpret page meaning and entity relationships more reliably.

ThatWare scans websites to detect missing or weak structured signals, including:

- Schema markup

- FAQ structured data

- Product or service entity markup

- Review schema

- Organization and person schema

These structured elements act as machine-readable signals that help AI systems understand the credibility and purpose of a page.

When these signals are missing, AI systems may fail to interpret the content correctly, reducing its likelihood of being used in answers.
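A concrete starting point for this scan is detecting which schema types a page already declares. The sketch below uses Python's standard `html.parser` module to pull JSON-LD blocks out of a page and collect every `@type`, including nested ones. It assumes the markup is delivered as JSON-LD; Microdata and RDFa are not handled. The function names are hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect parsed JSON-LD blocks from <script type="application/ld+json"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self._inside = False
        self._buffer: list[str] = []
        self.blocks: list[object] = []
        self.invalid_blocks = 0  # malformed JSON-LD is itself worth reporting

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._inside = True
            self._buffer = []

    def handle_data(self, data):
        if self._inside:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._inside:
            self._inside = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                self.invalid_blocks += 1


def schema_types(html: str) -> set[str]:
    """Every @type declared in the page's JSON-LD, including nested and @graph nodes."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    types: set[str] = set()
    stack = list(parser.blocks)
    while stack:  # walk lists and dicts depth-first
        node = stack.pop()
        if isinstance(node, list):
            stack.extend(node)
        elif isinstance(node, dict):
            declared = node.get("@type")
            if isinstance(declared, str):
                types.add(declared)
            elif isinstance(declared, list):
                types.update(t for t in declared if isinstance(t, str))
            stack.extend(node.values())
    return types


def missing_schema_types(html: str, expected: list[str]) -> list[str]:
    """Expected types that the page does not declare anywhere."""
    return sorted(set(expected) - schema_types(html))
```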

7. Internal Knowledge Graph

AI systems also evaluate how information is connected within a website. Strong internal linking structures reinforce topic relationships and improve AI understanding.

ThatWare analyzes the internal knowledge graph of a website to identify structural weaknesses.

The system detects issues such as:

- Weak internal linking between related pages

- Orphan commercial pages that receive little contextual support

- Poor topical reinforcement within content clusters

- Lack of hub-and-spoke architecture

Without a strong internal structure, even high-quality content can appear isolated and less authoritative in AI retrieval systems.
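Orphan detection reduces to counting inbound internal links. The sketch assumes a crawl has already produced a mapping from each URL to the internal URLs it links to. Self-links are ignored and repeated links from the same page count once. The `min_inbound` threshold and the function names are illustrative.

```python
from collections import Counter


def inbound_counts(links: dict[str, list[str]]) -> Counter:
    """Number of distinct other pages linking to each URL."""
    counts: Counter = Counter()
    for source, targets in links.items():
        for target in set(targets):   # repeated links from one page count once
            if target != source:      # self-links add no contextual support
                counts[target] += 1
    return counts


def orphan_pages(
    links: dict[str, list[str]], pages_of_interest: list[str], min_inbound: int = 1
) -> list[str]:
    """Pages of interest (e.g. commercial pages) with fewer than `min_inbound` inbound links."""
    inbound = inbound_counts(links)
    return sorted(p for p in pages_of_interest if inbound[p] < min_inbound)
```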

Example Diagnostic Output

A major limitation of many SEO and AI visibility tools is that they produce generic recommendations that are difficult to act upon.

Instead of vague advice like:

“Improve content depth.”

ThatWare’s AI Retrieval & Trust Diagnostics provide specific, actionable insights.

For example:

- “Your service page defines the offering but lacks trust-bearing evidence blocks.”

- “Competitor pages include price framing, use-case fit, and proof signals that your page currently lacks.”

- “Founder and company entity signals are disconnected, weakening brand authority signals.”

- “Your content is optimized for traditional SEO indexing but not structured for AI answer extraction.”

By delivering clear explanations and implementation-ready insights, this diagnostic layer enables businesses to understand exactly what prevents their pages from being cited or recommended by AI systems.

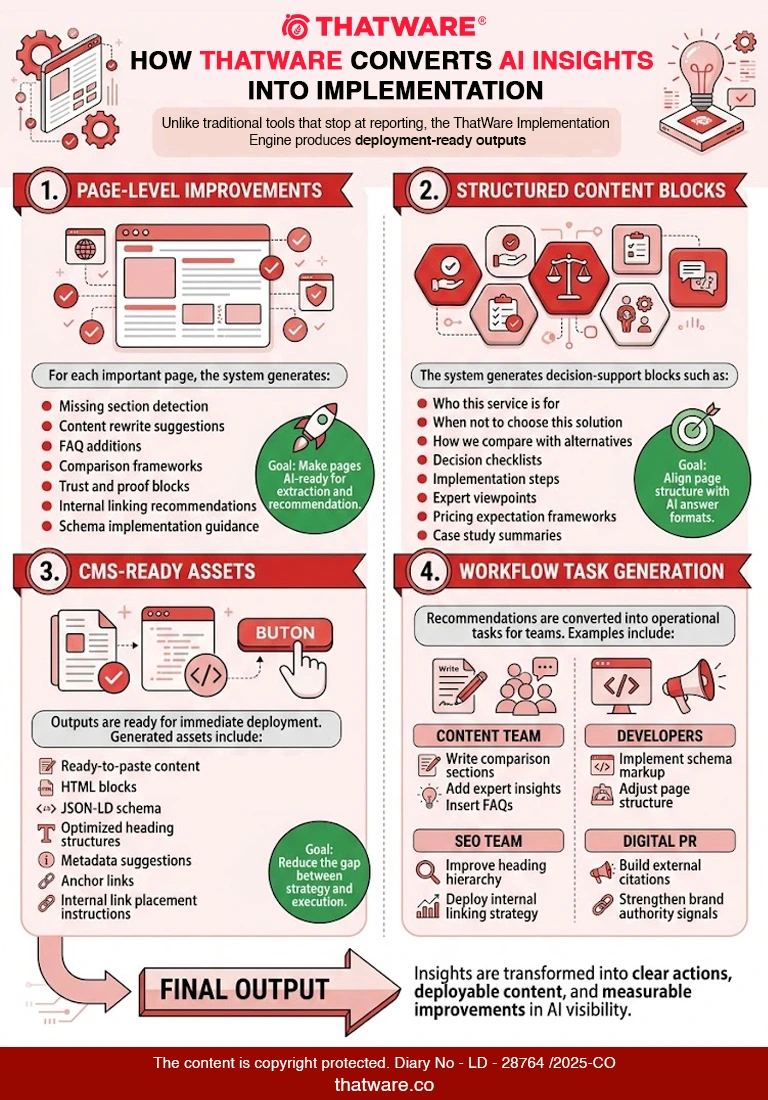

Pillar 3: Implementation Engine

The Implementation Engine is the core differentiator of the ThatWare AI Search Implementor platform. While most tools stop at reporting insights or highlighting visibility gaps, ThatWare focuses on what businesses actually need—clear, implementation-ready actions that directly improve AI visibility, citation probability, and recommendation likelihood.

AI-driven search systems such as ChatGPT, Google AI Overviews, Perplexity, Gemini, and Claude prioritize content that is structured for answer extraction, supported by strong trust signals, and aligned with user intent. Many websites fail to appear in AI answers not because they lack authority, but because their content is not packaged in a way that AI systems can easily retrieve, evaluate, and recommend.

The Implementation Engine bridges this gap by transforming diagnostics into actionable improvements at the page, block, and workflow level. Instead of telling users to “improve content quality” or “increase authority,” the system provides precise instructions, ready-to-use content structures, and deployment-ready assets.

This ensures that website owners, SEO teams, and agencies can move from analysis to implementation quickly, improving their chances of being cited and recommended by AI systems.

Page-Level Implementation

At the page level, the Implementation Engine evaluates every important URL and generates specific improvements required to make the page AI-ready.

For each page, the platform identifies structural, informational, and trust-related gaps that prevent the page from being used in AI-generated answers. It then generates clear implementation guidance tailored to that page’s purpose, intent, and competitive landscape.

Key page-level recommendations include:

Missing Sections Detection

The tool identifies essential sections that are absent but commonly present in pages that appear in AI answers. These may include comparison frameworks, decision-support sections, expert insights, or proof elements.

Rewrite Suggestions

ThatWare’s system generates optimized rewrite recommendations that make content more extractable, factual, and AI-friendly, improving the likelihood of citation or recommendation.

FAQ Additions

The engine generates high-intent FAQ sections based on prompt clusters and real user queries, improving the page’s ability to address conversational AI queries.

Comparison Blocks

Pages are often passed over in AI responses because they lack structured comparisons. The engine suggests “X vs Y,” “Best alternatives,” and “When to choose X” sections that help AI systems present balanced answers.

Trust Blocks

AI systems prioritize content supported by evidence and authority signals. The engine recommends adding trust elements such as testimonials, proof points, statistics, case studies, and expert statements.

Statistics and Evidence Blocks

Data-driven insights increase citation probability. The engine identifies where statistics or research-backed statements should be added to strengthen factual credibility.

Author and Expert Proof Sections

Pages that demonstrate real expertise and identifiable authorship are more likely to be cited. The engine suggests author bios, credentials, and expert commentary sections.

Internal Link Suggestions

Internal linking strengthens topic authority and helps AI systems understand content relationships. The tool suggests contextual internal links between relevant pages.
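A minimal sketch of how contextual link candidates could be surfaced, assuming topical relatedness is approximated by vocabulary overlap (Jaccard similarity) between page texts. The function names and the 0.2 threshold are hypothetical; a production system would more likely compare embeddings or shared entities.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word tokens, ignoring words of two characters or fewer."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if len(w) > 2}


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of vocabulary two pages have in common (0.0 to 1.0)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def suggest_internal_links(pages: dict[str, str], threshold: float = 0.2) -> list[tuple[str, str, float]]:
    """Return (source_url, target_url, score) pairs whose overlap meets the threshold (hypothetical cutoff)."""
    toks = {url: tokens(body) for url, body in pages.items()}
    suggestions = []
    for src in pages:
        for dst in pages:
            if src == dst:
                continue
            score = jaccard(toks[src], toks[dst])
            if score >= threshold:
                suggestions.append((src, dst, round(score, 2)))
    # Strongest topical matches first
    return sorted(suggestions, key=lambda s: s[2], reverse=True)
```

Pages that share no vocabulary with any other page (a careers page, for example) produce no suggestions, which keeps the proposed links contextual.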

Schema Recommendations

The engine detects missing structured data and recommends appropriate schema types such as:

- FAQ schema

- Organization schema

- Product schema

- Review schema

- Article schema

- Service schema

Well-formed structured data helps AI systems interpret the page’s context and the entities it describes.
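One way a missing-schema check could work, sketched with Python's standard library: extract every `application/ld+json` script on a page, collect its `@type` values, and compare them with the types recommended for that page. The mapping of the list above to schema.org type names (for example, FAQ schema to `FAQPage`) and the helper names are assumptions for illustration.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        # Script contents may arrive in several chunks, so append rather than assign
        if self._in_jsonld:
            self.blocks[-1] += data


def schema_types(html: str) -> set[str]:
    """Return every @type declared in the page's JSON-LD, including @graph entries."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    found: set[str] = set()
    for block in parser.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # malformed markup; a real audit would report it separately
        if isinstance(data, list):
            items = data
        elif isinstance(data, dict):
            items = data.get("@graph", [data])
        else:
            continue
        for item in items:
            if not isinstance(item, dict):
                continue
            declared = item.get("@type")
            if isinstance(declared, list):
                found.update(declared)
            elif declared:
                found.add(declared)
    return found


def missing_schema(html: str, recommended: set[str]) -> set[str]:
    """Recommended schema.org types that the page does not declare."""
    return set(recommended) - schema_types(html)
```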

Block-Level Implementation

Beyond page-level improvements, the Implementation Engine generates structured content blocks that help pages better support decision-making and AI answer extraction.

These blocks are designed to mirror the types of content AI systems frequently extract and summarize.

Examples include:

“Who This Service Is For”

Defines the ideal audience and use cases for a product or service. This helps AI systems understand the target audience and recommendation fit.

“When Not to Choose This Solution”

This section increases trust and credibility by acknowledging scenarios where the solution may not be ideal.

“How We Compare with Alternatives”

Comparison frameworks help AI systems generate balanced recommendations.

Decision Checklists

These sections guide users through evaluation criteria and provide structured decision-support content that AI models often surface.

Implementation Steps

Step-by-step guides are highly extractable and frequently used in AI responses.

Expert Viewpoints

Statements from specialists or industry experts reinforce authority and improve trust signals.

Pricing Expectation Frameworks

These sections provide users with realistic expectations about cost, helping AI systems answer pricing-related queries.

Case Study Snippets

Short proof-driven summaries of real results strengthen credibility and support recommendation logic.

By adding these blocks, pages become more aligned with the information structures that AI systems prioritize when generating answers.

CMS-Ready Implementation

One of the most powerful aspects of ThatWare’s Implementation Engine is that it does not stop at recommendations. It produces deployment-ready assets that teams can immediately implement within their CMS.

This dramatically reduces friction between analysis and execution.

Generated outputs include:

Ready-to-Paste Copy

Fully written sections optimized for AI answer extraction and user readability.

HTML Content Blocks

Structured HTML modules that can be inserted directly into CMS editors.

JSON-LD Schema Markup

The system generates structured data in JSON-LD format, ready for implementation without additional engineering effort.
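A hypothetical sketch of the kind of JSON-LD output described here, using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper names are illustrative and do not represent ThatWare's actual generator.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(obj: dict) -> str:
    """Wrap JSON-LD in the <script> element a CMS editor can paste into the page."""
    # Escape "</" so answer text cannot prematurely close the script element
    body = json.dumps(obj, indent=2, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


snippet = as_script_tag(faq_jsonld([
    ("Who is this service for?", "Enterprise brands with large, multi-region sites."),
]))
print(snippet)
```

Generating the markup from the same question and answer pairs that appear on the page keeps the visible FAQ and the structured data in sync.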

Metadata Suggestions

Recommendations for title tags, meta descriptions, and structured headings aligned with AI query intent.

Anchor Links

Anchor structures that improve content navigation and allow AI systems to reference specific sections.
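As an illustration, anchor IDs are commonly derived from heading text as URL-safe slugs. The sketch below assumes ASCII-only slugs and de-duplicates repeated headings with numeric suffixes; the function names are hypothetical.

```python
import re
import unicodedata


def slugify(heading: str) -> str:
    """Turn a heading into a fragment id such as 'who-this-service-is-for'."""
    # Drop accents and non-ASCII punctuation (e.g. curly quotes)
    text = unicodedata.normalize("NFKD", heading).encode("ascii", "ignore").decode()
    text = re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")
    return text or "section"


def add_anchor_ids(headings: list[str]) -> list[str]:
    """Assign a unique id to every heading, suffixing collisions with -2, -3, ..."""
    used: set[str] = set()
    ids = []
    for heading in headings:
        base = slugify(heading)
        candidate, n = base, 2
        while candidate in used:
            candidate = f"{base}-{n}"
            n += 1
        used.add(candidate)
        ids.append(candidate)
    return ids
```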

Heading Structure Optimization

Suggested H1, H2, and H3 hierarchy to improve clarity, extraction readiness, and topical organization.
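A rough sketch of a heading-hierarchy check, assuming the page HTML is available as a string. It flags a missing or repeated H1 and any jump of more than one level (for example, H2 straight to H4). The regular expression is a simplification; a real crawler would use a proper HTML parser.

```python
import re


def heading_issues(html: str) -> list[str]:
    """Flag common hierarchy problems: missing or multiple H1s and skipped levels."""
    # Opening tags only; "</h2" and "<header" do not match this pattern
    levels = [int(n) for n in re.findall(r"<h([1-6])\b", html, flags=re.IGNORECASE)]
    issues = []
    h1_count = levels.count(1)
    if h1_count == 0:
        issues.append("no H1")
    elif h1_count > 1:
        issues.append(f"{h1_count} H1 elements")
    # Compare each heading with the one before it in document order
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            issues.append(f"skipped level: H{prev} -> H{cur}")
    return issues
```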

Internal Link Placement Instructions

Detailed instructions on where to place links between pages to strengthen the site’s internal knowledge graph.

By delivering CMS-ready implementation components, ThatWare ensures that recommendations can move from strategy to deployment within minutes rather than weeks.

Workflow Implementation

The final step of the Implementation Engine is turning recommendations into clear operational tasks that teams can execute.

Many SEO and AI visibility tools fail because they generate insights without translating them into actionable workflows. ThatWare solves this by converting every recommendation into task-level outputs aligned with real team roles.

Examples include:

Content Team Tasks

- Create new sections or blocks

- Rewrite existing content for answer extraction

- Add comparison frameworks

- Insert FAQs and expert insights

Developer Tasks

- Implement schema markup

- Adjust page structure

- Add technical enhancements for structured content

SEO Team Tasks

- Optimize heading hierarchy

- Implement internal linking recommendations

- Improve topical coverage and entity alignment

Schema Engineering Tasks

- Deploy structured data models

- Validate schema implementation

- Align entity relationships across pages

Outreach and Digital PR Tasks

- Strengthen trust signals through citations

- Build external validation and authority signals

- Acquire references and mentions that improve credibility
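The role-based routing described above could be sketched as a simple lookup from recommendation type to owning team. The recommendation kinds, the routing table, and the fallback to the SEO team are all hypothetical choices for illustration.

```python
from collections import defaultdict

# Hypothetical routing table from recommendation type to owning team
ROLE_FOR = {
    "new_section": "Content",
    "rewrite": "Content",
    "faq": "Content",
    "page_structure": "Developer",
    "internal_links": "SEO",
    "heading_hierarchy": "SEO",
    "schema": "Schema Engineering",
    "external_mentions": "Outreach and Digital PR",
}


def tasks_by_role(recommendations: list[tuple[str, str, str]]) -> dict[str, list[str]]:
    """Group (kind, page_url, description) recommendations into per-team task lists."""
    board: dict[str, list[str]] = defaultdict(list)
    for kind, url, description in recommendations:
        role = ROLE_FOR.get(kind, "SEO")  # unknown kinds fall back to the SEO team
        board[role].append(f"{url}: {description}")
    return dict(board)
```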

Pillar 4: Query-to-Page Mapping Engine

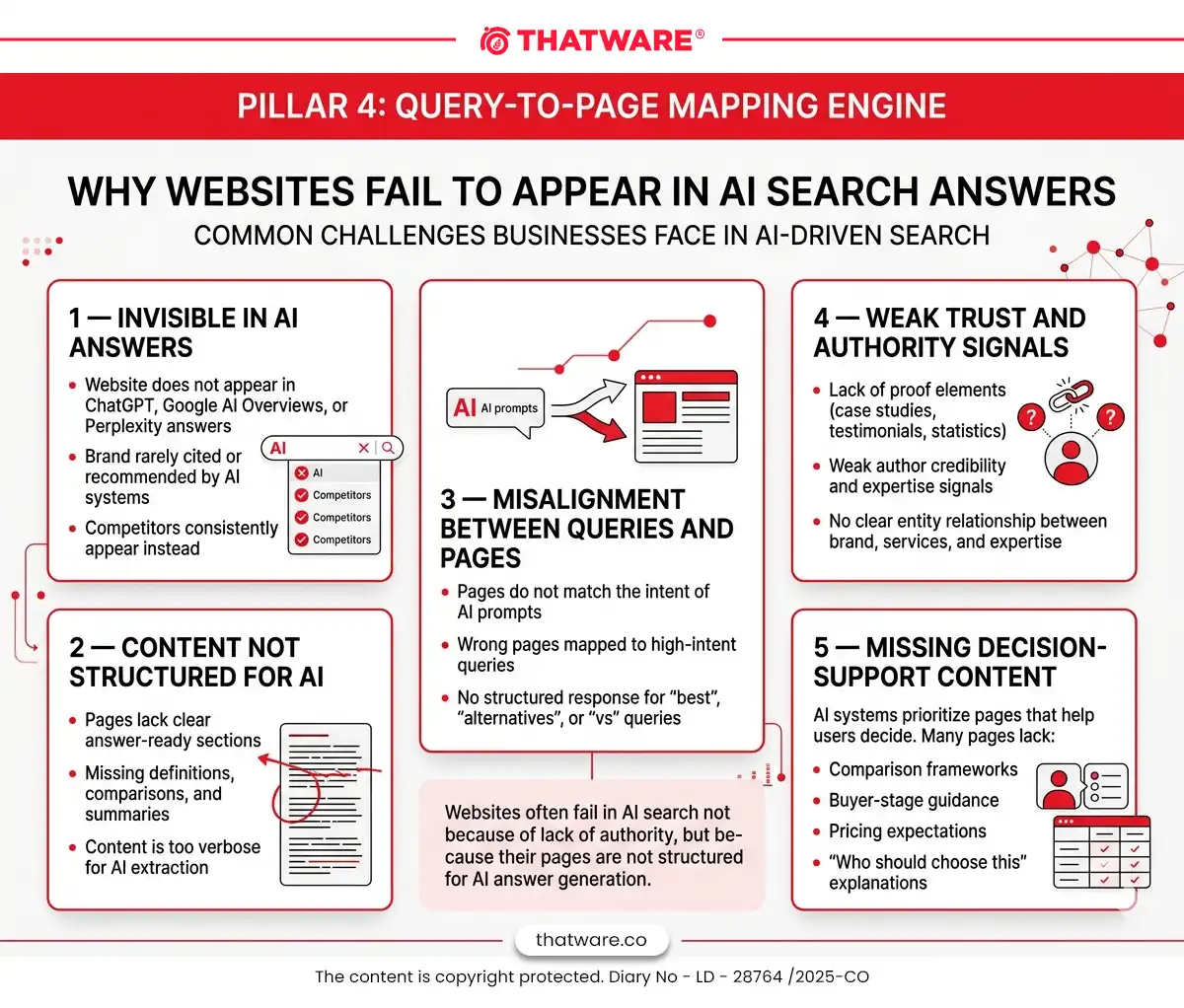

One of the most powerful and differentiated components of the ThatWare AI Search Implementor is the Query-to-Page Mapping Engine. While most tools only track prompts or measure visibility, this engine directly connects AI search behavior to the exact pages that should win those answers.

In AI-driven discovery systems like Google AI Overviews, ChatGPT, Perplexity, Gemini, and Claude, answers tend to draw on pages that best match the intent, structure, authority signals, and decision-support content a query requires. Many websites fail to appear, however, not because they lack authority but because their pages are misaligned with the intent and structure AI systems look for.

The Query-to-Page Mapping Engine solves this gap by identifying which prompts matter, determining which page should serve those prompts, diagnosing misalignment, and generating implementation-ready improvements.

Purpose

The engine connects AI prompt behavior to site architecture and content readiness, enabling businesses to optimize pages specifically for AI answer inclusion and recommendation.

It systematically maps:

- Prompt clusters — groups of AI queries representing real discovery behavior.

- Target pages — the pages on a site that should logically satisfy those prompts.

- Alignment problems — structural, semantic, or trust-related issues preventing the page from being selected.

- Required improvements — specific implementation steps needed to improve AI citation or recommendation likelihood.

This approach transforms AI optimization from guesswork into a clear, implementable strategy tied directly to revenue pages.

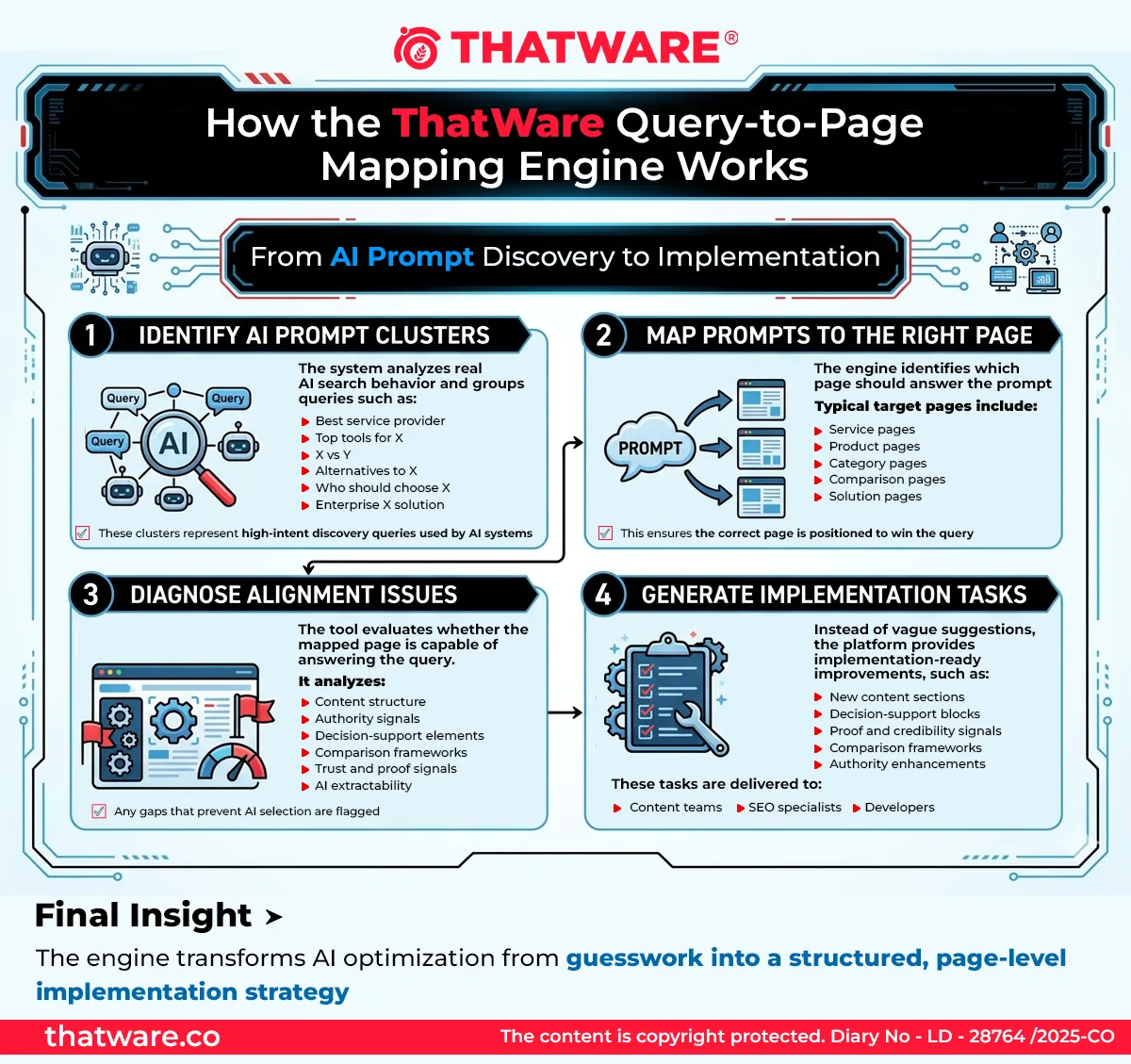

How the Engine Works

The Query-to-Page Mapping Engine follows a structured process.

1. Prompt Cluster Identification

The system generates and analyzes clusters of prompts representing common AI search behaviors, including:

- “Best service provider”

- “Top tools for X”

- “X vs Y”

- “Alternatives to X”

- “Who should choose X”

- “Affordable X”

- “Enterprise X solution”

These clusters represent high-intent discovery and recommendation queries used across AI systems.

2. Page Mapping

Once prompt clusters are identified, the system maps them to the most relevant page on the site, typically a:

- Service page

- Product page

- Category page

- Comparison page

- Solution page

This mapping ensures the right page is positioned to win the prompt.

3. Alignment Diagnostics

The engine then analyzes whether the mapped page is actually capable of answering the prompt effectively.

It evaluates:

- Content structure

- Authority signals

- Decision-support elements

- Comparison frameworks

- Proof and credibility signals

- AI extractability

If the page lacks these components, the system flags alignment gaps.
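The diagnostic can be pictured as a set difference between the components a prompt intent calls for and those detected on the page. The intent names, component labels, and requirements table below are hypothetical; they only illustrate the shape of the check.

```python
# Hypothetical components every mapped page should have, regardless of intent
BASELINE = {"content_structure", "extractable_answers"}

# Hypothetical extra components required by each prompt intent
REQUIRED_BY_INTENT = {
    "comparison": {"comparison_framework", "proof_signals", "decision_support"},
    "recommendation": {"authority_signals", "proof_signals", "decision_support"},
    "alternatives": {"comparison_framework", "decision_support"},
}


def alignment_gaps(intent: str, detected: set[str]) -> list[str]:
    """Return the required components the page is missing, sorted for stable output."""
    required = BASELINE | REQUIRED_BY_INTENT.get(intent, set())
    return sorted(required - detected)
```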

4. Implementation Recommendations

Instead of generic advice, the platform generates precise improvements that help the page align with the intent of the prompt cluster.

This includes:

- New content sections

- Structural improvements

- Authority signals

- Comparison frameworks

- Proof elements

- Decision-support content

These recommendations are generated as implementation-ready tasks for content teams, SEO specialists, and developers.

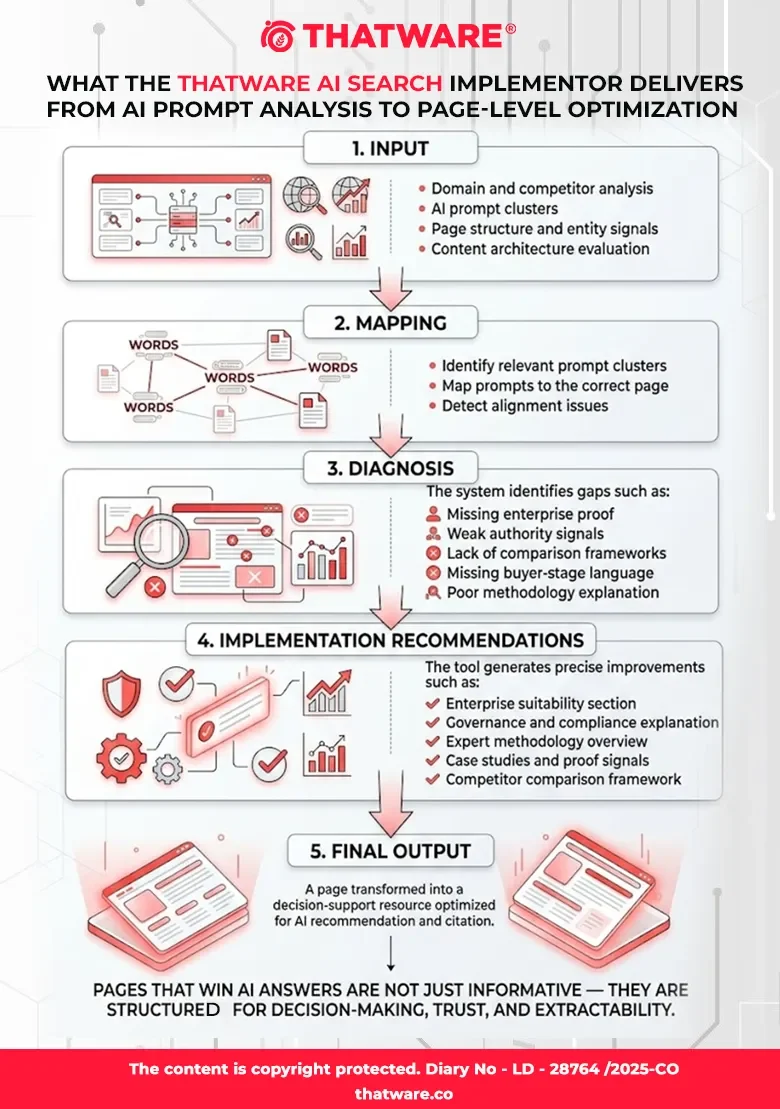

Example

Prompt Cluster

“Best AI SEO agency for enterprise brands”

Mapped Page

Enterprise SEO service page

Detected Problems

- Lack of enterprise-specific proof

- Weak authority and expertise signals

- No structured comparison framework

- Missing buyer-stage language

- Insufficient methodology explanation

Suggested Implementation

The system recommends improvements designed to increase the page’s probability of being recommended in AI answers.

Recommended additions include:

- Enterprise suitability section explaining which organizations benefit from the service

- Governance and compliance explanation outlining enterprise-level operational processes

- Expert-led methodology overview describing ThatWare’s proprietary approach

- Enterprise case studies demonstrating real-world outcomes

- Competitor comparison framework explaining how ThatWare differs from other agencies

These improvements help transform the page into a decision-support resource, which is exactly the type of content AI systems prioritize when generating recommendations.

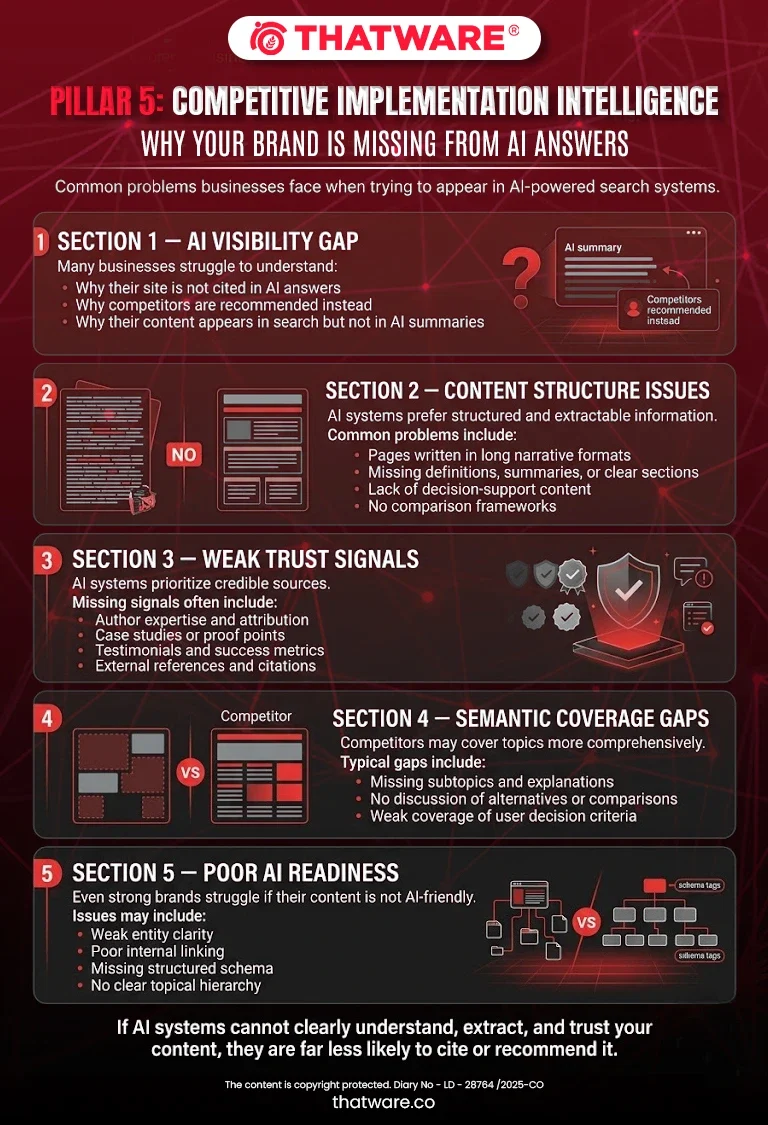

Pillar 5: Competitive Implementation Intelligence

One of the most critical capabilities of the ThatWare AI Search Implementor is understanding why competitors are being cited, recommended, or prioritized by AI systems. Visibility in AI-generated answers is rarely accidental. It is typically the result of how content is structured, how trust signals are presented, and how clearly a page supports decision-making for users.

The Competitive Implementation Intelligence layer goes beyond basic competitor tracking. Instead of simply identifying which competitors appear in AI answers, the platform analyzes how and why their content performs better in AI-driven recommendation environments such as Google AI Overviews, ChatGPT discovery, Perplexity, Gemini, and Claude.

By reverse-engineering competitor success patterns, ThatWare enables businesses to identify precise implementation opportunities that can improve their likelihood of being cited or recommended.

Competitor Page Pattern Analysis

AI systems tend to favor pages that are clearly structured for answer extraction and decision support. The tool analyzes competitor pages to detect structural patterns that contribute to their inclusion in AI responses.

This includes identifying:

- How competitors define their services or products

- Whether they use clear summaries, structured explanations, or comparison frameworks

- How they frame use cases, buyer fit, and expected outcomes

- The presence of decision-support content such as FAQs, checklists, and summaries

For example, the tool may detect that a competitor’s page begins with a concise definition and outcome-focused summary, which makes it easier for AI systems to extract and reference that content in generated answers.

ThatWare’s platform highlights these structural advantages and recommends equivalent or improved implementations for the user’s pages.

Semantic Gap Detection

Another major reason competitors dominate AI answers is semantic coverage.

AI systems prefer sources that demonstrate comprehensive topical coverage. If competitor pages cover key subtopics, related concepts, or decision criteria that a brand’s pages ignore, the competitor is more likely to be selected.

The Competitive Implementation Intelligence engine identifies:

- Missing concepts

- Underserved query intents

- Absent comparison discussions

- Weak explanation depth

For example, the tool may detect that competitors include sections such as:

- “Who this solution is best for”

- “Common use cases”

- “Alternatives to this approach”

- “How to evaluate providers”

If these elements are missing from the user’s page, the platform recommends specific content blocks to close the semantic gap.
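A crude sketch of gap detection at the section level, assuming competitor and user headings have already been extracted. It reports headings that appear on at least two competitor pages but not on the user's page. Exact matching after normalization and the threshold of two are assumptions; paraphrased headings would need semantic matching.

```python
import re


def normalize(heading: str) -> str:
    """Lowercase and strip punctuation so near-identical headings compare equal."""
    return " ".join(re.findall(r"[a-z0-9]+", heading.lower()))


def semantic_gaps(own_headings: list[str], competitor_pages: list[list[str]], min_competitors: int = 2) -> list[str]:
    """Sections that at least `min_competitors` competitors cover but the user's page lacks."""
    own = {normalize(h) for h in own_headings}
    counts: dict[str, int] = {}
    for headings in competitor_pages:
        for h in {normalize(h) for h in headings}:  # count each competitor once per heading
            counts[h] = counts.get(h, 0) + 1
    return sorted(h for h, n in counts.items() if n >= min_competitors and h not in own)
```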

Trust Signal Comparison

Trust is a critical factor in AI recommendations. AI models tend to prefer sources that demonstrate credibility, expertise, and validation.

The tool evaluates competitor pages against the user’s pages across multiple trust indicators, including:

- Author expertise and attribution

- Case studies and proof points

- Testimonials and client references

- Industry recognition or credentials

- External citations and research references

- Data-backed claims and statistics

If competitors present clear evidence of outcomes and authority, the system highlights the difference and recommends implementation improvements.

For instance, if competitors include quantifiable results or client success stories, the tool may suggest adding:

- Case study summaries

- Client outcome metrics

- Industry-specific proof blocks

Review Footprint Comparison

For certain industries—particularly local businesses, SaaS platforms, and service providers—reviews and reputation signals significantly influence AI recommendations.

The platform analyzes:

- Volume and distribution of reviews

- Presence of structured review schema

- Placement of testimonial content within pages

- Third-party validation signals

If competitors demonstrate stronger review signals, the platform identifies the gap and provides suggestions to strengthen credibility signals across relevant pages.

Content Packaging Comparison

Even when two sites have similar expertise, the one that packages information more clearly for extraction is more likely to be selected by AI systems.

The tool evaluates how competitor content is packaged in terms of:

- Structured summaries

- Extractable definitions

- Comparison frameworks

- Clear headings and answer blocks

- Decision-support sections

This analysis helps identify situations where a brand already has strong expertise but fails to present it in AI-friendly formats.

For example, the system may determine that competitors present their expertise through clear step-by-step frameworks, while the user’s page presents similar information in long narrative paragraphs that are harder for AI systems to extract.

Example Insights Generated by the Tool

The Competitive Implementation Intelligence module produces clear explanations such as:

- “Competitor X is selected in AI recommendation flows because they clearly frame buyer fit, expected outcomes, and industry specialization.”

- “Competitor Y is frequently cited because their pages contain concise, extractable definitions supported by evidence and statistics.”

- “Your brand demonstrates strong authority, but the information is not structured in a way that supports AI recommendation or citation.”

These insights provide actionable understanding of the competitive landscape within AI search environments.

Strategic Outputs

The ultimate goal of this pillar is not simply competitor analysis but strategic implementation guidance. The platform converts competitor insights into concrete strategies that users can deploy.

Examples of strategic outputs include:

Beat Competitor X Implementation Plan

The system generates a structured plan outlining:

- Content improvements required to surpass the competitor

- Trust signals that need strengthening

- Additional sections or frameworks required on key pages

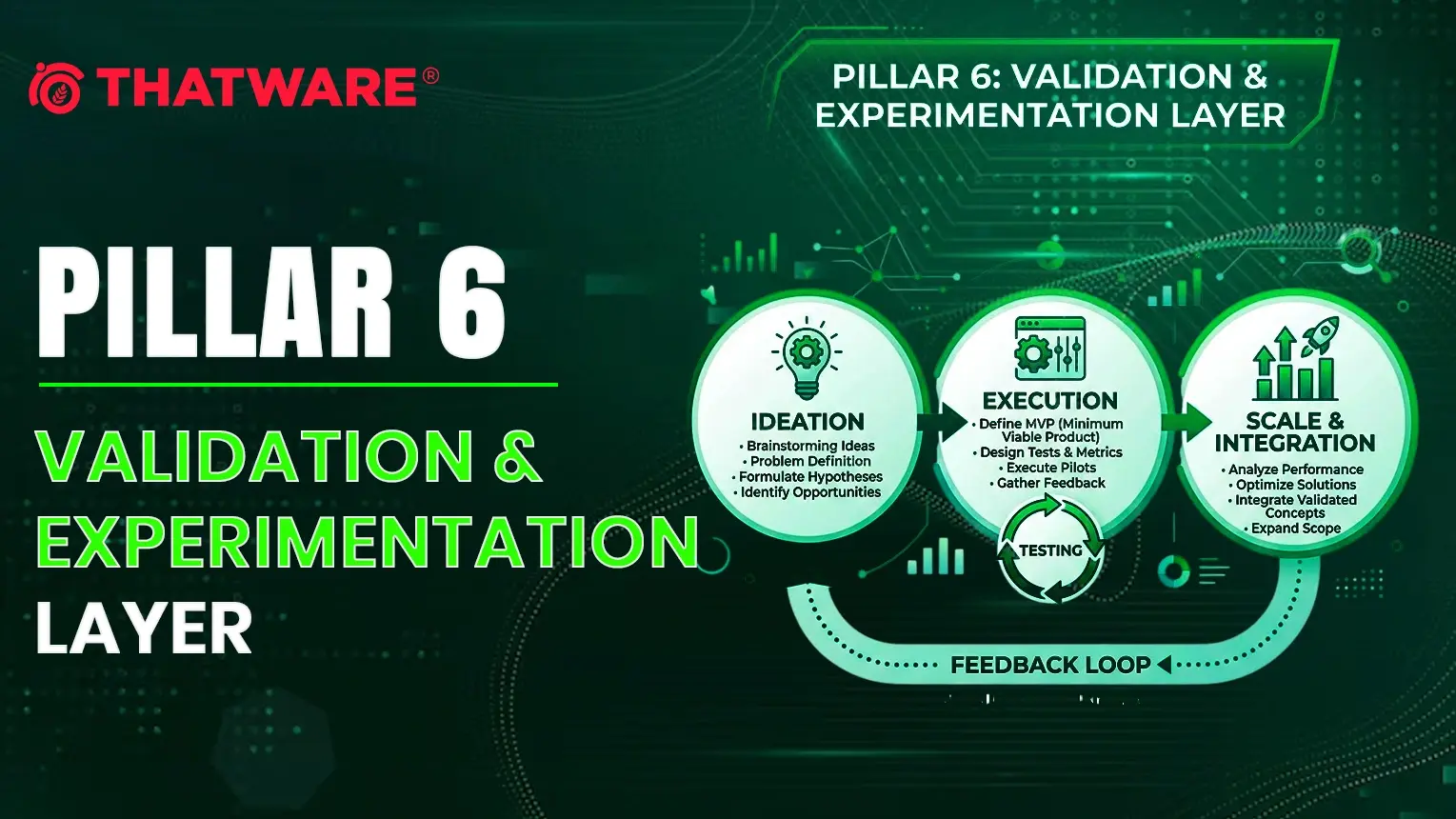

Pillar 6: Validation & Experimentation Layer

The Validation & Experimentation Layer is where the platform moves beyond diagnostics and implementation to prove real performance improvement in AI search ecosystems. While many tools stop at recommendations, ThatWare’s system closes the loop by validating whether implemented changes actually increase a brand’s visibility and recommendation likelihood across AI answer engines.

This layer ensures that optimization efforts are not based on assumptions. Instead, every change made to a website can be tested, measured, and refined using AI behavior simulations and structured performance tracking.

At its core, this pillar transforms the platform into an ongoing optimization system rather than a one-time auditing tool.

ThatWare designed this layer to answer one critical question:

Did the implementation actually improve the brand’s ability to be cited, recommended, or surfaced in AI-generated answers?

The Validation Workflow

The validation engine operates through a structured experimentation cycle that mirrors modern product experimentation frameworks.

1. Detect Issue

The process begins with the diagnostic system identifying weaknesses that reduce AI visibility. These may include missing decision-support content, weak entity signals, insufficient trust elements, or pages that lack extractable answer structures.

For example, the system may detect that a service page is not structured in a way that allows AI systems to easily extract recommendations or comparisons.

2. Recommend Fix

Once the issue is identified, the platform generates implementation-ready recommendations tailored to the specific page and prompt clusters.

Examples of fixes may include:

- Adding decision-support sections such as “Who This Service Is For”

- Implementing comparison frameworks

- Adding structured data and schema

- Improving entity relationships

- Introducing proof signals such as case studies, statistics, or expert commentary

Each recommendation is designed to improve the page’s retrieval readiness and recommendation potential.

3. Implement Fix

After recommendations are approved, the changes are implemented through the platform’s Implementation Studio or deployed by the content, development, or SEO teams.

Because ThatWare’s system generates CMS-ready blocks, schema structures, and task-level workflows, implementation can occur quickly and systematically across multiple pages.

4. Re-evaluate Pages

Once changes are live, the platform automatically re-crawls and analyzes the updated pages.

This step evaluates improvements in areas such as:

- content extractability

- trust signal coverage

- entity clarity

- decision-support completeness

- internal knowledge graph alignment

This ensures the page now meets the structural expectations required for AI answer extraction.

5. Simulate AI Responses

One of the most advanced components of this pillar is the AI response simulation engine.

The platform runs relevant prompt clusters across AI answer environments and analyzes how the updated page aligns with answer-generation patterns.

This allows the system to evaluate whether the page is now more likely to be:

- cited as a source

- included in summarized answers

- recommended as a provider

- referenced in comparisons

- surfaced in advisory prompts

Instead of waiting months for indirect signals, this simulation provides early indicators of AI visibility improvement.

6. Measure Improvements

The final step is to measure the impact of the implementation.

The platform compares pre-implementation and post-implementation states, identifying improvements in citation potential, recommendation readiness, and answer inclusion.

This closes the feedback loop and allows teams to continuously refine optimization strategies.
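Comparing pre- and post-implementation states can be as simple as a per-metric difference between two score snapshots, split into improvements and regressions. The metric names and the 0 to 1 scale are assumptions for illustration.

```python
def compare_snapshots(before: dict[str, float], after: dict[str, float]) -> dict[str, list[tuple[str, float]]]:
    """Split per-metric changes into improved, regressed, and unchanged lists."""
    result: dict[str, list[tuple[str, float]]] = {"improved": [], "regressed": [], "unchanged": []}
    # Only metrics present in both snapshots can be compared
    for metric in sorted(before.keys() & after.keys()):
        delta = round(after[metric] - before[metric], 3)
        if delta > 0:
            result["improved"].append((metric, delta))
        elif delta < 0:
            result["regressed"].append((metric, delta))
        else:
            result["unchanged"].append((metric, delta))
    return result
```

Reporting regressions alongside gains matters here, because a change that helps one prompt cluster can weaken another.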

Through this process, the platform evolves from a static analyzer into a continuous experimentation engine for AI search visibility.

Metrics to Track

To accurately measure progress, the Validation & Experimentation Layer tracks a series of AI visibility and recommendation readiness metrics.

These metrics are designed to reflect how AI systems interpret and utilize website content.

Citation Probability

This metric estimates the likelihood that a page will be used as a source reference in AI-generated answers.

The score is influenced by:

- factual anchors

- definitional clarity

- structured content

- credible citations

- expert attribution

Pages with higher citation probability are more likely to appear as trusted references in AI responses.

Recommendation Probability

Recommendation probability measures how likely a page or brand is to be recommended by AI systems in commercial or advisory prompts.

This score considers:

- decision-support content

- buyer-fit explanations

- comparative frameworks

- proof and outcomes

- service or product clarity

Improving this metric increases the chances that AI systems will suggest the brand when users ask questions such as:

- “best provider”

- “top alternatives”

- “which company should I choose”

Answer Inclusion Rate

This metric tracks how frequently a brand appears in AI-generated responses across a given prompt cluster.

The platform measures:

- inclusion in summaries

- brand mentions

- citations

- recommendation placements

Tracking this rate over time helps organizations understand whether their visibility in AI ecosystems is expanding or declining.
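As a sketch, the mention component of this rate is the share of sampled responses in a cluster that name the brand. The example assumes responses have already been collected as plain text and uses whole-word, case-insensitive matching; citations and recommendation placements would also need each response's source list and position data, which are not modeled here.

```python
import re


def inclusion_rate(responses: list[str], brand: str) -> float:
    """Share of AI responses in a prompt cluster that mention the brand by name."""
    if not responses:
        return 0.0
    # Whole-word match so "Acme" does not count "Acmecorp"
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for r in responses if pattern.search(r))
    return hits / len(responses)
```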

Prompt Cluster Win Rate

Prompt clusters represent groups of related queries that reflect real user intent.

Examples include:

- “best SEO agency”

- “alternatives to X”

- “how to choose an SEO provider”

The win rate measures how often the brand appears in AI responses within a given cluster compared to competitors.

This helps identify strategic gaps in AI search presence.
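One possible reading of win rate, sketched below, is the brand's share of all tracked-brand mentions within a cluster. This share-of-voice interpretation, the matching rule, and the function names are assumptions; a head-to-head definition would be equally reasonable.

```python
import re


def mentions(text: str, name: str) -> bool:
    """Whole-word, case-insensitive check for a brand name."""
    return re.search(rf"\b{re.escape(name)}\b", text, re.IGNORECASE) is not None


def cluster_win_rate(responses: list[str], brand: str, competitors: list[str]) -> tuple[float, dict[str, int]]:
    """Return the brand's share of tracked-brand mentions plus the raw count per brand."""
    # Each response counts at most once per brand
    counts = {b: sum(mentions(r, b) for r in responses) for b in [brand, *competitors]}
    total = sum(counts.values())
    return (counts[brand] / total if total else 0.0), counts
```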

Trust Signal Completeness

AI systems increasingly evaluate trust and credibility signals before surfacing sources.

This metric measures the completeness of trust indicators such as:

- case studies

- testimonials

- expert attribution

- statistics and data points

- references and citations

- transparent authorship

Improving trust signal completeness increases both citation and recommendation potential.

Entity Confidence Score

AI models rely heavily on entity understanding.

The Entity Confidence Score measures how clearly the platform can interpret relationships between:

- company

- founder

- services

- products

- locations

- industry entities

Weak entity relationships often prevent brands from being recognized as authoritative sources.

Strengthening these signals improves the likelihood that AI systems recognize and reference the brand entity.

Implementation Completion Percentage

This metric tracks how much of the recommended optimization roadmap has been implemented.

It provides visibility into:

- completed tasks

- pending actions

- partially implemented fixes

This ensures that teams remain aligned with the optimization roadmap generated by the platform.
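A minimal sketch of the completion calculation, assuming each roadmap task carries a status and that partially implemented fixes earn half credit. The status names and the 0.5 weight are hypothetical.

```python
from collections import Counter

# Hypothetical credit per task status; partial fixes count as half done
STATUS_CREDIT = {"completed": 1.0, "partial": 0.5, "pending": 0.0}


def completion_percentage(task_statuses: list[str]) -> float:
    """Percentage of the roadmap implemented, weighting partial fixes."""
    if not task_statuses:
        return 0.0
    credit = sum(STATUS_CREDIT[s] for s in task_statuses)
    return round(100 * credit / len(task_statuses), 1)


def status_breakdown(task_statuses: list[str]) -> Counter:
    """Counts of completed, partial, and pending tasks for the dashboard."""
    return Counter(task_statuses)
```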

Business Impact Mapping

Ultimately, optimization must connect to business outcomes.

The Business Impact Mapping system links technical improvements to measurable commercial metrics such as:

- high-intent prompt coverage

- money page optimization

- competitive displacement

- increased AI-driven discovery potential

By mapping AI visibility improvements to business priorities, the platform ensures that optimization efforts are always tied to revenue-driving opportunities.

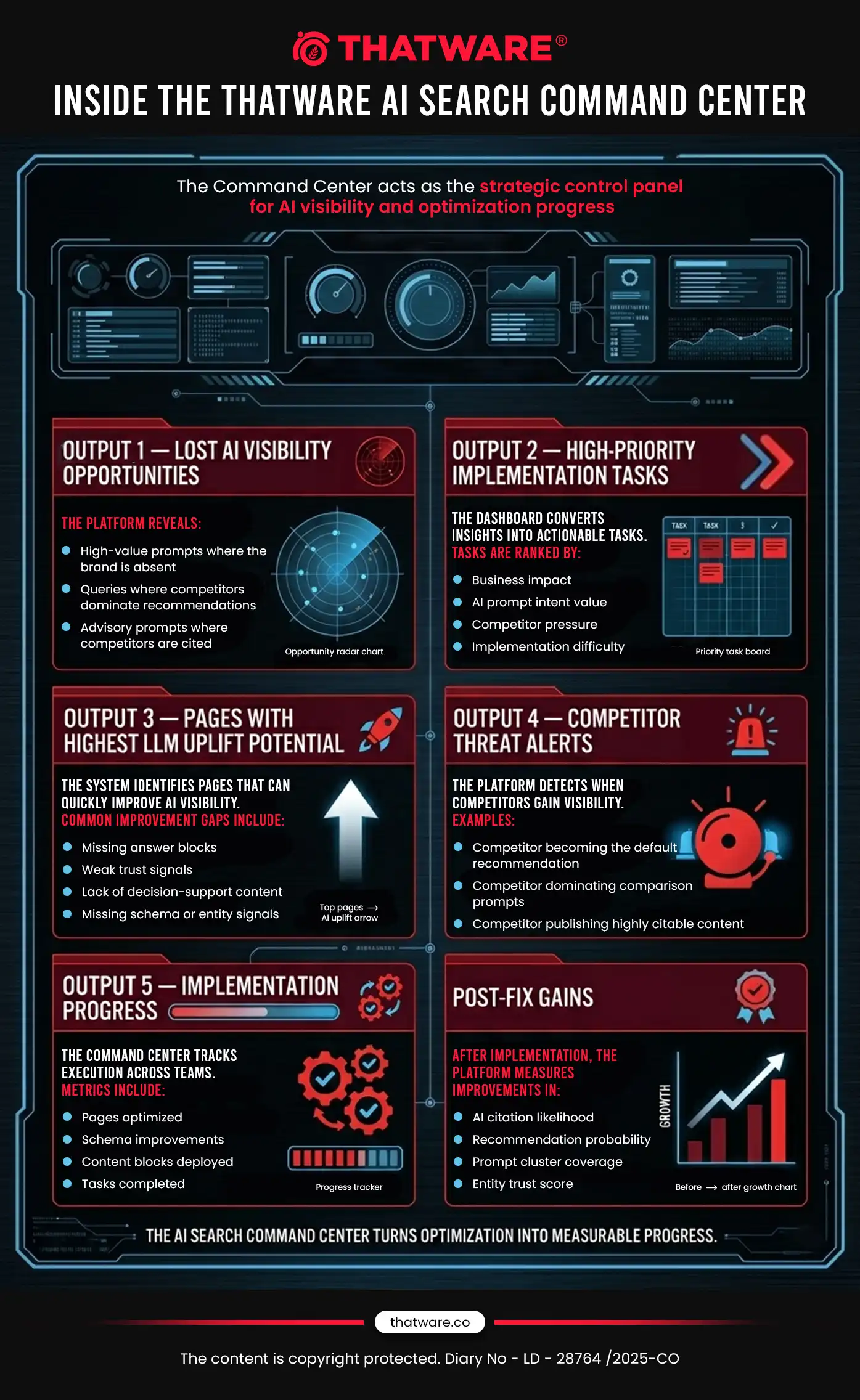

Pillar 7: AI Search Command Center

After the core implementation and optimization engines of the platform are mature, the next layer is the AI Search Command Center. This module acts as the strategic control panel for organizations that want continuous oversight of their AI search visibility, implementation progress, and competitive positioning.

Unlike traditional SEO dashboards that only report rankings or traffic, the ThatWare AI Search Command Center is designed to monitor AI recommendation ecosystems and guide ongoing optimization efforts across AI answer engines such as ChatGPT, Google AI Overviews, Perplexity, Gemini, and Claude.

This dashboard layer deliberately comes after the action and implementation engines. ThatWare’s philosophy for this platform is implementation-first intelligence: businesses do not need more reporting dashboards; they need clear actions that improve AI recommendation and citation probability. Once those actions are in place, the Command Center becomes the interface that monitors progress and reveals new opportunities.

The AI Search Command Center provides a unified view of the most important signals affecting a brand’s visibility within AI-generated answers.

Lost AI Visibility Opportunities

The Command Center highlights prompt clusters and high-value query categories where the brand currently fails to appear in AI-generated answers.

Instead of simply showing missing mentions, the system identifies opportunity gaps, such as:

- High-intent prompts where competitors dominate recommendations

- Commercial queries where the brand is mentioned but not recommended

- Advisory queries where the brand is completely absent

This allows teams to immediately understand where the largest AI search growth opportunities exist.

High-Priority Implementation Tasks

The dashboard continuously aggregates recommendations from the platform’s Implementation Engine and converts them into a prioritized action list.

These tasks are ranked based on factors such as:

- Business impact

- Prompt intent value

- Competitor pressure

- Ease of implementation

This ensures that teams always know which actions will produce the highest improvement in AI visibility and recommendation probability.
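The ranking could be expressed as a weighted score over the four factors listed above, each normalized to the range 0 to 1. The weights, field names, and `Task` type are illustrative assumptions, not the platform's actual model.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    business_impact: float      # 0-1
    intent_value: float         # 0-1, commercial value of the targeted prompts
    competitor_pressure: float  # 0-1, how strongly competitors hold those prompts
    ease: float                 # 0-1, higher means easier to implement


# Hypothetical weights; a real system would tune these against observed outcomes
WEIGHTS = {"business_impact": 0.4, "intent_value": 0.25, "competitor_pressure": 0.2, "ease": 0.15}


def priority(task: Task) -> float:
    """Weighted sum of the task's factor scores."""
    return sum(getattr(task, field) * weight for field, weight in WEIGHTS.items())


def prioritize(tasks: list[Task]) -> list[Task]:
    """Highest-priority tasks first."""
    return sorted(tasks, key=priority, reverse=True)
```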

Pages with Highest LLM Uplift Potential

One of the most valuable insights provided by the Command Center is identifying pages that have the highest potential to improve AI answer inclusion.

These pages may already have authority and traffic but may be missing critical elements such as:

- Extractable answer blocks

- Trust and proof signals

- Decision-support content

- Structured entity signals

The platform flags these pages so teams can focus on quick wins that significantly increase AI recommendation likelihood.

Competitor Threat Alerts

The Command Center monitors competitor performance across AI systems and highlights when competitors begin to dominate new prompt clusters or recommendation paths.

Examples include:

- Competitors becoming the default recommendation for “best provider” queries

- Competitor pages appearing repeatedly in AI-generated comparisons

- Competitors publishing content patterns that increase citation likelihood

These alerts help brands respond quickly with counter-strategies and content improvements.

Schema and Entity Issues

AI systems rely heavily on structured signals and entity clarity when selecting sources for answers.

The Command Center continuously monitors the site for issues such as:

- Missing or inconsistent schema markup

- Weak organization or person entities

- Incomplete product or service entities

- Broken entity relationships across pages

By surfacing these problems in one place, the system helps the brand maintain a strong entity and knowledge graph footprint, which strengthens the trust signals AI systems rely on.

Prompt Cluster Performance

This section tracks how the brand performs across different AI prompt categories, including:

- Informational prompts

- Commercial comparison queries

- Advisory prompts

- Product discovery queries

- Local recommendation prompts

The dashboard reveals which prompt clusters are:

- Dominated by competitors

- Emerging opportunity areas

- Already performing well

This insight allows marketing teams to align their content and implementation strategies with real AI query behavior.

Implementation Velocity

A key differentiator of the ThatWare platform is its focus on execution, not just insights.

The Command Center therefore measures how quickly recommendations are implemented across the site. This includes metrics such as:

- Tasks completed by team role (SEO, content, development)

- Pages optimized in the current cycle

- Schema improvements deployed

- Content blocks added to strengthen answer extraction

Tracking implementation velocity ensures that organizations translate insights into measurable improvements.

Post-Fix Gains

Once recommended improvements are implemented, the Command Center tracks the impact of those changes.

The system measures improvements in metrics such as:

- AI citation likelihood

- Recommendation probability

- Prompt cluster inclusion rate

- Entity confidence score

- Extraction readiness score

By comparing performance before and after implementation, teams can clearly see which actions are driving AI visibility improvements.

4. Product Development Phases

To build a powerful and scalable AI visibility optimization platform, ThatWare will develop the tool in structured phases. Each phase progressively expands the system’s capabilities—from diagnostics to implementation, automation, and advanced AI intelligence.

This phased approach ensures the product delivers immediate value early, while gradually introducing deeper automation and intelligence layers.

Phase 1 — MVP (Minimum Viable Product)

Core Promise

The MVP will focus on delivering a clear and actionable value proposition:

“Paste your domain and competitors, and ThatWare will show exactly what to implement on your key pages to improve AI recommendation and citation likelihood.”

Instead of creating another analytics dashboard, the MVP prioritizes implementation intelligence—helping businesses understand why they are not appearing in AI-generated answers and what exact changes will improve their chances of being cited or recommended.

Inputs

Users will provide a small set of inputs that allow the platform to analyze AI visibility and page readiness.

Key inputs include:

- Domain – the website being analyzed.

- Competitors – 3–5 primary competitors vying for the same AI answer visibility.

- Business category – industry classification to guide prompt and intent generation.

- Products or services – core offerings that should appear in AI responses.

- Target geography – especially important for local businesses or region-based services.

- Key pages – priority pages such as service pages, product pages, or category pages.

These inputs allow the system to create a structured AI visibility analysis environment.

Outputs

The MVP will generate a set of implementation-focused insights rather than generic reports.

AI Visibility Snapshot

The tool will provide an overview of how the brand currently appears across AI answer ecosystems, including:

- citation presence

- brand mentions

- recommendation inclusion

- competitor dominance across prompts

This snapshot helps users understand their baseline AI search visibility.

Prompt Cluster Map

The system will generate clusters of AI queries relevant to the business, such as:

- informational queries

- comparison queries

- recommendation queries

- problem–solution queries

- local intent queries

- buyer-stage queries

Each cluster will show where the brand appears and where competitors dominate.

Page Diagnostics

The platform will analyze key pages and identify why they are not being selected by AI systems.

Diagnostics may include:

- missing trust signals

- weak entity definitions

- lack of decision-support content

- insufficient structured data

- missing comparison or recommendation frameworks

- poor extraction readiness for AI answers

The output clearly explains why the page fails to qualify for citation or recommendation.

Implementation Recommendations

Instead of generic advice, the tool will generate specific page-level implementation instructions, including:

- missing content sections

- structural improvements

- decision-support content additions

- comparison framework suggestions

- trust and proof blocks

Each recommendation is tied to improving AI citation or recommendation potential.

Content Block Generation

To accelerate implementation, the platform will generate ready-to-use content blocks such as:

- FAQs

- comparison sections

- expert insight blocks

- decision checklists

- trust and proof elements

- use-case explanations

These blocks are designed to make pages easier for AI systems to extract and reuse in generated answers.

Schema Suggestions

The MVP will identify gaps in structured data and suggest improvements such as:

- organization schema

- service or product schema

- FAQ schema

- review schema

- author and expert schema

These signals help improve entity recognition and AI retrieval readiness.
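As an example of what an organization schema suggestion might contain, the function below builds a schema.org `Organization` object and serializes it as JSON-LD. The property names (`name`, `url`, `logo`, `sameAs`) are standard schema.org properties; the function itself and the sample values are illustrative assumptions.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str]) -> str:
    """Build a schema.org Organization object serialized as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,  # official profiles that help disambiguate the entity
    }
    return json.dumps(data, indent=2)


print(organization_jsonld(
    "Example Agency",
    "https://example.com",
    "https://example.com/logo.png",
    ["https://www.linkedin.com/company/example"],
))
```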

Internal Linking Suggestions

The system will identify weak internal linking structures and recommend:

- hub-and-spoke structures

- contextual links between related topics

- authority signal reinforcement across money pages

Strong internal linking helps AI systems understand topical authority and relationships.
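One simple signal for weak internal linking is the number of distinct internal pages that link to each money page. The sketch below assumes the site has already been crawled into a `{source_url: [target_urls]}` map; the threshold of three inbound links is an arbitrary assumption, not a documented rule.

```python
from collections import Counter


def weakly_linked(links: dict[str, list[str]], money_pages: set[str],
                  min_inbound: int = 3) -> dict[str, int]:
    """Return money pages with fewer than `min_inbound` distinct internal pages linking to them."""
    inbound = Counter(
        target
        for source, targets in links.items()
        for target in set(targets)  # count each source page once per target
        if target != source         # ignore self-links
    )
    return {page: inbound[page] for page in sorted(money_pages) if inbound[page] < min_inbound}


links = {
    "/blog/what-is-seo/": ["/services/seo/", "/blog/seo-tips/"],
    "/blog/seo-tips/": ["/services/seo/", "/services/seo/"],
    "/": ["/services/seo/", "/services/ppc/"],
    "/services/seo/": ["/services/seo/"],
}
money = {"/services/seo/", "/services/ppc/", "/services/local-seo/"}
print(weakly_linked(links, money))
```

Pages returned here would be candidates for new contextual links from related hub or blog pages.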

Prioritized Action Roadmap

All recommendations will be organized into a clear implementation roadmap, prioritized by:

- expected impact on AI visibility

- implementation difficulty

- page importance

- commercial intent relevance

This ensures users can quickly identify the most valuable actions to implement first.
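The four prioritization criteria can be combined into a single weighted score used to sort recommendations. The 1-to-5 scales, the weights, and the inversion of difficulty into "ease" are all assumptions made for this sketch; the document does not specify how the criteria are weighted.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    title: str
    impact: int             # expected AI-visibility impact, 1 (low) to 5 (high)
    difficulty: int         # implementation effort, 1 (easy) to 5 (hard)
    page_importance: int    # 1 to 5
    commercial_intent: int  # 1 to 5


# Illustrative weights only; they sum to 1.0.
WEIGHTS = {"impact": 0.4, "page_importance": 0.25, "commercial_intent": 0.2, "ease": 0.15}


def priority(rec: Recommendation) -> float:
    ease = 6 - rec.difficulty  # invert so easier tasks score higher
    return (WEIGHTS["impact"] * rec.impact
            + WEIGHTS["page_importance"] * rec.page_importance
            + WEIGHTS["commercial_intent"] * rec.commercial_intent
            + WEIGHTS["ease"] * ease)


def roadmap(recs: list[Recommendation]) -> list[str]:
    """Return recommendation titles, highest priority first."""
    return [r.title for r in sorted(recs, key=priority, reverse=True)]
```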

Phase 2 — Assisted Implementation

Once the MVP has established the diagnostic and recommendation engine, the next phase will focus on turning recommendations into completed work.

Many organizations struggle not with insights but with executing improvements across teams. Phase 2 addresses this gap.

Workflow Integration

The platform will integrate with common project management tools, allowing recommendations to be exported directly into team workflows, including:

- Jira

- Trello

- Asana

This converts platform insights into actionable tasks for teams.
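Many project management tools accept bulk imports from CSV, although each tool maps columns differently. The sketch below serializes recommendations to CSV as one possible export path; the column names, the default priority, and the `ai-visibility` label are assumptions and would need to be mapped to each tool's own import template or API.

```python
import csv
import io


def to_csv(recs: list[dict]) -> str:
    """Serialize recommendations as CSV rows with hypothetical column names."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=["Summary", "Description", "Priority", "Labels"])
    writer.writeheader()
    for rec in recs:
        writer.writerow({
            "Summary": rec["title"],
            "Description": rec["details"],
            "Priority": rec.get("priority", "Medium"),  # assumed default
            "Labels": "ai-visibility",
        })
    return buf.getvalue()


print(to_csv([{"title": "Add FAQ block", "details": "Target /services/seo/, add 5 questions"}]))
```

The `csv` module quotes fields containing commas, so free-text descriptions survive the round trip.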

Page Rewrite Drafts

The system will generate draft rewrites for key sections, enabling faster implementation by content teams.

Examples include:

- rewritten introductions

- improved definitions

- comparison sections

- structured answer blocks

- decision-support content

These drafts provide a starting point that can be refined by editors.

Developer Tickets

Technical recommendations will be converted into developer-ready tasks, such as:

- schema implementation

- structured data updates

- internal link architecture improvements

- markup or metadata changes

This ensures technical fixes are clearly actionable.

Schema Deployment Suggestions

Instead of simply identifying missing schema, the platform will provide implementation-ready structured data suggestions, including JSON-LD snippets and integration guidance.
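An implementation-ready suggestion might take the form of a complete `<script type="application/ld+json">` element. The sketch below builds a schema.org `FAQPage` with `Question` and `Answer` entries and escapes `</` so that answer text cannot prematurely close the script element; the function name and sample content are hypothetical.

```python
import json


def faq_script_tag(pairs: list[tuple[str, str]]) -> str:
    """Return an FAQPage JSON-LD block wrapped in a script tag for embedding in a page."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # "<\/" is a valid JSON escape for "</" and keeps the HTML parser from ending the script early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


print(faq_script_tag([("What is an AI visibility audit?",
                       "A review of how AI systems cite and recommend a brand.")]))
```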

CMS-Ready Content Modules

The system will also generate CMS-ready modules, enabling teams to quickly insert AI-friendly content structures into pages.

Examples include:

- comparison modules

- FAQ blocks

- proof sections

- expert commentary sections

This significantly reduces the friction of implementing AI optimization improvements.

Phase 3 — Integrations & Automation

Once implementation workflows are established, the next step is automation and deeper platform integration.

CMS Integrations

ThatWare will integrate the platform with major content management systems, including:

- WordPress

- Shopify

- Webflow

These integrations will allow the platform to suggest and eventually assist in implementing improvements directly within the CMS environment.

Internal Linking Assistant

The system will automatically detect linking opportunities across the site and suggest strategic connections between:

- service pages

- blog content

- topical hubs

- commercial pages

This strengthens the site’s topical authority and entity relationships.

Content Refresh Monitoring

The platform will monitor content freshness and signal when pages need updates due to:

- competitor improvements

- new AI query patterns

- outdated statistics or references

- missing emerging subtopics

This ensures pages remain AI-recommendation ready over time.
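Two of the refresh triggers above, page age and outdated references, can be approximated from the page itself; competitor improvements and new query patterns require external data and are not covered here. The one-year age limit and the "two or more years old" rule for years mentioned in the copy are assumptions for this sketch.

```python
import re
from datetime import date


def refresh_flags(text: str, last_modified: date, today: date,
                  max_age_days: int = 365) -> list[str]:
    """Return reasons a page may need a refresh (hypothetical rules)."""
    flags = []
    age = (today - last_modified).days
    if age > max_age_days:
        flags.append(f"not updated in {age} days")
    # Four-digit years at least two years old may point to outdated statistics or references.
    years = {int(y) for y in re.findall(r"\b(?:19|20)\d{2}\b", text)}
    stale = sorted(y for y in years if y <= today.year - 2)
    if stale:
        flags.append("possibly outdated references: " + ", ".join(map(str, stale)))
    return flags


print(refresh_flags("According to a 2021 survey, 60% of buyers compare vendors.",
                    last_modified=date(2023, 1, 10), today=date(2024, 6, 1)))
```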

Competitor Monitoring

The tool will also monitor competitor activity, detecting:

- new pages targeting important prompts

- structural improvements competitors introduce

- emerging content patterns in AI answers

This allows businesses to react quickly to competitive changes.
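One lightweight way to detect new competitor pages is to compare successive snapshots of a competitor's XML sitemap. The sitemap namespace below is the standard one from the Sitemaps protocol; the keyword filter on URL paths and both function names are hypothetical simplifications of whatever matching the platform would actually use.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> set[str]:
    """Extract <loc> URLs from a standard XML sitemap."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text}


def new_competitor_pages(previous: set[str], current: set[str],
                         watch_terms: list[str]) -> list[str]:
    """URLs added since the last snapshot whose path mentions a watched topic."""
    added = current - previous
    return sorted(
        url for url in added
        if any(term in urlparse(url).path.lower() for term in watch_terms)
    )
```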

Phase 4 — Advanced AI Intelligence

In the final phase, the platform evolves into a full AI search intelligence system.

This phase introduces predictive modeling and deeper AI behavior analysis.

Recommendation Likelihood Modeling

The platform will estimate how likely a page is to be recommended by AI systems for specific prompts.

The model will consider factors such as:

- content extractability

- trust signals

- decision-support content

- entity clarity

- semantic coverage

This creates a Recommendation Readiness Score for each page.
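The document lists the factors behind the Recommendation Readiness Score but not how they are combined. A minimal sketch, assuming each factor is scored between 0 and 1 and combined as a weighted average scaled to 0–100, might look like this; the weights are illustrative, not the platform's.

```python
# Illustrative weights (sum to 1.0); the real weighting is not specified.
FACTOR_WEIGHTS = {
    "content_extractability": 0.25,
    "trust_signals": 0.25,
    "decision_support": 0.20,
    "entity_clarity": 0.15,
    "semantic_coverage": 0.15,
}


def readiness_score(factors: dict[str, float]) -> float:
    """Weighted average of factor scores in [0, 1], scaled to 0-100; missing factors count as 0."""
    for name, value in factors.items():
        if name not in FACTOR_WEIGHTS:
            raise KeyError(f"unknown factor: {name}")
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    total = sum(weight * factors.get(name, 0.0) for name, weight in FACTOR_WEIGHTS.items())
    return round(100 * total, 1)


print(readiness_score({
    "content_extractability": 0.8,
    "trust_signals": 0.4,
    "decision_support": 0.5,
    "entity_clarity": 1.0,
    "semantic_coverage": 0.6,
}))
```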

Brand Trust Fingerprinting

The system will build a model of a brand’s trust footprint across the web, including:

- entity presence

- author credibility

- citations and mentions

- proof signals

- industry authority indicators

This helps identify trust gaps that affect AI recommendation decisions.

Citation Probability Modeling

The platform will analyze the structural and semantic characteristics of pages to estimate their likelihood of being cited by AI systems.

Factors may include:

- factual clarity

- structured definitions

- expert attribution

- evidence-backed claims

- content structure

This provides a measurable citation probability score.
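A probability-style score is commonly produced with a logistic model, p = 1 / (1 + e^-(b0 + Σ bi·xi)), which maps a weighted sum of features onto the range 0 to 1. The sketch below uses that form with the factors listed above; the coefficients and intercept are invented placeholders, whereas a real model would be fit on observed citation data.

```python
import math

# Hypothetical coefficients; a real model would be fit on observed citation outcomes.
COEFFICIENTS = {
    "factual_clarity": 1.2,
    "structured_definitions": 0.8,
    "expert_attribution": 0.6,
    "evidence_backed_claims": 1.0,
    "content_structure": 0.7,
}
INTERCEPT = -2.5


def citation_probability(features: dict[str, float]) -> float:
    """Logistic model over features scored in [0, 1]; missing features count as 0."""
    z = INTERCEPT + sum(coef * features.get(name, 0.0) for name, coef in COEFFICIENTS.items())
    return 1.0 / (1.0 + math.exp(-z))


weak_page = citation_probability({})
strong_page = citation_probability({name: 1.0 for name in COEFFICIENTS})
print(f"{weak_page:.3f} {strong_page:.3f}")
```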

Multi-Model Query Testing

Finally, the platform will run test queries across multiple AI systems, such as:

- ChatGPT

- Gemini

- Perplexity

- Claude

This allows businesses to observe how their brand appears across different AI environments and identify opportunities to improve cross-model visibility and recommendation inclusion.
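Cross-model testing reduces to sending the same prompts to several systems and comparing the results. In the sketch below, each model is represented by any callable that takes a prompt and returns answer text; real integrations would wrap each vendor's own SDK, and the stub models shown are placeholders. Plain substring matching is a deliberate simplification and would miscount brand names embedded in longer words.

```python
from typing import Callable

# Any function that takes a prompt and returns the model's answer text.
AskFn = Callable[[str], str]


def cross_model_presence(models: dict[str, AskFn], prompts: list[str],
                         brand: str) -> dict[str, float]:
    """Share of prompts, per model, whose answer mentions the brand (case-insensitive)."""
    presence = {}
    for model_name, ask in models.items():
        hits = sum(brand.lower() in ask(prompt).lower() for prompt in prompts)
        presence[model_name] = hits / len(prompts) if prompts else 0.0
    return presence


# Placeholder stubs standing in for real model clients.
stub_models = {
    "model_a": lambda p: "Top picks: Acme and RivalA." if "best" in p else "It depends.",
    "model_b": lambda p: "RivalA is popular.",
}
print(cross_model_presence(stub_models, ["best seo agency", "what is seo"], "Acme"))
```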

5. Product Architecture

The ThatWare AI Search Implementor is built on a modular architecture designed to transform AI visibility insights into practical implementation actions. Each module performs a distinct role in detecting AI search opportunities, diagnosing gaps, generating implementation recommendations, and validating improvements.

The architecture ensures that businesses not only understand how AI systems perceive their brand, but also receive clear, execution-ready strategies to improve their presence across AI-powered search environments such as ChatGPT, Google AI Overviews, Perplexity, Gemini, and Claude.

Module 1 — Domain Intelligence Setup

The Domain Intelligence Setup module is the foundational layer of the ThatWare platform. Its purpose is to understand the complete digital context of a brand before conducting AI visibility analysis.

Instead of relying on isolated page audits, ThatWare builds a holistic profile of the organization, its offerings, and its competitive ecosystem. This allows the platform to align AI visibility insights with real business priorities.

This module collects and analyzes:

• Business Category

Identifies the industry and service/product classification of the business. This enables the platform to generate relevant query clusters and benchmark competitors within the correct market segment.

• Products and Services

Maps the primary offerings of the business. This helps the system determine which pages and topics should appear in AI-generated recommendations.

• Target Audience

Defines the intended user segments, such as enterprise buyers, small businesses, local consumers, or industry professionals. Understanding the audience helps ThatWare generate prompts aligned with real buyer intent.

• Geographic Locations

Detects the locations where the business operates or targets customers. This is particularly important for identifying local AI search opportunities such as “best service near me.”

• Competitors

Builds a competitive landscape by identifying major competing brands and websites. These competitors are then used for comparative analysis across AI responses.

• Money Pages

Identifies high-value pages such as service pages, product pages, category pages, or landing pages that directly drive revenue. These pages become the primary targets for AI optimization.

• Content Clusters

Maps topical clusters and content hubs across the website to understand the site’s authority and coverage within specific subject areas.

• Brand Entity Profile

Constructs a structured entity representation of the brand, including the organization, founders, products, services, and industry relationships. This is critical for improving AI trust and citation potential.

By establishing this foundational intelligence, the ThatWare platform ensures that all downstream analysis is context-aware and business-focused.

Module 2 — Query Universe Builder

The Query Universe Builder is responsible for generating the complete spectrum of prompts and queries that users may ask AI systems when searching for information, services, or recommendations related to the brand.

Rather than focusing only on traditional keyword research, this module models AI-native search behavior: the way users phrase questions and requests to conversational search systems.

The system generates multiple categories of prompts, including:

• Informational Queries

Questions where users are seeking knowledge, explanations, or guidance related to a topic.

• Comparison Queries

Queries that compare products, services, tools, or providers. These prompts often come from users who are close to a purchase decision.

• Recommendation Prompts

Queries where users ask AI systems to suggest the best tools, services, or providers.

• Local Queries

Location-based queries such as “best agency near me” or “top service provider in a specific city.”

• Problem-Solution Prompts

Queries where users describe a problem and ask for recommended solutions.

• Buyer-Stage Queries

Prompts that reflect different stages of the purchase journey, from early research to final vendor selection.

• “Best X” Queries

Recommendation prompts, such as “best X for Y,” that ask AI systems to rank or shortlist options and therefore shape which brands get suggested.

• “Alternatives to X” Queries

Queries where users seek alternatives to specific tools, brands, or services.

By generating this query universe, ThatWare creates a realistic model of the conversational queries AI systems must answer. This allows the platform to evaluate whether a brand is appearing in the most commercially valuable prompt clusters.
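A simple way to seed a query universe is to expand prompt templates with the business's offerings, competitors, and locations. The templates below cover only a subset of the categories listed above, and the template wording, placeholder names, and function are assumptions for this sketch; a production system would also use language models and real query data to broaden coverage.

```python
from itertools import product

# Hypothetical templates per category; {offering}, {competitor}, {city} are placeholders.
TEMPLATES = {
    "informational": ["What is {offering}?", "How does {offering} work?"],
    "comparison": ["{competitor} vs other {offering} providers: which is better?"],
    "recommendation": ["What is the best {offering} provider?"],
    "local": ["Best {offering} near me in {city}"],
    "alternatives": ["What are the best alternatives to {competitor} for {offering}?"],
}


def build_query_universe(offerings: list[str], competitors: list[str],
                         cities: list[str]) -> list[tuple[str, str]]:
    """Expand every template with every combination of the placeholders it uses."""
    values = {"offering": offerings, "competitor": competitors, "city": cities}
    universe = []
    for category, templates in TEMPLATES.items():
        for template in templates:
            keys = [k for k in values if "{" + k + "}" in template]
            for combo in product(*(values[k] for k in keys)):
                universe.append((category, template.format(**dict(zip(keys, combo)))))
    return universe


for category, prompt in build_query_universe(["technical SEO"], ["RivalA"], ["Austin"]):
    print(category, "|", prompt)
```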

Module 3 — AI Response Monitoring

Once the query universe is generated, the AI Response Monitoring module evaluates how different AI systems respond to those prompts.

This module systematically captures and analyzes responses generated by AI platforms, identifying whether and how a brand appears in the answers.

For each prompt, the system performs the following analysis:

• Capture AI Responses